Build a REST API With Actix Web, SQLx, and PostgreSQL in Rust

Actix Web + SQLx + Postgres is the most battle-tested opinionated path to production Rust APIs, but the real traps are the async boundaries and pooling decisions hiding just past day 1.

"Building production-grade APIs in Rust has never been more accessible," Marcus Chen wrote in his recently published hands-on tutorial, and the framing is deliberate. This is not a pitch for Rust as systems code. It is a direct argument that a Rust web backend, wired up with Actix Web 4, SQLx, and PostgreSQL, is ready for teams that care about predictable latency, memory safety, and shipping a single binary to production.

Before the first line of application code, though, you are making three consequential framework decisions. Understanding why this particular stack, and where its edges are, will save you days of confusion.

The Stack Choice: Why Actix + SQLx + Postgres Over the Defaults

The natural comparison point for Actix Web 4 is Axum, currently at version 0.8.8 as of early 2026. Axum is the Tower ecosystem's answer to Rust web frameworks: deeply integrated with Hyper, composable middleware via `tower::Layer`, and a design philosophy that feels friendlier to Rust newcomers. Benchmarks show Actix Web handling roughly 10 to 15 percent more requests per second than Axum under heavy load, though for the vast majority of services that gap is academic. What actually tips the decision is ecosystem maturity: Actix Web 4 has a wider catalog of battle-tested crates and has been running in production deployments longer. If your team is greenfield on Rust and already uses Tower-based infrastructure, Axum is a perfectly reasonable alternative. If you want the largest surface area of documented production patterns, Actix Web 4 is the safer bet.

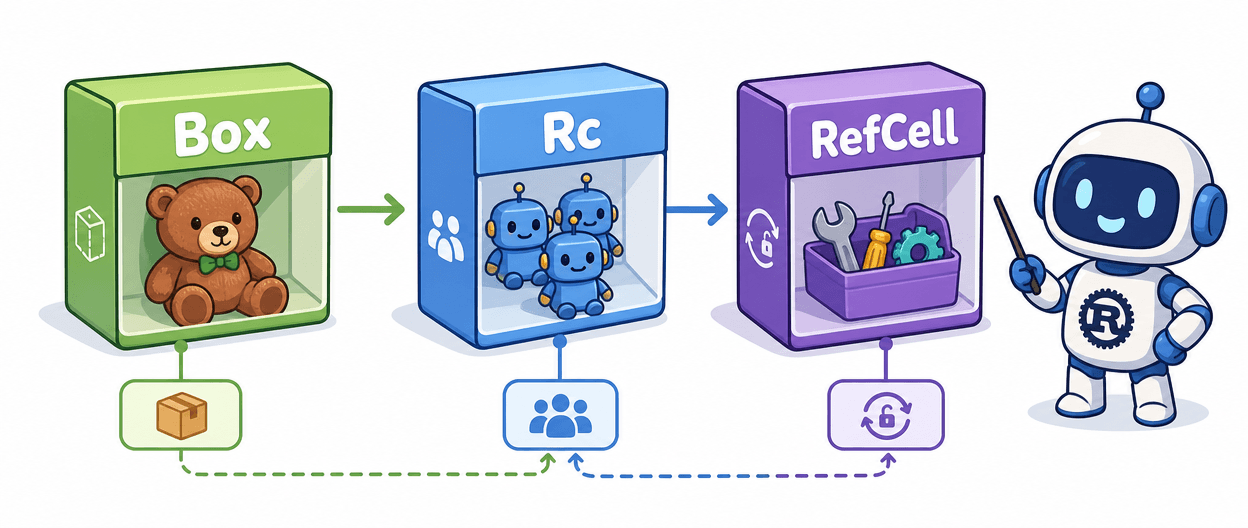

The database driver choice is where the philosophical gap is wider. Diesel is Rust's most established ORM, offering a strongly-typed query builder DSL that generates SQL at compile time without requiring a live database. That sounds ideal until you hit a search endpoint with optional filters: Diesel's static query shapes make dynamic queries genuinely painful, and its async story runs through `diesel-async`, a bolt-on that adds a dependency and mental overhead compared to libraries where async is native. SQLx takes the opposite approach. You write raw SQL strings, and the macro system verifies them against a live database at compile time, or against cached query metadata stored in a `.sqlx` directory for offline builds. SQLx is async-native, supports PostgreSQL, MySQL, and SQLite, and produces compiler errors that are legible, in contrast to Diesel's occasionally 40-line type-system diagnostics. For a production service with complex, evolving queries, SQLx's full SQL control wins.
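
To make the optional-filter case concrete, here is a minimal, framework-free sketch of how a raw-SQL approach can assemble a search query from whichever filters are present. The `products` table, its columns, and the `ProductFilter` type are hypothetical names chosen for illustration, and bind values are rendered as strings only to keep the example dependency-free. In a real service, SQLx's `QueryBuilder` (with `push` and `push_bind`) covers this ground with typed binds; note that dynamically built queries are validated at runtime, not by the compile-time macros.

```rust
/// Hypothetical search filters for an illustrative `products` table.
#[derive(Debug, Default)]
pub struct ProductFilter {
    pub name_contains: Option<String>,
    pub min_price_cents: Option<i64>,
    pub in_stock: Option<bool>,
}

/// Builds a SELECT with one numbered placeholder per filter that is present.
/// Returns the SQL text plus the bind values in placeholder order.
/// User input never lands in the SQL string itself, only in the bind list.
pub fn build_search_query(filter: &ProductFilter) -> (String, Vec<String>) {
    let mut clauses: Vec<String> = Vec::new();
    let mut binds: Vec<String> = Vec::new();

    if let Some(name) = &filter.name_contains {
        binds.push(format!("%{name}%"));
        // The placeholder index is the position of the bind just pushed.
        clauses.push(format!("name ILIKE ${}", binds.len()));
    }
    if let Some(min) = filter.min_price_cents {
        binds.push(min.to_string());
        clauses.push(format!("price_cents >= ${}", binds.len()));
    }
    if let Some(in_stock) = filter.in_stock {
        binds.push(in_stock.to_string());
        clauses.push(format!("in_stock = ${}", binds.len()));
    }

    let mut sql = String::from("SELECT id, name, price_cents FROM products");
    if !clauses.is_empty() {
        sql.push_str(" WHERE ");
        sql.push_str(&clauses.join(" AND "));
    }
    sql.push_str(" ORDER BY id");
    (sql, binds)
}
```

Calling `build_search_query` with only `name_contains` and `in_stock` set yields a `WHERE` clause with `$1` and `$2`, while an empty filter yields no `WHERE` clause at all. That variable query shape is exactly what a static query DSL makes awkward.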

PostgreSQL over SQLite is less a debate and more a deployment reality check. SQLite is excellent for local development and testing, and SQLx supports it natively, but the moment you need connection pooling across multiple async workers, transactions with real concurrency semantics, or a migration history that lives alongside your schema in version control, SQLite becomes a liability. Start with Postgres locally, run it in Docker, and your production deployment is an environment variable swap, not an architectural rethink.

Bootstrapping: The Cargo.toml That Actually Matters

`cargo new` gives you the seed, but the real project setup lives in `Cargo.toml`. The core dependencies are `actix-web`, `sqlx` with the `postgres`, `runtime-tokio`, and `macros` feature flags, `dotenvy` or a config crate for environment management, and `tracing` plus `tracing-subscriber` for structured logging. Add `serde` and `serde_json` for your request/response DTOs. The `sqlx-cli` tool goes in your dev environment separately; it handles both the compile-time query verification cache and your migration workflow.

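Actix Web delegates all scheduling and I/O to Tokio. When you annotate `main()` with `#[actix_web::main]`, it starts an Actix system on top of Tokio, and `HttpServer` then spawns a set of worker threads (by default one per available CPU core), each driving its own single-threaded Tokio runtime and not one shared work-stealing executor. This matters more than it seems: a blocking call inside a handler stalls every request on that worker, and mixing Actix Web with other async crates requires verifying they build against the same major version of Tokio. Mismatched runtime versions are one of the first "day 2" breakages that don't surface until you add a seemingly unrelated dependency.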

Routing, Handlers, and Typed Extraction

Actix Web's handler model is built around typed extractors. A `web::Json<T>` extractor deserializes the request body into your DTO at the framework level, returning a 400 before your handler function even runs if the shape is wrong. `web::Path<T>` and `web::Query<T>` work the same way. The Postgres connection pool gets injected via `web::Data<PgPool>`, which Actix stores in the application state and clones an `Arc` reference into each handler. The pattern looks like this in practice: define a route scope, attach it to the `HttpServer` builder alongside your pool, and every handler that needs the database just declares `pool: web::Data<PgPool>` in its signature.

The Day 2 Gotchas: Pooling, Migrations, Error Handling, Async Boundaries

This is where tutorials typically leave you stranded. Knowing the pattern is not the same as knowing where it breaks.

Pool sizing is the most commonly misconfigured production parameter. `PgPoolOptions` ships with a conservative default for `max_connections`, but the right number is a function of your CPU count, your Postgres `max_connections` setting, and your expected query latency. A common starting formula ties your pool ceiling to two times the number of async worker threads, then subtracts headroom for administrative connections. Undersizing means requests queue on the pool; oversizing means Postgres itself becomes the bottleneck. Neither failure is loud at first.
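
The starting formula can be read in more than one way, so the following is one hedged interpretation, not a rule taken from SQLx or Postgres documentation. It takes two times the worker count as the desired pool size, then caps it by the server's `max_connections` minus administrative headroom, divided across application instances. The function name and the `app_instances` parameter are assumptions introduced for illustration.

```rust
/// One possible reading of the sizing heuristic described above.
///
/// * `worker_threads`: async worker threads in this process
/// * `pg_max_connections`: the Postgres server's `max_connections` setting
/// * `admin_headroom`: connections reserved for migrations, psql sessions, monitoring
/// * `app_instances`: how many copies of the service share the database (assumption)
pub fn suggested_max_connections(
    worker_threads: u32,
    pg_max_connections: u32,
    admin_headroom: u32,
    app_instances: u32,
) -> u32 {
    // Desired size: two connections per worker thread.
    let desired = worker_threads.saturating_mul(2);
    // What the server can actually give us after reserving headroom.
    let usable = pg_max_connections.saturating_sub(admin_headroom);
    // Split the usable budget evenly; treat zero instances as one.
    let per_instance = usable / app_instances.max(1);
    // Never exceed the server budget, and never return an unusable pool of zero.
    desired.min(per_instance).max(1)
}
```

With 8 workers, a server limit of 100, 5 reserved connections, and 2 instances, this returns 16, which could then be passed to `PgPoolOptions::new().max_connections(...)`. Treat the output as a starting point to validate under load, not a final answer.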

SQLx migrations via `sqlx migrate add` and `sqlx migrate run` give you timestamped migration files committed to version control, which is the right pattern. The gotcha is the `.sqlx` offline query cache. If you run `cargo build` without a live database and without a populated `.sqlx` directory, the build fails. Because `cargo sqlx prepare` itself needs a live, migrated database, run it locally whenever your queries change, commit the resulting `.sqlx` directory to your repository, and set `SQLX_OFFLINE=true` for any CI build step that does not have a Postgres sidecar available.

Error handling is where most tutorial projects stay toy-grade. The production pattern is a central error enum that implements `actix_web::ResponseError`, mapping database errors (unique constraint violations, not-found rows, connection failures) to specific HTTP status codes. A `404` from a missing row and a `500` from a connection timeout are not the same error, and collapsing them with a generic handler is a debugging nightmare in production. The `tracing` crate, configured with `tracing_actix_web` middleware for request IDs, gives you structured log output that ties a request through every layer without manual threading of context.
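
As a rough illustration of that mapping, here is a dependency-free sketch of a central error enum. `ApiError` and `DbFailure` are hypothetical names; `DbFailure` is a simplified stand-in loosely modeled on the kinds of failures a database driver reports, not the actual `sqlx::Error` type. In a real service, the same `match` would sit inside an `impl actix_web::ResponseError for ApiError`, returning the framework's status type where this sketch uses a bare `u16`.

```rust
use std::fmt;

/// Central API error type (hypothetical). Each variant maps to one HTTP status.
#[derive(Debug, PartialEq, Eq)]
pub enum ApiError {
    NotFound,
    Conflict(String),
    Validation(String),
    Internal,
}

/// Simplified stand-in for driver-level failures (assumption, not a real SQLx type).
#[derive(Debug)]
pub enum DbFailure {
    RowNotFound,
    UniqueViolation { constraint: String },
    PoolTimedOut,
}

impl From<DbFailure> for ApiError {
    fn from(failure: DbFailure) -> Self {
        match failure {
            // A missing row is the client's problem: 404.
            DbFailure::RowNotFound => ApiError::NotFound,
            // A unique-constraint violation is a conflict with existing state: 409.
            DbFailure::UniqueViolation { constraint } => {
                ApiError::Conflict(format!("violates unique constraint {constraint}"))
            }
            // Infrastructure failures stay opaque to the caller: 500.
            DbFailure::PoolTimedOut => ApiError::Internal,
        }
    }
}

impl ApiError {
    /// HTTP status code for this error.
    pub fn status_code(&self) -> u16 {
        match self {
            ApiError::NotFound => 404,
            ApiError::Conflict(_) => 409,
            ApiError::Validation(_) => 422,
            ApiError::Internal => 500,
        }
    }
}

impl fmt::Display for ApiError {
    fn fmt(&self, f: &mut fmt::Formatter<'_>) -> fmt::Result {
        match self {
            ApiError::NotFound => write!(f, "resource not found"),
            ApiError::Conflict(detail) => write!(f, "conflict: {detail}"),
            ApiError::Validation(detail) => write!(f, "validation failed: {detail}"),
            // Deliberately carries no internal detail in the response body.
            ApiError::Internal => write!(f, "internal server error"),
        }
    }
}
```

The `From` conversion is what lets handlers use `?` on database calls and still produce distinct status codes. The full underlying error would be logged through `tracing`, not returned to the client.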

Testing Against a Real Database

The integration test pattern that holds up uses a Dockerized Postgres instance, either a shared test database that each test suite migrates fresh, or per-test ephemeral databases with `CREATE DATABASE` in the test setup. `sqlx::test` attribute macros handle much of this ceremony. Unit tests can mock at the handler level, but compile-time query checks mean most of your logic errors surface before the test runner even loads, which is precisely the point.

For CI, a GitHub Actions workflow with a `postgres` service container wired to the same `DATABASE_URL` your compile step uses gives you end-to-end confidence on every push without external infrastructure.

Deployment and Production Hardening

Multistage Dockerfiles are the standard here: a `rust` builder stage (ideally pinned to a specific version tag instead of `rust:latest`) compiles the binary, and an `ubuntu:22.04` or `debian:bookworm-slim` runtime stage copies just the binary and any required shared libraries. Because the binary links dynamically against glibc by default, the runtime image's glibc must be at least as new as the builder's. The result is a container image in the 20 to 50 megabyte range, a significant operational advantage over JVM or Python runtimes. The single-binary deployment model, one of Rust's most underrated production properties, means no interpreter version mismatches and no `requirements.txt` surprises.

Before calling a service production-ready, run through this checklist:

- Set `DATABASE_URL` and all secrets via environment variables; never hardcode.

- Configure pool `min_connections`, `max_connections`, `acquire_timeout` (named `connect_timeout` in older SQLx releases), and `idle_timeout` explicitly.

- Commit the `.sqlx` offline query cache; in CI, either build with `SQLX_OFFLINE=true` or verify the cache against a live database with `cargo sqlx prepare --check`.

- Implement a central `ResponseError` enum covering at minimum: not-found, conflict (constraint violation), unprocessable entity (validation), and internal server error.

- Add `tracing_actix_web::TracingLogger` middleware for per-request structured spans.

- Export traces to an OpenTelemetry-compatible collector and metrics to a Prometheus-compatible one; annotate slow query paths with explicit span instrumentation.

- Run migrations as a startup step, not a manual operation, using `sqlx::migrate!()` in your `main()`.

- Validate your Docker image starts cleanly against a fresh Postgres instance in your CI pipeline.
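
The first two checklist items can be sketched together as a small settings loader. This is a minimal sketch under stated assumptions: only `DATABASE_URL` comes from the checklist, while the `DB_*` variable names, the default values, and the `PoolSettings` type are hypothetical. The lookup is passed in as a closure so the parsing logic can be exercised without touching the process environment.

```rust
use std::str::FromStr;
use std::time::Duration;

/// Explicit pool configuration (hypothetical type; field names mirror common pool options).
#[derive(Debug, PartialEq, Eq)]
pub struct PoolSettings {
    pub database_url: String,
    pub min_connections: u32,
    pub max_connections: u32,
    pub acquire_timeout: Duration,
    pub idle_timeout: Duration,
}

/// Parses an optional raw value, falling back to `default` when the key is unset.
fn parse_or<T: FromStr>(raw: Option<String>, key: &str, default: T) -> Result<T, String> {
    match raw {
        Some(value) => value
            .trim()
            .parse::<T>()
            .map_err(|_| format!("{key} is not a valid number: {value:?}")),
        None => Ok(default),
    }
}

impl PoolSettings {
    /// Builds settings from any key lookup. Defaults here are placeholders, not recommendations.
    pub fn from_lookup<F>(lookup: F) -> Result<Self, String>
    where
        F: Fn(&str) -> Option<String>,
    {
        // The connection string is mandatory and never hardcoded.
        let database_url = lookup("DATABASE_URL")
            .filter(|url| !url.trim().is_empty())
            .ok_or_else(|| "DATABASE_URL is not set".to_string())?;

        let min_connections = parse_or(lookup("DB_MIN_CONNECTIONS"), "DB_MIN_CONNECTIONS", 1u32)?;
        let max_connections = parse_or(lookup("DB_MAX_CONNECTIONS"), "DB_MAX_CONNECTIONS", 10u32)?;
        let acquire_secs = parse_or(lookup("DB_ACQUIRE_TIMEOUT_SECS"), "DB_ACQUIRE_TIMEOUT_SECS", 5u64)?;
        let idle_secs = parse_or(lookup("DB_IDLE_TIMEOUT_SECS"), "DB_IDLE_TIMEOUT_SECS", 300u64)?;

        // Fail at startup instead of discovering an impossible pool at runtime.
        if min_connections > max_connections {
            return Err(format!(
                "DB_MIN_CONNECTIONS ({min_connections}) exceeds DB_MAX_CONNECTIONS ({max_connections})"
            ));
        }

        Ok(Self {
            database_url,
            min_connections,
            max_connections,
            acquire_timeout: Duration::from_secs(acquire_secs),
            idle_timeout: Duration::from_secs(idle_secs),
        })
    }

    /// Reads the same keys from the process environment.
    pub fn from_env() -> Result<Self, String> {
        Self::from_lookup(|key| std::env::var(key).ok())
    }
}
```

Calling `PoolSettings::from_env()` at the top of `main()` and exiting on `Err` turns a misconfigured deployment into an immediate, readable startup failure. The resulting fields would then be fed to the pool builder.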

The Actix Web + SQLx + Postgres combination is opinionated in all the places it needs to be. Postgres developers already value reliability and compile-time correctness, which is exactly the contract SQLx offers. Rust's safety guarantees do not stop at memory; they extend through your query layer, your error types, and your async boundaries. The teams most likely to succeed with this stack are those willing to trust the compiler enough to let it catch the errors they would otherwise find in production.
