OpenObserve Rewrites XDrain in Rust, Achieves 40x Log Detection Speedup

OpenObserve's Rust rewrite of XDrain hit 361,000 logs/sec, a 40x jump over Python, using prefix trees and memory-bounded LRU caches.

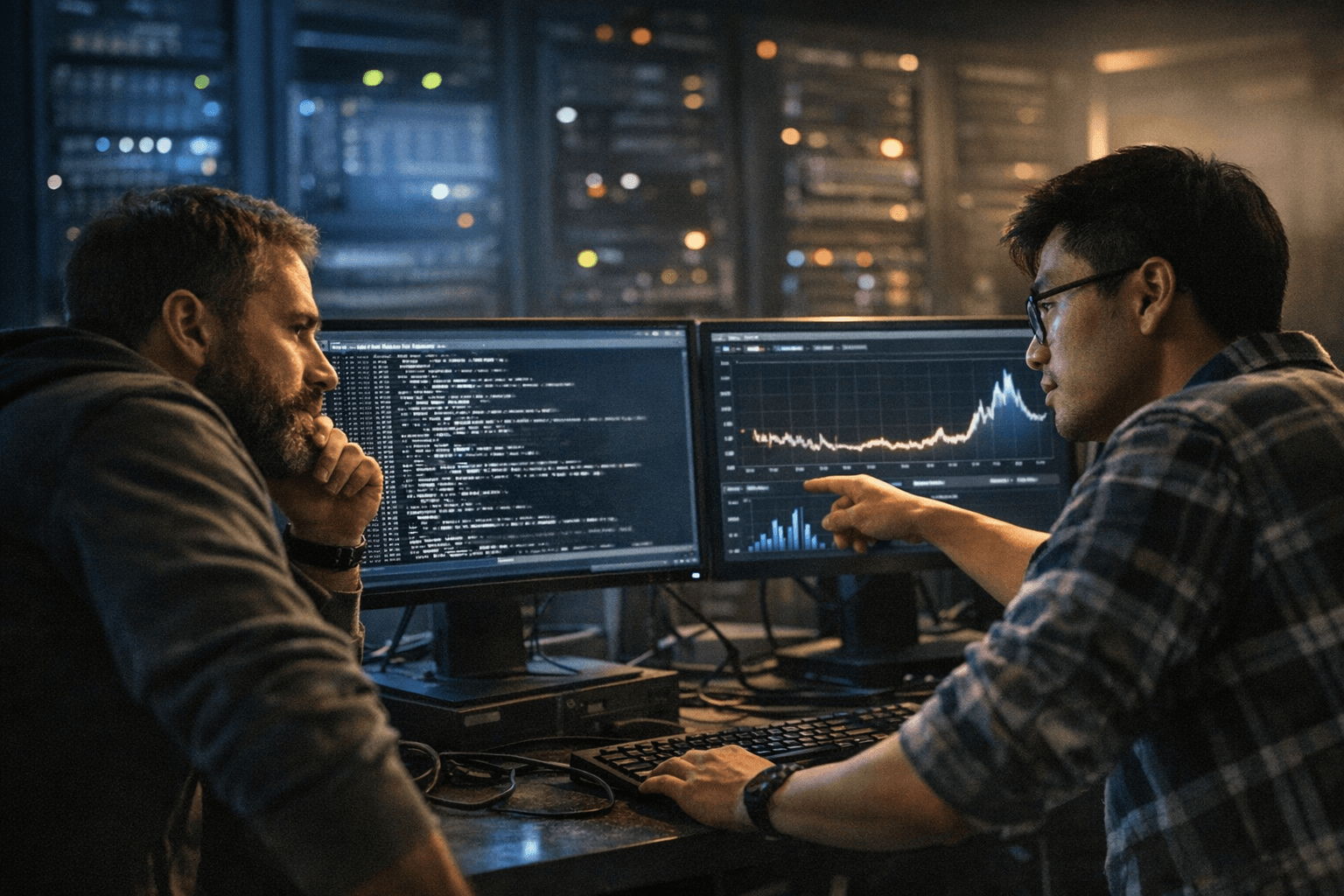

Processing 361,000 log lines per second in real time is the kind of number that makes observability engineers sit up straight, and that is exactly what OpenObserve's engineering team achieved after rewriting their XDrain log pattern extraction algorithm from Python into Rust.

Ashish Kolhe published the technical post on March 17, 2026, with Manas Sharma following the next day under the same headline: "How We Built XDrain in Rust and Why It Made Log Pattern Detection Actually Fast." The core result is a roughly 40x performance improvement over the previous Python implementation, a gain the team credits to three specific algorithmic choices rather than a simple language swap.

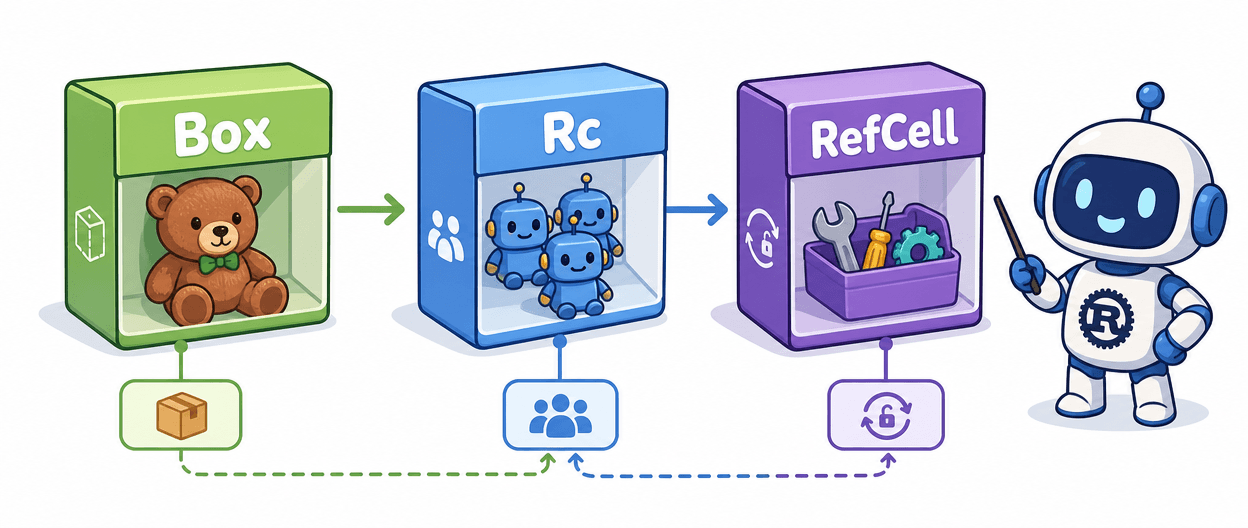

Those choices are prefix trees, systematic sampling, and memory-bounded LRU caches. Prefix trees give XDrain a structured, hierarchical way to group incoming log lines by shared token prefixes, avoiding redundant comparisons that accumulate quickly at scale. Systematic sampling reduces the volume of log lines the algorithm must process exhaustively without sacrificing pattern coverage. Memory-bounded LRU caches keep frequently seen patterns hot in memory while preventing unbounded growth, a concern that becomes acute when you are ingesting hundreds of thousands of lines per second in a long-running process.
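To make two of those ideas concrete, here is a minimal Rust sketch of systematic sampling and a bounded LRU cache. It is an illustration under stated assumptions, not OpenObserve's code: the names `systematic_sample` and `BoundedLru`, the `step` and `offset` parameters, and the choice to bound the cache by entry count rather than bytes are all hypothetical.

```rust
use std::collections::{HashMap, VecDeque};

/// Systematic sampling: keep every `step`-th line, starting at `offset`.
/// `step` and `offset` are illustrative parameters, not values from the post.
fn systematic_sample<'a>(lines: &[&'a str], step: usize, offset: usize) -> Vec<&'a str> {
    assert!(step > 0, "step must be at least 1");
    lines.iter().copied().skip(offset).step_by(step).collect()
}

/// A small LRU cache of pattern counts, bounded by number of entries
/// (an assumption; a real cache might bound by bytes instead).
/// Recency is tracked in a VecDeque, which costs O(n) per touch; a
/// production cache would use a linked structure for O(1) updates.
struct BoundedLru {
    capacity: usize,
    counts: HashMap<String, u64>,
    order: VecDeque<String>, // front = least recently used
}

impl BoundedLru {
    fn new(capacity: usize) -> Self {
        assert!(capacity > 0, "capacity must be at least 1");
        Self { capacity, counts: HashMap::new(), order: VecDeque::new() }
    }

    /// Record one occurrence of `pattern` and return its running count.
    /// If the cache is full and the pattern is new, the least recently
    /// used pattern is evicted first.
    fn record(&mut self, pattern: &str) -> u64 {
        if self.counts.contains_key(pattern) {
            // Known pattern: drop its old position so it can move to the back.
            if let Some(pos) = self.order.iter().position(|p| p.as_str() == pattern) {
                self.order.remove(pos);
            }
        } else if self.counts.len() == self.capacity {
            // New pattern and no room: evict the least recently used entry.
            if let Some(oldest) = self.order.pop_front() {
                self.counts.remove(&oldest);
            }
        }
        self.order.push_back(pattern.to_string());
        let count = self.counts.entry(pattern.to_string()).or_insert(0);
        *count += 1;
        *count
    }

    fn contains(&self, pattern: &str) -> bool {
        self.counts.contains_key(pattern)
    }

    fn len(&self) -> usize {
        self.counts.len()
    }
}
```

With a capacity of 2, recording `p1`, `p2`, `p1`, then `p3` evicts `p2`, because `p1` was touched more recently. The cache never holds more than two entries, which is the property that keeps memory flat in a long-running ingest process.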

XDrain itself is a log pattern extraction algorithm: given a stream of raw, unstructured log messages, it identifies recurring structural patterns and clusters lines accordingly. The problem sits at the heart of observability pipelines because a stream of a million log events per minute is of little use without a way to collapse "disk I/O error on /dev/sda" from ten thousand hosts into a single actionable pattern rather than ten thousand individual entries. Python's GIL and interpreter overhead make it a likely bottleneck for this kind of tight, per-line processing loop, which helps explain why the OpenObserve team chose Rust for the rewrite rather than reaching for Cython or a C extension.
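The clustering idea can be sketched with a token prefix tree in Rust. This is a simplified illustration, not XDrain's actual algorithm: the masking rule in `mask_token` (treat any token that starts with `/` or contains a digit as variable) and the `Node` and `PatternTree` types are assumptions made for the example.

```rust
use std::collections::HashMap;

/// Replace tokens that look variable with the wildcard "<*>".
/// The rule used here (leading '/' or any ASCII digit) is a simplifying
/// assumption, not XDrain's real heuristic.
fn mask_token(token: &str) -> &str {
    if token.starts_with('/') || token.chars().any(|c| c.is_ascii_digit()) {
        "<*>"
    } else {
        token
    }
}

/// One node per masked token; lines sharing a token prefix share nodes.
#[derive(Default)]
struct Node {
    children: HashMap<String, Node>,
    count: u64, // number of lines whose masked token sequence ends here
}

#[derive(Default)]
struct PatternTree {
    root: Node,
}

impl PatternTree {
    /// Insert a raw log line and return the template it was grouped under.
    fn insert(&mut self, line: &str) -> String {
        let mut node = &mut self.root;
        let mut template = Vec::new();
        for token in line.split_whitespace() {
            let masked = mask_token(token);
            template.push(masked);
            // Walk down the tree, creating the child node if it is missing.
            node = node.children.entry(masked.to_string()).or_default();
        }
        node.count += 1;
        template.join(" ")
    }

    /// Number of inserted lines that matched `template` exactly.
    fn count(&self, template: &str) -> u64 {
        let mut node = &self.root;
        for token in template.split_whitespace() {
            match node.children.get(token) {
                Some(child) => node = child,
                None => return 0,
            }
        }
        node.count
    }
}
```

Under these assumptions, `host-17 disk I/O error on /dev/sda` and `host-9042 disk I/O error on /dev/sdb` both collapse to the template `<*> disk I/O error on <*>`, so the tree reports one pattern with a count of 2 instead of two unrelated entries.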

The 40x figure was measured against the prior Python implementation, though the engineering post does not detail the hardware configuration or the specific log dataset used in benchmarking. Whether those gains hold across different log morphologies, line lengths, and cardinality distributions remains an open question until the team publishes fuller methodology.

OpenObserve has been building toward faster incident resolution across its platform, framing AI-powered observability around reducing Mean Time to Resolution through detection, triage, diagnosis, and remediation. A log pattern extraction layer that can keep pace with real-time ingestion at this throughput is foundational to that goal: patterns identified faster mean anomalies surface faster, and faster anomaly surfacing is where MTTR reductions actually begin.
