Rust Concurrency Guide Favors Message Passing Over Shared State

Message passing beats shared state in Rust concurrency, and here's the async/await, Tokio, and channels breakdown that's got developers talking.

Rust's concurrency model has always been one of its sharpest selling points, but knowing *which* tools to reach for, and when, separates clean concurrent code from a tangle of locks, contention, and deadlocks. A recent breakdown from an experienced Rust developer has crystallized the community's thinking around a clear hierarchy: favor message passing over shared state, lean on async/await for non-blocking work, treat Tokio as your runtime backbone, use channels to move ownership cleanly, and keep `Arc<Mutex<T>>` in reserve for the cases where nothing else fits. The post sparked significant developer discussion, and it's easy to see why: the guidance cuts through a topic that trips up even experienced systems programmers.

Why Message Passing Wins

The philosophical core of this guidance is that shared mutable state is the root of most concurrency bugs. Safe Rust already rules out data races at compile time: two threads or tasks cannot both hold a mutable reference to the same data. That guarantee does not extend to higher-level problems such as deadlocks, lock contention, or updates applied in the wrong order. The moment you introduce `Arc<Mutex<T>>`, exclusive access is enforced by a lock at runtime rather than by the borrow checker, and correctness starts to depend on the discipline of whoever holds the lock.

Message passing sidesteps that problem by design. When one task sends data to another through a channel, ownership of that data transfers with the message. There's no shared reference; the sender gives up the value, and the receiver takes it. This maps directly onto Rust's ownership model in a way that feels natural rather than bolted on. The result is code that's easier to reason about, easier to test, and far less prone to the deadlocks and lock-ordering mistakes that plague lock-heavy concurrent systems.
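The ownership transfer described above can be sketched with nothing but the standard library. This is a minimal illustration, not the original post's code: the function name `sum_in_worker` is hypothetical, and it uses OS threads with `std::sync::mpsc` rather than async tasks so that it runs without any external crate.

```rust
use std::sync::mpsc;
use std::thread;

/// Sends `data` to a worker thread over a channel and returns the sum it computes.
/// (Hypothetical helper, for illustration only.)
fn sum_in_worker(data: Vec<u64>) -> u64 {
    let (tx, rx) = mpsc::channel::<Vec<u64>>();

    // The worker owns the receiving end and every value that arrives through it.
    let worker = thread::spawn(move || {
        let mut total = 0;
        for batch in rx {
            // `batch` is owned by this thread, so no lock is needed to read it.
            total += batch.iter().sum::<u64>();
        }
        total
    });

    // `send` takes `data` by value: ownership moves into the channel.
    tx.send(data).expect("worker hung up");
    // Touching `data` here would not compile ("borrow of moved value").

    // Dropping the last sender closes the channel and ends the worker's loop.
    drop(tx);
    worker.join().expect("worker panicked")
}

fn main() {
    println!("{}", sum_in_worker(vec![1, 2, 3, 4]));
}
```

The sender cannot race with the worker because, after `send`, it no longer has anything to race with.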

Async/Await for Non-Blocking Operations

Modern Rust concurrency almost always starts with `async`/`await`. Rather than spawning a thread for every unit of work, async functions let the runtime schedule thousands of tasks on a small pool of OS threads, suspending execution at each `await` point and resuming when the underlying I/O or timer is ready. This is the non-blocking model that makes Rust competitive with Go and Node.js for high-throughput networked services, while still giving you the memory safety and zero-cost abstractions the language is known for.

Writing async code in Rust means marking functions with `async fn` and using `.await` wherever the function waits on another future; each `.await` is a point where the task may yield control to the runtime. The compiler transforms these functions into state machines under the hood, which is why Rust's async is described as zero-cost: the state machine is an ordinary value, and there's no heap allocation per suspension point unless you explicitly box a future. That efficiency matters enormously at scale, and it's one reason the async model is the default recommendation for any I/O-bound concurrent workload.
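To make the state-machine idea concrete, here is a small sketch that drives an `async fn` by hand using only the standard library. The `block_on` function and `ThreadWaker` type are a hypothetical toy executor written for this illustration, closely following the pattern in the `std::task::Wake` documentation; a real application would use a full runtime instead.

```rust
use std::future::Future;
use std::pin::pin;
use std::sync::Arc;
use std::task::{Context, Poll, Wake, Waker};
use std::thread::{self, Thread};

/// Wakes the thread that is parked inside `block_on`.
struct ThreadWaker(Thread);

impl Wake for ThreadWaker {
    fn wake(self: Arc<Self>) {
        self.0.unpark();
    }
}

/// Toy executor (illustrative only): polls one future to completion
/// on the current thread.
fn block_on<F: Future>(fut: F) -> F::Output {
    let mut fut = pin!(fut);
    let waker = Waker::from(Arc::new(ThreadWaker(thread::current())));
    let mut cx = Context::from_waker(&waker);
    loop {
        match fut.as_mut().poll(&mut cx) {
            Poll::Ready(value) => return value,
            // Not ready yet: sleep until the waker is invoked, then poll again.
            Poll::Pending => thread::park(),
        }
    }
}

async fn double(x: u32) -> u32 {
    x * 2
}

async fn compute() -> u32 {
    // Each `.await` is a point where the state machine may suspend.
    let a = double(10).await;
    let b = double(a).await;
    a + b
}

fn main() {
    // Calling an async fn runs nothing; it only builds the state machine.
    let fut = compute();
    println!("state machine size: {} bytes", std::mem::size_of_val(&fut));
    println!("result: {}", block_on(fut));
}
```

The future returned by `compute()` is a plain value whose size is known at compile time, and nothing inside it executes until something polls it.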

Tokio as the Runtime

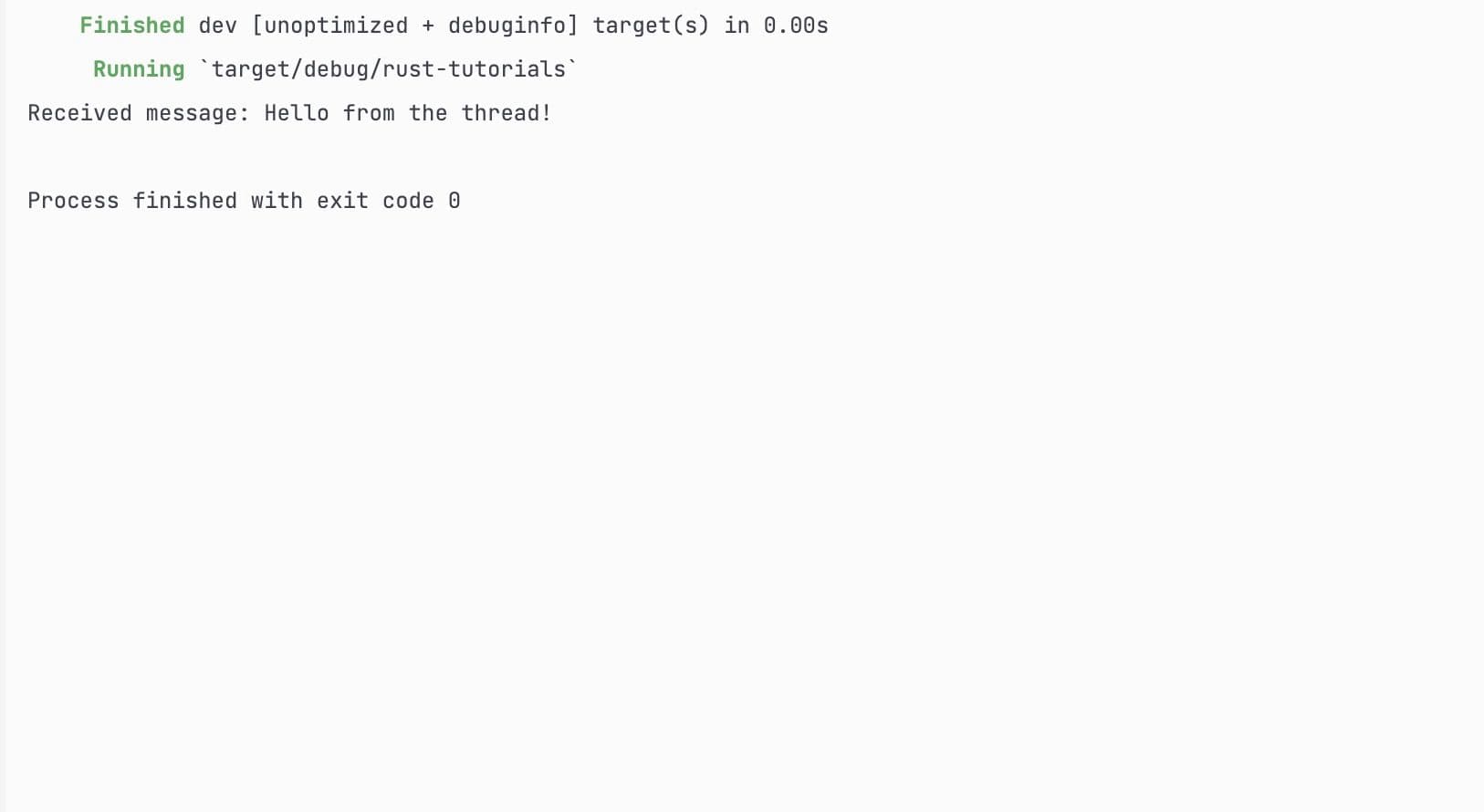

`async`/`await` syntax in Rust is runtime-agnostic by design; the language gives you the building blocks but doesn't ship a built-in executor. In practice, Tokio has become the dominant choice, and for good reason. Tokio is a production-grade async runtime that provides a multi-threaded work-stealing scheduler, async I/O built on epoll/kqueue/IOCP depending on the platform, timers, and a rich ecosystem of compatible libraries. When you annotate your main function with `#[tokio::main]`, you're opting into all of that infrastructure.

The guidance here is to treat Tokio not just as a dependency but as the foundation your entire async stack is built on. Most of the major async crates, including Hyper for HTTP, Axum for web services, and SQLx for database access, are designed to run on Tokio. Picking Tokio early means you're working with the grain of the ecosystem rather than against it. The runtime handles task spawning, scheduling, and I/O readiness notification so your application code can stay focused on business logic.

Channels for Ownership Transfer

Within an async Tokio application, channels are the idiomatic way to pass data between tasks. Tokio ships `tokio::sync::mpsc` for multi-producer, single-consumer scenarios, `tokio::sync::oneshot` for single-use response handles, and `tokio::sync::broadcast` for fan-out situations where multiple receivers need the same message. With `mpsc` and `oneshot`, the value moves from the sender to the receiver. With `broadcast`, the message type must implement `Clone` and each receiver gets its own copy. In every case, no two tasks ever hold a mutable reference to the same value.

This isn't just a stylistic preference. The ownership transfer through channels is what gives message-passing concurrency its safety guarantees in Rust. When a task sends a value into a channel, it can no longer access that value; the borrow checker enforces this statically. The receiving task gets exclusive ownership and can mutate it freely without any lock. This pattern scales cleanly from simple producer-consumer pipelines to complex actor-style architectures where each task owns a piece of state and communicates with others exclusively through messages.

Choosing the right channel type matters. Use `mpsc` when multiple producers need to feed a single worker. Use `oneshot` when a task needs to hand exactly one result back to whoever requested it, much like a function return value delivered asynchronously. Use `broadcast` when you have an event bus pattern where many listeners need to react to the same stream of events.

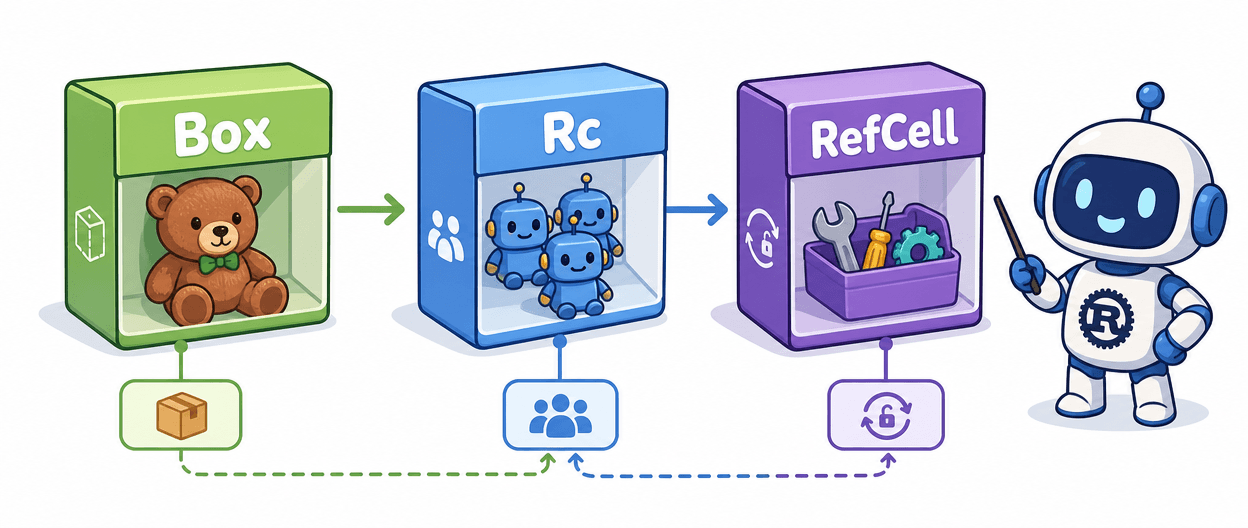

Arc<Mutex<T>> Used Sparingly

None of this means `Arc<Mutex<T>>` has no place in Rust concurrency. It does, and understanding when to use it is as important as knowing when to avoid it. `Arc` (Atomically Reference Counted) allows multiple tasks or threads to hold a reference to the same heap-allocated value. Wrapping the inner value in a `Mutex` ensures only one task can access it at a time.

The guidance to use it sparingly isn't a prohibition; it's a calibration. `Arc<Mutex<T>>` is the right tool when you have a genuinely shared resource, a cache, a connection pool, a config object, where cloning the data for every task would be wasteful and message-passing indirection would add more complexity than it removes. The problem arises when developers reach for it reflexively, as a familiar pattern from other languages, rather than considering whether the data could simply be owned by a single task that serves requests through a channel.

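Overuse of `Arc<Mutex<T>>` creates several practical hazards. Lock contention degrades throughput under load. Holding a lock across an `.await` point is risky: with `tokio::sync::Mutex`, every other waiter stays blocked for as long as the holding task is suspended, and with `std::sync::Mutex` the result can be a stalled worker thread or a deadlock. (The standard library's guard is not `Send`, so the compiler often rejects such a future outright when it is spawned on Tokio's multi-threaded runtime.) And nested locks, where code holding one lock acquires another, are a deadlock waiting to happen. Message passing avoids most of these bugs by construction, although a cycle of tasks blocked on full bounded channels can still deadlock.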
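For the cases where a shared resource really is the right fit, a sketch along these lines keeps the critical section as short as possible. It uses OS threads and `std::sync::Mutex` so that it runs with the standard library alone; the `Cache` alias and `count_words` function are hypothetical names for illustration.

```rust
use std::collections::HashMap;
use std::sync::{Arc, Mutex};
use std::thread;

/// Hypothetical shared cache: word -> number of times seen.
type Cache = Arc<Mutex<HashMap<String, u32>>>;

/// Counts words from several inputs, one worker thread per input.
fn count_words(inputs: Vec<Vec<&'static str>>) -> HashMap<String, u32> {
    let cache: Cache = Arc::new(Mutex::new(HashMap::new()));

    let handles: Vec<_> = inputs
        .into_iter()
        .map(|words| {
            // Each worker gets its own reference-counted handle to the same map.
            let cache = Arc::clone(&cache);
            thread::spawn(move || {
                for word in words {
                    // The guard is a temporary, so the lock is released
                    // at the end of this statement.
                    *cache.lock().unwrap().entry(word.to_string()).or_insert(0) += 1;
                }
            })
        })
        .collect();

    for handle in handles {
        handle.join().expect("worker panicked");
    }

    // All workers have finished; take a snapshot of the final contents.
    let snapshot = cache.lock().unwrap().clone();
    snapshot
}

fn main() {
    let counts = count_words(vec![vec!["a", "b"], vec!["a"], vec!["b", "a"]]);
    println!("a = {:?}, b = {:?}", counts.get("a"), counts.get("b"));
}
```

The same discipline carries over to async code: do the work that needs the lock, let the guard drop, and only then reach an `.await`.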

Putting It Together

The mental model this guidance promotes is straightforward: design your concurrent system around tasks that each own their state, communicate through channels, and use `async`/`await` to stay non-blocking throughout. Tokio orchestrates the scheduling. Channels enforce ownership boundaries. `Arc<Mutex<T>>` appears only when a genuinely shared resource has no cleaner alternative.

This isn't a theoretical preference. It reflects the accumulated experience of the Rust community working on production systems where correctness and throughput both matter. The developer discussion this breakdown sparked is a sign that the community is still actively refining its intuitions around concurrency patterns, and that the "channels-first, shared state as a last resort" philosophy continues to resonate as the practical standard for idiomatic Rust.
