Rust Developers Can Now Run BERT, YOLO, and LLaMA via ONNX Runtime Bindings

Google's Magika and SurrealDB already run on it. `ort` 2.0.0-rc.12 brings hardware-accelerated BERT, YOLO, and LLaMA inference to Rust, with comparative guides reporting roughly 4x the CPU throughput of a Python stack.

With `ort` and ONNX Runtime, you can run models including ResNet, YOLOv8, BERT, and LLaMA on almost any hardware, often far faster than PyTorch, with the added efficiency of Rust. That pitch has been circulating since the project launched, but the growing list of serious production adopters and a wave of new guides in the last week of March 2026 have pushed `ort` into territory that is hard for Rust ML practitioners to ignore.

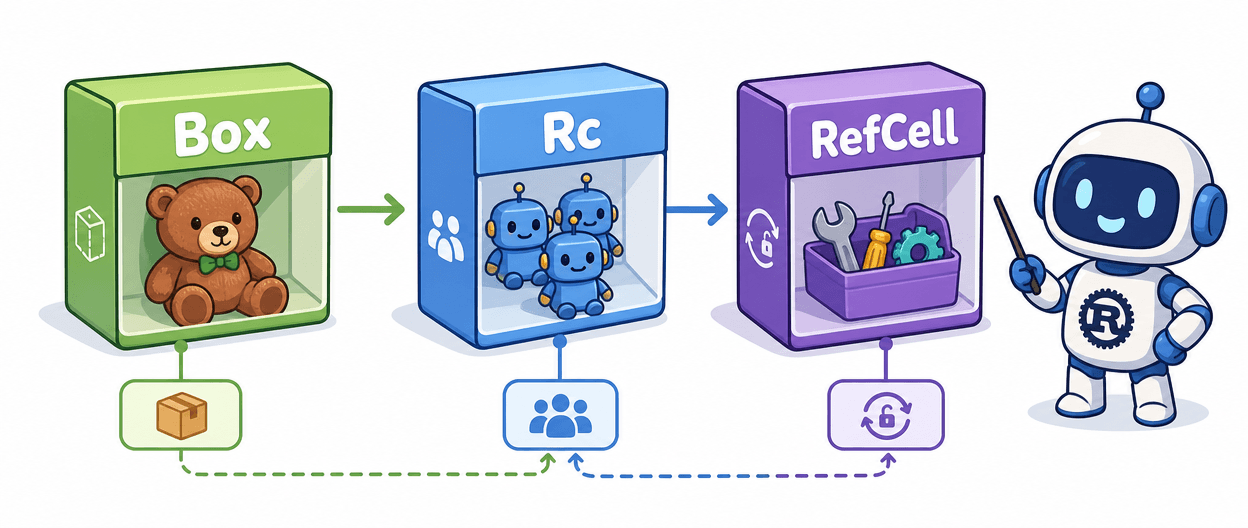

`ort` 2.0.0-rc.12 is described in the official documentation as "production-ready (just not API stable)," with the maintainers recommending it for both new and existing projects. Based on the now-inactive `onnxruntime-rs` crate, `ort` is primarily a wrapper for Microsoft's ONNX Runtime library, but offers support for other pure-Rust runtimes. The API surface is ergonomic by design: the `inputs!` macro constructs `SessionInputs` from either an array or a named map of values, and a single call to `model.run()` returns typed outputs you can extract directly as `ndarray` arrays.

Under the hood, the crate is organized into focused modules. `Environment` is the process-global configuration under which `Session`s are created, while `ExecutionProvider`s provide hardware acceleration to those sessions. The `compiler` module provides `ModelCompiler`, which optimizes and creates EP graphs for an ONNX model ahead-of-time to greatly reduce startup time — a detail that matters enormously in serverless and cold-start scenarios. A `training` module is also present in the crate docs, advertising `Trainer` as "a simple interface for on-device training/fine-tuning," though an earlier version of the feature flag documentation noted that training support was "currently unavailable through high-level bindings." Treat that capability as experimental until the 2.0.0 stable release clarifies scope.

The performance case is the real reason people reach for `ort` over a pure-Rust alternative. Comparative deployment guides put the gap in concrete terms, all at batch size 32: Python with `transformers` and PyTorch handles roughly 100 sentences per second on CPU and around 1,000 on GPU, while Rust via ONNX Runtime reaches approximately 400 on CPU and 5,000 on GPU. That is about 4x on CPU and 5x on GPU. Baseline memory drops from roughly 2 GB in Python to around 100 MB in Rust, and cold-start time falls from 3–5 seconds to 200–500 milliseconds.

On the hardware side, ONNX Runtime in Rust supports accelerators including NVIDIA CUDA, Intel OpenVINO, Qualcomm QNN, and Huawei CANN. The CUDA and TensorRT execution providers cover NVIDIA GPU inference, while OpenVINO targets Intel processors.
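The ratios implied by the quoted figures can be worked out directly. The sketch below is plain Rust with no dependency on `ort`. The `Profile` struct and the helper names are hypothetical, invented for this illustration. The numbers are the approximate ones quoted above, with 2 GB rounded to 2,000 MB, and nothing here measures anything.

```rust
/// Approximate figures quoted in the article (batch size 32).
/// `Profile` is a hypothetical struct for this sketch, not an `ort` type.
struct Profile {
    name: &'static str,
    cpu_sentences_per_sec: f64,
    gpu_sentences_per_sec: f64,
    baseline_memory_mb: f64,
    /// (fastest, slowest) cold start in milliseconds.
    cold_start_ms: (f64, f64),
}

/// How many times larger `a` is than `b`.
fn speedup(a: f64, b: f64) -> f64 {
    a / b
}

/// Time to push one batch through at a given steady-state throughput.
fn batch_latency_ms(batch_size: u32, sentences_per_sec: f64) -> f64 {
    f64::from(batch_size) * 1000.0 / sentences_per_sec
}

fn python_profile() -> Profile {
    Profile {
        name: "Python (transformers + PyTorch)",
        cpu_sentences_per_sec: 100.0,
        gpu_sentences_per_sec: 1_000.0,
        baseline_memory_mb: 2_000.0, // "roughly 2 GB", rounded
        cold_start_ms: (3_000.0, 5_000.0),
    }
}

fn rust_profile() -> Profile {
    Profile {
        name: "Rust (ONNX Runtime)",
        cpu_sentences_per_sec: 400.0,
        gpu_sentences_per_sec: 5_000.0,
        baseline_memory_mb: 100.0,
        cold_start_ms: (200.0, 500.0),
    }
}

fn main() {
    let (py, rs) = (python_profile(), rust_profile());
    println!("{} vs {}", rs.name, py.name);
    println!(
        "CPU throughput: {:.1}x",
        speedup(rs.cpu_sentences_per_sec, py.cpu_sentences_per_sec)
    );
    println!(
        "GPU throughput: {:.1}x",
        speedup(rs.gpu_sentences_per_sec, py.gpu_sentences_per_sec)
    );
    println!(
        "Memory baseline: {:.0}x smaller",
        speedup(py.baseline_memory_mb, rs.baseline_memory_mb)
    );
    // Most conservative pairing: fastest Python start vs slowest Rust start.
    println!(
        "Cold start: at least {:.0}x faster, at most {:.0}x",
        speedup(py.cold_start_ms.0, rs.cold_start_ms.1),
        speedup(py.cold_start_ms.1, rs.cold_start_ms.0)
    );
    println!(
        "CPU time per 32-sentence batch: {:.0} ms (Rust) vs {:.0} ms (Python)",
        batch_latency_ms(32, rs.cpu_sentences_per_sec),
        batch_latency_ms(32, py.cpu_sentences_per_sec)
    );
}
```

Read this way, the CPU gap is 4x, the GPU gap is 5x, and the memory baseline is about 20x smaller. Because these are rounded figures from third-party guides, they are a starting point for your own benchmarks rather than a guarantee.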

The adoption list removes any lingering "hobby project" doubt. Bloop's semantic code search is powered by `ort`, SurrealDB's SurrealQL supports calling ONNX models through `ort`, Google's Magika file type detection library uses `ort`, and Wasmtime supports ONNX inference for the WASI-NN standard via `ort`. `rust-bert` implements ready-to-use NLP pipelines with both `tch` and `ort` backends, and Supabase relies on `ort` for AI support in its edge functions.

For developers evaluating the toolchain combination, `ort` provides a safe and idiomatic interface to the ONNX Runtime C API, pairing naturally with `tokenizers` for HuggingFace-compatible tokenization and `ndarray` for tensor operations. The workflow has four steps: export your PyTorch or TensorFlow model to ONNX, load it via `SessionBuilder`, wire up your execution provider of choice, and call `session.run()`. For WASM deployment, the docs point to `ort`'s `tract` or `candle` backends as alternatives to the full ONNX Runtime backend. One operational note: the download strategy, which fetches prebuilt ONNX Runtime binaries, provides the CUDA and TensorRT execution providers for Windows and Linux, but enabling any other execution provider requires compiling ONNX Runtime from source.
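The operational note about execution providers amounts to a small decision table, sketched below in plain Rust. None of this is `ort` API: the `Provider`, `Os`, and `Source` enums and both functions are hypothetical names invented for the sketch. The rules encode only what this article states, plus one labeled assumption: that the default CPU provider is always available in a prebuilt binary.

```rust
/// Execution providers named in the article. Hypothetical enum, not an `ort` type.
#[derive(Debug, Clone, Copy, PartialEq, Eq)]
enum Provider {
    Cpu,
    Cuda,
    TensorRt,
    OpenVino,
    Qnn,
    Cann,
}

/// Target platforms, coarsely grouped for this sketch.
#[derive(Debug, Clone, Copy, PartialEq, Eq)]
enum Os {
    Windows,
    Linux,
    Other,
}

/// How the ONNX Runtime binary would have to be obtained.
#[derive(Debug, Clone, Copy, PartialEq, Eq)]
enum Source {
    PrebuiltDownload,
    BuildFromSource,
}

/// Encodes the article's note: CUDA and TensorRT come prebuilt on Windows and
/// Linux; every other accelerator needs a source build.
fn source_for(provider: Provider, os: Os) -> Source {
    match (provider, os) {
        // Assumption: the CPU provider is part of every prebuilt binary.
        (Provider::Cpu, _) => Source::PrebuiltDownload,
        (Provider::Cuda | Provider::TensorRt, Os::Windows | Os::Linux) => {
            Source::PrebuiltDownload
        }
        _ => Source::BuildFromSource,
    }
}

/// First provider in preference order that does not require a source build.
fn first_prebuilt(preferences: &[Provider], os: Os) -> Option<Provider> {
    preferences
        .iter()
        .copied()
        .find(|&p| source_for(p, os) == Source::PrebuiltDownload)
}

fn main() {
    let all = [
        Provider::Cpu,
        Provider::Cuda,
        Provider::TensorRt,
        Provider::OpenVino,
        Provider::Qnn,
        Provider::Cann,
    ];
    for os in [Os::Windows, Os::Linux, Os::Other] {
        for provider in all {
            println!("{:?} on {:?}: {:?}", provider, os, source_for(provider, os));
        }
    }

    // A team that prefers OpenVINO but will not maintain a source build.
    let wanted = [Provider::OpenVino, Provider::Cuda, Provider::Cpu];
    println!("Linux pick: {:?}", first_prebuilt(&wanted, Os::Linux));
}
```

A table like this is a planning aid, not a description of what the crate does at runtime. Check the provider list in the current `ort` documentation before committing to a build strategy.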

The API is not yet stable, and the training module's actual scope needs verification against the current repository before shipping anything that depends on it. But for inference on standard model architectures, `ort` 2.0.0-rc.12 is the most battle-tested path from a Rust binary to a running BERT or YOLO model today.
