Rust, Java, and Python Benchmarked for AI-Generated Backend Code Workflows

Rust beats Java by 2-5x on compute benchmarks, but that raw speed edge carries a hidden cost: Rust's ownership model can slow the human review cycles that AI-assisted workflows depend on.

Speed is cheap to measure and expensive to misapply. A recent empirical comparison of Java, Python (PyPy), and Rust across realistic backend workloads drives home exactly that lesson: the fastest language for your binary is not always the fastest language for your team, especially now that AI is writing the first draft of your service code.

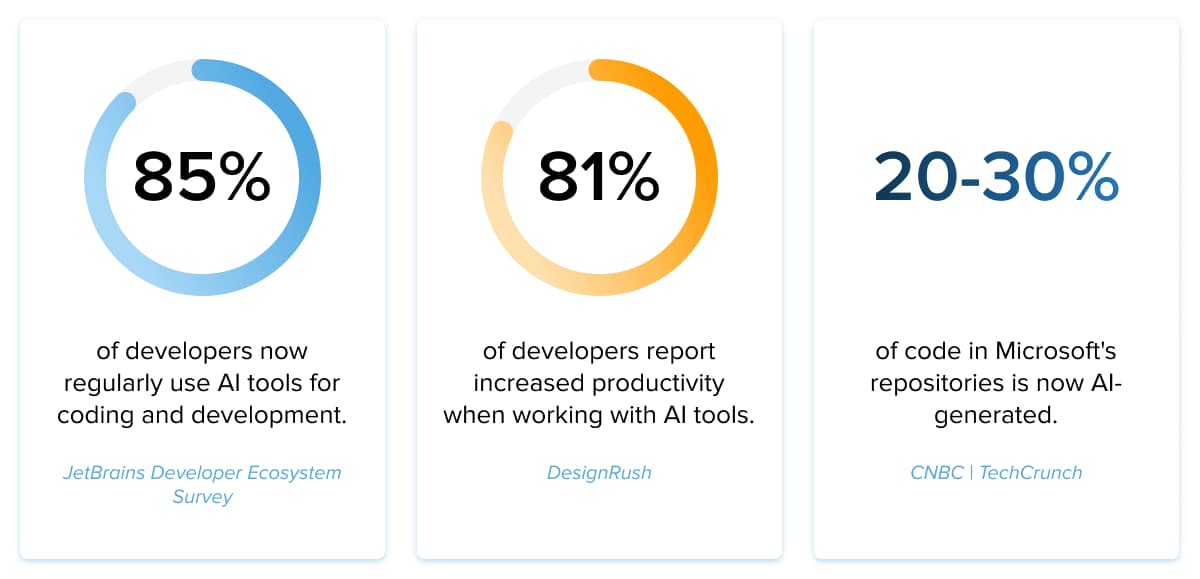

The analysis, published on the BSWEN blog, frames its findings squarely around a question that has become unavoidable in 2026: when an AI tool scaffolds your backend, which runtime gives you the best total workflow cost, counting not just clock cycles but the human review loop that has to validate every generated function before it ships?

What the Benchmarks Actually Show

The workloads chosen are deliberately algorithmic and compute-bound: N-body simulation, fasta sequence generation, k-nucleotide DNA string processing, and mandelbrot set rendering. These are not contrived microbenchmarks; they are the class of logic that appears in scientific computing, bioinformatics tooling, image processing pipelines, and data-intensive API services. They stress the CPU arithmetic path that separates compiled native code from interpreter overhead.

The headline numbers hold few surprises for anyone who has watched the Benchmarks Game for a decade. Java runs roughly 4 to 6 times faster than Python across most of these compute workloads, a gap that reflects the JVM's JIT compiler outpacing even PyPy's tracing JIT on integer-heavy loops. Rust, in turn, outperforms Java by approximately 2 to 5 times on several of these algorithmic tasks, running with no garbage collector pauses and tight control over memory layout. Multiplied together, those ranges put idiomatic Rust roughly 8 to 30 times faster than Python on the most compute-heavy paths, depending on the workload.
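The 8 to 30 times figure is simply the two quoted ranges multiplied end to end. A minimal sketch of that arithmetic follows; the variable and function names are illustrative, and the extremes are only reached if both gaps hit their extremes on the same workload, which the source does not claim.

```python
# Speedup ranges quoted above, as (low, high) multipliers.
java_over_python = (4.0, 6.0)
rust_over_java = (2.0, 5.0)

def compound(a, b):
    """Multiply two (low, high) speedup ranges end to end."""
    return (a[0] * b[0], a[1] * b[1])

rust_over_python = compound(java_over_python, rust_over_java)
print(f"Rust over Python: {rust_over_python[0]:.0f}x to {rust_over_python[1]:.0f}x")
# prints "Rust over Python: 8x to 30x"
```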

For a latency-sensitive service handling thousands of requests per second, a gap of that size can be the difference between two cloud instances and twenty.
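To see how a per-request CPU gap turns into an instance count, here is a rough capacity sketch. Every number in it (request rate, CPU milliseconds per request, cores per instance, target utilization) is a made-up assumption chosen to reproduce a two-versus-twenty split, not a figure from the benchmark.

```python
import math

def instances_needed(requests_per_second, cpu_ms_per_request,
                     cores_per_instance, target_utilization):
    """Estimate instance count for a purely CPU-bound service."""
    # Cores kept busy on average by the request stream.
    busy_cores = requests_per_second * cpu_ms_per_request / 1000
    # Leave headroom so instances run at the target utilization.
    provisioned_cores = busy_cores / target_utilization
    return max(1, math.ceil(provisioned_cores / cores_per_instance))

# Hypothetical service: 2,000 req/s, 4-core instances, 50% target utilization.
slow = instances_needed(2000, cpu_ms_per_request=20, cores_per_instance=4, target_utilization=0.5)
fast = instances_needed(2000, cpu_ms_per_request=2, cores_per_instance=4, target_utilization=0.5)
print(f"slow runtime: {slow} instances")  # slow runtime: 20 instances
print(f"fast runtime: {fast} instances")  # fast runtime: 2 instances
```

The model ignores I/O wait, memory limits, and autoscaling behavior, so it only applies where CPU time really is the dominant cost.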

The Variable the Benchmarks Cannot Capture

Raw throughput, however, is only one term in the cost equation. "Runtime efficiency determines your infrastructure bill, but review efficiency determines how quickly you can ship AI-generated features," as the BSWEN piece puts it directly. This is the observation that makes the article worth reading beyond its timing numbers.

AI-assisted code generation has quietly shifted the bottleneck in many engineering workflows. Generating boilerplate, scaffolding CRUD layers, wiring dependency injection, producing test stubs: these tasks now take seconds, not hours. The constraint has moved downstream, to the human reviewer who must audit what the model produced before it merges. That reviewer needs to verify semantics quickly, catch subtle logic errors, and flag misuse of APIs or concurrency primitives.

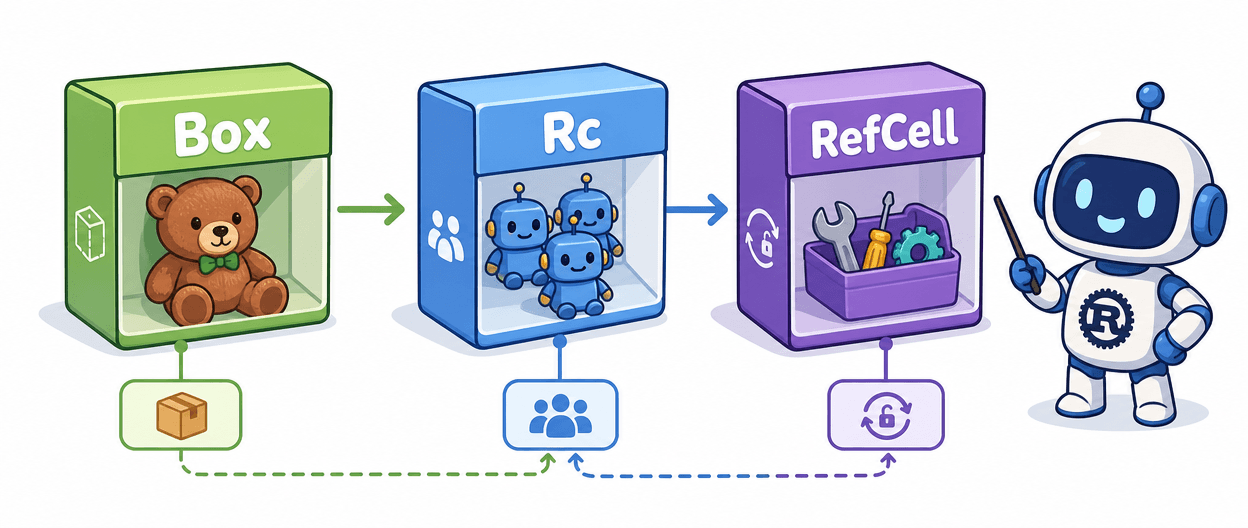

Rust's ownership and borrowing model is among the most semantically rich parts of any mainstream language. It is also, for engineers who are not already fluent in it, among the hardest to eyeball quickly. A lifetime annotation, a non-obvious borrow across an async boundary, or a subtle use of `Arc<Mutex<T>>` in AI-generated code can turn a two-minute review into a twenty-minute archaeology session. That friction compounds fast across a team shipping multiple AI-generated services per sprint. "Language choice is no longer only about peak performance; it's about a total workflow cost in an AI-augmented era."

Java occupies an interesting middle position here. It is not as fast as Rust, but it is fast enough to handle most production backend loads without exotic infrastructure scaling. More importantly, it is deeply familiar to a large fraction of working engineers. An AI-generated Spring Boot controller or a Quarkus endpoint is something a mid-level Java developer can review in minutes. The cognitive overhead of the language itself does not compound the cognitive overhead of auditing AI-generated logic.

Auditing the Methodology

Before treating these numbers as gospel, it is worth examining what the benchmark setup does and does not capture. The N-body, fasta, k-nucleotide, and mandelbrot tasks are single-threaded or lightly parallel compute loops. They stress raw arithmetic throughput and memory access patterns more than they stress I/O latency, database round-trips, or serialization overhead, which are the dominant costs in most real backend services. A web service that spends 90% of its wall-clock time waiting on Postgres will not see a 2-5x speedup by switching from Java to Rust; the bottleneck is simply elsewhere.
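The Postgres example is an instance of Amdahl's law: only the fraction of time spent computing gets faster. A small sketch, assuming the remaining 10% of wall-clock time is pure compute and that the language switch speeds up nothing else:

```python
def overall_speedup(compute_fraction, compute_speedup):
    """Amdahl's law: only the compute fraction of wall-clock time speeds up."""
    waiting_fraction = 1.0 - compute_fraction
    return 1.0 / (waiting_fraction + compute_fraction / compute_speedup)

# 90% of the request waits on the database; 10% is compute.
for speedup in (2.0, 5.0):
    total = overall_speedup(0.10, speedup)
    print(f"{speedup:.0f}x faster compute -> {total:.3f}x faster request")
# 2x faster compute -> 1.053x faster request
# 5x faster compute -> 1.087x faster request
```

Under those assumptions, a 2-5x compute advantage shrinks to a 5-9% improvement in end-to-end request time.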

The workloads also favor ahead-of-time compiled languages by design. JVM warmup effects, which can distort short-run benchmarks significantly, matter here. Without visibility into whether the Java numbers reflect steady-state JIT-compiled throughput or include cold-start overhead, the 2-5x Rust advantage could narrow in long-running service scenarios where the JVM has time to optimize hot paths. Similarly, the PyPy results depend heavily on whether the tracing JIT had adequate warmup time on each workload's inner loop.
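One way to make the warmup question visible is to report the first call separately from steady-state timings. The harness below is a generic sketch, not the methodology the benchmark used; `measure`, `workload`, and the run counts are hypothetical. On a JIT runtime such as PyPy the cold and steady numbers can differ noticeably, while on CPython they will usually be close. For JVM code, a dedicated harness such as JMH handles warmup more rigorously.

```python
import statistics
import time

def measure(fn, warmup_runs=5, measured_runs=20):
    """Time fn, keeping the first (cold) call apart from steady-state samples."""
    start = time.perf_counter()
    fn()
    cold = time.perf_counter() - start

    # Extra untimed calls give a JIT the chance to optimize hot paths.
    for _ in range(warmup_runs):
        fn()

    samples = []
    for _ in range(measured_runs):
        start = time.perf_counter()
        fn()
        samples.append(time.perf_counter() - start)

    return {
        "cold_s": cold,
        "steady_median_s": statistics.median(samples),
        "samples": samples,
    }

def workload():
    # Hypothetical stand-in for a compute-bound inner loop.
    return sum(i * i for i in range(50_000))

result = measure(workload)
print(f"cold: {result['cold_s']:.6f}s, steady median: {result['steady_median_s']:.6f}s")
```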

For production decisions, p95 and p99 latency under concurrent load would tell a more operational story than single-threaded wall time. Still, the directional conclusions are well supported by a broad body of prior benchmarking work: Rust wins on compute, Java is competitive, and Python lags substantially even with PyPy's JIT.
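To illustrate why tail percentiles say more than an average, here is a nearest-rank percentile sketch over invented latency samples. The data is made up for the example, and nearest-rank is only one of several percentile definitions in common use.

```python
import math

def percentile(samples, p):
    """Nearest-rank percentile: smallest sample with at least p% at or below it."""
    if not samples:
        raise ValueError("no samples")
    ordered = sorted(samples)
    rank = math.ceil(p * len(ordered) / 100)
    return ordered[max(rank, 1) - 1]

# Invented request latencies in milliseconds, with two slow outliers.
latencies_ms = [12, 11, 13, 12, 14, 11, 12, 13, 250, 12,
                11, 13, 12, 12, 14, 11, 13, 12, 12, 900]

print("mean:", sum(latencies_ms) / len(latencies_ms))  # mean: 68.5
print("p50: ", percentile(latencies_ms, 50))           # p50:  12
print("p95: ", percentile(latencies_ms, 95))           # p95:  250
print("p99: ", percentile(latencies_ms, 99))           # p99:  900
```

The mean of 68.5 ms describes almost no real request here, while the median and the two tail percentiles show both the typical case and the outliers that users actually notice.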

Rules of Thumb for Hobby and Small-Team Projects

Translating this into practical guidance produces three fairly clean decision points:

- Latency-sensitive hot paths with stable requirements: Rust earns its complexity tax here. If your team has even one or two engineers fluent in the ownership model, a Rust service handling image processing, protocol parsing, or tight numeric loops can meaningfully reduce cloud spend. The review friction is a one-time ramp, not a permanent penalty, for teams that commit to building Rust literacy.

- Rapid iteration with heavy AI generation: Java or Python is the pragmatic choice when your primary constraint is shipping speed, not infrastructure cost. AI tools generate readable Java and idiomatic Python that most reviewers can audit quickly. Unless your compute costs are already alarming, the productivity gain from fast review cycles outweighs the runtime efficiency gap.

- Hybrid architecture: The most defensible option for teams navigating this tradeoff is also the least glamorous: put Rust on the hot-path services where deterministic performance and memory safety justify the investment, and keep higher-level languages on the feature-iteration layers where developer velocity and AI-assist throughput matter more. This is not a new idea, but the BSWEN analysis gives it fresh quantitative grounding in the AI-generation context.
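The tradeoff behind these three rules can be sketched as a toy monthly cost model that adds infrastructure spend to reviewer time. Everything here is an assumption for illustration: the class and function names, the $150 instance price, the $90 hourly reviewer rate, and the reuse of the article's illustrative two-versus-twenty instance and review-minute figures.

```python
from dataclasses import dataclass

@dataclass
class RuntimeOption:
    name: str
    instances: int                # steady-state instance count
    review_minutes_per_pr: float  # average human review time per AI-generated PR

def monthly_cost(option, prs_per_month, instance_cost=150.0, reviewer_hourly_rate=90.0):
    """Infrastructure cost plus reviewer time, both per month (hypothetical rates)."""
    infra = option.instances * instance_cost
    review = prs_per_month * option.review_minutes_per_pr * reviewer_hourly_rate / 60
    return infra + review

fast_runtime = RuntimeOption("fast runtime, slow review", instances=2, review_minutes_per_pr=20)
slow_runtime = RuntimeOption("slow runtime, fast review", instances=20, review_minutes_per_pr=2)

for prs in (40, 160):
    for option in (fast_runtime, slow_runtime):
        print(f"{prs} PRs/month, {option.name}: ${monthly_cost(option, prs):,.0f}")
# 40 PRs/month, fast runtime, slow review: $1,500
# 40 PRs/month, slow runtime, fast review: $3,120
# 160 PRs/month, fast runtime, slow review: $5,100
# 160 PRs/month, slow runtime, fast review: $3,480
```

With these invented rates the two options break even at 100 reviewed PRs per month; below that the faster runtime is cheaper overall, above it the review time dominates. Real teams would need their own numbers for the crossover to mean anything.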

What This Means for the AI-Assisted Development Era

The framing here matters for the Rust community specifically. Rust's adoption narrative has leaned heavily on safety and correctness arguments, and the performance story has always been a supporting point. In an AI-assisted workflow, a third dimension enters the picture: how auditable is the code the model generates? Memory safety that comes bundled with a steep review curve is still a net positive for security, but it is not a free lunch for team velocity.

Teams evaluating Rust for new AI-generation-heavy projects should factor in not only whether their engineers can write Rust, but whether they can review AI-generated Rust quickly enough to maintain their deployment cadence. Building Rust-specific CI guardrails, linter rules, and review checklists for AI-generated code is not optional overhead; it is the mechanism that makes Rust's safety guarantees actually hold when the model is writing the first draft.
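As one possible shape for such a guardrail, the sketch below scans Rust source text for constructs a team might want routed to a Rust-fluent reviewer. The pattern list and the `flag_for_review` helper are hypothetical, and simple regex matching is far cruder than the compiler or a real linter such as Clippy; it is meant only as a review-routing heuristic.

```python
import re

# Hypothetical review triggers; a real team would tune this list.
REVIEW_PATTERNS = {
    "unsafe code": re.compile(r"\bunsafe\b"),
    "shared mutable state": re.compile(r"Arc<\s*Mutex<"),
    "explicit lifetime": re.compile(r"<'[a-z_]+"),
    "unwrap call": re.compile(r"\.unwrap\(\)"),
}

def flag_for_review(rust_source):
    """Return (line number, label) pairs for lines matching a review trigger."""
    findings = []
    for lineno, line in enumerate(rust_source.splitlines(), start=1):
        for label, pattern in REVIEW_PATTERNS.items():
            if pattern.search(line):
                findings.append((lineno, label))
    return findings

# Invented sample of AI-generated Rust, used only to exercise the scanner.
sample = """\
use std::sync::{Arc, Mutex};

fn first<'a>(items: &'a [String]) -> &'a str {
    items.first().unwrap()
}

fn counter() -> Arc<Mutex<u64>> {
    Arc::new(Mutex::new(0))
}
"""

for lineno, label in flag_for_review(sample):
    print(f"line {lineno}: {label}")
# line 3: explicit lifetime
# line 4: unwrap call
# line 7: shared mutable state
```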

The 2-5x performance edge over Java is real. Whether it is worth pursuing depends entirely on where your team's current bottleneck actually lives.
