SigNoz Guide Walks Rust Developers Through OpenTelemetry Instrumentation

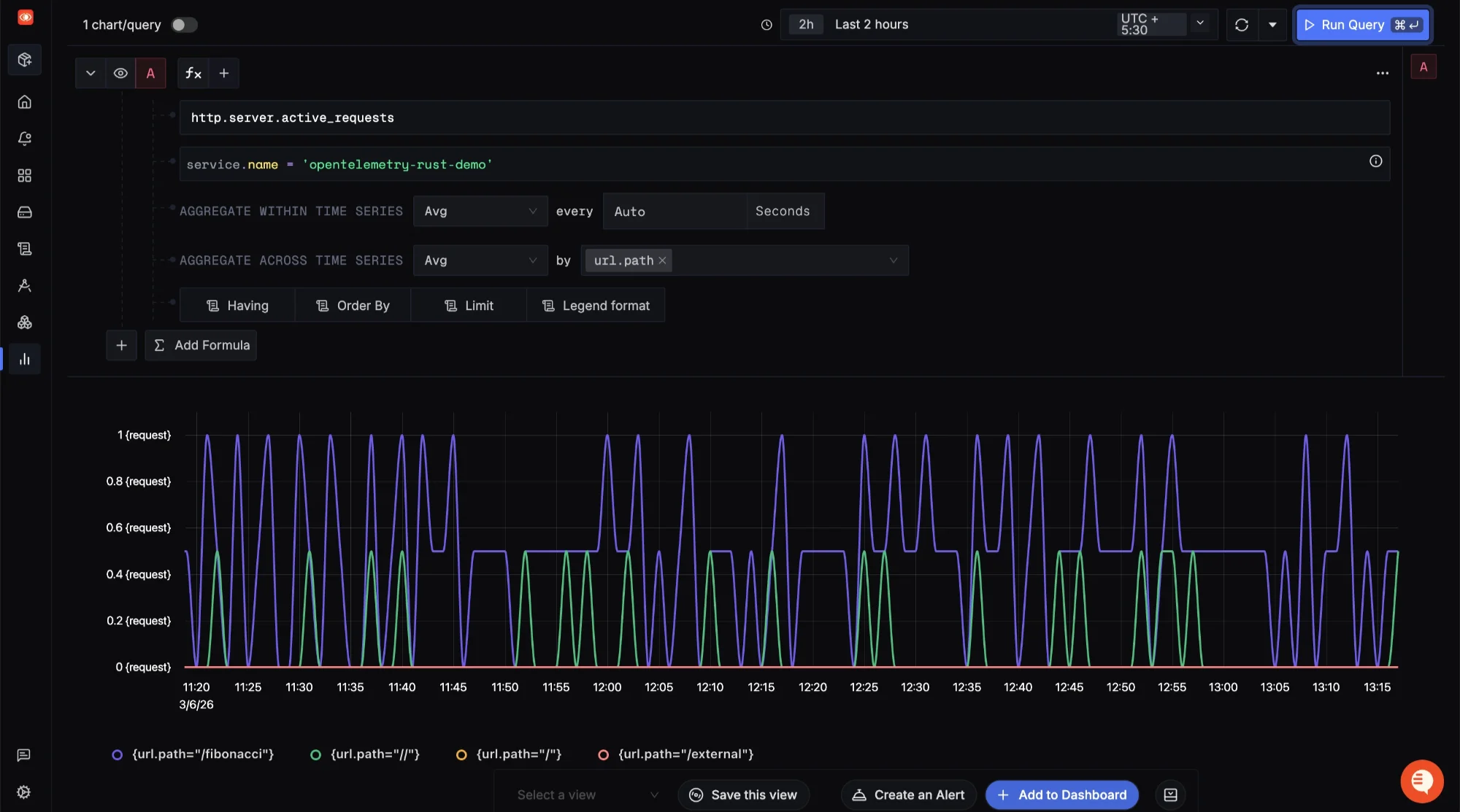

Nawaz Dhandala's SigNoz guide gives Rust developers a full walkthrough of OpenTelemetry instrumentation, covering traces, metrics, and logs with a Tokio-powered demo app.

Observability in Rust has never had a more complete starting point. Nawaz Dhandala published "Implementing OpenTelemetry in Rust Applications" through SigNoz on March 11, 2026, offering a hands-on guide that walks Rust developers through instrumenting all three telemetry signals: traces, metrics, and logs. The write-up pairs in-depth implementation detail with a working demo backend and configures SigNoz, the open-source observability platform, to receive and visualize the telemetry end to end.

Why Rust Needs This Now

Rust has become, as the guide puts it, "the go-to language for reliable, high-throughput systems," and its influence is spreading well beyond systems programming. Teams are increasingly adopting Rust for heavy-duty data processing layers, and the performance ripple effect is tangible even in other language ecosystems. Python developers have seen order-of-magnitude speedups in their tooling after switching to Astral's uv for dependency management and ruff for linting, both of which have helped teams cut CI/CD costs and move faster. As Dhandala notes, "uv is essentially entirely written in Rust." As Rust takes on more production workloads, understanding application behavior across its full lifecycle becomes critical, and that is precisely the gap OpenTelemetry fills.

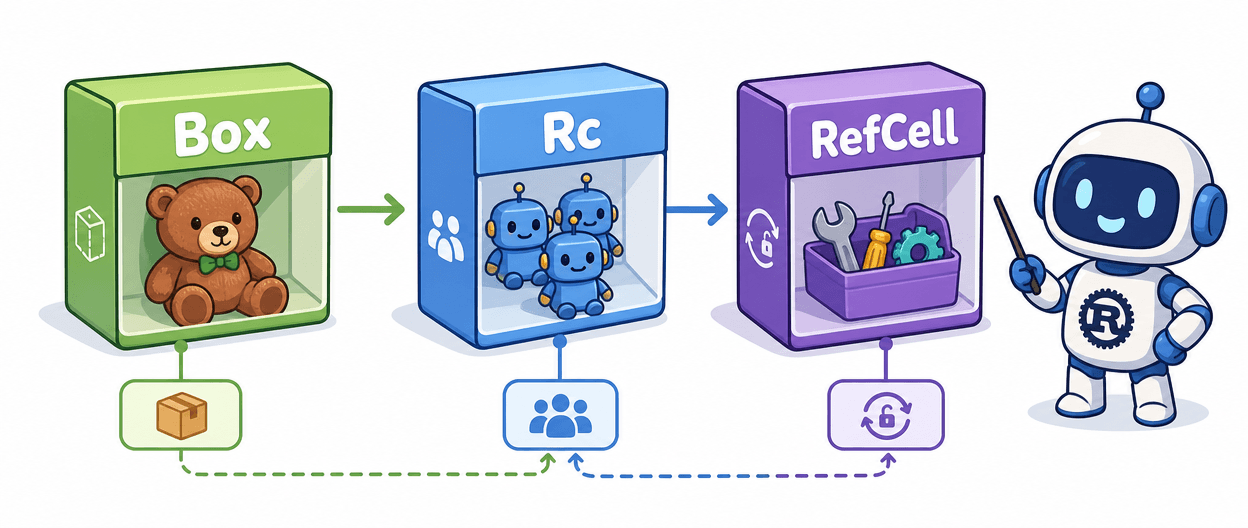

What OpenTelemetry Brings to Rust

OpenTelemetry is vendor-neutral by design, providing a single, standardized way to collect traces, metrics, and logs regardless of the backend receiving them. For Rust specifically, the fit is unusually clean. The SDK offers zero-cost abstractions, meaning disabled spans compile down to no-ops with no runtime penalty. It is async-native, with first-class support for both tokio and async-std. A unified SDK handles all three telemetry signals under one dependency, and the vendor-neutral export layer means you can point your application at Jaeger, Zipkin, or any OTLP-compatible backend without rewriting instrumentation code.
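To make the "zero-cost" idea concrete, here is a standard-library-only sketch of the general pattern: instrumentation is written against a trait, and a disabled tracer supplies empty method bodies that the compiler can inline away once the generic code is monomorphized. The `Tracer` trait, `NoopTracer`, `RecordingTracer`, and `handle_request` are hypothetical names for illustration, not the `opentelemetry` crate's API, and whether a particular call disappears entirely is ultimately up to the optimizer.

```rust
/// Minimal tracer abstraction (hypothetical; not the opentelemetry crate's trait).
pub trait Tracer {
    // Default bodies do nothing, so a disabled tracer inherits empty methods.
    fn start_span(&mut self, _name: &'static str) {}
    fn end_span(&mut self) {}
}

/// Disabled tracer: every call resolves to an empty, trivially inlinable function.
pub struct NoopTracer;
impl Tracer for NoopTracer {}

/// Enabled tracer that remembers which spans were started and finished.
#[derive(Default)]
pub struct RecordingTracer {
    pub finished: Vec<&'static str>,
    open: Vec<&'static str>,
}

impl Tracer for RecordingTracer {
    fn start_span(&mut self, name: &'static str) {
        self.open.push(name);
    }

    fn end_span(&mut self) {
        // Close the most recently opened span.
        if let Some(name) = self.open.pop() {
            self.finished.push(name);
        }
    }
}

/// Business logic is generic over the tracer, so each tracer type gets its own
/// compiled copy; with `NoopTracer` the span calls are empty functions.
pub fn handle_request<T: Tracer>(tracer: &mut T, order_id: u32) -> u32 {
    tracer.start_span("handle_request");
    let total = order_id * 2; // stand-in for real work
    tracer.end_span();
    total
}
```

In an optimized build, `handle_request(&mut NoopTracer, 21)` should typically reduce to the bare arithmetic, while the same call with a `RecordingTracer` also captures the span name.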

That last point is something Dhandala emphasizes directly in a Reddit post sharing the guide: "SigNoz is just a tool that you have the choice to use. You can switch out providers by changing a few environment variables." The instrumentation itself stays the same; only the destination changes.
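Switching providers through environment variables works because the OpenTelemetry specification standardizes names such as `OTEL_EXPORTER_OTLP_ENDPOINT` and `OTEL_SERVICE_NAME`, and the official exporter crates generally honor them on their own. The sketch below shows, in plain standard-library Rust, how an application might resolve those settings itself; the `ExporterConfig` struct and both functions are hypothetical, and the fallback `http://localhost:4318` is the conventional OTLP/HTTP port.

```rust
/// Hypothetical exporter settings resolved at startup.
#[derive(Debug, PartialEq)]
pub struct ExporterConfig {
    pub endpoint: String,
    pub service_name: String,
}

/// Resolve settings from environment-style lookups. Taking a closure instead of
/// calling `std::env::var` directly keeps the function easy to test.
pub fn resolve_config<F>(lookup: F, default_service: &str) -> ExporterConfig
where
    F: Fn(&str) -> Option<String>,
{
    // Empty values are treated the same as unset ones.
    let endpoint = lookup("OTEL_EXPORTER_OTLP_ENDPOINT")
        .filter(|v| !v.trim().is_empty())
        .unwrap_or_else(|| "http://localhost:4318".to_string());
    let service_name = lookup("OTEL_SERVICE_NAME")
        .filter(|v| !v.trim().is_empty())
        .unwrap_or_else(|| default_service.to_string());
    ExporterConfig { endpoint, service_name }
}

/// Convenience wrapper that reads the real process environment.
pub fn resolve_from_env(default_service: &str) -> ExporterConfig {
    resolve_config(|key| std::env::var(key).ok(), default_service)
}
```

With this shape, pointing the same binary at SigNoz, Jaeger, or another OTLP backend is a matter of exporting a different `OTEL_EXPORTER_OTLP_ENDPOINT` before calling `resolve_from_env("api-service")`.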

Inside the Demo Application

The guide's demo is a Tokio-powered Rust web service built with axum, and its structure keeps the instrumentation architecture easy to follow. The application is organized into three modules, `mod logging`, `mod metrics`, and `mod telemetry`, each handling a distinct slice of the observability stack. The main function initializes telemetry before any business logic runs, a deliberate design choice that ensures no early application events are missed.

The initialization pattern in `src/main.rs` looks like this:

```rust
let _tracer = telemetry::init_tracing("api-service")?;
let metrics = metrics::init_metrics("api-service")?;

info!("Starting API service");

let state = Arc::new(AppState { metrics });
```

The application state struct holds an `AppMetrics` instance, and the router is constructed with instrumented handlers. Tracing macros (`info!`, `warn!`, and the `instrument` attribute macro) are imported directly from the `tracing` crate. As Dhandala describes it: "The entire application runs on Tokio, which is often regarded as the one true async runtime, and powers much of Rust's networking ecosystem. The web server uses Tokio for async execution, while the telemetry and logging layer is implemented via `tracing`, another project within the Tokio ecosystem."
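The `_tracer` binding hints at a common Rust pattern that covers both "initialize early" and "flush on shutdown": the init function returns a value that lives for the whole of `main`, and dropping it at the end flushes buffered telemetry. The sketch below illustrates that pattern with the standard library only; `TelemetryGuard`, `init_telemetry`, and the in-memory `Sink` are hypothetical stand-ins, not the guide's `telemetry` module or the SDK's provider types, whose shutdown methods vary between crate versions.

```rust
use std::sync::{Arc, Mutex};

/// Shared destination standing in for an OTLP exporter (hypothetical).
pub type Sink = Arc<Mutex<Vec<String>>>;

/// Buffers events in memory and flushes them to the sink when dropped.
pub struct TelemetryGuard {
    service: String,
    buffer: Mutex<Vec<String>>,
    sink: Sink,
}

impl TelemetryGuard {
    /// Record an event; it stays buffered until `flush` runs.
    pub fn record(&self, event: &str) {
        self.buffer
            .lock()
            .unwrap()
            .push(format!("{}: {}", self.service, event));
    }

    /// Move everything buffered so far into the sink.
    pub fn flush(&self) {
        let mut pending = self.buffer.lock().unwrap();
        self.sink.lock().unwrap().extend(pending.drain(..));
    }
}

impl Drop for TelemetryGuard {
    // Runs when the guard goes out of scope (e.g. when `main` returns),
    // so the final events are exported instead of being lost.
    fn drop(&mut self) {
        self.flush();
    }
}

/// Call this first in `main`, before any business logic.
pub fn init_telemetry(service: &str, sink: Sink) -> TelemetryGuard {
    TelemetryGuard {
        service: service.to_string(),
        buffer: Mutex::new(Vec::new()),
        sink,
    }
}
```

The binding name matters here: a named binding such as `_tracer` or `_guard` lives until the end of the enclosing scope, whereas `let _ = init_telemetry(...)` drops the value immediately, which for a guard like this would flush at startup rather than at shutdown.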

Beyond the service itself, the demo ships with two companion artifacts: a load generator written as a bash script, and a Python script that emulates a downstream microservice. The Python emulator calls the Rust service, which in turn calls an external API, creating a realistic cross-service trace chain that shows what distributed operations look like when visualized in an OpenTelemetry backend. This makes trace context propagation and log correlation demonstrable rather than theoretical.
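How a trace view turns the Python-to-Rust-to-external-API chain into one picture can be sketched with plain data: every span records its parent's span ID, and the backend nests children under their parents. The `SpanRecord` type, `render_tree`, and the service and span names used with it are hypothetical; real OTLP spans also carry trace IDs, timestamps, attributes, and status.

```rust
use std::collections::HashMap;

/// Minimal span record for spans that already share one trace ID (hypothetical).
#[derive(Debug)]
pub struct SpanRecord {
    pub span_id: u64,
    pub parent_id: Option<u64>,
    pub service: &'static str,
    pub name: &'static str,
}

/// Render the spans as an indented tree, the way a trace view nests
/// child spans under their parents.
pub fn render_tree(spans: &[SpanRecord]) -> Vec<String> {
    // Group spans by parent ID, preserving input order within each group.
    let mut children: HashMap<Option<u64>, Vec<&SpanRecord>> = HashMap::new();
    for span in spans {
        children.entry(span.parent_id).or_default().push(span);
    }

    // Depth-first walk starting from root spans (no parent).
    let mut stack: Vec<(&SpanRecord, usize)> = Vec::new();
    if let Some(roots) = children.get(&None::<u64>) {
        for root in roots.iter().rev() {
            stack.push((*root, 0));
        }
    }

    let mut lines = Vec::new();
    while let Some((span, depth)) = stack.pop() {
        lines.push(format!("{}{} [{}]", "  ".repeat(depth), span.name, span.service));
        if let Some(kids) = children.get(&Some(span.span_id)) {
            // Push in reverse so the first child is visited first.
            for kid in kids.iter().rev() {
                stack.push((*kid, depth + 1));
            }
        }
    }
    lines
}
```

Feeding it a client span, a Rust handler span, and an outbound HTTP span yields three nested lines, one level per service hop.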

Setting Up the Dependencies

For teams connecting to a Dynatrace backend instead of SigNoz, the required Cargo.toml entries give a clear picture of how modular the OpenTelemetry Rust crate ecosystem is:

```toml
[dependencies]
opentelemetry = { version = "~0", features = ["trace", "metrics"] }
opentelemetry_sdk = { version = "~0", features = ["rt-tokio", "metrics", "logs", "spec_unstable_metrics_views"] }
opentelemetry-otlp = { version = "~0", features = ["http-proto", "http-json", "logs", "reqwest-blocking-client", "reqwest-rustls"] }
opentelemetry-http = { version = "~0" }
opentelemetry-appender-log = { version = "~0" }
opentelemetry-semantic-conventions = { version = "~0" }
```

The corresponding `use` declarations bring in the SDK providers for traces, logs, and metrics alongside the OTLP exporters and the `OpenTelemetryLogBridge` from `opentelemetry-appender-log`. Header propagation uses `HeaderExtractor` and `HeaderInjector` from `opentelemetry-http`, which let a propagator read and write the W3C Trace Context headers needed for cross-service correlation.
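The header those types carry is the W3C `traceparent` field, formatted as `version-traceid-parentid-flags`. The sketch below parses a version `00` header and builds the header for an outgoing call using only the standard library, to show what cross-service correlation relies on; in a real service the SDK's W3C propagator does this work through `HeaderExtractor` and `HeaderInjector`. The `TraceParent` type and both functions are hypothetical, and the parser deliberately skips the spec's rules for future versions and the companion `tracestate` header.

```rust
/// Parsed W3C `traceparent` header (version 00 only, for brevity).
#[derive(Debug, PartialEq)]
pub struct TraceParent {
    pub trace_id: String,
    pub parent_id: String,
    pub sampled: bool,
}

/// True if `s` is exactly `len` lowercase hex characters, as the spec requires.
fn is_lower_hex(s: &str, len: usize) -> bool {
    s.len() == len && s.bytes().all(|b| b.is_ascii_digit() || (b'a'..=b'f').contains(&b))
}

/// Parse a header such as "00-4bf92f3577b34da6a3ce929d0e0e4736-00f067aa0ba902b7-01".
pub fn parse_traceparent(header: &str) -> Option<TraceParent> {
    let parts: Vec<&str> = header.trim().split('-').collect();
    if parts.len() != 4 || parts[0] != "00" {
        return None;
    }
    let (trace_id, parent_id, flags) = (parts[1], parts[2], parts[3]);
    if !is_lower_hex(trace_id, 32) || !is_lower_hex(parent_id, 16) || !is_lower_hex(flags, 2) {
        return None;
    }
    // All-zero trace and parent IDs are invalid.
    if trace_id.bytes().all(|b| b == b'0') || parent_id.bytes().all(|b| b == b'0') {
        return None;
    }
    let flags = u8::from_str_radix(flags, 16).ok()?;
    Some(TraceParent {
        trace_id: trace_id.to_string(),
        parent_id: parent_id.to_string(),
        // Bit 0 of the flags byte is the "sampled" flag.
        sampled: (flags & 0x01) == 0x01,
    })
}

/// Header for an outgoing request: same trace ID, this service's span as the parent.
pub fn child_traceparent(incoming: &TraceParent, own_span_id: &str) -> String {
    let flags = if incoming.sampled { "01" } else { "00" };
    format!("00-{}-{}-{}", incoming.trace_id, own_span_id, flags)
}
```

Because the trace ID is copied unchanged into every outgoing header, the backend can stitch the spans from each service into one trace.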

For Dynatrace specifically, the backend requires version 1.222 or later, and W3C Trace Context must be explicitly enabled. That setting lives under Settings > Preferences > OneAgent features, where you toggle on "Send W3C Trace Context HTTP headers." The OTLP ingestion endpoint URL must end in `/api/v2/otlp`, and access requires a token generated through the Dynatrace Access Tokens interface. Notably, Dynatrace does not support automatic instrumentation for Rust; traces, metrics, and logs are all supported, but instrumentation must be added manually.
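The endpoint rule translates into request URLs mechanically: under the OTLP exporter specification, an OTLP/HTTP exporter configured through the generic `OTEL_EXPORTER_OTLP_ENDPOINT` base sends each signal to `<base>/v1/traces`, `<base>/v1/metrics`, or `<base>/v1/logs`. A small standard-library check like the one below could catch a misconfigured Dynatrace endpoint at startup; `dynatrace_signal_urls` and the environment ID used with it are hypothetical.

```rust
/// Validate a Dynatrace OTLP base endpoint and derive per-signal OTLP/HTTP URLs
/// in the order [traces, metrics, logs].
pub fn dynatrace_signal_urls(endpoint: &str) -> Result<[String; 3], String> {
    // Tolerate surrounding whitespace and a trailing slash.
    let base = endpoint.trim().trim_end_matches('/');
    if !base.ends_with("/api/v2/otlp") {
        return Err(format!("Dynatrace OTLP endpoint must end in /api/v2/otlp, got {base}"));
    }
    Ok(["traces", "metrics", "logs"].map(|signal| format!("{base}/v1/{signal}")))
}
```

Failing fast on a bad suffix is cheaper than discovering hours later that no telemetry reached the backend.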

Six Best Practices Worth Following

Whether you're targeting SigNoz, Dynatrace, or another OTLP-compatible backend, six implementation principles apply universally:

1. Initialize early: set up telemetry before any business logic runs, so no events are lost during startup

2. Use semantic conventions: follow OpenTelemetry naming standards to keep telemetry consistent and queryable across services

3. Instrument at boundaries: concentrate instrumentation on HTTP handlers, database calls, and external service interactions where latency and failure are most meaningful

4. Avoid high-cardinality attributes: never use user IDs or request IDs as metric labels, as this causes cardinality explosions in your metrics backend

5. Graceful shutdown: always flush pending telemetry before your process exits, or the last events recorded before a shutdown or restart may never be exported

6. Sample appropriately: use parent-based sampling in production environments to balance observability coverage against data volume
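Parent-based sampling means a span inherits its parent's sampled flag, and only root spans consult a fallback sampler, commonly a trace-ID ratio. In the Rust SDK this is typically configured as `Sampler::ParentBased` wrapping `Sampler::TraceIdRatioBased`; the standard-library sketch below mirrors only the decision logic. The `Decision` enum and both functions are hypothetical, the real sampler also distinguishes remote from local parents, and the ratio check here is a simplified variant rather than the SDK's exact algorithm.

```rust
/// Simplified sampling outcome (hypothetical).
#[derive(Debug, Clone, Copy, PartialEq)]
pub enum Decision {
    RecordAndSample,
    Drop,
}

/// Honor the parent's decision when there is a parent; otherwise fall back
/// to a trace-ID ratio sampler for root spans.
pub fn parent_based(parent_sampled: Option<bool>, trace_id: u128, ratio: f64) -> Decision {
    match parent_sampled {
        Some(true) => Decision::RecordAndSample,
        Some(false) => Decision::Drop,
        None => ratio_based(trace_id, ratio),
    }
}

/// Deterministic ratio sampler: the same trace ID always gets the same decision.
pub fn ratio_based(trace_id: u128, ratio: f64) -> Decision {
    if ratio >= 1.0 {
        return Decision::RecordAndSample;
    }
    // Treat the low 64 bits of the (random) trace ID as a uniform value.
    let value = trace_id as u64;
    // Sample values below ratio * u64::MAX; negative or NaN ratios sample nothing.
    let threshold = (ratio.max(0.0) * u64::MAX as f64) as u64;
    if value < threshold {
        Decision::RecordAndSample
    } else {
        Decision::Drop
    }
}
```

Because downstream services follow the parent's flag, a trace is either kept or dropped as a whole, which is what keeps sampled traces complete across services.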
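The cardinality rule is easy to see by counting time series: every unique combination of attribute values on a metric becomes its own series in the backend. This sketch builds request-counter attributes from the stable HTTP semantic-convention keys `http.request.method` and `http.route`, then shows what happens when a `user.id` attribute is added. The `Request` type, both functions, and the sample data are hypothetical.

```rust
use std::collections::BTreeSet;

/// One observed request (hypothetical sample data).
pub struct Request<'a> {
    pub method: &'a str,
    pub route: &'a str,
    pub user_id: &'a str,
}

/// Attributes a request counter would record, keyed by semantic-convention
/// names; `user.id` is the high-cardinality mistake being illustrated.
pub fn attributes<'a>(req: &Request<'a>, include_user_id: bool) -> Vec<(&'static str, &'a str)> {
    let mut attrs = vec![("http.request.method", req.method), ("http.route", req.route)];
    if include_user_id {
        attrs.push(("user.id", req.user_id));
    }
    attrs
}

/// Each distinct attribute set becomes a separate time series.
pub fn series_count(requests: &[Request<'_>], include_user_id: bool) -> usize {
    requests
        .iter()
        .map(|r| attributes(r, include_user_id))
        .collect::<BTreeSet<_>>()
        .len()
}
```

With 1,000 requests from 1,000 different users to the same templated route, the counter stays at one series; adding the user ID produces one series per user.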

The Developer Experience

The community response to the guide on the Rust subreddit reflects genuine enthusiasm. Dhandala, posting under the username silksong_when, described the experience of building the demo as among the most enjoyable programming sessions in recent memory: "Personally, this was the most fun I've had programming in quite some time! It reminded me of the fun I've had when coding and blogging about the toy projects I built, trying to optimize everything (attempting to avoid clones, heap allocations, etc.), a couple years ago. Coming back, it took me some time to familiarize myself with things again, especially lifetimes, they are a bit scary. But it was refreshing to get such detailed explanations from the compiler when I made mistakes, and it felt satisfying when things worked."

That candor about lifetimes and the learning curve resonates with anyone who has returned to Rust after time away. The guide is explicit about the reasoning behind crate choices, connects implementation decisions to real-world scenarios, and explains why instrumenting an application matters in the first place, not just how to do it mechanically.

The result is a guide that works as a complete implementation reference. With vendor-neutral instrumentation baked in from the start, any team that follows it isn't locked into SigNoz or any other backend. The same application, traces, metrics, and logs can flow to whichever backend your stack demands.
