VectorWare Brings Rust Standard Thread Support to GPUs for the First Time

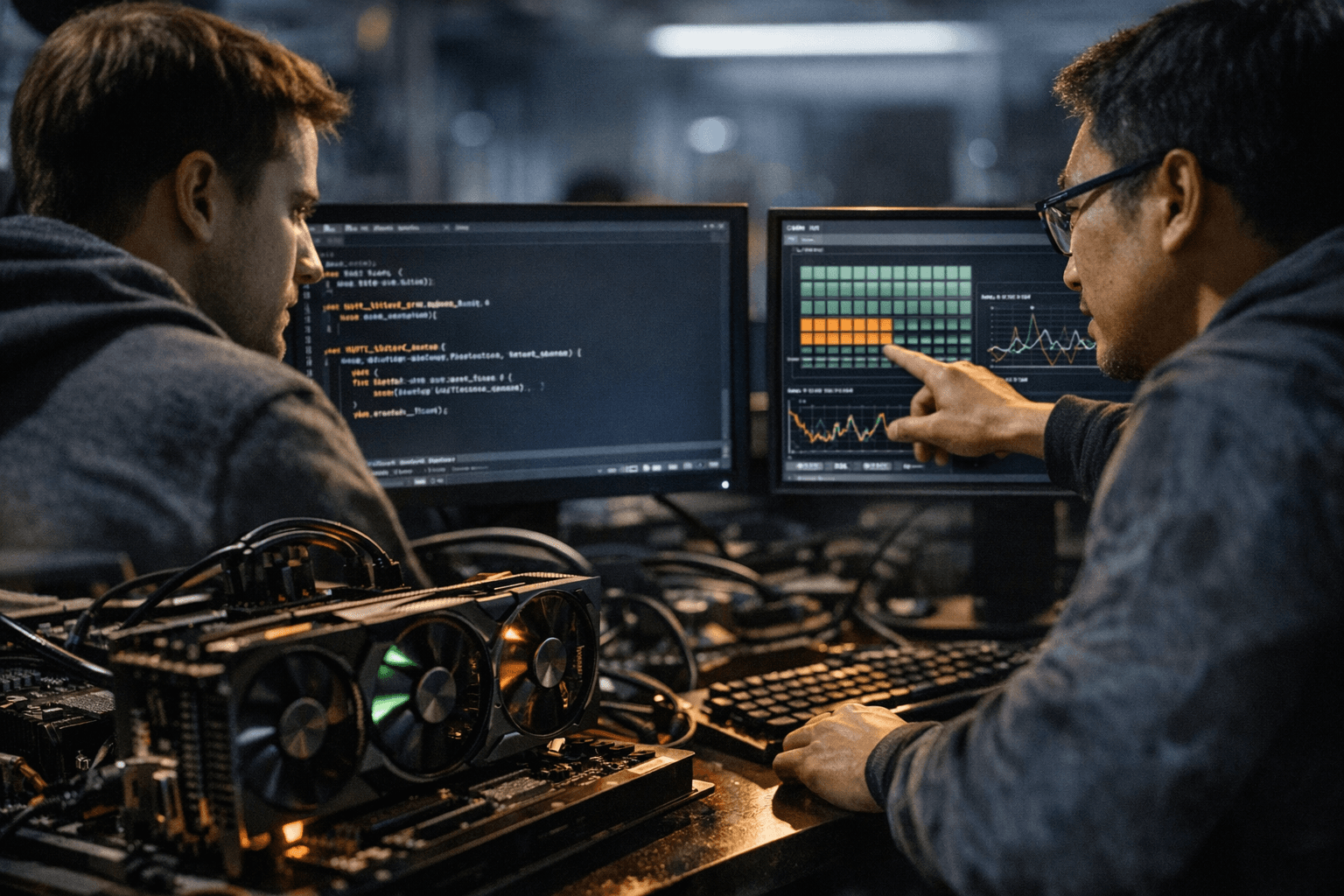

Rust's `std::thread` now runs on a GPU, borrow checker intact, for the first time. VectorWare compiled a program that calls `thread::spawn` into a GPU kernel in a working demo.

Take this Rust snippet: two `thread::spawn` closures, one summing integers from 0 to 1000 and another computing a product over 1 to 20, joined with `.join().unwrap()` and printed with `println!`. VectorWare compiled it as a GPU kernel, launched it on the device, and each thread ran on a separate warp with results printed directly from GPU memory. No shader language, no `#[spirv]` attribute, no CUDA bindings. Idiomatic `std` Rust, running on a GPU.
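For readers who want to see the shape of such a program, here is a minimal reconstruction based only on the description above. It is not VectorWare's actual listing: the function names `spawn_sum` and `spawn_product` are hypothetical, the ranges are assumed to be inclusive, and the code as written targets an ordinary CPU build of `std`.

```rust
use std::thread;

// Hypothetical helper: sums the integers 0 through 1000 on its own thread.
// The inclusive upper bound is an assumption; the article only says "0 to 1000".
fn spawn_sum() -> thread::JoinHandle<u64> {
    thread::spawn(|| (0..=1000u64).sum::<u64>())
}

// Hypothetical helper: multiplies the integers 1 through 20 on its own thread.
// 20! still fits in a u64, so no overflow occurs.
fn spawn_product() -> thread::JoinHandle<u64> {
    thread::spawn(|| (1..=20u64).product::<u64>())
}

fn main() {
    // join() blocks until the thread finishes; unwrap() propagates a panic.
    let sum = spawn_sum().join().unwrap();
    let product = spawn_product().join().unwrap();
    println!("sum = {sum}, product = {product}");
}
```

Nothing in this sketch is GPU-specific, which is the point VectorWare is making: the same source is what it says it compiled as a kernel.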

"We are excited to announce that we can successfully use Rust's `std::thread` on the GPU. This has never been done before," VectorWare wrote in its announcement.

The achievement matters for a specific reason Rustaceans will immediately grasp: the borrow checker and lifetime semantics are untouched. "Most importantly, with this approach Rust's borrow checker and lifetimes just work," the company stated. Every prior path to Rust on GPU hardware required leaving the `std` programming model behind, accepting different abstractions for shader pipelines or GPU kernels. VectorWare's position is the inverse: "We are not introducing a new GPU programming model to Rust. We are mapping Rust's programming model onto the GPU. At VectorWare, we are making GPUs behave like a normal Rust platform."
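To make "lifetimes just work" concrete, the sketch below shows what the borrow checker enforces for threads on a regular CPU target. It uses `std::thread::scope`, which the announcement does not mention, so whether this particular API runs on VectorWare's GPU target is an assumption; `sum_halves` is a hypothetical name chosen for illustration.

```rust
use std::thread;

// Hypothetical example: two scoped threads borrow disjoint halves of a slice.
// The compiler proves both borrows end before `data` can be dropped, because
// thread::scope joins every spawned thread before it returns.
fn sum_halves(data: &[u64]) -> u64 {
    let (left, right) = data.split_at(data.len() / 2);
    thread::scope(|s| {
        let a = s.spawn(|| left.iter().sum::<u64>());
        let b = s.spawn(|| right.iter().sum::<u64>());
        a.join().unwrap() + b.join().unwrap()
    })
}

fn main() {
    let data = vec![1, 2, 3, 4, 5];
    println!("total = {}", sum_halves(&data));
}
```

If either closure tried to mutate `data` while the other was reading it, the program would be rejected at compile time. That guarantee is what the company says carries over unchanged.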

That framing carries ecosystem-wide implications. With both `std::thread` and `async`/`await` confirmed working on GPU, VectorWare argues that crates already written for threaded parallelism or async I/O can now target GPU hardware "with minimal or no changes."

The limits of what has been disclosed are worth noting carefully. VectorWare's implementation post references "three key observations" that make `std::thread` on the GPU possible but does not reveal what those observations are. No hardware targets, no compiler toolchain details, and no performance numbers appeared in the announcement. The thread-to-warp mapping in the demo is a meaningful architectural constraint: GPU thread scheduling is fundamentally different from CPU thread scheduling, and what happens at scale, across thousands of warps with real memory pressure, is entirely undisclosed.

Atul Khare, an engineer at Microsoft, immediately asked about trait semantics: "Do you have any insights into whether the Send and / or Sync trait attributes are useful for such workloads (vis-a-vis say C++) in improving ergonomics?" It is a precise question with no public answer yet. Sanjoy Das, an NVIDIA figure with 8,000 LinkedIn followers, was among those who engaged with the post, a notable signal for anyone watching the GPU toolchain space.
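For context on what Khare is asking about, here is a small sketch of how `Send` and `Sync` shape threaded code in standard Rust on a CPU. It says nothing about how VectorWare handles these traits on the GPU, which remains undisclosed; `count_with_threads` is a hypothetical name.

```rust
use std::sync::atomic::{AtomicU64, Ordering};
use std::sync::Arc;
use std::thread;

// Hypothetical example: several threads increment one shared counter.
// thread::spawn requires its closure to be Send. Arc<AtomicU64> qualifies
// because AtomicU64 is Sync; swapping Arc for Rc would fail to compile,
// since Rc is not Send.
fn count_with_threads(workers: u64, increments: u64) -> u64 {
    let counter = Arc::new(AtomicU64::new(0));
    let handles: Vec<_> = (0..workers)
        .map(|_| {
            let counter = Arc::clone(&counter);
            thread::spawn(move || {
                for _ in 0..increments {
                    counter.fetch_add(1, Ordering::Relaxed);
                }
            })
        })
        .collect();
    // Joining each thread makes all of its increments visible to this thread.
    for handle in handles {
        handle.join().unwrap();
    }
    counter.load(Ordering::Relaxed)
}

fn main() {
    println!("count = {}", count_with_threads(4, 1000));
}
```

In C++ the equivalent mistake, sharing a non-thread-safe reference count across threads, compiles and fails at runtime, which is the ergonomic gap the question points at.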

Syed Muhammad Aqdas Rizvi of Keysight Technologies pushed the speculation further, wondering whether the compiler could eventually route workloads automatically: "A new kind of abstraction on the horizon where the compiler decides if it is better to optimize the workload for CPU or for the GPU and then generate the instructions/kernel accordingly."

VectorWare describes itself as "building the first GPU-native software company," with its organization page currently at 1,002 LinkedIn followers. Full implementation details, including those three enabling observations, toolchain specifics, and availability, are expected in forthcoming posts.
