Accessibility Becomes Essential as AI Agents Browse, Click, and Act

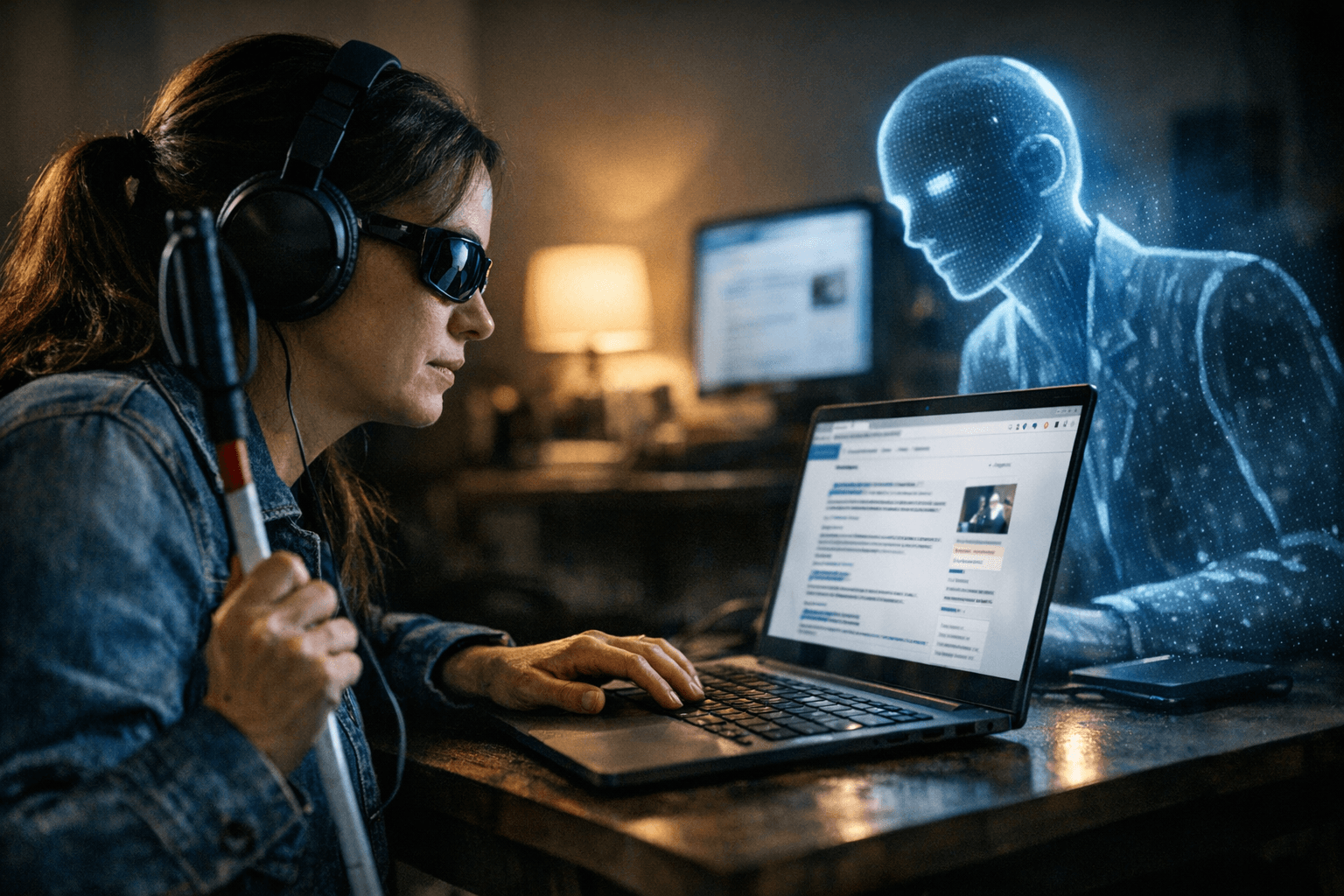

AI agents are now browsing like users, which makes accessibility a discovery and action problem, not a side project.

The website now has two audiences

AI agents are no longer just reading the web; they are browsing it, clicking through it, and filling out forms on their own. That changes the job for agencies immediately: a site is judged not only by how it ranks or how well people can use it, but also by whether autonomous systems can interpret it, move through it, and act on it.

Search Engine Journal’s framing makes the shift hard to ignore. The publication’s piece sits inside a five-part series on optimizing for the agentic web, which signals that this is being treated as a structural change, not a one-off tactic. For agencies, the practical message is clear: accessibility, technical SEO, and AI visibility are converging into one delivery model.

Why accessibility is becoming the agent interface

Accessibility matters so much because AI agents do not experience a site the way a human does. They lean heavily on the accessibility tree and other structural signals that tell machines what a page contains and how it is organized. W3C guidance explains that the accessibility tree is, roughly speaking, a subset of the DOM tree that assistive technologies such as screen readers consume, while semantic elements make a page's structure available to the user agent.

That means the same choices that help a screen-reader user understand a page also help an AI agent make sense of it. Correctly marked-up headings and labels, clear landmark structure, and semantic page elements are no longer just accessibility best practices in the narrow sense. They are also part of whether an autonomous system can browse efficiently, understand context, and decide what to do next.
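To make the contrast concrete, here is a minimal sketch of how much structure a machine can recover from semantic markup compared with generic `div` elements. It is not how a browser builds its accessibility tree; it only collects landmark and heading elements with Python's standard `html.parser`. The names `OutlineParser` and `semantic_outline`, and both sample snippets, are assumptions made for illustration.

```python
from html.parser import HTMLParser

# Illustrative subset of elements that carry structural meaning.
LANDMARKS = {"header", "nav", "main", "aside", "footer"}
HEADINGS = {"h1", "h2", "h3", "h4", "h5", "h6"}


class OutlineParser(HTMLParser):
    """Collects (kind, tag, text) entries for landmarks and headings."""

    def __init__(self):
        super().__init__()
        self.outline = []
        self._heading = None  # tag name of the heading currently open, if any
        self._text = []       # text fragments seen inside that heading

    def handle_starttag(self, tag, attrs):
        if tag in LANDMARKS:
            self.outline.append(("landmark", tag, ""))
        elif tag in HEADINGS:
            self._heading = tag
            self._text = []

    def handle_data(self, data):
        if self._heading:
            self._text.append(data)

    def handle_endtag(self, tag):
        if tag == self._heading:
            text = " ".join("".join(self._text).split())
            self.outline.append(("heading", tag, text))
            self._heading = None


def semantic_outline(html):
    """Return the landmarks and headings found in an HTML string, in order."""
    parser = OutlineParser()
    parser.feed(html)
    parser.close()
    return parser.outline


# Hypothetical page fragments: same visible words, different markup.
SEMANTIC = "<main><h1>Pricing</h1><nav><a href='/plans'>Plans</a></nav><h2>Team plan</h2></main>"
DIV_SOUP = "<div class='main'><div class='title'>Pricing</div><div class='menu'>Plans</div></div>"

print(semantic_outline(SEMANTIC))  # four entries: main, h1, nav, h2
print(semantic_outline(DIV_SOUP))  # empty list: nothing structural to report
```

Both fragments could be styled to look identical on screen, but only the first one gives a screen reader or an agent an outline to work from.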

OpenAI’s own public descriptions sharpen the point. Operator uses its own browser and can interact with webpages by typing, clicking, and scrolling. OpenAI later said the o3-based Operator can interact with webpages much like a human would. In both cases, the agent is not simply indexing content; it is navigating interfaces.

What to build for machines that browse

The build brief now starts with making the page legible to a machine. Semantic markup matters because it gives structure to content without forcing an agent to infer meaning from visual layout alone. Clear hierarchy matters because headings, labels, and page sections help both human users and automated systems understand what belongs where.
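One simple way to test for clear hierarchy is to look for skipped heading levels, such as an `h2` followed directly by an `h4`. The sketch below is a rough check under stated assumptions: it scans raw HTML with a regular expression, so it does not account for comments, scripts, or headings injected at runtime, and the function name `heading_level_issues` is hypothetical.

```python
import re


def heading_level_issues(html):
    """Return a message for every place where a heading level is skipped."""
    # Heading levels in document order, e.g. [1, 2, 4] for h1, h2, h4.
    levels = [int(n) for n in re.findall(r"<h([1-6])\b", html, flags=re.IGNORECASE)]
    issues = []
    for prev, cur in zip(levels, levels[1:]):
        # Going deeper by more than one level leaves a gap in the outline.
        if cur > prev + 1:
            issues.append(f"h{prev} is followed by h{cur}")
    return issues


# Hypothetical page fragment with a gap between h2 and h4.
page = "<h1>Services</h1><h2>SEO</h2><h4>Technical audits</h4>"
print(heading_level_issues(page))  # ['h2 is followed by h4']
```

Moving back up the hierarchy, for example from `h3` to `h2`, is not flagged, since that is how a new section normally begins.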

That has direct implications for how agencies handle technical work. It is no longer enough to polish copy and fix traditional SEO issues around crawling and indexing. The site architecture has to be readable as a sequence of meaningful actions, with content blocks, forms, and navigation exposed in a way that an autonomous browser can follow.

A useful way to think about this is that every page now needs to answer two questions at once: Can a person use it easily, and can an agent use it reliably? If the answer to either is no, discoverability and actionability both suffer.

Agent-friendly UX is now part of the SEO brief

AI agents can scroll, click, fill forms, and research across tabs. That means user experience is becoming operational infrastructure for machine workflows, not just a design concern. If a checkout flow, lead form, content hub, or support path is visually polished but structurally vague, it may be easy for a human and still brittle for an agent.

That is where agencies can get specific about recommendations:

- Use clear, semantic headings so sections are obvious to both browsers and agents.

- Label forms precisely so a system can identify fields and submit actions with confidence.

- Keep navigation predictable so agents can move through the site without guessing.

- Preserve content hierarchy so important information is not hidden behind decorative layout choices.

- Treat accessibility checks as AI-readiness checks, because the same structural fixes improve both.
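As an illustration of the form-labeling recommendation, the following sketch flags form controls that have no programmatic label. It is a simplified check, not a full accessible-name computation: it only recognizes `<label for>`, a wrapping `<label>`, `aria-label`, and `aria-labelledby`. The class `LabelAudit`, the function `unlabeled_controls`, and the sample form are assumptions for illustration.

```python
from html.parser import HTMLParser

# Input types that this simplified check does not expect a text label for.
SKIPPED_TYPES = {"hidden", "submit", "button", "reset", "image"}


class LabelAudit(HTMLParser):
    """Records form controls and the labels that point at them."""

    def __init__(self):
        super().__init__()
        self.label_targets = set()  # ids referenced by <label for="...">
        self.controls = []          # (name, id, labeled_directly) per control
        self._label_depth = 0       # greater than 0 while inside a <label>

    def handle_starttag(self, tag, attrs):
        attrs = dict(attrs)
        if tag == "label":
            self._label_depth += 1
            if attrs.get("for"):
                self.label_targets.add(attrs["for"])
        elif tag in {"input", "select", "textarea"}:
            input_type = (attrs.get("type") or "text").lower()
            if tag == "input" and input_type in SKIPPED_TYPES:
                return
            has_aria = bool(attrs.get("aria-label") or attrs.get("aria-labelledby"))
            labeled_directly = has_aria or self._label_depth > 0
            name = attrs.get("name") or attrs.get("id") or tag
            self.controls.append((name, attrs.get("id"), labeled_directly))

    def handle_endtag(self, tag):
        if tag == "label" and self._label_depth:
            self._label_depth -= 1


def unlabeled_controls(html):
    """Return the names of controls with no label this check can detect."""
    audit = LabelAudit()
    audit.feed(html)
    audit.close()
    return [
        name
        for name, control_id, labeled_directly in audit.controls
        if not labeled_directly and control_id not in audit.label_targets
    ]


# Hypothetical lead form: the phone field relies on a placeholder only.
FORM = """
<form>
  <label for="email">Email</label>
  <input id="email" name="email" type="email">
  <input name="phone" type="tel" placeholder="Phone">
  <label>Company <input name="company"></label>
  <input type="submit" value="Send">
</form>
"""

print(unlabeled_controls(FORM))  # ['phone']
```

A placeholder may look like a label to a sighted visitor, but a field that depends on it alone gives assistive technology, and plausibly an agent, much less to go on when deciding what to enter.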

OpenAI’s Atlas browser, described as a browser with ChatGPT built in, makes this trend even more concrete. Google’s Antigravity codelab points in the same direction, describing an agent-first development environment where agents can plan, code, validate, iterate, and browse the web. The ecosystem is moving toward software that does not just surface information but takes steps inside interfaces.

Where agencies can turn this into new services

This shift opens up a new way to package work for clients. Instead of selling accessibility, technical SEO, and site architecture as separate buckets, agencies can position them as a single agent-readiness program. That creates room for audits, redesigns, content restructuring, and QA services that are easier to explain and easier to renew.

The strongest service opportunities are likely to look like this:

- Agent accessibility audits that map a site’s DOM, headings, labels, and structural clarity.

- Structured-content redesigns that make pages easier for machines to parse and cite.

- Navigation and form-flow remediation that reduces friction for autonomous browsing.

- AI visibility assessments that test whether content can be interpreted and used inside workflows.

- Ongoing monitoring that treats machine compatibility as a performance metric, not a one-time cleanup.

This is also where the agency pitch gets sharper. A client that once asked for better rankings now needs a site that can be discovered, understood, and acted on by systems that browse like users and decide like software. That is a larger mandate, but also a richer one, because it connects design, development, content, and SEO into a single commercial story.

The operating model is changing

The real lesson in the agentic web conversation is not that accessibility suddenly matters more than everything else. It is that accessibility has become inseparable from AI discoverability. The same structure that helps people with assistive technology helps agents read, evaluate, and act, which is why the old split between “UX work” and “SEO work” is getting harder to defend.

For agencies, the future-proof move is to build sites as if machine users are already in the room. The winners will be the teams that treat semantic structure, clean architecture, and agent-friendly interaction as core product requirements, because the web is no longer being visited only by people who click. It is being worked by systems that do.
