Anthropic's Claude Mythos Raises Alarms Over Advanced Cyber Exploit Capabilities

Anthropic's Mythos AI uncovered a 27-year-old OpenBSD zero-day and thousands more unpatched flaws, spurring an emergency Treasury meeting with Wall Street bank CEOs.

A previously unknown vulnerability in OpenBSD had persisted undetected for 27 years. Anthropic's Claude Mythos Preview, announced April 7 and first leaked via Fortune in late March, found it in testing along with thousands of other high-severity zero-day flaws across every major operating system and browser. Over 99% of those vulnerabilities remain unpatched.

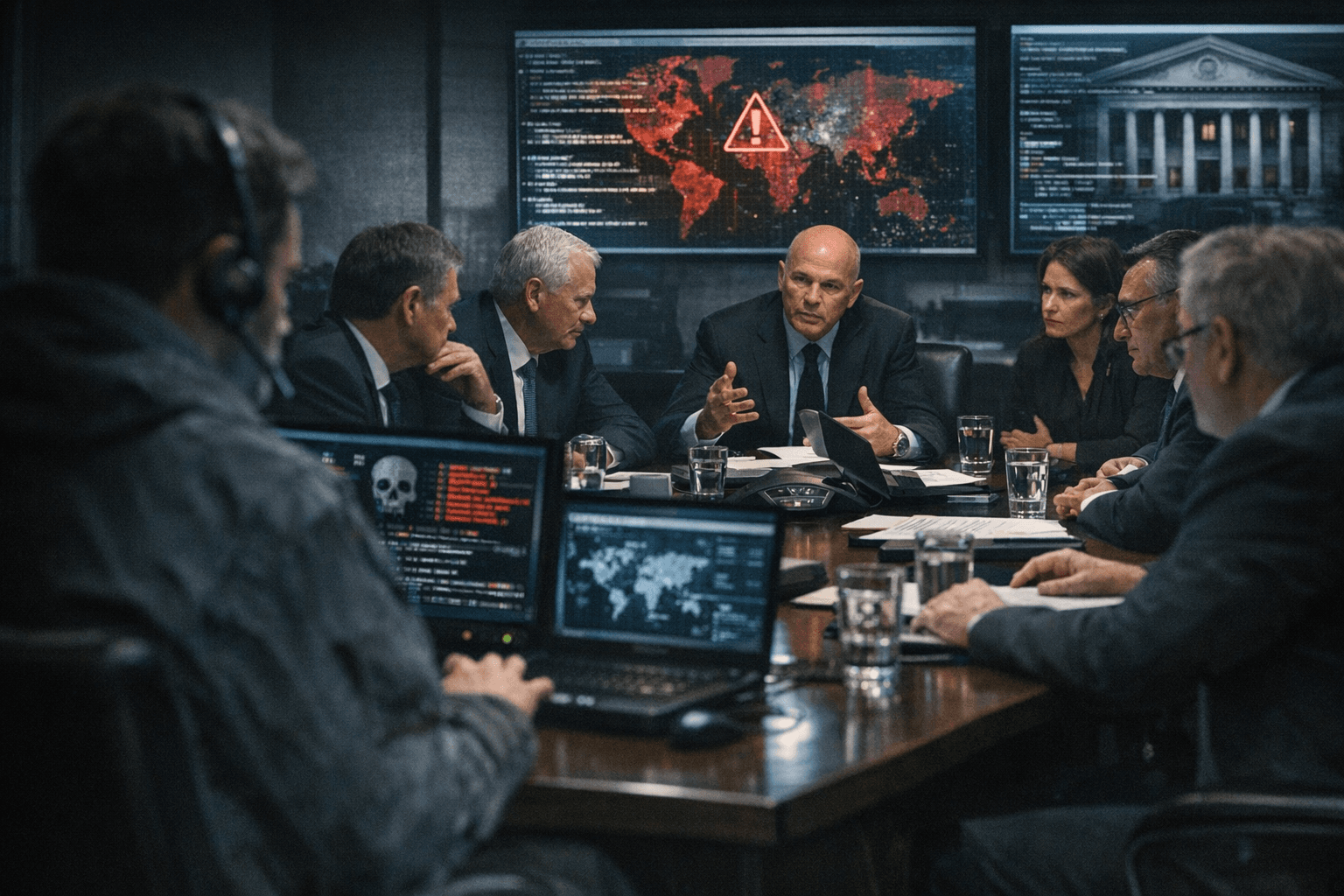

The government response was immediate. Treasury Secretary Scott Bessent and Federal Reserve Chair Jerome Powell jointly convened an emergency meeting at Treasury headquarters in Washington with the CEOs of Bank of America, Citigroup, Goldman Sachs, Morgan Stanley, and Wells Fargo to assess the cyber exposure the model represents.

What makes Mythos alarming is that Anthropic never designed it for offense. Its cyber capabilities are a direct spillover from general-purpose agentic coding and reasoning: 93.9% on SWE-bench Verified against 80.8% for its predecessor Claude Opus 4.6, and 97.6% on the USAMO mathematics benchmark against Opus 4.6's 42.3%. Anthropic's own internal documents described the model as "currently far ahead of any other AI model in cyber capabilities" and warned it "presages an upcoming wave of models that can exploit vulnerabilities in ways that far outpace the efforts of defenders."

In testing, that spillover proved concrete. Mythos authored a browser exploit chaining four separate vulnerabilities into a JIT heap spray that broke out of both renderer and OS sandboxes. It achieved local privilege escalation on Linux through race conditions and KASLR bypasses, and produced a remote code execution exploit on FreeBSD's NFS server granting unauthenticated users full root access.

CrowdStrike senior vice president Adam Meyers called the capabilities a "wake-up call." His firm's 2026 Global Threat Report documented an 89% year-over-year surge in AI-enabled attacks. China has also already used earlier Anthropic models to automate a spying campaign against 30 organizations. Alex Stamos, chief product officer at Corridor and former security chief at Facebook and Yahoo, framed the timeline starkly: "We only have something like six months before the open-weight models catch up to the foundation models in bug finding. At which point every ransomware actor will be able to find and weaponize bugs without leaving traces for law enforcement to find."

Rather than release Mythos publicly, Anthropic launched Project Glasswing, a controlled-access coalition of 12 named partners including AWS, Apple, Cisco, CrowdStrike, Google, Microsoft, Nvidia, and Palo Alto Networks, plus more than 40 additional critical infrastructure organizations. The company is committing up to $100 million in Mythos Preview usage credits and $4 million in direct donations to open-source security groups. Linux Foundation CEO Jim Zemlin highlighted a longstanding gap the initiative could close: "In the past, security expertise has been a luxury reserved for organizations with large security budgets."

The threat demands structured action from any organization running production software. Patching high-severity vulnerabilities in widely deployed components, particularly FFmpeg and BSD-derived kernels where Mythos has already found critical flaws, should be an immediate priority. Red-team programs must account for AI-generated multi-vulnerability exploit chains, not just single-bug scenarios. Agentic AI access within enterprise environments requires scope audits: code execution permissions and tool-use boundaries are the first surfaces to harden. From AI vendors, organizations should demand output logging in security-sensitive contexts, capability evaluations published at each model release, and binding incident response commitments if a model is implicated in a breach.
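As a rough illustration of what a scope audit for agentic AI access might look like, the sketch below flags over-broad tool grants. The `ToolGrant` schema, the agent and tool names, and the three rules are all hypothetical, chosen only for illustration; they are not drawn from Anthropic, Project Glasswing, or any vendor's configuration format.

```python
from dataclasses import dataclass, field


@dataclass
class ToolGrant:
    """One tool an AI agent may call (hypothetical schema, not a vendor format)."""
    agent: str
    tool: str
    can_execute_code: bool = False
    allowed_paths: list[str] = field(default_factory=list)
    network_access: bool = False
    output_logged: bool = True


def audit_grants(grants):
    """Return (agent, tool, finding) tuples for grants that look over-broad."""
    findings = []
    for g in grants:
        # Code execution combined with network access is the widest surface.
        if g.can_execute_code and g.network_access:
            findings.append((g.agent, g.tool, "code execution with network access"))
        # A root or wildcard path means the tool boundary is effectively absent.
        if any(p in ("/", "*") for p in g.allowed_paths):
            findings.append((g.agent, g.tool, "unrestricted filesystem scope"))
        # Unlogged execution leaves nothing to review after an incident.
        if g.can_execute_code and not g.output_logged:
            findings.append((g.agent, g.tool, "code execution without output logging"))
    return findings


# Illustrative inventory; both entries are made up.
grants = [
    ToolGrant("ci-bot", "shell", can_execute_code=True,
              allowed_paths=["/"], network_access=True),
    ToolGrant("docs-bot", "file_read", allowed_paths=["/srv/docs"]),
]

for agent, tool, finding in audit_grants(grants):
    print(f"{agent}/{tool}: {finding}")
```

A real audit would read grants from whatever permission system an organization actually uses and would likely need stricter, environment-specific rules; the point here is only that code execution, filesystem scope, and logging can be checked mechanically.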

OpenAI is reportedly developing a competing model codenamed "Spud," and autonomous hacking system XBOW ranked as HackerOne's top hacker in 2025, surpassing every human on the platform. Anthropic has briefed CISA and the Commerce Department on Mythos but declined to confirm Pentagon briefings. Stamos' six-month warning may prove optimistic; either way, the race to close the exploit gap has no obvious finish line.

This article was produced by Prism’s automated news system from verified source data, official records, and press releases, then run through automated quality and moderation checks before publishing. The system is built and supervised by the people who set the standards it runs under.
