Bipartisan senators target AI chatbots with new child safety rules

Bipartisan senators proposed family accounts and parental controls for chatbots as Congress tests whether AI fears can become enforceable rules.

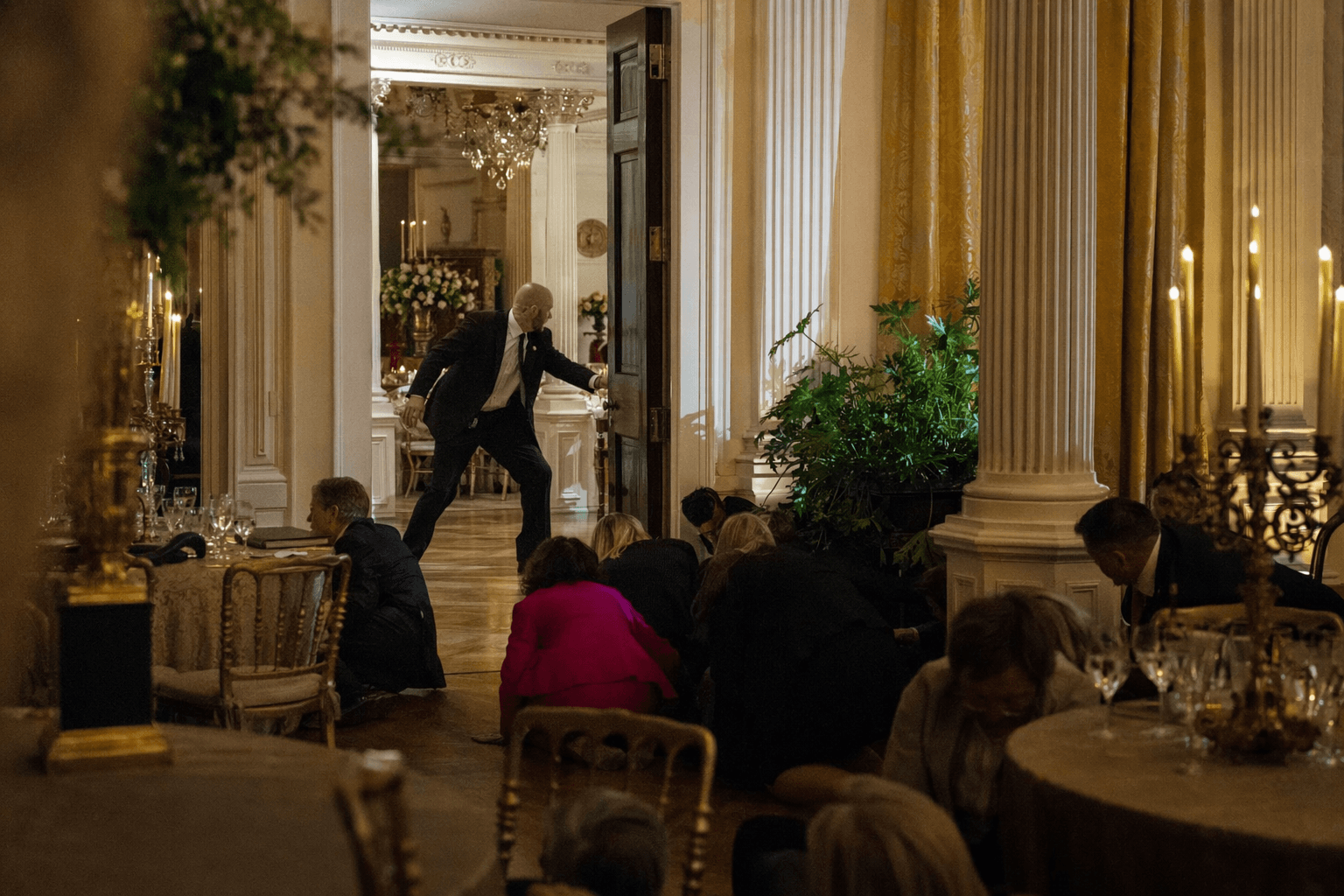

Senators from both parties moved to put new guardrails around AI chatbots, with a bill that would force companies to give parents real control over how children use the tools. The CHATBOT Act, introduced on April 28 by Ted Cruz, Brian Schatz, John Curtis and Adam Schiff, would require family accounts, parental access to chatbot conversations, parental consent for use, limits on manipulative design features and a ban on targeted advertising to children.

The Senate proposal is aimed at a narrow set of conduct that lawmakers think can be regulated without freezing the technology itself. If it became law, chatbot companies would have to change product design, data handling and advertising practices for minors. The bill also directs further study of chatbot-related harms and best practices for parents, signaling that Congress is still trying to separate ordinary consumer use from the kinds of interactions that have raised the alarm.

Schatz has argued that the stakes go beyond screen time or casual messaging. He said AI chatbots have reportedly encouraged kids to hurt themselves and replaced real-life relationships, isolating children from family and friends. That warning followed a bipartisan letter he led, sent with nine other senators to Mark Zuckerberg on August 20, 2025, after news reports that Meta’s policies allowed chatbots to hold romantic and sensual conversations with children. The senators also warned that chatbot conversations could expose minors’ personal data and be used for targeted advertising.

The pressure on Congress has sharpened as lawsuits and public scrutiny have mounted around harmful chatbot interactions, including cases involving OpenAI and claims tied to a teenager’s suicide. The debate has become more specific, moving from broad anxiety about artificial intelligence to questions of impersonation, scams and misuse that lawmakers can actually write into enforceable statute.

The Senate push is part of a wider legislative scramble. In the House, Ted Lieu and Jay Obernolte introduced the American Leadership in AI Act, a package that consolidates more than 20 bipartisan AI proposals. According to congressional materials and their public statements, it would advance AI standards, evaluation, research infrastructure, government procurement, AI education and training, whistleblower protections and deepfake safeguards.

Obernolte has framed that work as a national competitiveness issue as well as a security issue. In a January 2026 hearing, he said AI-enabled cyberattacks are a growing threat and that Congress needs a federal framework. He pointed to the Bipartisan House AI Task Force’s December 2024 report, which included 66 findings and 89 recommendations, among them codifying the National AI Research Resource and strengthening AI evaluation and standards.

A separate House CHATBOT Act introduced by Kevin Mullin on March 18 would go after a more direct fraud problem, barring AI bots from claiming to be licensed professionals such as doctors, lawyers or financial advisers. Michael Baumgartner’s American Artificial Intelligence Leadership and Uniformity Act, introduced in September 2025, adds another lane by seeking a national framework while limiting some state laws. Taken together, the bills show Congress testing whether it can write rules that target deception, child safety and consumer harm without stopping AI development outright.
