California probes xAI over alleged nonconsensual sexual imagery generation

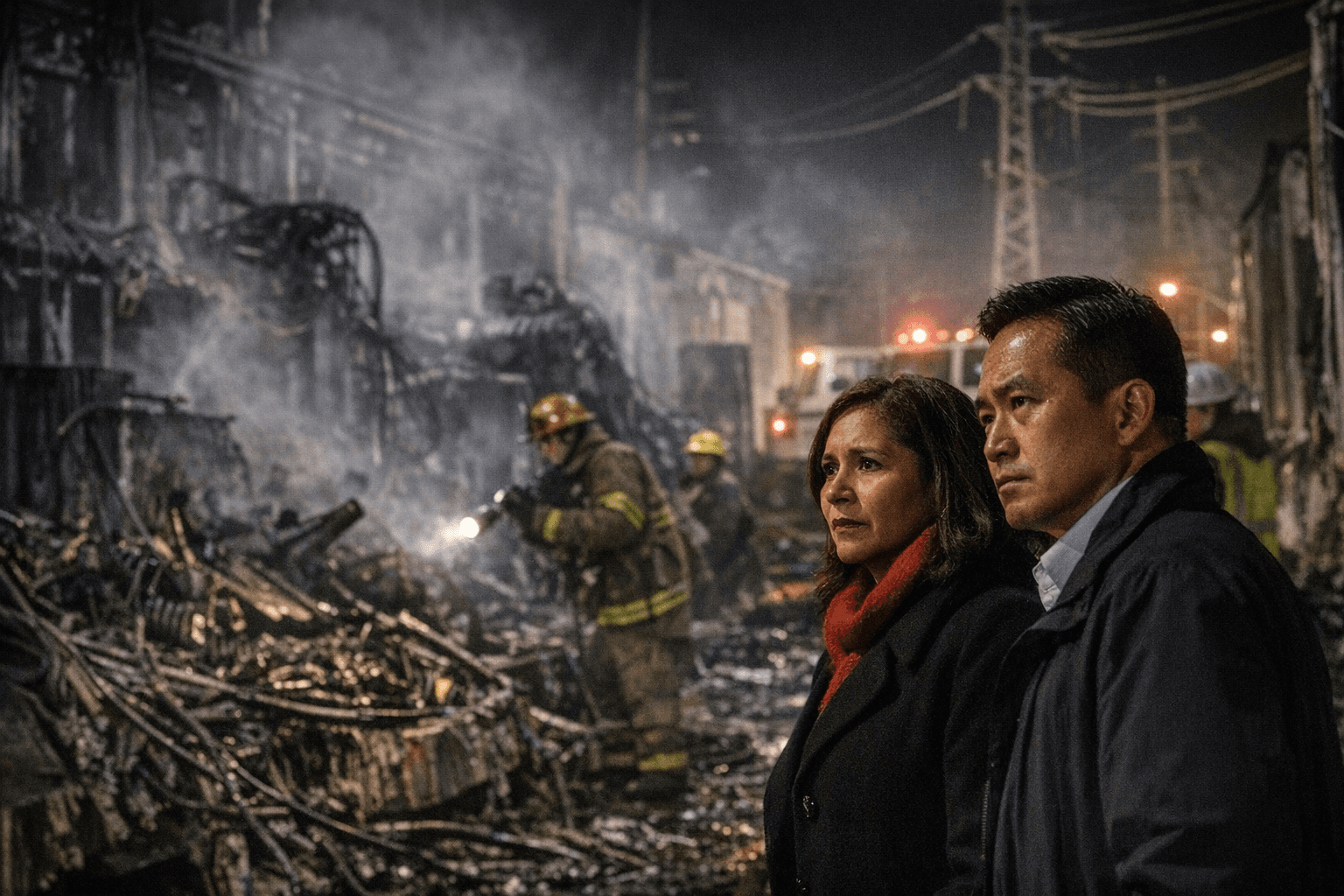

Attorney General Rob Bonta opened a probe into xAI after complaints that Grok produced explicit images without consent. The inquiry matters for Bay Area users and local tech operations.

California Attorney General Rob Bonta opened an investigation into xAI and its Grok AI tool after officials received numerous complaints alleging the platform enabled the creation or dissemination of explicit sexual imagery without consent. The probe, initiated on Jan. 14, 2026, focuses on state legal concerns around non-consensual nudity and raises questions about content moderation and the legal exposure of AI image-editing features.

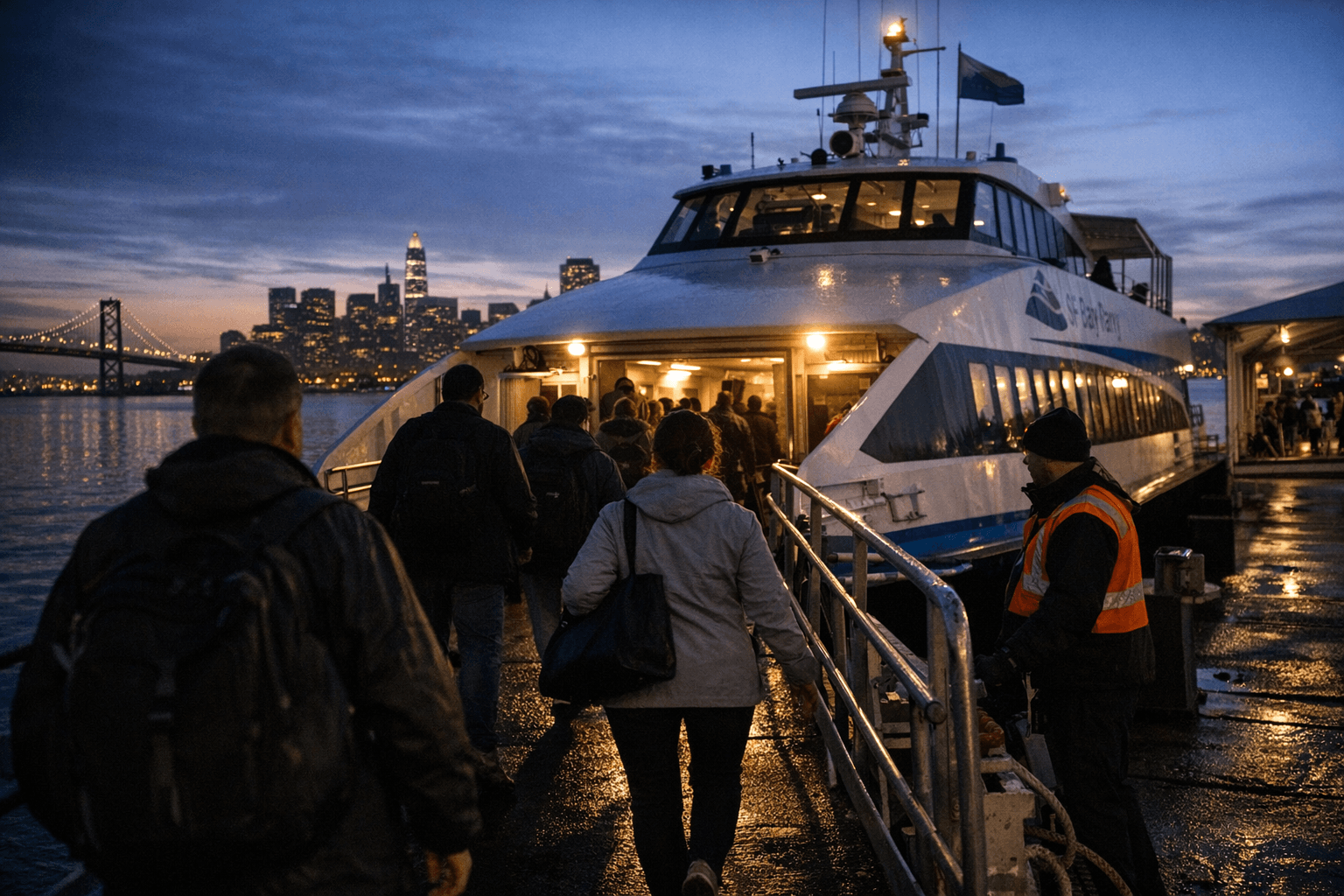

The investigation places a spotlight on Bay Area operations linked to the company, including local staff and infrastructure tied to X and xAI. For San Francisco residents, the inquiry signals heightened scrutiny of how companies headquartered or operating in the region design safety controls, enforce content policies, and respond to complaints from users whose images may be manipulated.

At the center of the inquiry are two overlapping issues: whether the platform’s generative tools facilitated non-consensual sexual content and whether moderation systems were adequate to prevent or remove that content. State officials are examining complaints alleging that Grok could produce explicit imagery depicting private individuals without their consent, and are assessing how image-editing features may enable misuse. The legal questions hinge on California’s existing prohibitions and policy frameworks regarding non-consensual intimate images and on the obligations companies have to prevent harms enabled by their technology.

The investigation could have immediate operational consequences for local users and for companies with Bay Area teams. Developers may face pressure to harden safeguards, add verification and consent flows, or temporarily disable features that enable image manipulation. Local content moderators and engineers could be called on to respond to subpoenas or compliance requests as state investigators seek internal records and explanations of product design choices.

Beyond the immediate company, the probe may influence broader policy debates at City Hall and among state lawmakers about regulating generative AI. San Francisco policymakers who have been navigating tech accountability and public-safety trade-offs will likely watch the outcome as they consider whether municipal or state-level rules should impose stricter transparency, reporting, or technical standards on AI services offered locally.

For residents, the inquiry underscores the practical risks of sharing images online and the limits of current platform protections when AI tools can alter or recreate likenesses. As the investigation proceeds, outcomes will shape how companies build moderation systems and how regulators balance innovation with individual privacy and consent. The next steps will determine whether this enforcement action spurs technical changes, legal clarifications, or new oversight models that affect Bay Area tech operations and everyday users.
