Canada summons OpenAI safety team after account flagged months earlier

Ottawa demanded answers after OpenAI banned a ChatGPT account months before an 18-year-old killed eight in Tumbler Ridge.

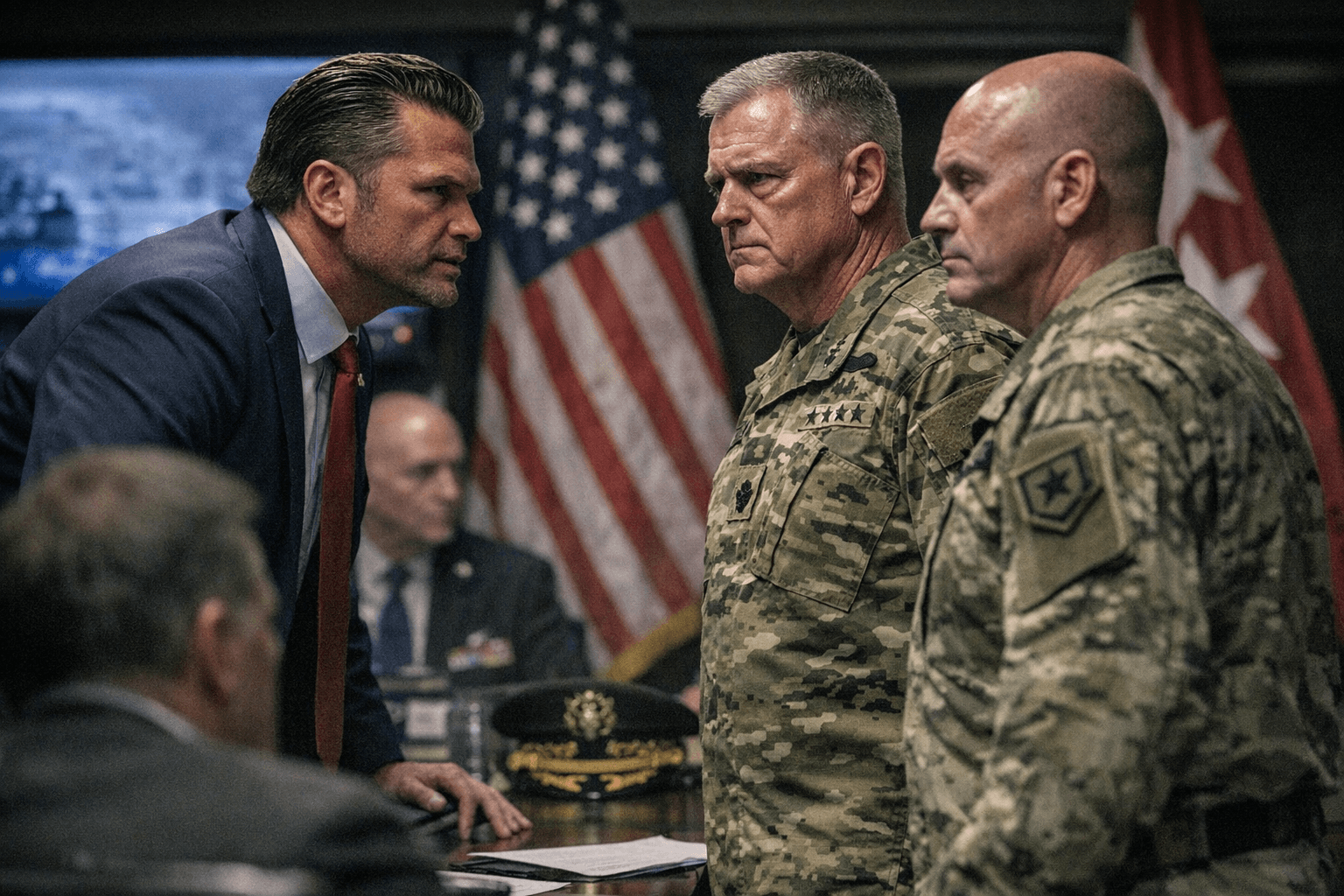

Canada’s artificial intelligence minister, Evan Solomon, summoned senior safety officials from OpenAI to Ottawa after reports that the company had identified and banned a ChatGPT account months before 18-year-old Jesse Van Rootselaar killed eight people in Tumbler Ridge, British Columbia. Solomon said he expects the company’s top safety representatives to explain how they decide when to alert police and why the account was not referred sooner.

“I have summoned the senior safety team from OpenAI to come here to Ottawa from the United States,” Solomon told reporters, adding that “Canadians expect, first of all, that their children particularly are kept safe and these organizations act in a responsible manner.” He said some of his representatives had already met with OpenAI officials and that senior leaders would travel to Canada for a fuller briefing on Tuesday. When asked about regulatory steps, Solomon declined to rule anything out, saying “all options remain under consideration.”

OpenAI has acknowledged banning the account months before the Feb. 10 attack and said it did not refer the user to law enforcement at the time because the content did not meet its internal threshold for reporting. The company described that threshold as whether there is an imminent and credible risk of serious physical harm to others and said it did not identify such planning in this case. OpenAI told Canadian officials it would discuss “our overall approach to safety, safeguards we have in place, and how we continuously work to strengthen them.”

The Wall Street Journal first reported that the account was flagged for posts that included scenarios involving gun violence and that about a dozen OpenAI employees debated whether to notify police. Reuters and other outlets said OpenAI contacted the Royal Canadian Mounted Police only after the shooting; RCMP Staff Sgt. Kris Clark confirmed the company reached out after the attack but declined to elaborate.

Reporting differs on some specifics. TRTWorld, citing CBC, said OpenAI barred the account in June; Reuters and other outlets described the action less precisely as occurring “last year.” Local and national officials have also offered varying descriptions of family ties among victims. British Columbia Premier David Eby said it “looks like” OpenAI had an opportunity to prevent the mass shooting. Van Rootselaar is reported to have killed her mother and a sibling at home before attacking a secondary school, where media accounts say she killed five students and an educational assistant, then took her own life. Authorities have said the motive remains unclear and that Van Rootselaar had prior mental-health-related contacts with police.

The episode sharpens questions about how platform safety protocols intersect with public safety and cross-border policing. Platforms rooted in one jurisdiction face practical and legal limits when deciding whether to escalate non-imminent but disturbing content to foreign authorities. For policymakers, the case presents a concrete test of whether current definitions of “imminent and credible” risk are fit for purpose and how transparency or mandatory reporting could be structured without undermining privacy or chilling moderation.

The Ottawa meeting will be an early measure of accountability. Officials and safety teams now face pressure to produce a clear timeline, internal criteria for escalation, and documentation of what, if any, information was shared with Canadian authorities after the account was flagged and after the February attack.
