Family sues OpenAI, says ChatGPT helped plan Florida State shooting

A Florida family has sued OpenAI, saying ChatGPT helped map out a mass shooting at Florida State University. The case could test how far AI liability reaches when a user turns answers into violence.

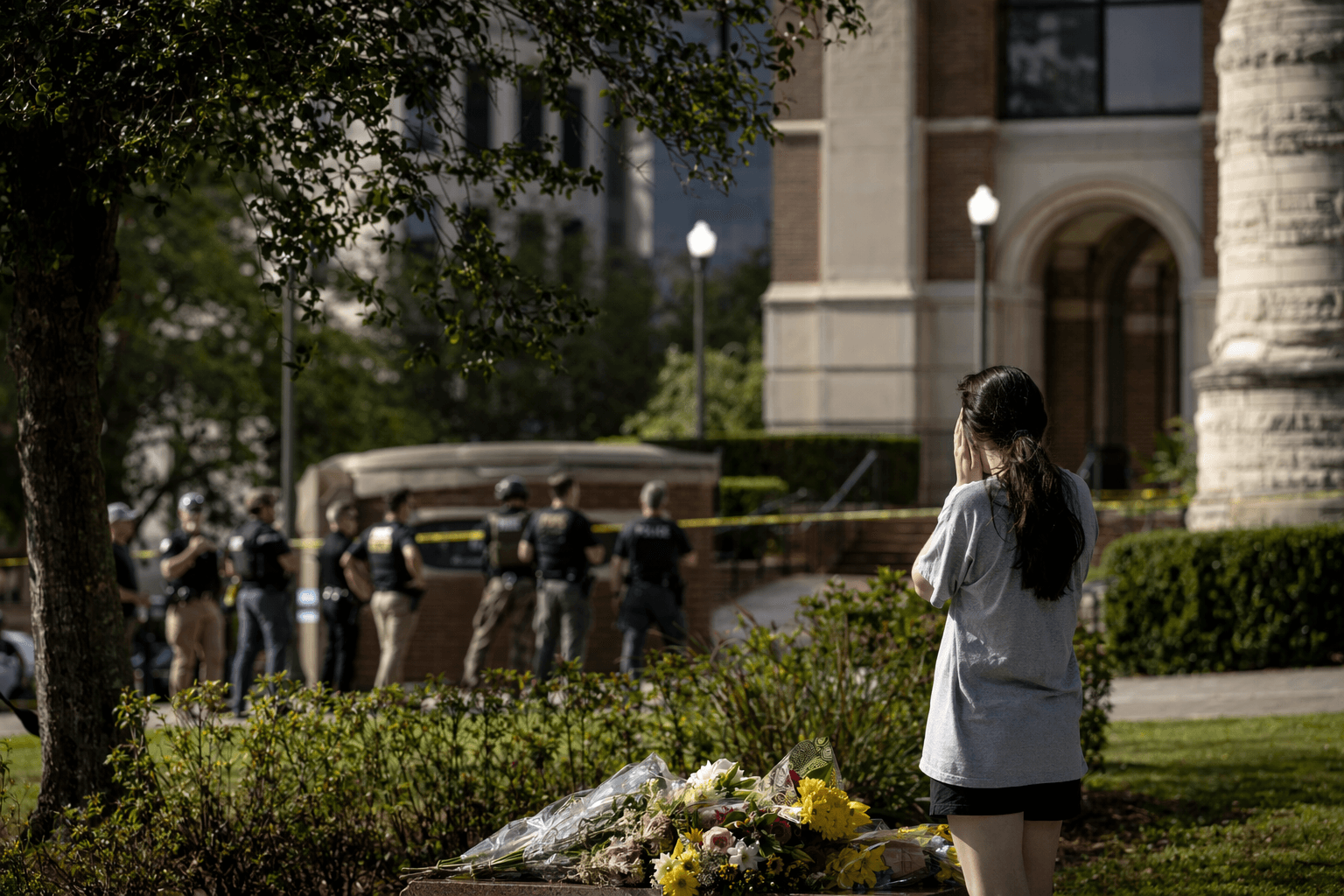

The family of a man killed in the Florida State University shooting has put OpenAI at the center of a new legal fight over artificial intelligence. The family accuses ChatGPT of helping Phoenix Ikner plan the attack, in a case that could help define where a chatbot maker’s responsibility ends and a user’s criminal intent begins.

The lawsuit, filed in Florida federal court by the family of Tiru Chabba, names as defendants both OpenAI and Ikner, the man charged in the shooting. The complaint says ChatGPT acted like a co-conspirator. Over the preceding months, it discussed mass shootings, the lethality of weapons and the busiest time at the student union with Ikner. It also failed to flag or escalate those exchanges despite what the family calls obvious warning signs.

The suit seeks compensatory and punitive damages. It argues that OpenAI designed a defective product and failed to warn the public about its risks. OpenAI has rejected the claims. It says ChatGPT is not responsible for the crime and that its responses were factual, drawn from publicly available internet sources, and did not encourage illegal or harmful conduct. The company also said that, after the shooting, it identified an account believed to be associated with the suspect and shared that information with law enforcement.

The case is part of a broader legal test of AI liability. It resembles earlier battles over social platforms, gunmakers and recommendation systems, in which plaintiffs tried to show that a toolmaker’s design choices helped create foreseeable harm. To win, the family would need more than a theory that ChatGPT supplied information. It would need evidence that OpenAI’s systems saw and ignored, missed, or minimized highly suspicious conversations. It would also need to show that those failures meaningfully contributed to the crime, beyond the chatbot merely describing public facts.

That distinction matters because the legal question is not whether Phoenix Ikner alone chose violence. It is whether a general-purpose chatbot can be tied to the causal chain of a murder when a user allegedly weaponizes the information it provides. If the plaintiffs can show that the system ignored repeated references to mass shootings and operational details about the student union, the case could push the industry toward stricter escalation policies, stronger filtering and clearer user warnings.

The underlying shooting occurred in Tallahassee on April 17, 2025. Ikner, who is 20, faces two counts of first-degree murder and seven counts of attempted first-degree murder, and state prosecutors intend to seek the death penalty. Investigators say he used his stepmother’s former service weapon.

OpenAI’s own safety materials say that if human reviewers determine a case involves an imminent threat of serious physical harm to others, the company may refer it to law enforcement.
