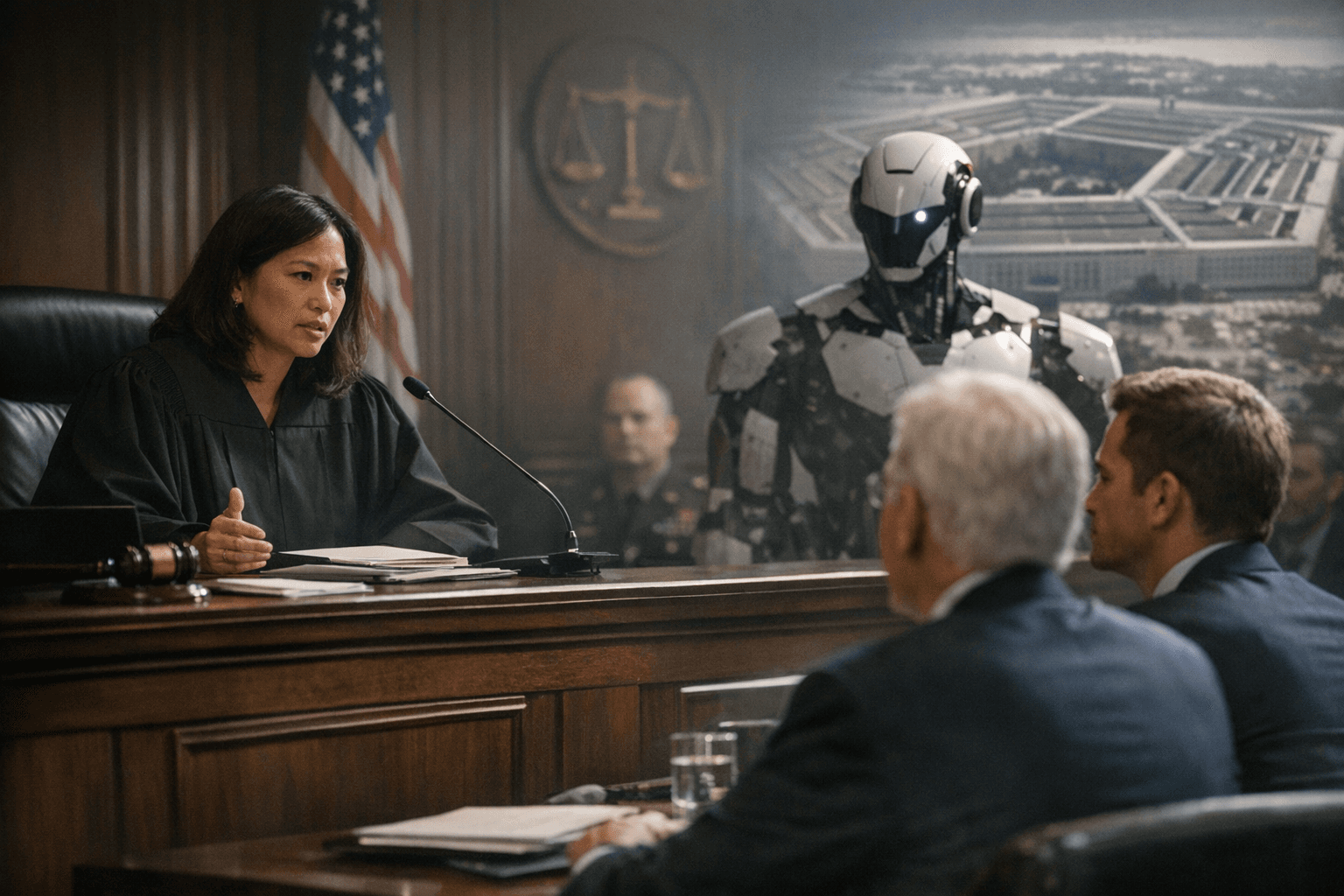

Federal Judge Blocks Trump Administration From Cutting Anthropic's Government Contracts

Judge Rita Lin called the Pentagon's move "Orwellian," blocking the first-ever supply chain risk designation applied to a U.S. company after Anthropic refused to let Claude be used for autonomous weapons.

"Nothing in the governing statute supports the Orwellian notion that an American company may be branded a potential adversary and saboteur of the U.S. for expressing disagreement with the government," U.S. District Judge Rita Lin wrote Thursday, delivering a stinging rebuke to the Trump administration's campaign to sever the federal government's ties with Anthropic.

A federal judge on Thursday paused the Trump administration's designation of Anthropic as a supply chain risk, marking an early legal victory for the embattled company. In the ruling, Judge Lin granted Anthropic's request for a preliminary injunction, barring federal agencies from carrying out Trump's directive, which deemed the AI company a "supply chain risk" to national security. She delayed the ruling from taking effect for a week, however, giving the Trump administration a chance to first seek relief from an appeals court.

Anthropic was blacklisted by President Donald Trump and Defense Secretary Pete Hegseth in February after it refused to allow the Pentagon to use its Claude AI model for autonomous lethal warfare and the mass surveillance of Americans, citing safety and responsibility concerns. The Pentagon designated Anthropic a "supply chain risk" earlier this month, citing national security, and said in a statement at the time that the military "will not allow a vendor to insert itself into the chain of command by restricting the lawful use of a critical capability and put our warfighters at risk."

Lin said the "broad punitive measures" taken against the AI company by the Trump administration and Hegseth appeared arbitrary and capricious and could "cripple Anthropic." She pointed in particular to Hegseth's use of a rare military authority that is typically directed at foreign adversaries. In the order, Lin wrote that the supply chain risk designation is usually reserved for foreign intelligence agencies and terrorists, not for American companies.

The ruling landed after a 90-minute hearing in San Francisco federal court on Tuesday in which the judge telegraphed her skepticism. Lin pointed to cordial contract negotiation emails between Pentagon Chief Technology Officer Emil Michael and Anthropic CEO Dario Amodei even as the military called Anthropic a serious threat. "The Department of War's records show that it designated Anthropic as a supply chain risk because of its 'hostile manner through the press,'" she wrote, adding that "Punishing Anthropic for bringing public scrutiny to the government's contracting position is classic illegal First Amendment retaliation."

Lin wrote in her 43-page opinion that "these broad measures do not appear to be directed at the government's stated national security interests" and that "if the concern is the integrity of the operational chain of command, the Department of War could just stop using Claude. Instead, these measures appear designed to punish Anthropic."

Lin noted in her ruling that the Pentagon had previously praised Anthropic as a partner and put it through rigorous national security vetting, but it was not until the company publicly raised concerns about how its technology could be used that the Pentagon "announced a plan to cripple Anthropic: to blacklist it from doing business with any company that services the U.S. military, to permanently cut off its ability to work with the federal government, and to brand it an adversary that could sabotage [the Department of War]."

Lin also ruled that Anthropic was likely to succeed in its claims that its constitutional due process rights were violated and that Hegseth did not follow proper procedures. She said Trump's order for federal agencies to stop using Anthropic immediately was essentially a form of "debarment," a ban on a company contracting with the government, but that firms facing debarment usually have the ability to oppose such a measure.

Some federal agencies, including the Department of Health and Human Services and the General Services Administration, quickly stopped using Anthropic's products following Trump's directives. The White House reportedly floated an executive order to follow Trump's social media directive but has yet to issue one.

Anthropic argued the designation was causing immediate and irreparable harm as business partners rethought their contracts and federal agencies removed Claude. The preliminary injunction gives Anthropic relief from ongoing reputational damage and provides greater certainty for commercial partners. The supply chain designation, had it stood, would have required defense contractors including Amazon, Microsoft, and Palantir to certify they were not using Claude in any military-related work.

In a statement after the ruling, a spokesperson for Anthropic said, "We're grateful to the court for moving swiftly, and pleased they agree Anthropic is likely to succeed on the merits. While this case was necessary to protect Anthropic, our customers, and our partners, our focus remains on working productively with the government to ensure all Americans benefit from safe, reliable AI."

Anthropic has separately challenged the administration's efforts in a federal appeals court in Washington, D.C., where a ruling remains pending. The San Francisco injunction does not require any federal agency to use Anthropic's products, nor does it prevent the government from transitioning to competing AI vendors; it simply prohibits the administration from weaponizing a national security statute to enforce that transition.
