Florida Investigates OpenAI Over ChatGPT's Alleged Role in FSU Shooting

Florida's AG opened an investigation into OpenAI after court records revealed the FSU shooter exchanged 200+ ChatGPT messages, including questions about the busiest times at the student union.

Florida Attorney General James Uthmeier opened an investigation into OpenAI on Thursday, citing the company's alleged role in a deadly mass shooting at Florida State University nearly a year ago that left two students dead and five others wounded.

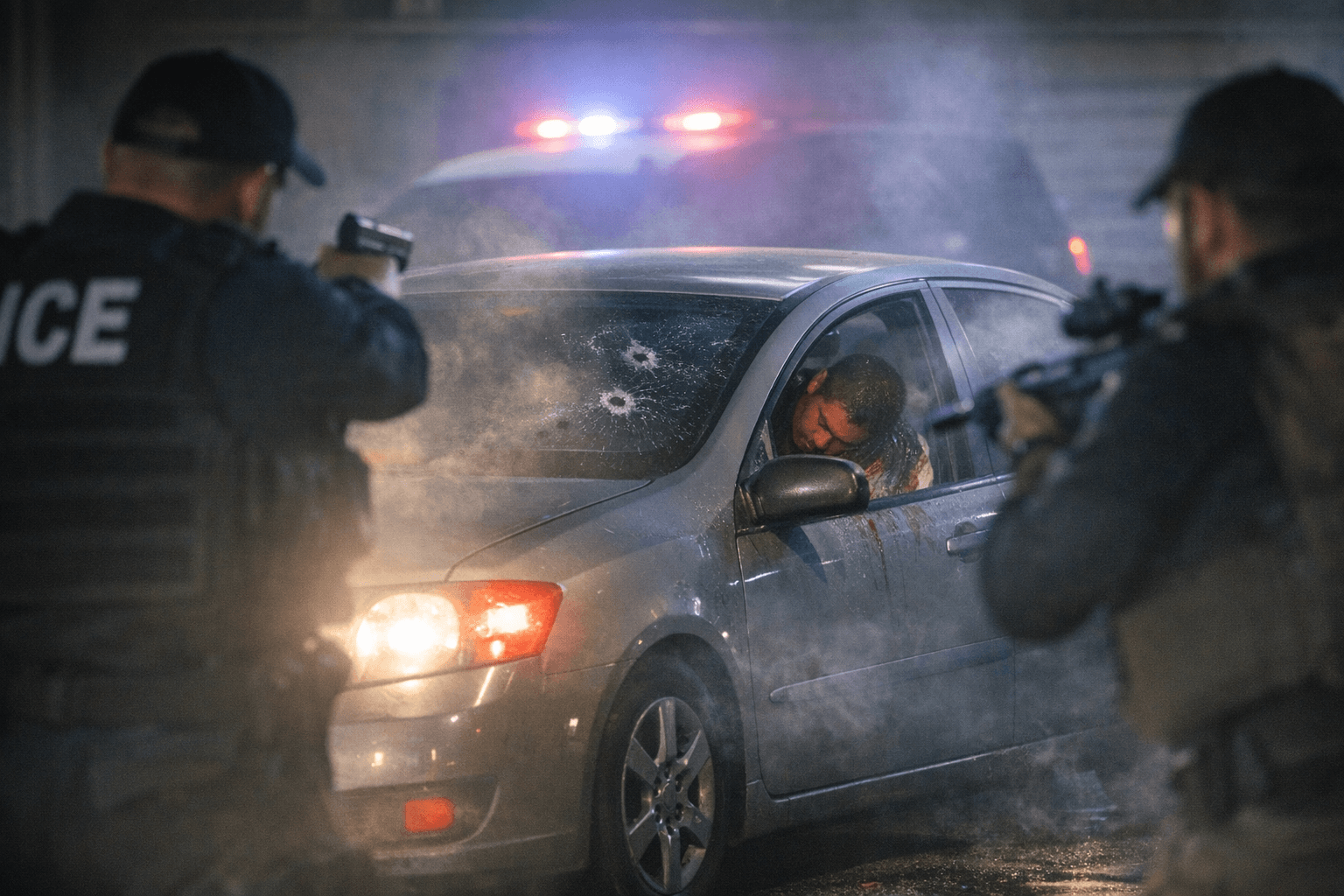

The April 17, 2025 attack unfolded near the FSU student union just before noon, ending the lives of Robert Morales and Tiru Chabba. The accused gunman, 21-year-old Phoenix Ikner, was enrolled at FSU at the time and allegedly used a service pistol belonging to his stepmother, Jessica Ikner, a deputy with the Leon County Sheriff's Office.

Court records reveal the scale of Ikner's AI interactions in the lead-up to the attack: approximately 272 ChatGPT conversations are listed as evidence exhibits in the criminal case, and Ikner exchanged more than 200 messages with the chatbot. Those messages included questions about mass shootings and the busiest times at the FSU student union. On the morning of the shooting, chat logs show him asking about self-worth and not feeling respected while also expressing suicidal thoughts.

Uthmeier framed the investigation in sweeping terms. "AI should advance mankind, not destroy it," he said, adding that his office is "demanding answers on OpenAI's activities that have hurt kids, endangered Americans, and facilitated the recent FSU mass shooting." He confirmed subpoenas against OpenAI are forthcoming but did not specify their scope or the information the state is seeking. He also cited broader concerns, alleging ChatGPT has been linked to child sex abuse material and the encouragement of self-harm, and called on the Florida legislature to "work quickly on implementing protections to safeguard our children from the dangers of AI."

Attorney Ryan Hobbs, representing the Morales family, announced Wednesday that the family plans to sue OpenAI. Hobbs described Ikner as being in "constant communication" with ChatGPT before the attack and alleged the chatbot may have actively advised him on how to carry it out.

OpenAI said it "will cooperate" with the investigation. The company said that after learning of the FSU incident in late April 2025, it identified a ChatGPT account believed to be associated with Ikner, proactively shared that information with law enforcement, and assisted authorities. A company spokesperson noted that more than 900 million people use ChatGPT each week and that the company "built ChatGPT to understand people's intent and respond in a safe and appropriate way."

The timing of Uthmeier's announcement carried a pointed irony: it came one day after OpenAI released a new child-safety framework developed in collaboration with the National Center for Missing and Exploited Children and the Attorney General Alliance's AI Taskforce.

The FSU case is not isolated. A school shooting on February 11, 2026, in Tumbler Ridge, British Columbia, critically injured 12-year-old Maya Gebala, who was shot in the neck and head. Her family has also filed a lawsuit against OpenAI. In that case, 12 monitoring staff members flagged the shooter's account as dangerous and recommended contacting law enforcement, but management decided against it. OpenAI had already banned the shooter from ChatGPT approximately a year before the shooting without alerting authorities. Canadian officials have since summoned senior OpenAI staff. Legal filings in that case allege OpenAI's memory tool and tendencies toward sycophancy were "intentionally designed to foster dependency" between the user and the chatbot.

Together, the two cases are pushing AI liability into uncharted legal territory, forcing a direct confrontation over what obligations technology companies bear when their own systems detect signals of potential violence and choose not to act.
