George Hotz's Tiny Corp Brought High-End AI Hardware to Your Garage

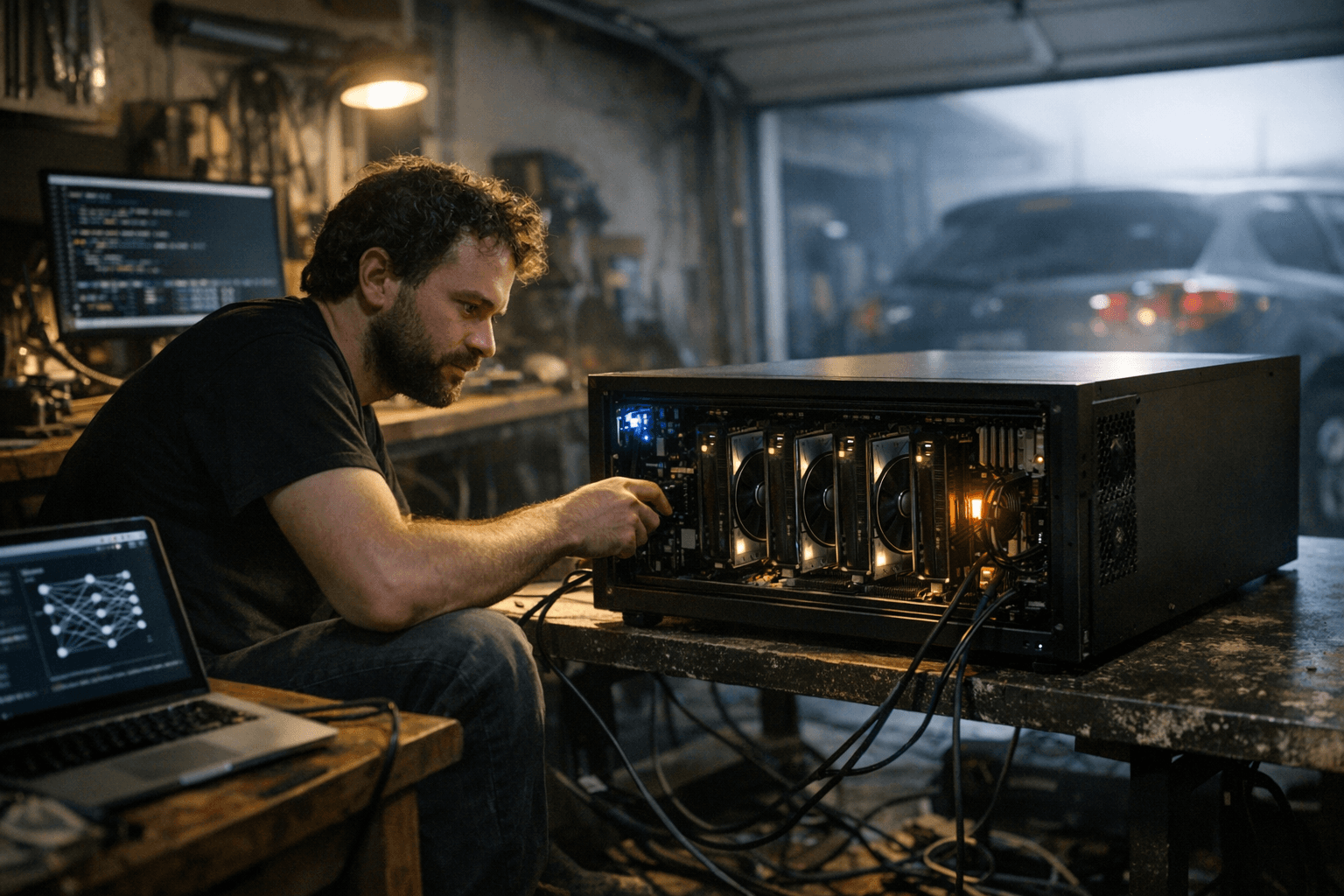

Tiny Corp launched the Tinybox, putting serious AI training and inference power within reach of individuals and small teams working outside the cloud.

George Hotz's Tiny Corp took direct aim at cloud computing dominance this weekend, announcing availability and technical specifications for its Tinybox hardware line, a family of machines designed to run and train artificial intelligence models entirely offline, without sending data to a remote server.

The move positions Tiny Corp as one of the few companies willing to bet that serious AI compute belongs on-premises rather than rented by the hour from Amazon or Google. For researchers, hobbyists, and small organizations wary of cloud costs and data privacy exposure, the Tinybox offers something the market has largely failed to provide: a purpose-built, high-performance machine that ships to your door and runs locally.

Tiny Corp grew out of tinygrad, an open-source deep learning framework created by Hotz, the hacker and entrepreneur known for jailbreaking the original iPhone and founding comma.ai. He used the project to demonstrate that capable AI software did not require the large engineering teams behind PyTorch or TensorFlow. The framework attracted a loyal developer community drawn to its minimalist design and to Hotz's confrontational stance toward the AI establishment.

The Tinybox family extends that philosophy into hardware. Rather than pushing users toward managed cloud services, the machines are designed to give operators full control over their compute, their data, and their software stack. That framing carries particular appeal in a market where enterprise AI customers have grown increasingly sensitive to the terms under which major cloud providers store and process training data.

The broader context makes the timing notable. Cloud AI compute costs have remained stubbornly high even as model inference has become more efficient, and several high-profile data leakage incidents have pushed compliance-sensitive industries, including healthcare, legal services, and finance, to reconsider architectures that route sensitive information through third-party infrastructure. A capable local inference machine removes that particular exposure, because the data never leaves hardware the operator controls.

Tiny Corp is a small operation by the standards of the AI hardware space, where Nvidia's dominance and the capital requirements of chip fabrication have discouraged most competitors. The company does not manufacture its own silicon. Instead, it builds systems around existing GPU hardware, which keeps costs lower but bounds performance by what commercial graphics cards can deliver. That choice separates the Tinybox from the custom accelerators hyperscalers design in-house, and it also means the machines can be serviced and upgraded without proprietary support contracts.

Hotz has long cultivated a reputation for dismissing the complexity that large institutions use to justify large budgets. The Tinybox is a physical expression of that argument: that most practical AI workloads do not require a data center, and that the industry's gravitational pull toward cloud dependency reflects business incentives more than technical necessity.

Whether that argument finds a large enough customer base to sustain the company remains the central question. The market for local AI hardware has attracted genuine interest but has not yet produced a breakout commercial success. Tiny Corp's advantage is a community that was already building with tinygrad before the hardware existed, giving the Tinybox a ready audience that most hardware startups spend years trying to cultivate.
