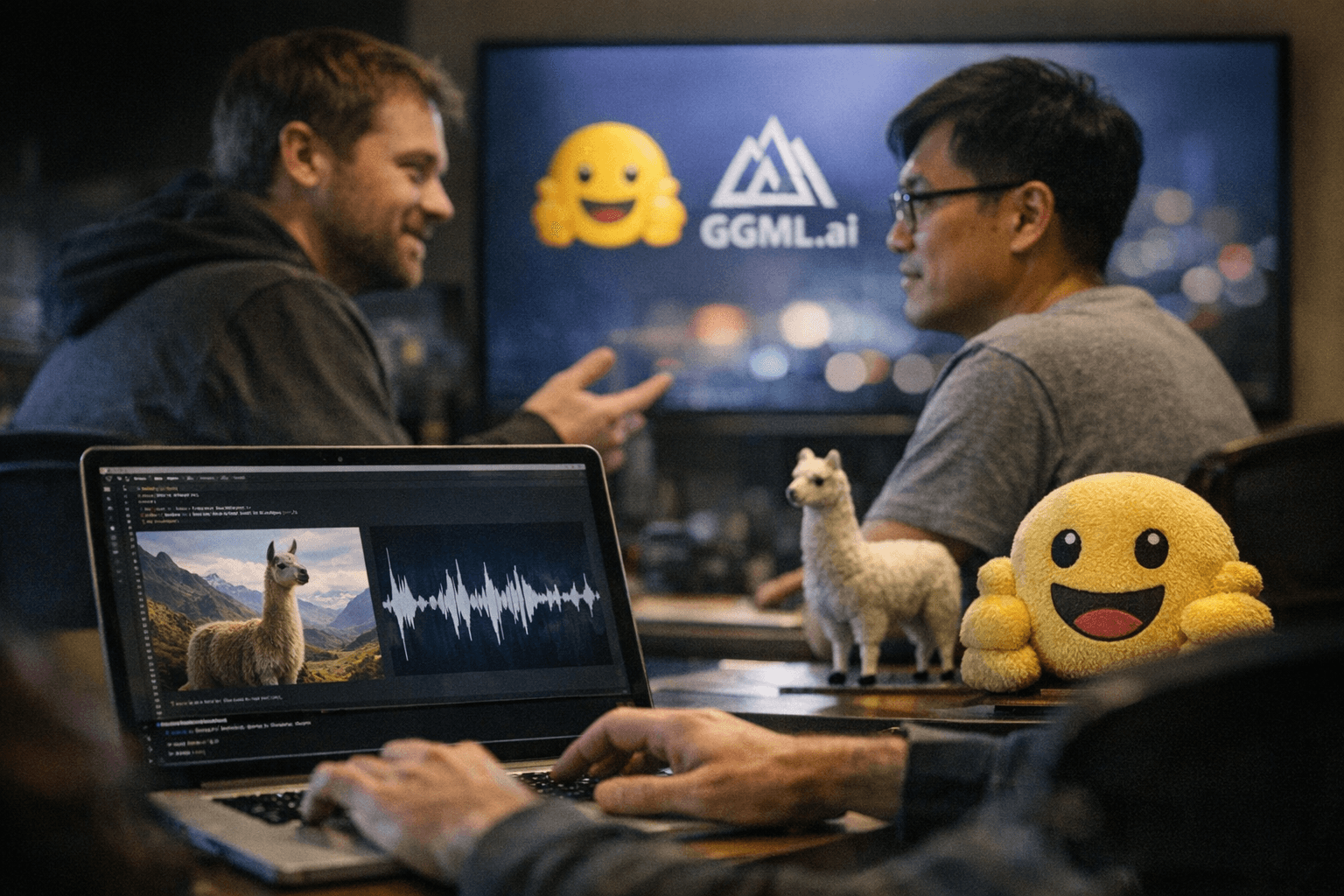

GGML.ai joins Hugging Face, bringing GGML projects like llama.cpp and whisper.cpp

The ggml organization announced it joined Hugging Face on February 20, 2026, bringing flagship local-LLM projects including llama.cpp, whisper.cpp, and LlamaBarn into Hugging Face’s fold.

The move transfers stewardship of high-profile local-LLM projects such as llama.cpp, whisper.cpp, and LlamaBarn. It links the GGML tensor and machine-learning tooling family with Hugging Face at a moment when on-device and local inference tooling remains central to many Rust and systems-oriented workflows.

ggml-org is the team behind the GGML tensor/ML tooling that powers projects used for CPU-efficient inference and model quantization. The projects named in the February 20, 2026 announcement (llama.cpp, whisper.cpp, and LlamaBarn) are widely used in local-LLM setups for text generation and speech transcription.

The change could affect how those repositories are surfaced and maintained in the broader ecosystem, because Hugging Face provides model hosting, community tools, and packaging pathways that many developers use to distribute models and inference runtimes. Developers who integrate llama.cpp for low-latency local inference or run whisper.cpp for offline speech-to-text now rely on projects that are organizationally aligned with Hugging Face, which could influence model distribution and tooling integration across Python and Rust stacks.

For maintainers and contributors, the announcement establishes an organizational home for GGML-related work: as of February 20, 2026, ggml-org’s tooling and the named projects are affiliated with Hugging Face. That affiliation matters for downstream users running local-LLM tooling on laptops and edge hardware, because integration with Hugging Face workflows often affects release distribution, model card hosting, and discoverability in package indexes and community hubs.

For those following the community, February 20, 2026 marks the point at which GGML projects moved under Hugging Face’s banner. As ggml-org’s llama.cpp, whisper.cpp, and LlamaBarn begin their association with Hugging Face, Rust-focused developers who rely on those projects for efficient, on-device inference will want to track repository settings, transfer notices, and any forthcoming changes to packaging or model hosting tied to the move.
