Samsung to begin HBM4 production next month, supply Nvidia

Samsung will begin HBM4 wafer production in February and is expected to allocate capacity to Nvidia, a move that could ease AI memory bottlenecks.

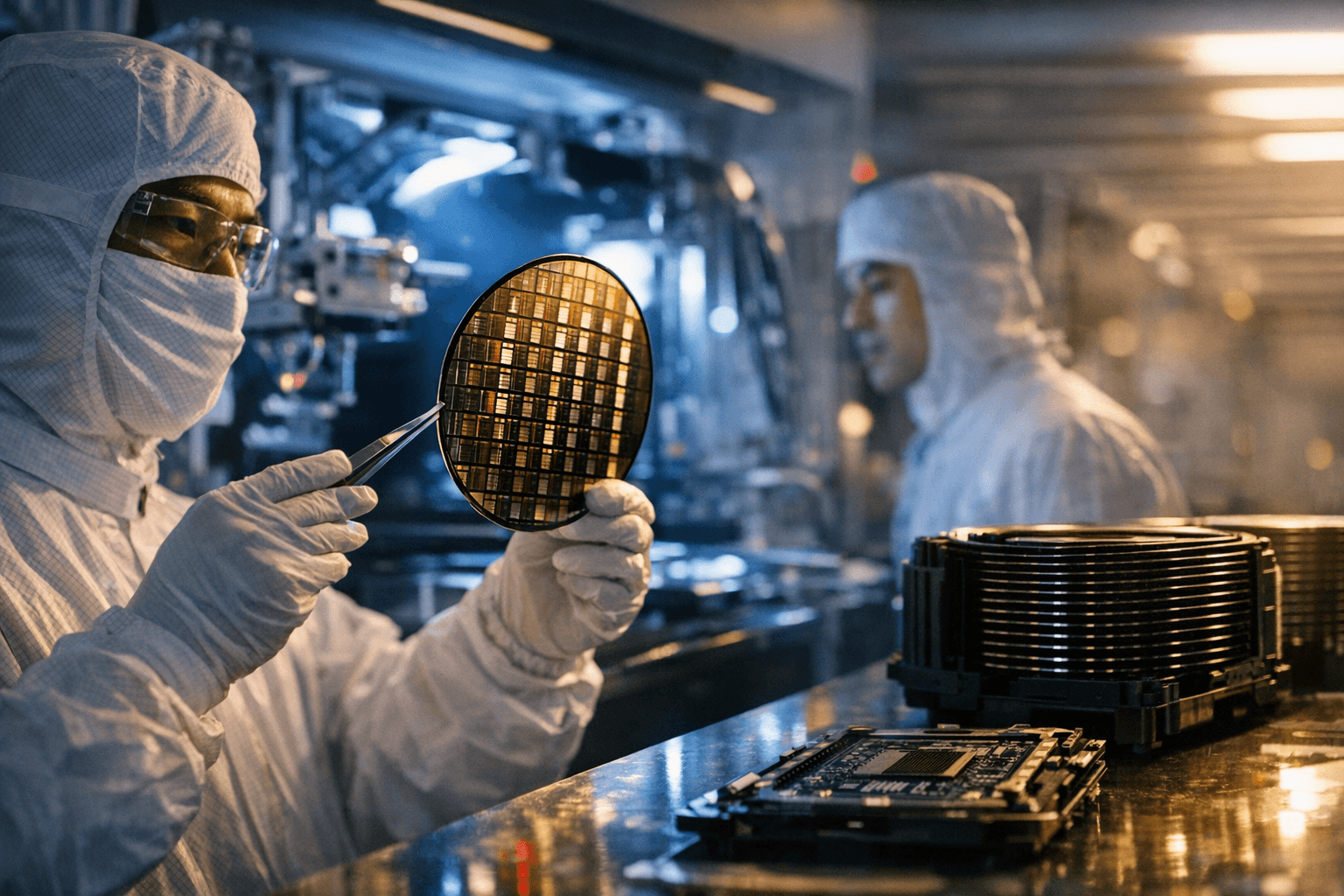

Samsung Electronics plans to start production of next-generation high-bandwidth memory (HBM4) wafers in February and is expected to supply at least some of that capacity to Nvidia, a person familiar with the matter said. The decision marks a significant step toward wider commercial deployment of HBM4, the stacked memory technology that chip designers see as essential for forthcoming AI accelerators.

HBM4, the successor to HBM3 and its HBM3E extension, is anticipated to deliver higher bandwidth and better energy efficiency per bit, enabling chipmakers to push compute performance while managing power and thermal limits in data centers. Samsung’s move from development to wafer production signals confidence in yields and a readiness to meet the surging demand for memory optimized for large language models and other AI workloads.

Nvidia, which relies on high-performance memory for its data center GPUs and accelerator products, has been scaling production of AI chips to serve cloud providers, enterprises and hyperscalers. Securing supply from Samsung could help Nvidia expand shipments of next-generation accelerators and shorten lead times that have affected buyers since the AI compute boom began.

Memory suppliers have been racing to expand HBM capacity amid persistent shortages and long delivery schedules. Samsung’s ramp could relieve some pressure in a market where only a handful of companies have the technology and manufacturing scale to produce advanced stacked DRAM. However, industry analysts caution that wafer production is only one part of the supply chain: packaging, testing and integration into finished modules remain complex steps that can limit how quickly additional capacity reaches customers.

For Samsung, the move reinforces its strategic emphasis on high-value memory segments as overall semiconductor markets evolve. The company has invested heavily in developing stacking and interconnect technologies that underpin HBM generations, and a successful HBM4 ramp would strengthen its position against competitors in the memory market.

The implications extend beyond corporate competition. Easier access to HBM4 could accelerate the deployment of more powerful AI systems, lowering costs for data center operators and potentially spurring innovation in applications that demand extreme memory bandwidth. At the same time, increased compute capacity raises questions about energy consumption and the environmental footprint of large-scale AI training and inference. Improvements in HBM efficiency may mitigate some of those impacts, but broader industry attention to energy and lifecycle considerations will be needed.

Market watchers will be looking for two critical indicators in the months ahead: the pace at which Samsung converts wafer production into shipped HBM4 modules, and the proportion of that output allocated to major customers such as Nvidia. Those signals will indicate whether the announcement represents a tactical supply adjustment or the beginning of a substantial easing of the memory bottleneck that has shaped the AI hardware race.
