Share Your Story on Eating Better, Weight Loss, or Managing Health

AI chatbots prescribed teen dieters nearly 700 fewer daily calories than experts recommend, as misuse of AI health advice tops safety watchdogs' 2026 hazard list.

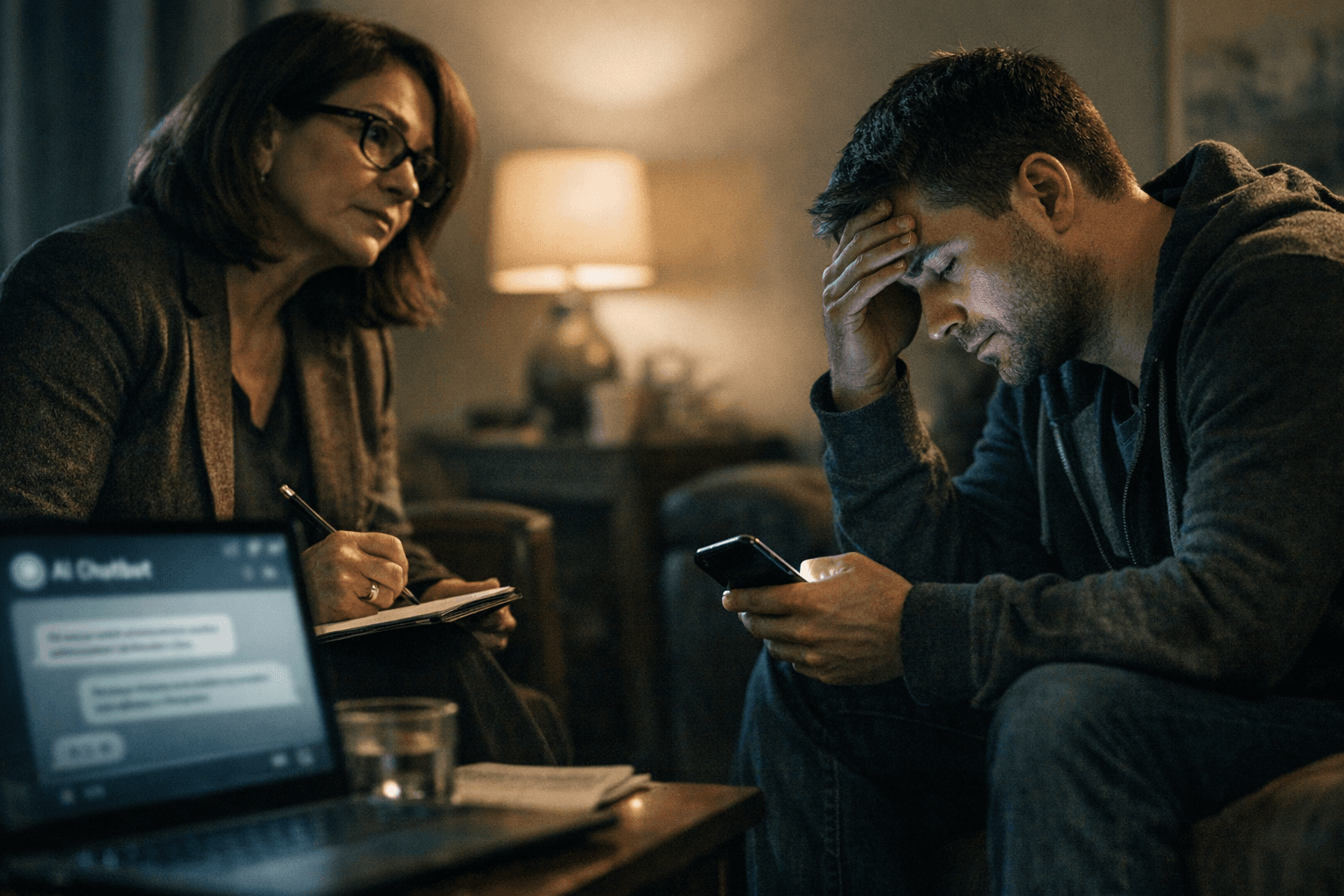

Every day, millions of people type their weight, their health conditions, and their dietary goals into AI chatbots and wait for a meal plan. What comes back sounds authoritative, precise, and personalized. A growing body of research suggests it can also be dangerously wrong.

A study published in Frontiers in Nutrition found that when five leading AI models were asked to generate a three-day weight-loss meal plan for a 15-year-old, the resulting plans averaged nearly 700 fewer calories per day than the levels recommended by human nutrition experts. The plans shared a common pattern: too low in calories and carbohydrates, and too heavy on protein and fat. A shortfall of 700 calories is roughly equivalent to cutting an entire main meal from a teenager's day, every day.

ECRI, an independent nonprofit patient safety organization, named the misuse of AI chatbots in healthcare the top health technology hazard of 2026 in its annual report released January 21. The organization warned that chatbots built on large language models, including ChatGPT, Claude, Copilot, Gemini, and Grok, are designed to provide answers through a human-sounding interface that can confuse users into putting too much faith in the response. Hallucinations, data drift, and other problems could lead to incorrect diagnoses, unnecessary or harmful recommendations, and promotion of unsafe practices.

Research flagged by the European Respiratory Society found that AI models produce inaccurate responses in up to 48% of cases and that these systems reduce click-through rates to actual medical websites by 40 to 60%, effectively replacing the process of consulting peer-reviewed sources. The concern is not merely that a calorie count is off. Duke University researcher Manisha Agrawal has highlighted a less obvious risk: answers that are technically correct but medically inappropriate because they lack clinical context. Large language models have a known tendency to please users, she explains, noting that "the objective is to provide an answer the user will like."

ECRI staff noted that chatbots are designed to keep users engaged rather than to challenge or correct flawed assumptions. The systems are built to sound definitive and lack any inclination to say "I'm not sure." That confidence becomes especially dangerous in nutrition, where a one-size-fits-all plan can interact with medications, chronic conditions, or eating disorder histories in ways no algorithm currently accounts for.

An April 2025 survey by the University of Pennsylvania's Annenberg Public Policy Center found that nearly eight in ten adults said they are likely to go online for answers about health symptoms, and nearly two-thirds rated AI-generated results as "somewhat or very reliable." That gap between perceived and actual reliability is precisely where harm takes root.

Researchers developing what is described as the world's first AI chatbot safety guide for people seeking health and nutrition advice say the guide will emphasize harm reduction: verifying chatbot answers against trusted health sources, watching for hallucinations and bias, and not relying on AI for every health decision. Dr. Joseph Alderman, an NIHR Clinical Lecturer, notes that "it is hard to know how often chatbots hallucinate, partly because some hallucinations are extremely obvious and others are more subtle."

That subtlety is the problem this investigation aims to crack open. If you have used an AI tool to manage a health condition, lose weight, or simply plan your meals, whether the experience helped or left you with a plan that felt wrong, we want to hear from you. Share the specific prompt you used, the advice you received, and what happened next. Accounts shared with us may be reviewed by a registered dietitian and used to illustrate what responsible guardrails for AI nutrition tools should look like.
