Spotify Launches Artist Profile Protection Tool to Block AI Music Fakes

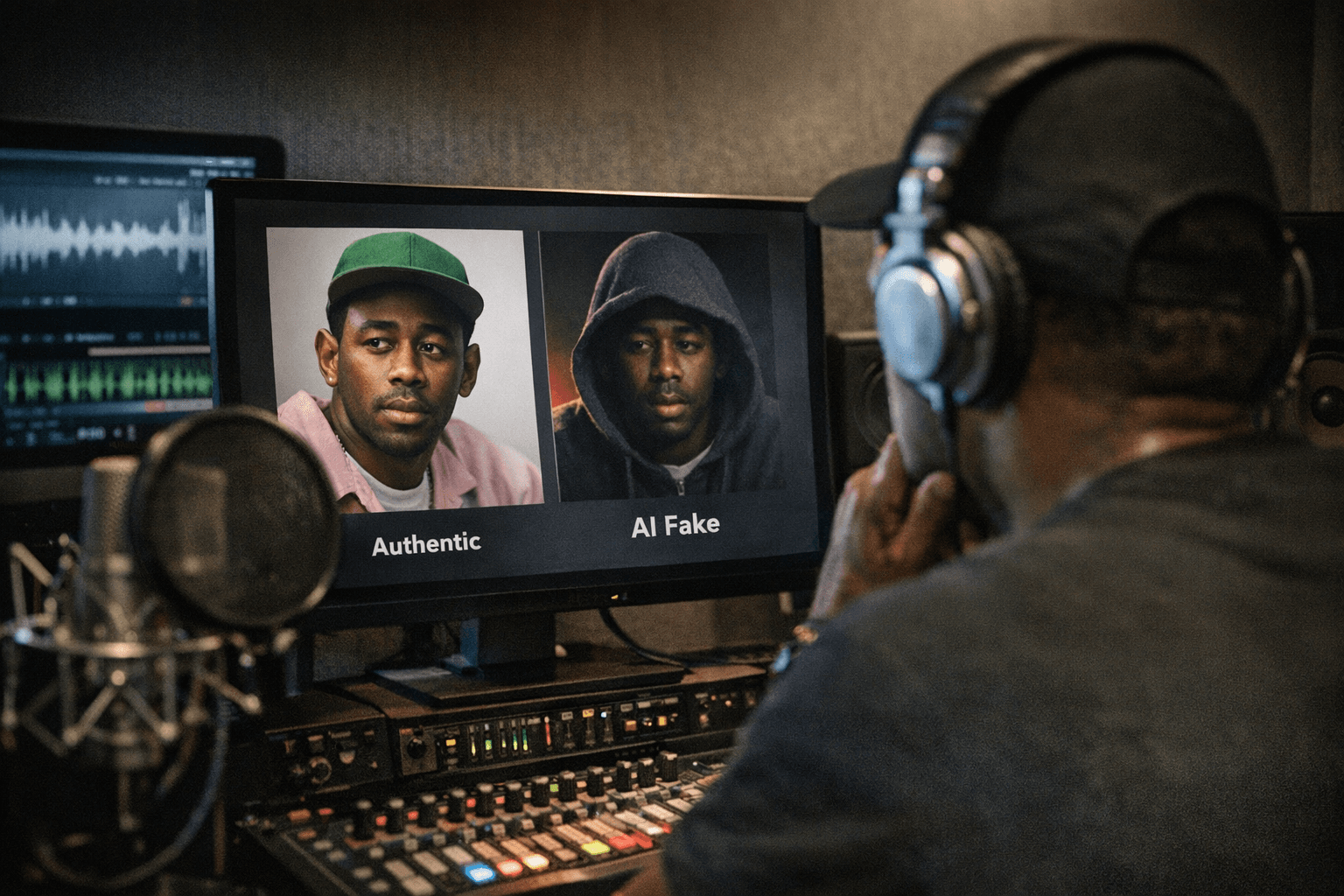

Spotify's new Artist Profile Protection lets musicians approve or block releases before they go live, after AI fakes briefly pushed a counterfeit Tyler, the Creator track to No. 2 on the platform.

A fake version of Tyler, the Creator's "Don't Tap the Glass" briefly held the No. 2 spot on Spotify, listed under the album's name before the record's official release. Father John Misty and Jeff Tweedy found AI-generated tracks attached to their profiles without consent. Now Spotify is giving artists a gate.

Spotify has announced Artist Profile Protection, a new opt-in system rolling out in beta on March 24 that puts artists in direct control over what new music appears under their name, adding an approval stage before eligible releases are uploaded to the platform.

Spotify framed the move in pointed terms: "Music has been landing on the wrong artist pages across streaming services, and the rise of easy-to-produce AI tracks has made the problem worse," the company said. "That's not the experience we want artists to have on Spotify, and that's why we've made protecting artist identity a top priority for 2026."

The mechanics are straightforward. Artists included in the beta will find the feature in their Spotify for Artists settings on desktop and mobile web. Once enabled, they receive an email notification when music is delivered to Spotify with their name attached, and can then approve or decline the request. Approved releases upload normally and contribute to artist stats and listener recommendations; declined releases, or those on which no action is taken, will not appear on the artist's Spotify profile, though they may still go live on other platforms. Spotify recommends notifying the distributor in those cases.

To avoid bottlenecking legitimate releases, Spotify assigns each enrolled artist what it calls an "artist key," a unique code musicians can share with trusted music distributors. When music is delivered to Spotify with the artist key attached, the release is automatically approved.
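The approval flow described above can be sketched in a few lines of Python. This is only an illustration of the rules as reported, not Spotify's implementation: the names `Delivery`, `Decision`, `review`, and `appears_on_profile` are hypothetical, as is the assumption that the artist key is compared as a plain string.

```python
from dataclasses import dataclass
from enum import Enum
from typing import Optional


class Decision(Enum):
    APPROVED = "approved"
    DECLINED = "declined"
    PENDING = "pending"  # the artist has not responded yet


@dataclass
class Delivery:
    """Hypothetical record of one release delivered with an artist's name attached."""
    artist_name: str
    title: str
    artist_key: Optional[str] = None  # supplied by a trusted distributor, if any


def review(delivery: Delivery, enrolled_key: str,
           artist_response: Optional[bool] = None) -> Decision:
    """Return the decision for one delivery to an enrolled artist's profile."""
    # A matching artist key means the release is approved automatically.
    if delivery.artist_key is not None and delivery.artist_key == enrolled_key:
        return Decision.APPROVED
    # Otherwise the artist's approve/decline choice decides the outcome.
    if artist_response is None:
        return Decision.PENDING
    return Decision.APPROVED if artist_response else Decision.DECLINED


def appears_on_profile(decision: Decision) -> bool:
    # Declined and unanswered deliveries are both kept off the profile.
    return decision is Decision.APPROVED
```

In this sketch, a delivery without the key stays pending until the artist answers, and a pending delivery is treated the same as a declined one when deciding what shows on the profile, which mirrors the blocked-by-default behavior Spotify describes.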

The feature has been designed to combat ongoing issues with misattributed releases, whether from metadata errors, artists sharing the same name, or "bad actors" who are "maliciously" attaching music to artists' profiles. Spotify is upfront that the feature "isn't necessary for every artist," saying it is "best for those who are comfortable very actively managing their catalog." Notably, if an artist fails to act on an incoming release, it is blocked by default, meaning legitimate releases could be delayed if an artist forgets to respond.

The scale of the broader problem is measurable. Rival platform Deezer recently disclosed that it was receiving approximately 60,000 fully AI-generated tracks per day, around 39% of all daily deliveries. A recent U.S. case, cited in coverage of the rollout, involved a guilty plea tied to AI-made tracks and bot-driven streams that generated fraudulent royalty payouts, illustrating how the volume of automated content can be weaponized for financial gain.
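Taken at face value, Deezer's two figures imply a total delivery volume. The quick calculation below is a back-of-the-envelope estimate that treats both reported numbers as exact, which they are not; the variable names are illustrative.

```python
# Figures as reported by Deezer; both are approximations.
ai_tracks_per_day = 60_000
ai_share_of_deliveries = 0.39

# If 60,000 tracks are 39% of deliveries, the total is 60,000 / 0.39.
total_deliveries_per_day = ai_tracks_per_day / ai_share_of_deliveries
other_tracks_per_day = total_deliveries_per_day - ai_tracks_per_day

print(f"Implied total deliveries per day: {total_deliveries_per_day:,.0f}")
print(f"Implied other deliveries per day: {other_tracks_per_day:,.0f}")
```

That works out to roughly 154,000 tracks delivered to Deezer each day, of which about 94,000 would not be fully AI-generated.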

Spotify describes Artist Profile Protection as a "first-of-its-kind" solution, offering artists a proactive layer of control on top of existing reporting tools. The company characterized the feature's relationship to its existing tools this way: "This new feature builds on our reporting tools already in place, giving you proactive review and reactive reporting, so you have the chance to act both before and after a release connects to your profile."

The contrast with competitors is deliberate. Unlike Apple Music's "Transparency Tags," which leave the responsibility of disclosing AI music to labels and distributors, Spotify's new tool tightens the screws on the approval process itself, taking direct aim at how fraudsters have been able to upload AI-generated music to impersonate bigger artists and steal royalties through streams.

The new pilot arrives a week after it emerged that Sony Music asked streaming platforms to take down more than 135,000 songs it says were created by fraudsters using generative AI to impersonate artists on its roster. Throughout the beta, Spotify said it will "collect feedback from artists using Artist Profile Protection and keep refining it before rolling it out to all artists as soon as we possibly can."
