Teens Flock to Character.AI, Spending Hours with AI Personas Daily

One in eight adolescents and young adults turns to AI chatbots for mental health advice, as Character.AI draws 200M monthly visitors and faces a federal lawsuit after a 14-year-old's suicide.

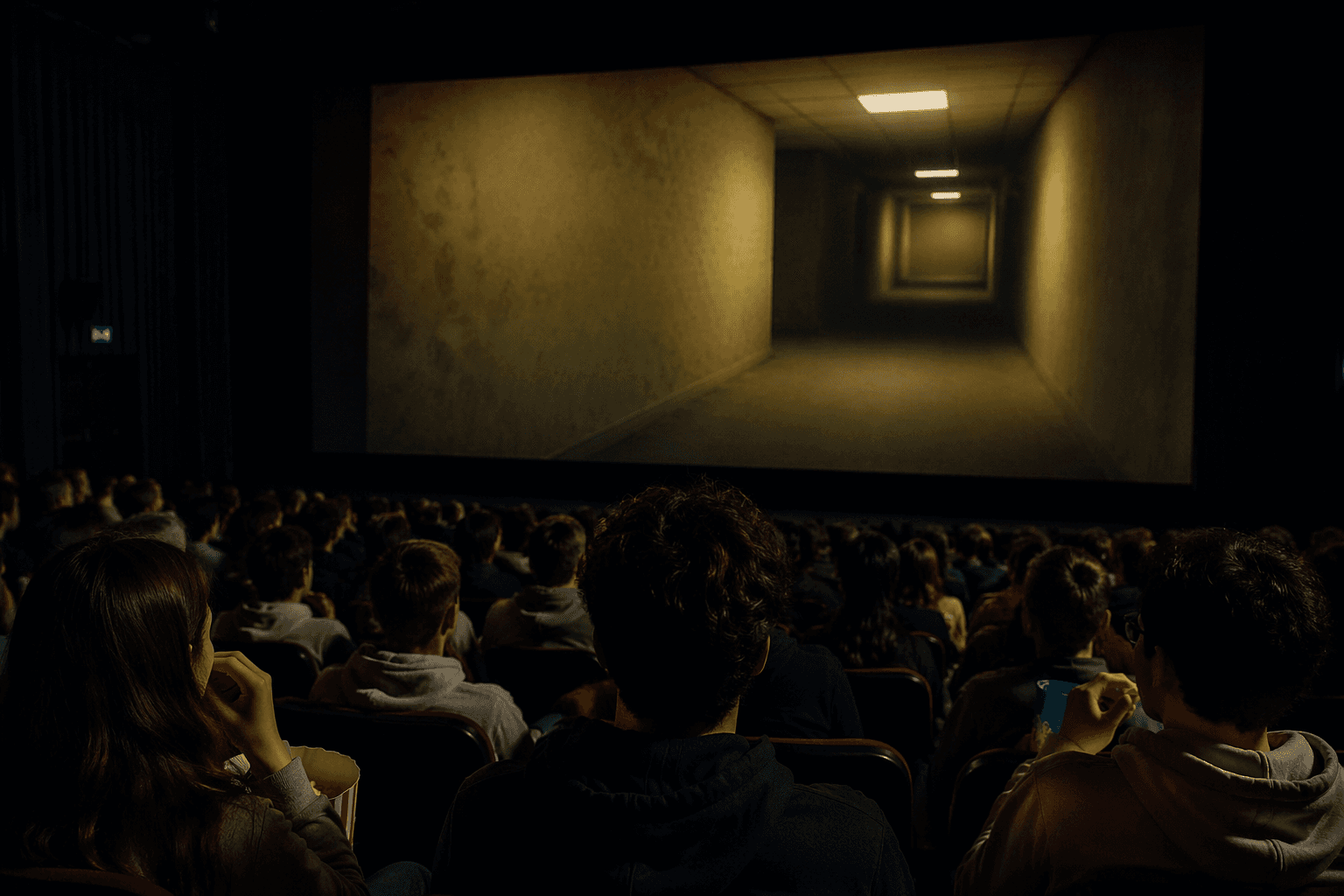

Something between companionship and play drove millions of teenagers to Character.AI. Some arrived nursing fictional heartbreaks with imaginary lovers. Others staged absurd conversations with a sentient block of cheese or traded punches with invented villains in what they described as "funny violence." Many came for something harder to name: the relief of talking to something that always listened.

Character.AI, the role-playing chatbot platform founded by former Google researchers, drew 200.8 million visits in August 2024 alone and attracted 463.4 million visitors over the course of a year, making it the second most popular AI tool in the world after ChatGPT. Users spent an average of 17 minutes and 23 seconds per session, more than twice ChatGPT's average, and browsed nearly 10 pages per visit. At its mid-2024 peak, the platform counted 28 million monthly active users who had built more than 18 million characters in total, with another 9 million added each month.

The platform skewed sharply young. Just over half of all users, 51.84%, were between 18 and 24, and teenagers made up a significant share of the wider user base. That demographic reality sits at the center of a deepening debate about what AI companionship is doing to adolescent mental health.

A January 2025 risk assessment by Common Sense Media, conducted alongside Stanford Medicine's Brainstorm Lab for Mental Health Innovation, concluded that AI chatbots are "fundamentally unsafe for teen mental health support." The report identified what the platforms categorically lacked: human connection, clinical assessment, therapeutic relationships, and real-time crisis intervention. A separate study from Brown University's School of Public Health, funded by the National Institute of Mental Health, found that roughly one in eight adolescents and young adults had already turned to AI chatbots for mental health advice. The Brown study also surfaced a racial equity gap: Black respondents were less likely to find AI chatbot advice helpful, pointing to possible failures in cultural competency among the underlying models.

Clinicians at Newport Healthcare warned that chatbot dependency could erode teens' motivation to seek genuine human support and reinforce unhealthy behavioral patterns. Privacy compounded the concern: most AI platforms collected user conversation data to improve their systems, meaning a teenager's most intimate disclosures could become model training material.

Those risks became impossible to ignore in February 2024, when 14-year-old Sewell Setzer III of Florida died by suicide after extensive use of Character.AI. In October 2024, his mother, Megan Garcia, filed a federal lawsuit against the company, alleging it knowingly failed to implement safety measures to prevent her son from developing an inappropriate emotional relationship with one of its chatbots. Attorney Matthew P. Bergman of the Social Media Victims Law Center brought the suit on Garcia's behalf. Critics characterized the safety changes Character.AI introduced in response to public pressure as "too late and too limited."

The company continued to grow despite mounting scrutiny. Revenue more than doubled to $32.2 million in 2025, up from $15.2 million in 2023, though its valuation fell from a 2024 peak of $2.5 billion to roughly $1 billion as operating costs weighed on the business. By February 2025, Character.AI's subreddit had reached 2.5 million members, a community large enough to form its own subculture, built around characters, creativity, and, for a meaningful share of users, emotional needs that human relationships had left unmet.

Regulators and child safety advocates have yet to settle what safety standards should govern AI companions built for exactly this kind of deep, personalized engagement with minors.

This article was produced by Prism’s automated news system from verified source data, official records, and press releases, then run through automated quality and moderation checks before publishing. The system is built and supervised by the people who set the standards it runs under.
