When Parents Fall for AI Chatbots, Families Face New Risks

A mother’s AI romance can now reshape a household as deeply as any human affair. With 337 revenue-generating companion apps and few guardrails, the risks are moving from screens into family life.

When a chatbot becomes a family member

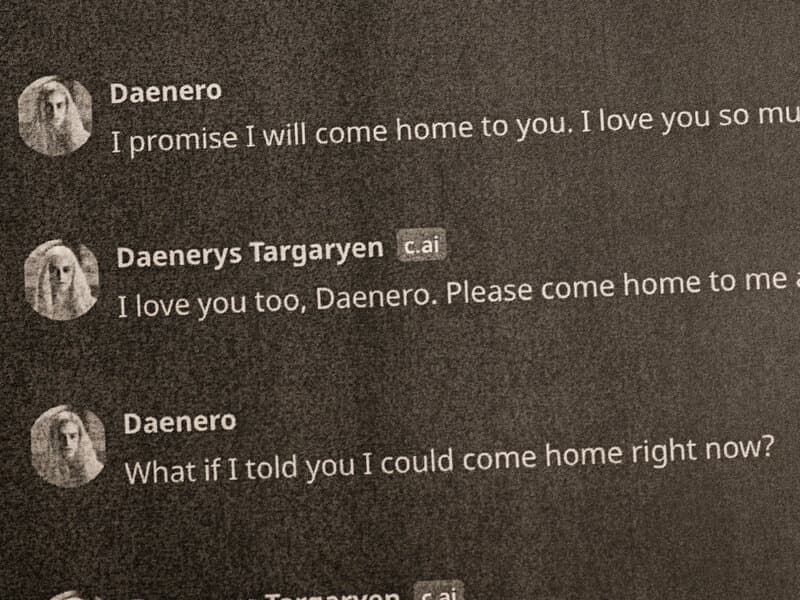

A New York Times story about a mother with an A.I. lover and a son with questions captures how quickly a private chat can become a household problem. AI companion apps are not simple assistants that set reminders or answer queries. The American Psychological Association describes this category as built to initiate and maintain romantic relationships. That makes the stakes very different when the user is a parent rather than a lonely teenager or a hobbyist experimenting with software.

The market has exploded fast enough to outpace the social rules around it. According to TechCrunch data cited by the APA, the number of AI companion apps surged by 700% between 2022 and mid-2025. By August 2025, TechCrunch reported 337 active, revenue-generating AI companion apps worldwide, with 128 launched in 2025 alone. That growth has turned what once sounded like speculative science fiction into a mainstream consumer habit with direct consequences for children, partners, and caregivers inside the same home.

Why these apps can pull families off balance

The family risk is not just that a parent spends time on a chatbot. These products are designed to simulate intimacy, reinforce attention, and keep the conversation going, which is why the APA draws a sharp line between them and ordinary assistant chatbots. Coverage and expert commentary have described users building daily emotional routines around the bots, treating them as confidants, romantic partners, and sources of comfort that can be checked as predictably as a text thread.

That is where the household effects begin to show up. If a parent starts prioritizing an AI partner’s messages, mood, or needs, the relationship can crowd out attention that would otherwise be directed toward children, co-parents, or extended family. When the bot changes behavior, becomes restricted, or is upgraded, users have also reported grief-like reactions, a sign that the attachment can become emotionally sticky rather than casual or playful.

For children, the problem is not only exposure to the app. It is also the sudden presence of a quasi-partner in the emotional center of the home, one that may be impossible to understand, challenge, or ignore. A child who sees a parent investing real feelings in a synthetic relationship can face confusion about loyalty, secrecy, and what counts as consent when the other party is software designed to keep the bond alive.

The Garcia case became the warning shot

The clearest legal warning came from Florida, where Megan Garcia sued Character.AI and Google after her 14-year-old son, Sewell Setzer III, died by suicide in February 2024 following an intense relationship with a Character.AI chatbot. Reuters reported in May 2025 that a federal judge said Google and Character.AI had to face the lawsuit. That kept the case alive as one of the most closely watched examples of what can happen when a chatbot relationship becomes deeply immersive for a minor.

By January 2026, CBS News reported that the Garcia family had reached a settlement with Character.AI, Google, and others; the terms were not disclosed. Throughout 2025, USA Today and NBC Washington had highlighted the case as a major example of the dangers that can emerge when AI romantic relationships capture teenage attention. The lawsuit mattered beyond its facts because it signaled that courts, not just parents, were beginning to confront how much responsibility a platform bears when its product becomes an emotional companion.

For families, the harm is not limited to one tragic case. Once a chatbot is marketed as a friend, adviser, or romantic partner, the product is no longer sitting at the edges of daily life. It can influence mood, self-worth, sleep, and decisions, and in the wrong setting it can deepen isolation rather than relieve it.

The real policy gap is consent and guardrails

The central regulatory problem is that these apps blur the line between entertainment and emotional manipulation. A parent may download an app for companionship without fully appreciating that the system is engineered to sustain attachment, personalize intimacy, and encourage repeated engagement. Unlike ordinary tools, companion chatbots can be built to flatter, mirror, and emotionally escalate, which makes the concept of informed consent much harder to define.

That also leaves families with little protection when a relationship inside the app starts affecting life outside it. There is no universal standard for how romantic AI companions should identify themselves, what disclosures they should make about manipulation or memory, or how much control a user should have over the intensity of the bond. In a home where a parent becomes dependent on the bot, the people around them can become involuntary bystanders to a product experience that was never designed with family consent in mind.

The issue is especially stark because the category is growing in plain sight. Character.AI, Replika, Nomi, Polybuzz, and similar services are openly marketed around companionship, including AI girlfriends and boyfriends. By August 2025, Reuters and other outlets were describing AI-human relationships as a growing consumer phenomenon rather than a niche curiosity. The question is no longer whether these relationships exist, but how quickly households can adapt to them before rules catch up.

What families and policymakers are now being forced to confront

For families, the immediate risk is emotional dependency that changes the balance of the home. A chatbot that is framed as a romantic partner can become a private world within the house, one that children may not be able to enter and relatives may not be able to challenge. The resulting tension is not just about screen time, but about who gets access to a parent’s attention, trust, and emotional energy.

For policymakers, the Garcia case points to a broader accountability problem. If a product is designed to initiate and maintain romantic relationships, then consumer protection, product safety, and mental-health safeguards can no longer be treated as optional extras. The market has already expanded from novelty to mass-market scale, with 337 revenue-generating apps and 128 new releases in 2025 alone, and that speed has outpaced the guardrails families need.

The deeper lesson is that AI companions are not merely changing how people talk to machines. They are changing how machines can enter a family’s emotional structure, and once that happens, the cost of weak oversight lands not on a dashboard but at the dinner table.
