YouTube expands likeness detection to help celebrities find deepfakes

YouTube is letting celebrities search for AI fakes of their faces, but the tool still leaves ordinary users exposed and removal far from automatic.

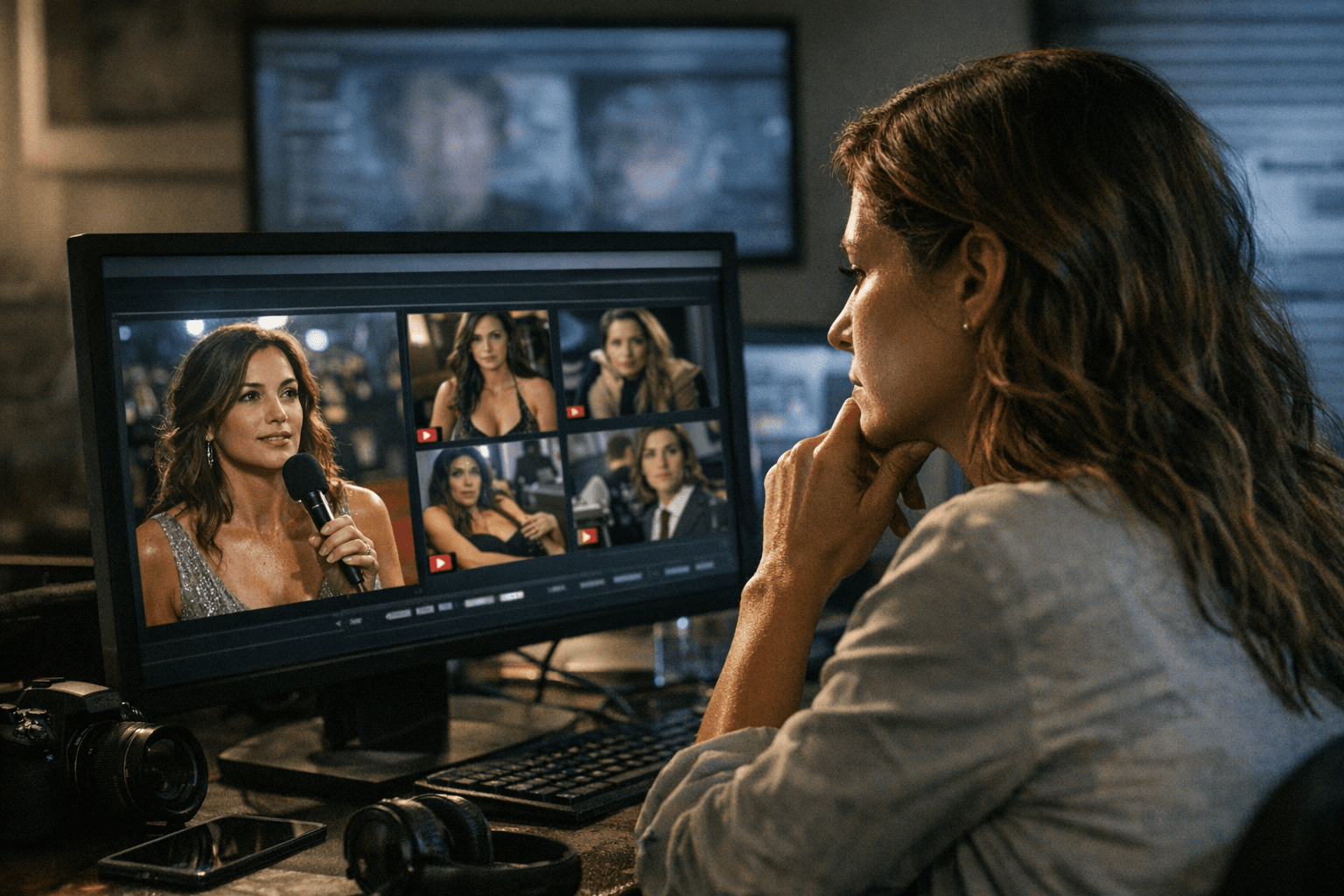

YouTube is widening access to its likeness detection system for the entertainment industry, giving celebrities and their representatives a way to search for AI-generated videos that imitate their faces. The move puts YouTube squarely in the middle of a fast-growing deepfake problem, but it also exposes the limits of platform self-policing: the new protection is built for people with managers, agencies and public profiles, not for the far larger pool of ordinary users whose images can also be cloned and circulated without consent.

The company said on April 21, 2026, that talent agencies, management companies and the celebrities they represent can now use the tool. YouTube has framed the feature as similar to Content ID, but instead of matching protected audio or video, it scans for facial likenesses. Once a person verifies their identity and enrolls, the system can surface AI-generated videos that appear to use that person’s image. The person or their representative can then review the material and choose whether to request removal under privacy rules, file a copyright-related request or leave it alone.

That flexibility is deliberate. YouTube has said detection does not guarantee removal, and that parody and satire can be preserved after review. In other words, the company is trying to separate obvious frauds, scam ads and impersonations from legitimate commentary or comedic use. But the policy also underscores a central weakness of the platform’s approach: the burden of review still falls on the person being copied, and the system depends on users knowing they have been targeted in the first place.

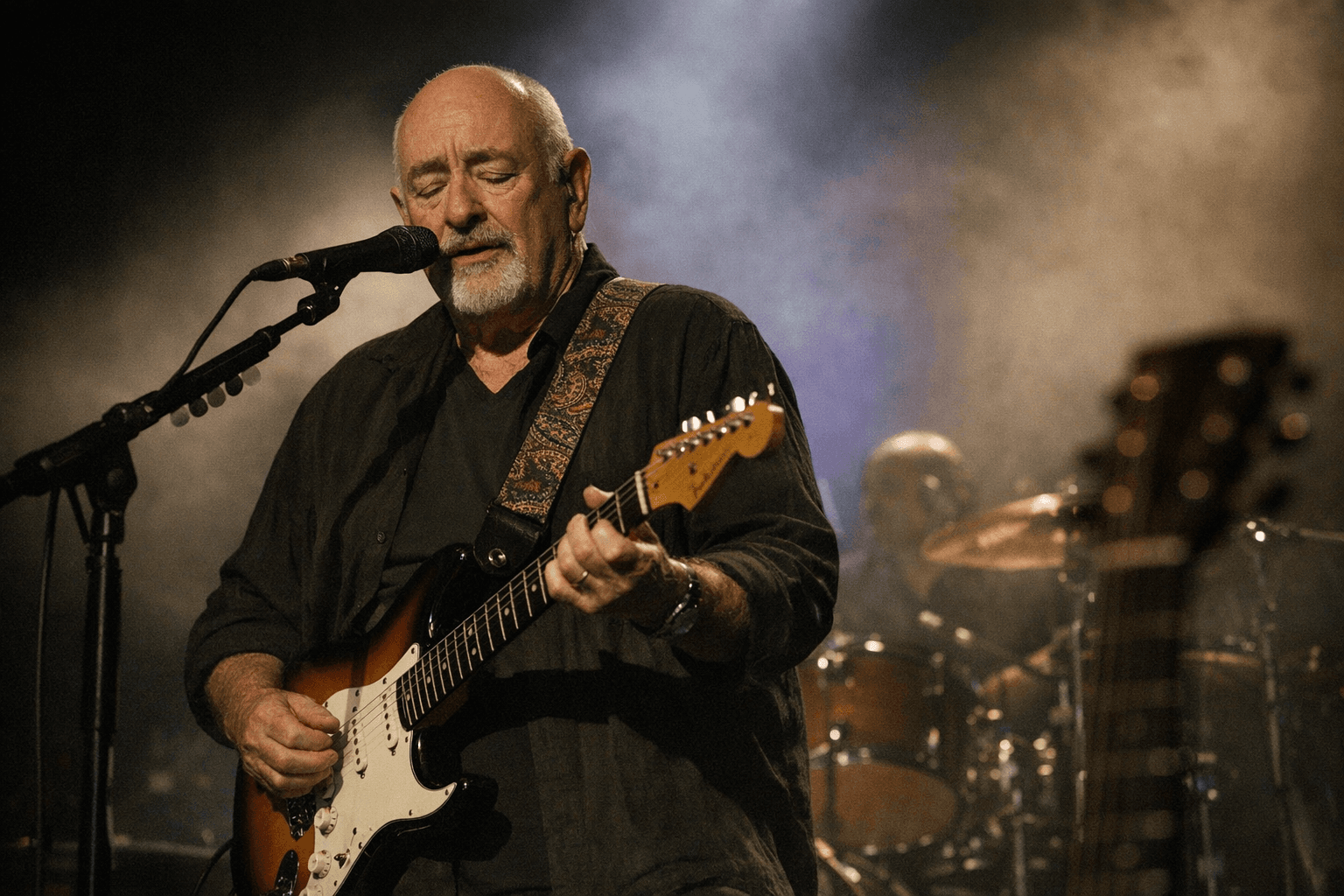

The rollout extends a program YouTube first launched for creators in the YouTube Partner Program (YPP), then expanded in open beta to all YPP creators. In March 2026, the company broadened likeness detection again to a pilot group of government officials, journalists and political candidates. YouTube had previously said it would test the technology with celebrity talent represented by Creative Artists Agency (CAA), including award-winning actors and top athletes from the NBA and NFL, and it has now expanded that work to agencies including CAA, UTA, WME and Untitled Management.

The broader stakes are growing with the rise of synthetic media that can mimic voices, faces and endorsements with alarming realism. YouTube has said it supports the NO FAKES Act in the United States and has updated its privacy process so people can request removal of altered or synthetic content that simulates their likeness. For Hollywood names and public officials, that looks like a meaningful rights tool. For everyone else, it is a reminder that the platforms most capable of amplifying deepfakes are still the ones deciding how selectively to police them.
