SEO teams learn to control AI bots, not just track them

SEO is moving from tracking bots to governing them, and agencies now need policies for which AI crawlers to welcome, throttle, or shut out.

From visibility to control

AI search has pushed SEO past the old question of whether a crawler can find a page. The sharper question now is who gets in, what they can take, and how much strain they are allowed to place on the site while they do it. That shift turns crawler management into an agency governance issue, not just a technical one, and it gives SEO teams a new kind of advisory work to sell.

The practical divide is simple but consequential. On one side, teams want to make content easy for AI systems to crawl when inclusion matters. On the other, they need ways to block or slow crawlers they would rather keep out. The result is a much broader operating model, where robots directives sit alongside JavaScript rendering, page-load performance, and overall site speed.

The policy question every client now has to answer

The first job is not technical; it is editorial and commercial. Agencies need to help clients decide which crawlers should be welcomed, which should be rate-limited, and which should be denied outright. That choice depends on content sensitivity, server resources, legal concerns, and how much AI visibility the client actually wants.

This is where the work becomes billable in a new way. Instead of treating AI bots as a vague trend, agencies can package an audit and policy framework around crawl logs, visibility controls, and access rules. For clients with large content libraries or heavy traffic, that framework becomes a governance layer that protects revenue, preserves performance, and reduces risk.

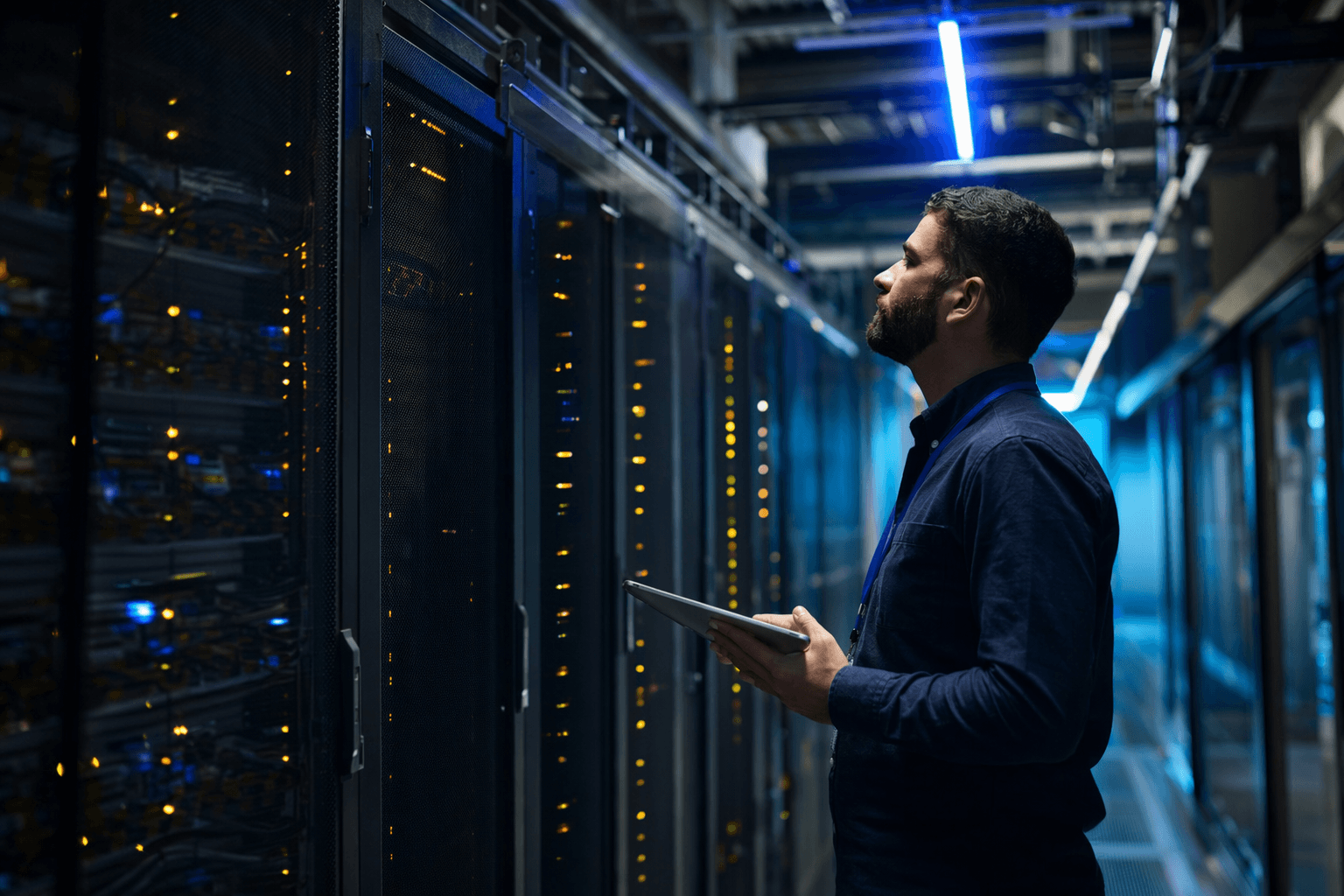

What to audit before changing a single rule

A solid AI-bot policy starts with a crawl audit. Agencies should map which bots are visiting, what pages they request, how often they return, and whether the site is already showing signs of strain. That inventory should also separate high-value pages from sensitive ones, because a single site may need different treatment for product pages, editorial archives, gated assets, and ad-heavy sections.
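As a rough illustration of that inventory step, the sketch below counts requests per AI bot and per path from access-log lines. It assumes the server writes the common "combined" log format and that bots identify themselves honestly in the user-agent string, which can be spoofed. The token list, the `audit` function, and the sample lines are illustrative, not taken from any vendor's tooling.

```python
import re
from collections import Counter

# Assumed user-agent substrings; extend the list from each vendor's documentation.
AI_BOT_TOKENS = ("GPTBot", "OAI-SearchBot")

# Combined log format:
# ip - - [time] "METHOD path protocol" status bytes "referer" "user-agent"
LOG_LINE = re.compile(
    r'^\S+ \S+ \S+ \[[^\]]+\] "(?P<method>\S+) (?P<path>\S+) [^"]*" '
    r'(?P<status>\d{3}) \S+ "[^"]*" "(?P<agent>[^"]*)"'
)


def audit(lines):
    """Return (requests per bot, requests per (bot, path)) for known AI bots."""
    per_bot = Counter()
    per_path = Counter()
    for line in lines:
        match = LOG_LINE.match(line)
        if not match:
            continue  # skip lines that are not in the expected format
        agent = match.group("agent")
        for token in AI_BOT_TOKENS:
            if token in agent:
                per_bot[token] += 1
                per_path[(token, match.group("path"))] += 1
                break
    return per_bot, per_path


# Made-up sample lines using documentation-range IP addresses.
SAMPLE = [
    '203.0.113.5 - - [01/Jan/2025:00:00:01 +0000] "GET /products/a HTTP/1.1" 200 512 "-" "Mozilla/5.0 (compatible; GPTBot/1.0)"',
    '203.0.113.5 - - [01/Jan/2025:00:00:02 +0000] "GET /archive/b HTTP/1.1" 200 900 "-" "Mozilla/5.0 (compatible; GPTBot/1.0)"',
    '203.0.113.9 - - [01/Jan/2025:00:00:03 +0000] "GET /products/a HTTP/1.1" 200 512 "-" "Mozilla/5.0 (compatible; OAI-SearchBot/1.0)"',
    '198.51.100.7 - - [01/Jan/2025:00:00:04 +0000] "GET / HTTP/1.1" 200 128 "-" "Mozilla/5.0 (X11; Linux x86_64)"',
]

if __name__ == "__main__":
    bots, paths = audit(SAMPLE)
    for bot, count in bots.most_common():
        print(bot, count)
```

Grouping the per-path counts by content type (product pages, archives, gated assets) is the natural next step, since that is the split the policy will be written against.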

Then comes the infrastructure check. AI crawlability is no longer only about a robots file, because rendering and speed now shape whether a crawler can actually see and process the content. If JavaScript blocks key text, if pages load too slowly, or if server capacity is thin, even a permissive policy can fail in practice.
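One quick way to test the JavaScript point is to check whether key phrases appear in the raw HTML, before any script runs. The sketch below assumes the crawler in question does not execute JavaScript, which varies by crawler and should be verified rather than taken for granted. The `missing_phrases` helper and the sample markup are hypothetical.

```python
from html.parser import HTMLParser


class VisibleText(HTMLParser):
    """Collect text nodes, ignoring anything inside <script> or <style>."""

    def __init__(self):
        super().__init__()
        self._skip_depth = 0
        self.chunks = []

    def handle_starttag(self, tag, attrs):
        if tag in ("script", "style"):
            self._skip_depth += 1

    def handle_endtag(self, tag):
        if tag in ("script", "style") and self._skip_depth:
            self._skip_depth -= 1

    def handle_data(self, data):
        if not self._skip_depth:
            self.chunks.append(data)


def missing_phrases(raw_html, phrases):
    """Return the phrases that do not appear in the unrendered page text."""
    parser = VisibleText()
    parser.feed(raw_html)
    parser.close()
    # Collapse whitespace so phrases still match across line breaks.
    text = " ".join(" ".join(parser.chunks).split()).lower()
    return [phrase for phrase in phrases if phrase.lower() not in text]


# Hypothetical page: the price is only injected by client-side script.
SAMPLE_HTML = (
    "<html><body><h1>Blue Widget</h1><div id='app'></div>"
    "<script>render('Price: 19 USD')</script></body></html>"
)

if __name__ == "__main__":
    print(missing_phrases(SAMPLE_HTML, ["Blue Widget", "Price: 19 USD"]))
```

Any phrase this returns is content that a non-rendering crawler would likely miss, whatever the robots rules say.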

A workable decision framework for agencies

A clean way to structure the advice is to classify every crawler and every content type before assigning a rule.

1. Identify the business goal for each content group.

Some pages should be discoverable and reusable, while others exist to support revenue or must remain tightly controlled.

2. Match the bot to the value of the page.

A crawler that supports search visibility may be welcome on indexable content, while a bot built to ingest material for broader reuse may need restrictions.

3. Choose the control level.

Allow it, limit it, or block it. If the site is under pressure, rate limiting can be a middle ground between openness and refusal.

4. Validate the result in logs and reports.

A policy is only useful if the team can see whether crawlers obey it, whether requests dropped, and whether the site became faster or safer after the change.

That structure also helps agencies explain tradeoffs to clients who may not realize that AI visibility and AI control are now two sides of the same negotiation.
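The four steps above can be captured as a small lookup table before anything is deployed. This is a minimal sketch, not a recommended policy: the content groups, the individual rules, and the fallback order (explicit rule, then group default, then a cautious global default) are all assumptions chosen for illustration.

```python
from enum import Enum


class Action(Enum):
    ALLOW = "allow"
    LIMIT = "limit"
    BLOCK = "block"


# Hypothetical rules keyed by (crawler, content group).
POLICY = {
    ("OAI-SearchBot", "product"): Action.ALLOW,
    ("OAI-SearchBot", "editorial"): Action.ALLOW,
    ("GPTBot", "product"): Action.LIMIT,
    ("GPTBot", "gated"): Action.BLOCK,
}

# Per-group fallbacks for crawlers with no explicit rule.
GROUP_DEFAULTS = {"gated": Action.BLOCK}

# Assumed global fallback: rate-limit anything not yet classified.
DEFAULT_ACTION = Action.LIMIT


def decide(bot, content_group):
    """Resolve the action: explicit rule, then group default, then global default."""
    explicit = POLICY.get((bot, content_group))
    if explicit is not None:
        return explicit
    return GROUP_DEFAULTS.get(content_group, DEFAULT_ACTION)


if __name__ == "__main__":
    for bot, group in [("GPTBot", "product"), ("UnknownBot", "gated")]:
        print(bot, group, decide(bot, group).value)
```

Keeping the table in one place also gives the client a document to sign off on, separate from whichever robots.txt or firewall rules eventually enforce it.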

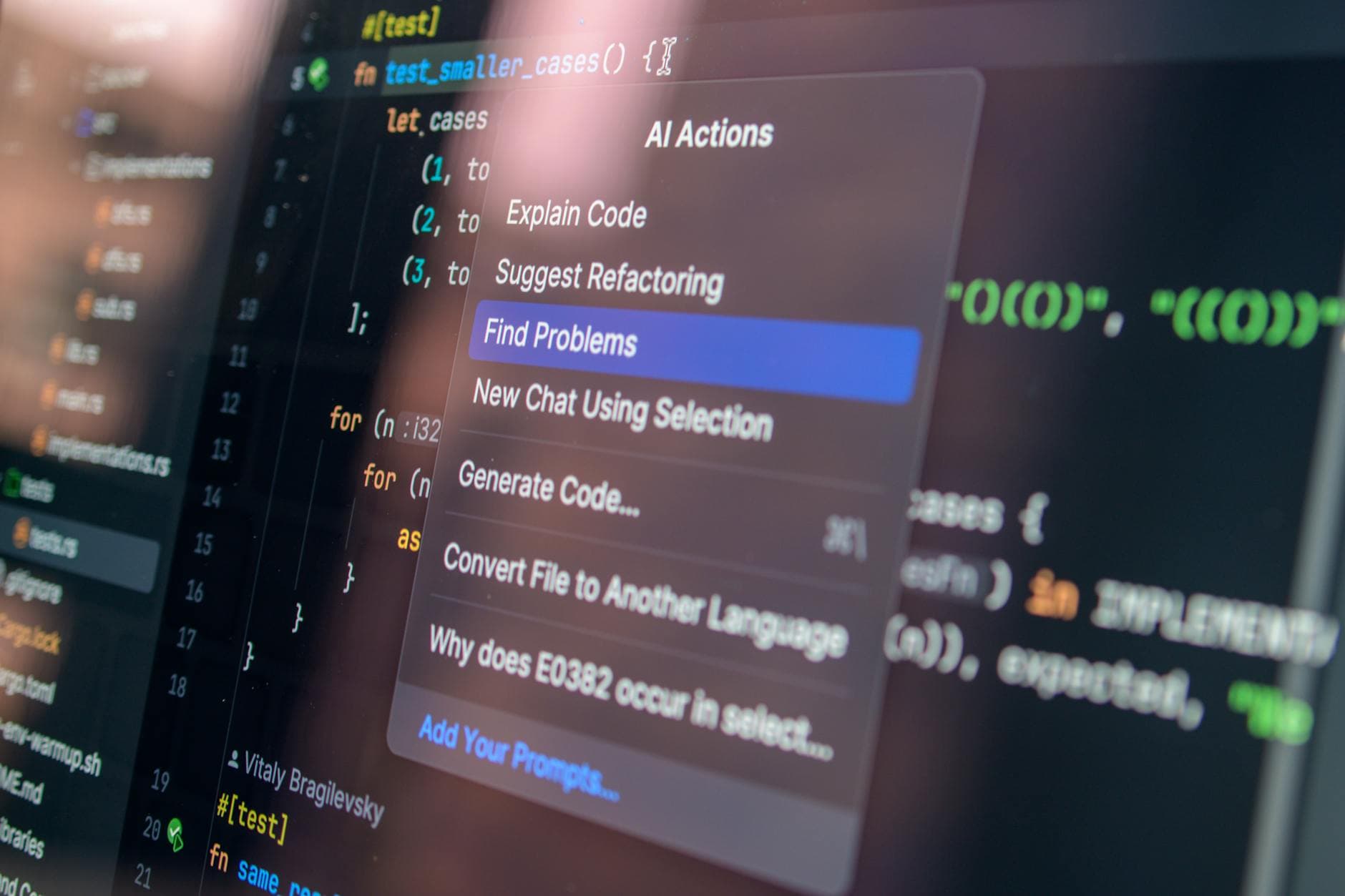

What the major platform players actually allow

Google’s guidance keeps the basics grounded. It describes robots.txt as primarily a way to manage crawler traffic, one that can help keep a server from being overwhelmed by requests. Google also says its automated crawlers support the Robots Exclusion Protocol and always obey robots.txt rules on automatic crawls.

OpenAI has taken a similar but more granular approach. Its crawler documentation says it uses OAI-SearchBot and GPTBot robots.txt tags so webmasters can manage how sites and content work with AI. The key detail for agencies is that each setting is independent, so a webmaster can allow one bot while blocking another.

That independence matters in client conversations. It means AI policy is not a single on or off switch. It is a matrix of permissions that can be tuned by use case, platform, and risk tolerance.
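To make the matrix concrete, here is a sketch of a robots.txt that welcomes one OpenAI crawler and refuses the other, checked with Python's standard `urllib.robotparser`. The rules and paths are examples only, and the parser shows what a compliant crawler should conclude, not what any given crawler actually does.

```python
from urllib.robotparser import RobotFileParser

# Example policy: allow the search crawler, refuse the other, and keep
# every remaining crawler out of a hypothetical /private/ section.
ROBOTS_TXT = """\
User-agent: OAI-SearchBot
Allow: /

User-agent: GPTBot
Disallow: /

User-agent: *
Disallow: /private/
"""

parser = RobotFileParser()
parser.parse(ROBOTS_TXT.splitlines())


def allowed(bot, path):
    """Return True if the parsed rules permit this user agent to fetch the path."""
    return parser.can_fetch(bot, path)


if __name__ == "__main__":
    for bot in ("OAI-SearchBot", "GPTBot", "SomeOtherBot"):
        print(bot, allowed(bot, "/articles/x"), allowed(bot, "/private/report"))
```

Running a check like this against the live file after every change is a cheap guard against a rule that silently blocks the crawler the client wanted to keep.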

Cloudflare turns the policy into an operating layer

Cloudflare has pushed the category further by turning crawler control into a productized workflow. Its AI Crawl Control can show which AI services access content, monitor crawler activity and request patterns, set allow and block rules for individual crawlers, and track robots.txt compliance. It can also block AI bots on all pages or only on hostnames with ads.

For agencies, the most useful part may be how that control sits inside a broader security and traffic stack. Cloudflare says bot-management customers can apply custom rules before the Block AI bots rule, which means policy can be staged rather than bluntly enforced. Cloudflare also says AI Crawl Control works with WAF custom rules that can enforce robots.txt, and that the feature is available on all Cloudflare plans.

When denial gives way to pricing

The newest twist is that some clients no longer want only a wall. They want a market. Cloudflare’s pay-per-crawl system uses HTTP 402 Payment Required responses, with Cloudflare acting as merchant of record. Cloudflare also says paid customers can receive customizable 402 responses, and that its customers are already sending over one billion 402 response codes on an average day.

That changes the agency conversation. Once a site can block, throttle, or monetize AI access, the question is no longer simply whether a crawler can read a page. It becomes a legal, revenue, and infrastructure decision about whether an AI system may read, reuse, or pay for that content.

Reporting that clients will actually understand

The reporting layer should reflect the policy layer. Clients do not just need to know how many bots arrived. They need to know which bots were allowed, which were blocked, which were slowed, and what those choices did to server load, ad inventory, and search visibility. A good report ties crawler behavior to business outcomes, not just raw access counts.
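A report along those lines can start from a simple tally of outcomes per bot. The sketch below assumes the site signals a block with HTTP 403, throttling with 429, and a payment request with 402; that mapping is a convention chosen here for illustration, and a real deployment may signal these differently. The record format and function names are hypothetical.

```python
from collections import Counter, defaultdict


def outcome(status):
    """Map an HTTP status code to a policy outcome (assumed convention)."""
    if status == 402:
        return "payment_required"
    if status == 403:
        return "blocked"
    if status == 429:
        return "slowed"
    if 200 <= status < 400:
        return "allowed"
    return "other"


def summarise(records):
    """Count outcomes per bot from (bot, status) pairs."""
    report = defaultdict(Counter)
    for bot, status in records:
        report[bot][outcome(status)] += 1
    return {bot: dict(counts) for bot, counts in report.items()}


# Made-up records, for example produced by a log audit.
SAMPLE_RECORDS = [
    ("OAI-SearchBot", 200),
    ("OAI-SearchBot", 304),
    ("GPTBot", 403),
    ("GPTBot", 429),
    ("GPTBot", 429),
    ("GPTBot", 402),
]

if __name__ == "__main__":
    for bot, counts in summarise(SAMPLE_RECORDS).items():
        print(bot, counts)
```

Joining these counts with server-load and revenue figures for the same period is what turns the tally into the business-outcome view clients need.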

That is the agency opportunity hiding inside the AI-search era. The teams that can audit bots, explain tradeoffs, and maintain policy across robots.txt, WAF rules, and crawler controls will not just protect sites. They will help clients decide what their content is for, who gets to use it, and what price, if any, should come with that access.
