SEO’s Hidden Layer: Why Annotation Now Shapes Search Visibility

AI can mislabel a page before it ranks, and that hidden annotation layer is now a visibility risk agencies can’t ignore.

The hidden failure mode agencies keep missing

The most dangerous search problem today is not a bad ranking, but a wrong label. Jason Barnard’s argument is blunt: AI systems can decide what a page means before they ever decide where it belongs, and if that first interpretation is off, visibility can quietly collapse even when the usual SEO signals look fine.

One misattribution shows how this plays out. Google briefly attributed two Search Engine Land articles written by Barry Schwartz to Barnard, a reminder that a bot can misread authorship when page signals are unclear. In practical terms, a page may be crawled, indexed, and technically healthy, yet still be understood through the wrong entity, the wrong author, or the wrong topic.

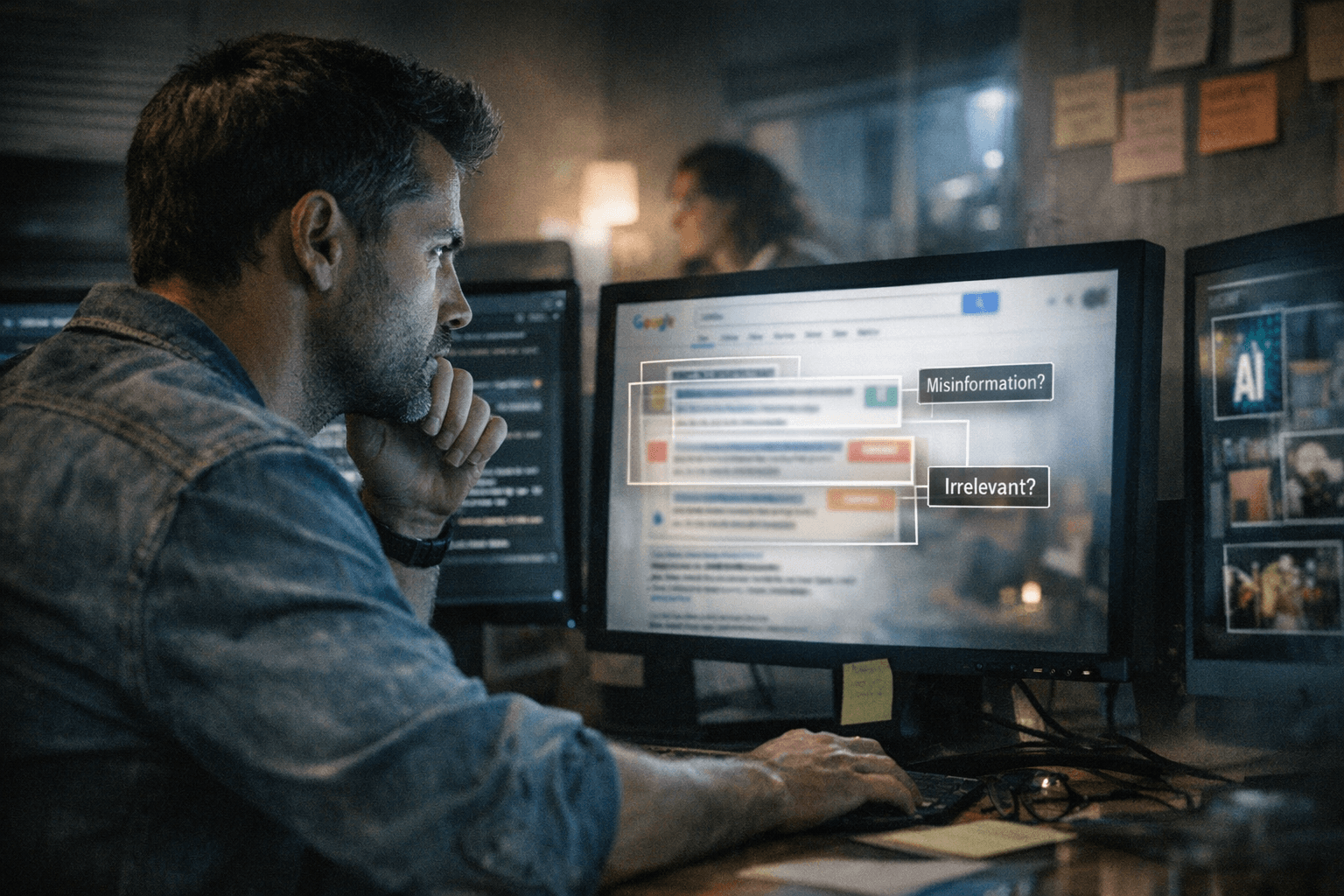

Why annotation is the new diagnostic layer

Barnard’s core point is that annotation sits after indexing and before the rest of the machine’s decisions. It acts like a confidence-based tagging layer that shapes retrieval, display, and answer generation. The system is not only asking, “Can I find this page?” It is also asking, “What is this page about, who made it, and when should I trust it?”

That shift changes the agency workflow. A content issue is no longer just a keyword gap or a ranking dip. It can be a meaning problem, where the machine misunderstands the brand, the author, the product, the pricing, the audience, or the authority signal before a user ever sees the result.

For agencies, this is the new diagnostic layer to watch when clients say, “we rank, but AI still gets us wrong.” If the annotation layer is off, every downstream surface that depends on machine understanding can drift with it, from snippets to summaries to recommendation-style results.

What Google says about meaning

Google’s own documentation reinforces the idea that meaning is not left to guesswork. Search Central says structured data gives explicit clues about page meaning and helps Search understand content, including information about people, books, and companies. That is a direct signal that the machine-readable layer is not an accessory. It is part of how the system interprets identity and intent.

Google also says its Knowledge Graph contains billions of facts about people, places, and things, and that many of those facts come from public sources and from content owners. Because Google processes billions of searches per day, it relies on automation, which explains why errors can happen at scale and why the system needs constant correction through feedback and suggested changes to knowledge panels.

That context matters for agencies because it shows where misclassification lives. If the Knowledge Graph and related systems are built from structured inputs, public signals, and owner-provided data, then clarity is not cosmetic. It is operational.

How to make a site machine-legible

The practical response is not to write more content and hope for the best. It is to make the entity story unmistakable across the site. That means the technical team and the content team have to work together on the signals that help machines interpret meaning, not just extract text.

The first priority is entity consistency. Brand names, product names, author names, and organizational references need to match across pages, bios, schema, and external profiles. If one page implies one relationship and another page implies something else, the annotation layer has to choose, and that choice is where errors start.
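A minimal sketch of what an entity-consistency check could look like, in Python. The function names and the idea of collecting names into a source-to-name mapping are assumptions made for illustration, not a method described by Barnard or Google. It normalizes trivial differences (case, accents, punctuation, spacing) and flags anything that still disagrees.

```python
import re
import unicodedata
from collections import defaultdict


def normalize(name: str) -> str:
    """Fold case, accents, punctuation, and spacing so trivial variants match."""
    text = unicodedata.normalize("NFKD", name)
    text = "".join(ch for ch in text if not unicodedata.combining(ch))
    text = re.sub(r"[^\w\s]", " ", text.lower())
    return " ".join(text.split())


def find_name_conflicts(mentions: dict[str, str]) -> dict[str, list[str]]:
    """Group sources by the normalized name they use.

    `mentions` maps a source label (hypothetical, e.g. "byline" or
    "schema author.name") to the name found there. Returns an empty dict
    when every source agrees, otherwise the competing groups.
    """
    groups: dict[str, list[str]] = defaultdict(list)
    for source, name in mentions.items():
        groups[normalize(name)].append(source)
    return dict(groups) if len(groups) > 1 else {}


if __name__ == "__main__":
    # Hypothetical audit input: the same author as named in three places.
    author_mentions = {
        "byline": "Jane Doe",
        "schema author.name": "jane  doe",
        "bio page": "J. Doe",
    }
    for variant, sources in find_name_conflicts(author_mentions).items():
        print(f"{variant!r} used by: {', '.join(sources)}")
```

A check like this only surfaces disagreements; deciding which form is canonical remains an editorial call.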

A strong audit should also include:

- author bios that clearly establish who wrote the content and why that person is credible

- structured data that describes people, organizations, products, and other core entities

- page copy that states factual relationships plainly, including who the offer is for and what it includes

- alignment between on-page claims and the signals sent through markup, navigation, and linked entities

- regular checks for knowledge panel or entity-level confusion when a brand appears in AI surfaces
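As an illustration of the structured data item in the list above, here is a short Python sketch that builds schema.org Article markup tying a page to a named author and publisher. The helper names, the example author, and the example.com URLs are all hypothetical; the property names (headline, author, publisher, sameAs) are standard schema.org vocabulary, but which properties a given search feature actually uses is something to confirm against Google’s documentation.

```python
import json


def article_jsonld(headline: str, author_name: str, author_url: str,
                   author_profiles: list[str], publisher_name: str,
                   publisher_url: str) -> dict:
    """Return schema.org Article markup with explicit author and publisher."""
    return {
        "@context": "https://schema.org",
        "@type": "Article",
        "headline": headline,
        "author": {
            "@type": "Person",
            "name": author_name,
            "url": author_url,
            # sameAs links the on-site author to external profiles.
            "sameAs": list(author_profiles),
        },
        "publisher": {
            "@type": "Organization",
            "name": publisher_name,
            "url": publisher_url,
        },
    }


def to_script_tag(data: dict) -> str:
    """Serialize markup into a JSON-LD script tag for the page head."""
    payload = json.dumps(data, indent=2, ensure_ascii=False)
    # Escape "</" so a value containing "</script>" cannot close the tag early.
    payload = payload.replace("</", "<\\/")
    return f'<script type="application/ld+json">\n{payload}\n</script>'


if __name__ == "__main__":
    # Hypothetical values for illustration only.
    markup = article_jsonld(
        headline="Example headline",
        author_name="Jane Doe",
        author_url="https://example.com/authors/jane-doe",
        author_profiles=["https://www.linkedin.com/in/example"],
        publisher_name="Example Media",
        publisher_url="https://example.com",
    )
    print(to_script_tag(markup))
```

The point of generating the markup from the same fields that feed the byline and bio is that the visible page and the machine-readable layer cannot drift apart.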

Google’s Knowledge Graph Search API documentation adds another useful clue here: it explicitly supports annotating and organizing content using Knowledge Graph entities. That is a direct reminder that entity annotation is not an abstract theory. It is part of how modern search systems organize information.
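For teams that want to see how an entity currently resolves, a rough Python sketch of a Knowledge Graph Search API lookup follows. The endpoint and the query, key, and limit parameters follow Google’s public documentation as best understood here; the helper names are hypothetical, and the response fields read below (itemListElement, result, resultScore) should be checked against the current API reference before anyone relies on them.

```python
import json
from urllib.parse import urlencode
from urllib.request import urlopen

KG_ENDPOINT = "https://kgsearch.googleapis.com/v1/entities:search"


def build_kg_url(query: str, api_key: str, limit: int = 5) -> str:
    """Build the request URL for an entity search."""
    params = {"query": query, "key": api_key, "limit": limit}
    return f"{KG_ENDPOINT}?{urlencode(params)}"


def summarize_entities(response: dict) -> list[tuple[str, list[str], float]]:
    """Reduce a response to (name, types, score) rows for quick review."""
    rows = []
    for item in response.get("itemListElement", []):
        result = item.get("result", {})
        types = result.get("@type", [])
        if isinstance(types, str):
            types = [types]
        rows.append((result.get("name", ""), types, item.get("resultScore", 0.0)))
    return rows


def lookup(query: str, api_key: str, limit: int = 5):
    """Call the API (requires a valid key and network access)."""
    with urlopen(build_kg_url(query, api_key, limit)) as resp:
        return summarize_entities(json.load(resp))


if __name__ == "__main__":
    # "YOUR_API_KEY" is a placeholder; no request is made in this demo.
    print(build_kg_url("Example Brand", "YOUR_API_KEY", limit=3))
```

Running the same brand or author query on a schedule and comparing the returned names and types is one way to notice entity-level confusion early.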

Why structured data still pays off

Barnard’s argument is about annotation, but Google’s structured data case studies show why the commercial stakes are real. Rotten Tomatoes saw a 25% higher click-through rate on pages enhanced with structured data. Food Network saw a 35% increase in visits. Rakuten reported users spending 1.5 times more time on structured-data pages and 3.6 times higher interaction rates on AMP pages with search features. Nestlé measured an 82% higher click-through rate on rich-result pages.

Those examples are not a one-to-one proof of Barnard’s point, but they do show the same principle at work: machine-readable signals can change behavior. Google also notes that rich-result eligibility can be affected by structured data quality, which makes the markup layer a performance issue, not just a technical cleanup task.

For growth teams, the lesson is straightforward. Structured data helps the machine understand the page; annotation determines what the machine thinks the page is. If those two layers are working together, visibility improves. If they are fighting each other, even strong content can get mislabeled and underused.

What agencies should monitor next

The search stack is moving toward summarization, comparison, and recommendation, not just indexing. That means agencies need to watch for signs that a brand is being understood incorrectly long before traffic drops become obvious. A page can still rank and still lose if the system thinks it is about the wrong thing.

The agencies that win in this environment will treat annotation as a formal diagnostic layer. They will combine content strategy, technical SEO, structured data, author signals, and entity consistency into one system of truth. In a search world increasingly shaped by AI interpretation, machine legibility is no longer optional. It is the difference between being found and being misunderstood.
