BrightEdge study finds AI search engines diverge on sources, agree on brands

AI search is splintering on sources but converging on brands, giving marketers a new playbook for visibility across ChatGPT, Google, Gemini, and Perplexity.

A new map for AI visibility

Five AI search surfaces are not reading the web the same way, but they are converging on the same thing: brands. BrightEdge’s latest analysis across ChatGPT, Google AI Overviews, Google AI Mode, Google Gemini, and Perplexity shows a wide split in the sources these systems cite, paired with a much tighter overlap in the brands they recommend.

That matters because it changes the question marketers should be asking. The old SEO instinct is to chase one high-ranking page. The new AI search reality is messier: different engines pull from different source pools, yet they often settle on similar brand names when answering the same query. BrightEdge’s broader AI Catalyst work, which spans tens of thousands of prompts across ten industries, makes the point even clearer. Visibility is now a mix of citation strategy, brand authority, and the way a company is represented across the wider web.

Why the source layer is fragmented

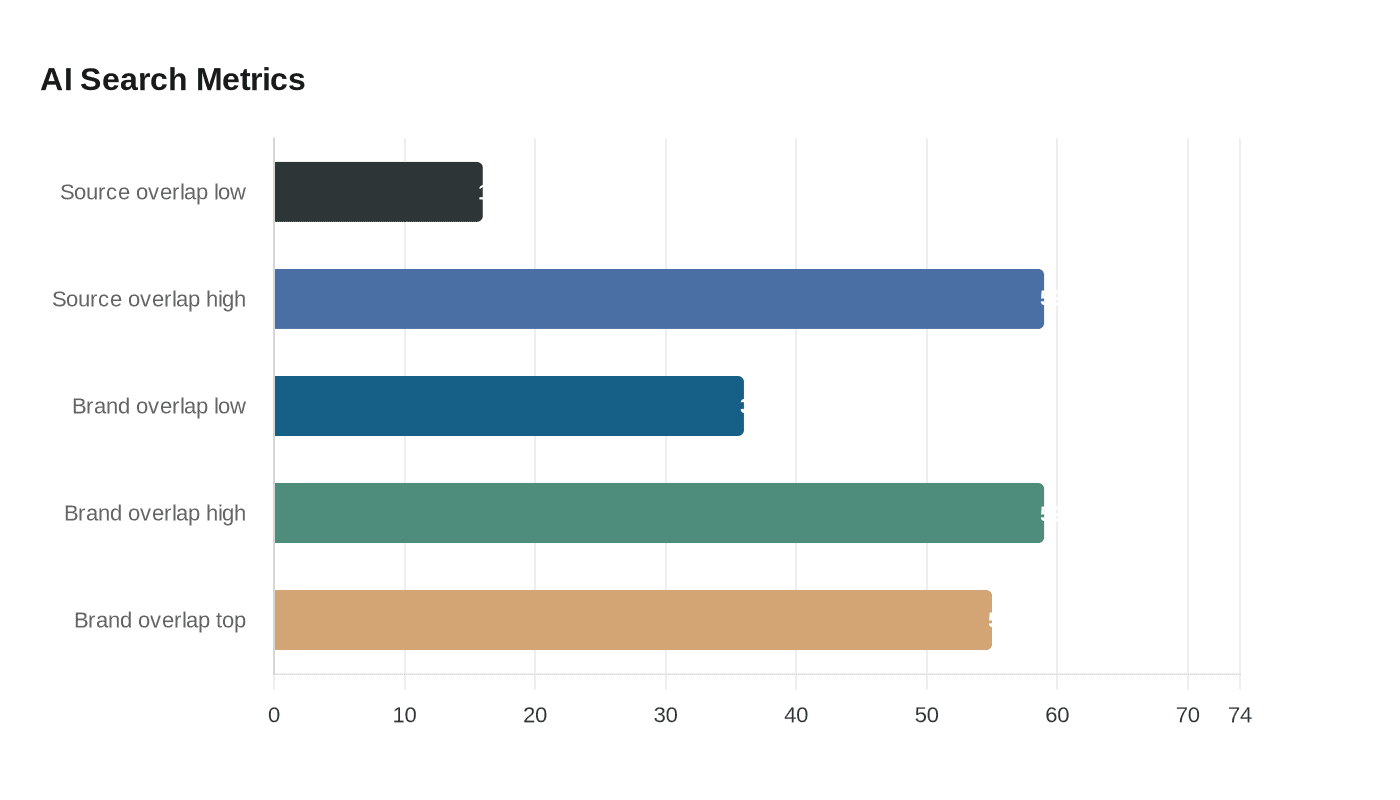

The most striking number in the study is the source-overlap range, which runs from 16% to 59%. That is not a small variation. It shows there is no single citation ecosystem that all AI search surfaces rely on. One engine may lean more heavily on institutional material, another may favor commercial or editorial sources, and another may pull more from user-generated content or social layers.

BrightEdge also tracked source classification and top-source concentration, including TLD distribution, which adds another useful layer to the picture. When the same brands appear through different source combinations, it signals that AI engines are not simply copying a Google-style ranking order. They are assembling answers from different kinds of authority, and they are doing it in different ways depending on the platform and the query.
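Teams tracking this themselves can compute a pairwise overlap score and a TLD tally once they have logged which domains each engine cites for a given prompt. The sketch below assumes overlap is measured as Jaccard similarity: shared domains divided by all domains that either engine cites. BrightEdge's exact formula isn't stated here. The engine names, the domain sets, and the helper names `jaccard_overlap`, `tld_distribution`, and `pairwise_overlaps` are hypothetical placeholders, not study data.

```python
from collections import Counter
from itertools import combinations

# Hypothetical citation log: engine -> set of cited domains.
# Illustrative placeholders only, NOT BrightEdge data.
CITED_DOMAINS = {
    "engine_a": {"nih.gov", "mayoclinic.org", "reddit.com", "wikipedia.org"},
    "engine_b": {"nih.gov", "wikipedia.org", "forbes.com", "amazon.com"},
    "engine_c": {"reddit.com", "youtube.com", "wikipedia.org", "nih.gov"},
}


def jaccard_overlap(a: set[str], b: set[str]) -> float:
    """Assumption: overlap = shared domains / all domains either engine cites.

    BrightEdge's own overlap formula may differ.
    """
    union = a | b
    return len(a & b) / len(union) if union else 0.0


def tld_distribution(domains: set[str]) -> Counter:
    """Count top-level domains (the text after the last dot in each domain)."""
    return Counter(d.rsplit(".", 1)[-1] for d in domains)


def pairwise_overlaps(cited: dict[str, set[str]]) -> dict[tuple[str, str], float]:
    """Overlap score for every unordered pair of engines."""
    return {
        (x, y): jaccard_overlap(cited[x], cited[y])
        for x, y in combinations(sorted(cited), 2)
    }


if __name__ == "__main__":
    for pair, score in pairwise_overlaps(CITED_DOMAINS).items():
        print(pair, f"{score:.0%}")
    print(tld_distribution(CITED_DOMAINS["engine_a"]))
```

With these placeholder sets, engine_a and engine_c share three of five domains (60%). The other two pairs share two of six (about 33%). Even a handful of prompts can therefore produce a wide spread of overlap scores.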

That leaves a real opening for publishers and brands. If a page can win visibility in one source set but not another, the broader brand still has a chance to surface. In other words, the web page behind the answer may change from engine to engine, but the brand name can remain stable enough to carry through.

Brands are proving more durable than pages

BrightEdge’s brand-overlap range sits much higher at the low end, running from 36% to 59%, and the body of the study cites 55% as the highest brand overlap. That is the key strategic signal in the study. Sources are splintered, but brands are sticking.

BrightEdge’s interpretation is straightforward: strong brands that are clearly tied to specific products or services tend to perform more consistently because the market, the audience, and the surrounding citation ecosystem reinforce what those brands stand for. The result is that AI systems appear to recognize consensus around brand meaning more readily than consensus around a single source type.

This is where content strategy needs to shift. A page is still important, but it is no longer enough to think in terms of one landing page, one ranking, or one backlink profile. The brand has to be legible across the broader information environment. If an AI system sees a name repeated in authoritative sources, commercial coverage, and user discussion, that brand has a much better chance of being surfaced even when the exact source mix changes.

What the companion studies add

BrightEdge’s related comparison of ChatGPT, Google AI Overviews, and Google AI Mode sharpens the picture further. Those platforms disagreed on brand recommendations for 61.9% of queries, and only 17% of queries produced the same brands across all three. That kind of disagreement is a warning sign for anyone still treating AI search as a single channel.

The same research found that Google AI Overviews mentioned 2.5 times more brands than ChatGPT. It also found that ChatGPT mentioned brands 3.2 times more often than it linked to them. That gap reinforces the idea that different systems behave differently. Some act more like recommendation engines, while others behave more like citation-first answer engines.

BrightEdge has also shown that commercial-intent language can change the outcome dramatically. Words such as “where,” “find,” “cheap,” and “deals” can trigger far more brand mentions than informational phrasing. That is a practical clue. If the query signals purchase intent, the brand layer becomes more important and the answer surfaces shift accordingly.
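A monitoring prompt set can be split by intent, so brand mentions can be compared across commercial and informational queries. The sketch below is a deliberately naive keyword matcher. It assumes the four trigger words BrightEdge cites are enough to flag commercial intent. The helper names `is_commercial_intent` and `split_prompts` are hypothetical, and a production classifier would need a far broader vocabulary.

```python
import re

# Trigger words named in BrightEdge's research. Treating them as a complete
# keyword list is an assumption made for illustration only.
COMMERCIAL_TRIGGERS = {"where", "find", "cheap", "deals"}


def is_commercial_intent(query: str) -> bool:
    """Flag a query if any whole word matches a trigger (case-insensitive)."""
    tokens = set(re.findall(r"[a-z]+", query.lower()))
    return not COMMERCIAL_TRIGGERS.isdisjoint(tokens)


def split_prompts(queries: list[str]) -> tuple[list[str], list[str]]:
    """Partition queries into (commercial, informational) lists, preserving order."""
    commercial = [q for q in queries if is_commercial_intent(q)]
    informational = [q for q in queries if not is_commercial_intent(q)]
    return commercial, informational


if __name__ == "__main__":
    prompts = [
        "Where can I buy cheap running shoes?",
        "How do running shoes reduce injury?",
        "Best deals on noise-cancelling headphones",
    ]
    print(split_prompts(prompts))
```

Matching whole words avoids false hits such as "findings" triggering on "find". The trade-off is that it also misses commercial phrasings outside the list, such as "buy" or "price".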

How each surface should shape your approach

ChatGPT

ChatGPT’s behavior in BrightEdge’s research suggests a stronger bias toward brand mentions than direct linking. That means it is especially sensitive to clear product identity, obvious category fit, and the language people use when they are close to buying. If your brand name is ambiguous or your offer is poorly defined, you are less likely to be consistently surfaced when users ask intent-heavy questions.

The tactical opening here is to make the brand easy to associate with a concrete need. Tight positioning, product-category clarity, and repeated mention in commercially relevant contexts all help. ChatGPT seems to reward brands that are already recognizable in the market conversation, not just brands with content volume.

Google AI Overviews and Google AI Mode

Google’s AI surfaces are still evolving, but the direction is already visible. BrightEdge reported in March 2025 that AI Overviews expanded by 18.26% in pixel height in the first two weeks of that month, and later reporting showed they were citing deeper into websites, not just the obvious top-ranking pages. Ahrefs-based reporting in March 2026 found that only 38% of cited AI Overview pages also appeared in Google’s top 10 results for the same query, down from 76% in an earlier July measurement.

That shift matters. It means Google’s AI layer is increasingly willing to pull from pages beyond page one, which creates a bigger opening for specialized, well-structured content with strong topical relevance. It also means page-one organic rankings are no longer the whole story. Deep, detailed pages can now earn AI visibility even if they are not the highest-ranked standard blue-link result.

Google Gemini and Perplexity

Gemini and Perplexity are part of the same fragmented citation landscape, and BrightEdge’s five-surface comparison shows they are not converging on the same source pool as ChatGPT and Google’s other AI surfaces. The practical lesson is not that one of these systems is “right” and the others are “wrong.” It is that each engine can reward a different mix of authority, source type, and brand recognition.

For brands, that means visibility tactics need to vary by platform. The same article, citation, or product page may carry different weight depending on whether the engine is leaning on institutional references, commercial/editorial coverage, or user-generated discussion.

Where the strategic openings are

The biggest opportunity is not simply to produce more content. It is to make the brand impossible to misunderstand. That means building a clear category association, showing up in the source layers AI systems already trust, and making sure the brand is discussed consistently across commercial media, institutional sources, and community spaces.

A strong AI visibility program now needs to do several things at once:

- Reinforce brand meaning with specific product and service language

- Publish content that answers commercial-intent queries as well as informational ones

- Earn mentions in authoritative editorial and institutional contexts

- Watch how different AI surfaces cite different source layers, then adjust accordingly

- Treat page-one rankings as helpful, but not sufficient, for AI discovery

The deeper takeaway from BrightEdge’s research is that AI search is becoming its own discipline. Winning is less about dominating one ranking list and more about shaping the brand story that multiple systems can recognize, reuse, and recommend. In that environment, the brands that are easiest to define will be the ones AI search remembers most consistently.
