WEF and KPMG urge responsible AI scaling for cybersecurity

AI is becoming a core cyber tool, but WEF and KPMG say the real test is whether teams can govern it, trust it, and stay accountable when it fails.

AI is no longer a side project in cyber

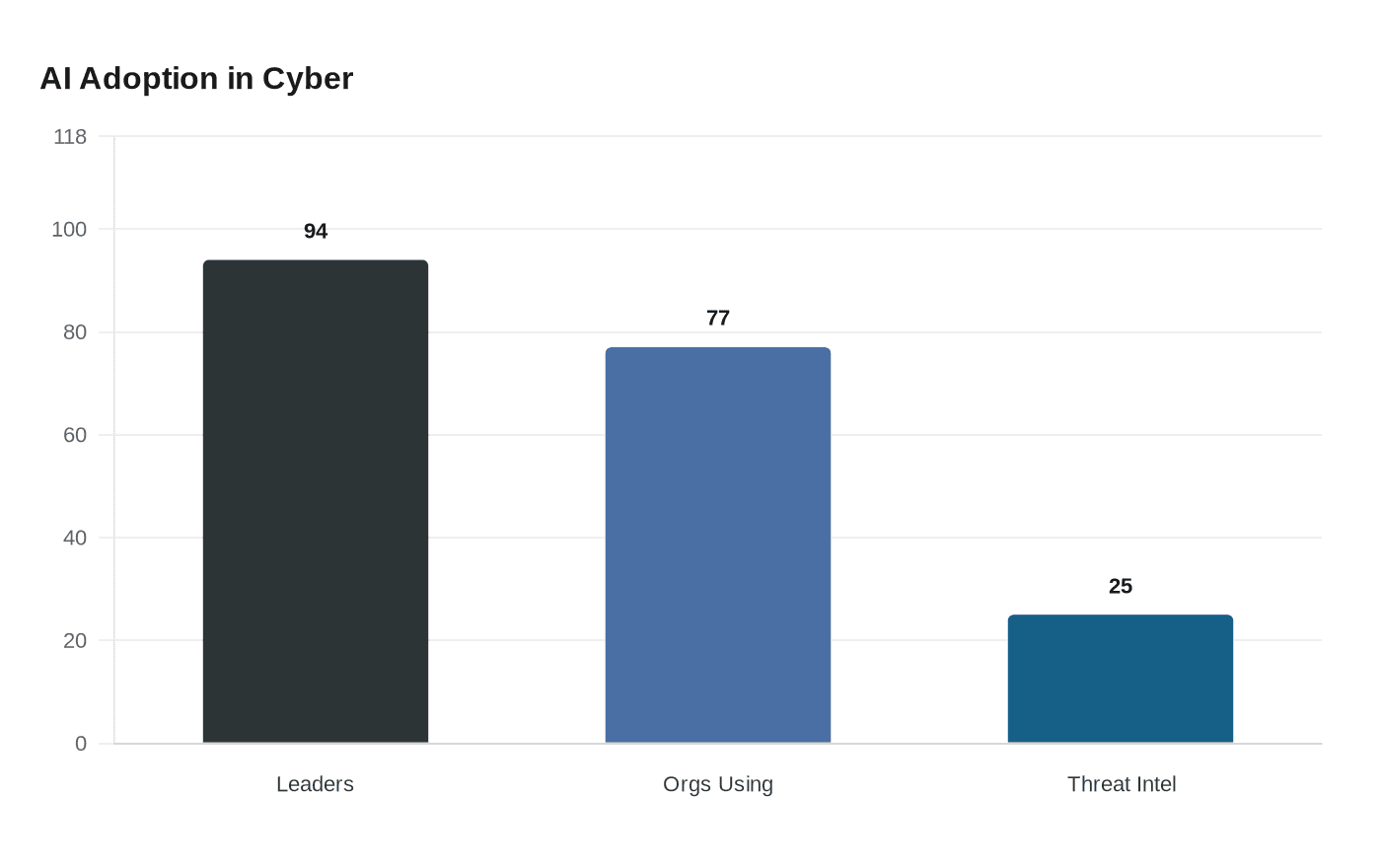

The latest message from the World Economic Forum and KPMG is straightforward: AI is moving from experimentation to the core of cyber defense, and the work is shifting with it. The report, *Empowering Defenders: AI for Cybersecurity*, says 94% of cyber leaders now see AI as a defining force in the field, while 77% of organizations are already using it in cyber operations. That is not just a technology story. It is a staffing, governance, and accountability story for the people who run security, risk, and audit functions every day.

For KPMG professionals, the pressure point is familiar. New tools often arrive faster than training, controls, or role clarity. The report’s central argument is that AI should augment human expertise rather than replace it, which means cyber teams are expected to absorb more responsibility without the luxury of treating AI as a novelty or a black box.

Why the report matters for the people doing the work

The report was published by the World Economic Forum in partnership with KPMG and draws on 20 real-world case studies, plus input from 105 representatives across 84 organizations in 15 industries. It is written for executives and chief information security officers who want to move AI out of pilots and into core defensive capability. That framing matters because moving AI into core operations puts the burden on operating teams, not just on leadership decks.

The practical takeaway is that security teams are being asked to do more than test tools. They need to decide where AI belongs in governance, how to vet its outputs, and how to keep human judgment in the loop when systems are making faster, broader, and more consequential decisions. In a Big 4 environment, that has implications for promotion cycles and specialization. It also changes the kind of judgment partners expect from senior managers and directors, who are increasingly responsible for advising clients on both cyber resilience and AI risk.

Where AI is showing up across the cyber lifecycle

One reason the report has weight is that it does not confine AI to a single security function. It says AI is transforming cybersecurity across the full lifecycle, including governance, risk identification, protection, threat detection, incident response, and incident recovery. That breadth is exactly why the skills discussion matters. A team that once used AI mainly for threat hunting may now need to evaluate how it affects policy, logging, response orchestration, and post-incident review.

The report also flags the rise of agentic AI, which can act with more autonomy than earlier systems. That creates a new category of risk: not just whether the model is accurate, but whether its level of independence is appropriate, explainable, and contained by guardrails. For cyber, risk, and audit teams, that means more work on oversight, model governance, and escalation paths whenever AI-driven systems make decisions that could affect evidence collection, response timing, or client reporting.

The gains are real, but so is the accountability

The business case for scaling AI in cybersecurity is strong enough to get executive attention. The report says organizations that extensively leverage AI in security can reduce average breach costs by up to $1.9 million and shorten breach lifecycles by about 80 days. Those are the kinds of figures that can reset budget conversations, especially when boards want proof that security spend is producing measurable results.

But the report does not present those gains as automatic. It points to operational efficiency improvements already seen in the field, including a 25% increase in threat-intelligence efficiency in one KPMG example. Accenture, in another cited case, cut security analysis time across more than 100,000 internet-facing sites from 15 minutes to under one minute. IBM’s ATOM platform is also cited as automating more than 850 analyst hours per month and reducing end-to-end investigation time by 37%.

Those numbers are compelling because they show where AI helps most: triage, scale, and speed. They also hint at the new shape of the job. If a platform can collapse analysis time so dramatically, then analysts are less valuable as human filters and more valuable as people who can validate outputs, spot edge cases, and decide when automation should stop. That is a different skill profile, and it is one many firms have not yet trained for at scale.

The governance gap is now the real risk

The report’s caution is simple: faster defense only works if organizations preserve human judgment and avoid systemic fragility. Laurent Gobbi, a KPMG partner, says success depends on executive support, high-quality data, a skilled workforce, integrated infrastructure, and the retention of human judgment so the system does not become brittle. That last element is the part many companies struggle to operationalize. It is easy to buy tools; it is much harder to build the governance structure that keeps them reliable under pressure.

This is where cyber teams, risk teams, and internal audit teams converge. If AI is being used to detect threats, prioritize incidents, or guide recovery, then someone has to own the controls around it. That includes data quality, access rights, validation testing, escalation thresholds, and clear accountability when an AI-supported decision goes wrong. The report’s underlying message is that the more AI takes on operational weight, the more scrutiny must follow.

From warning about AI risk to proving defensive value

The new report also marks a shift from the Forum’s January 2025 work, *Artificial Intelligence and Cybersecurity: Balancing Risks and Rewards*. That earlier research found that 66% of organizations expected AI to have the biggest impact on cybersecurity in the coming year, but only 37% had processes to assess the security of AI tools before deployment. In other words, companies were already convinced AI would matter before they had the controls to manage it.

That gap is still the story underneath the optimism. The Forum says the earlier emphasis was on AI-related cyber risk, including concerns that attackers could use AI to automate deception, generate malware, and scale attacks at machine speed. The new paper does not deny those threats. Instead, it argues that defenders are now proving measurable gains and should scale responsibly rather than hesitate indefinitely. Akshay Joshi, who leads the Forum’s Centre for Cybersecurity, says AI has the potential to shift the balance toward defenders, but only if the operational model is built to hold.

What this means inside a firm like KPMG

For KPMG teams, the report reads less like a future scenario and more like a management brief. Consultants will need to advise clients on where AI creates resilience and where it introduces new failure modes. Auditors will face more pressure to test AI-supported controls, not just document them. Advisory professionals will need enough technical fluency to challenge vendor claims, understand autonomy risks, and explain to client leaders why human oversight still matters even when the machine is faster.

The bigger workplace shift is that AI in cybersecurity does not reduce the need for people. It raises the bar on what those people must know. The firms that benefit most will be the ones that treat governance, training, and accountability as part of the deployment, not as cleanup work after the tools are already live.