Clarifai deletes three million OkCupid photos used for AI training without consent

Clarifai scrubbed 3 million OkCupid photos after the FTC said they were shared for AI training without consent. The real warning is for every portrait upload.

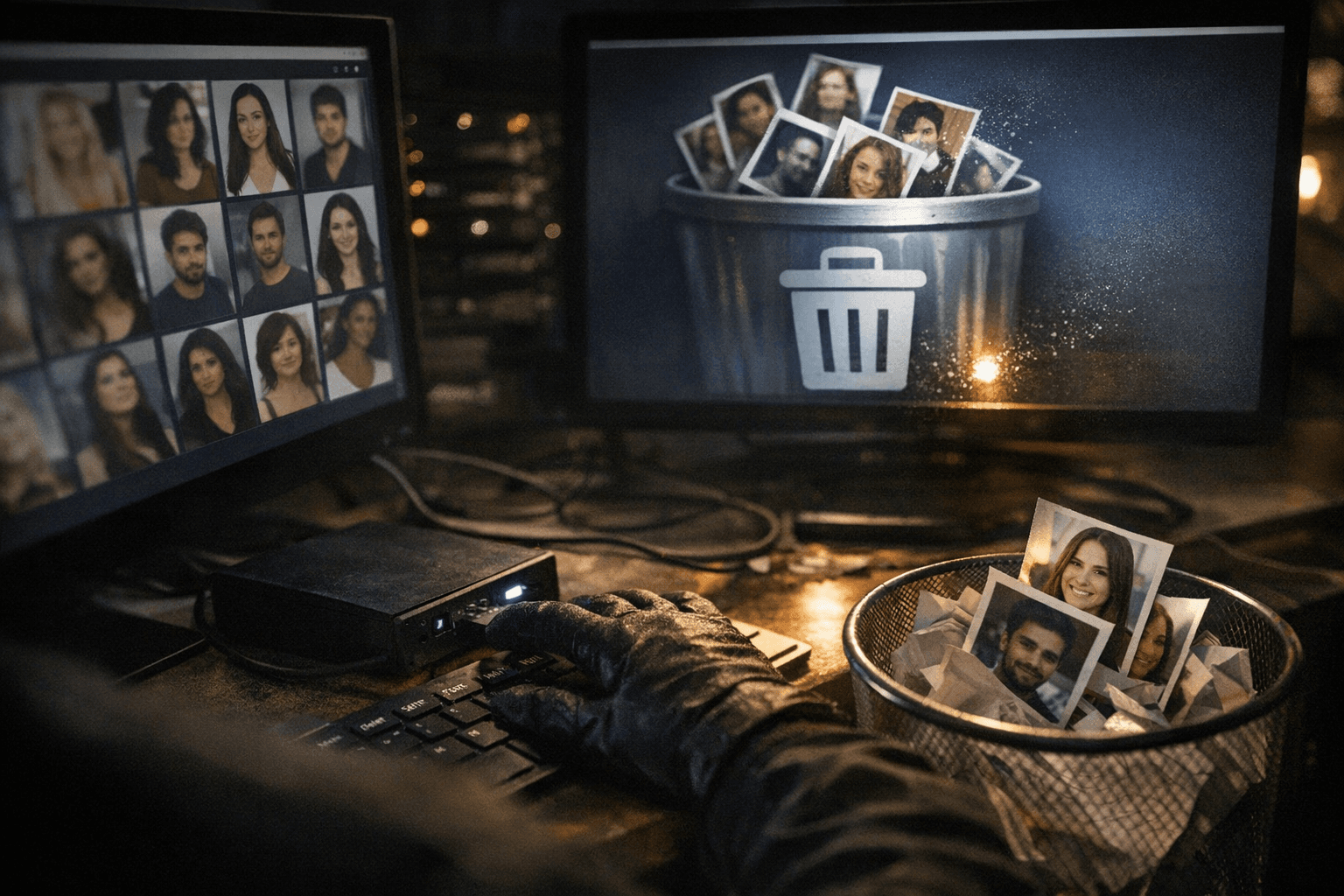

Three million portraits were pulled out of Clarifai’s system, a hard reminder that an ordinary face photo can end up as training data long after it leaves the upload screen. For photographers, the lesson is not about dating apps so much as the life cycle of images: a headshot, profile portrait, or location-tagged snapshot can be reused for facial-recognition work if the platform’s permissions are broad enough.

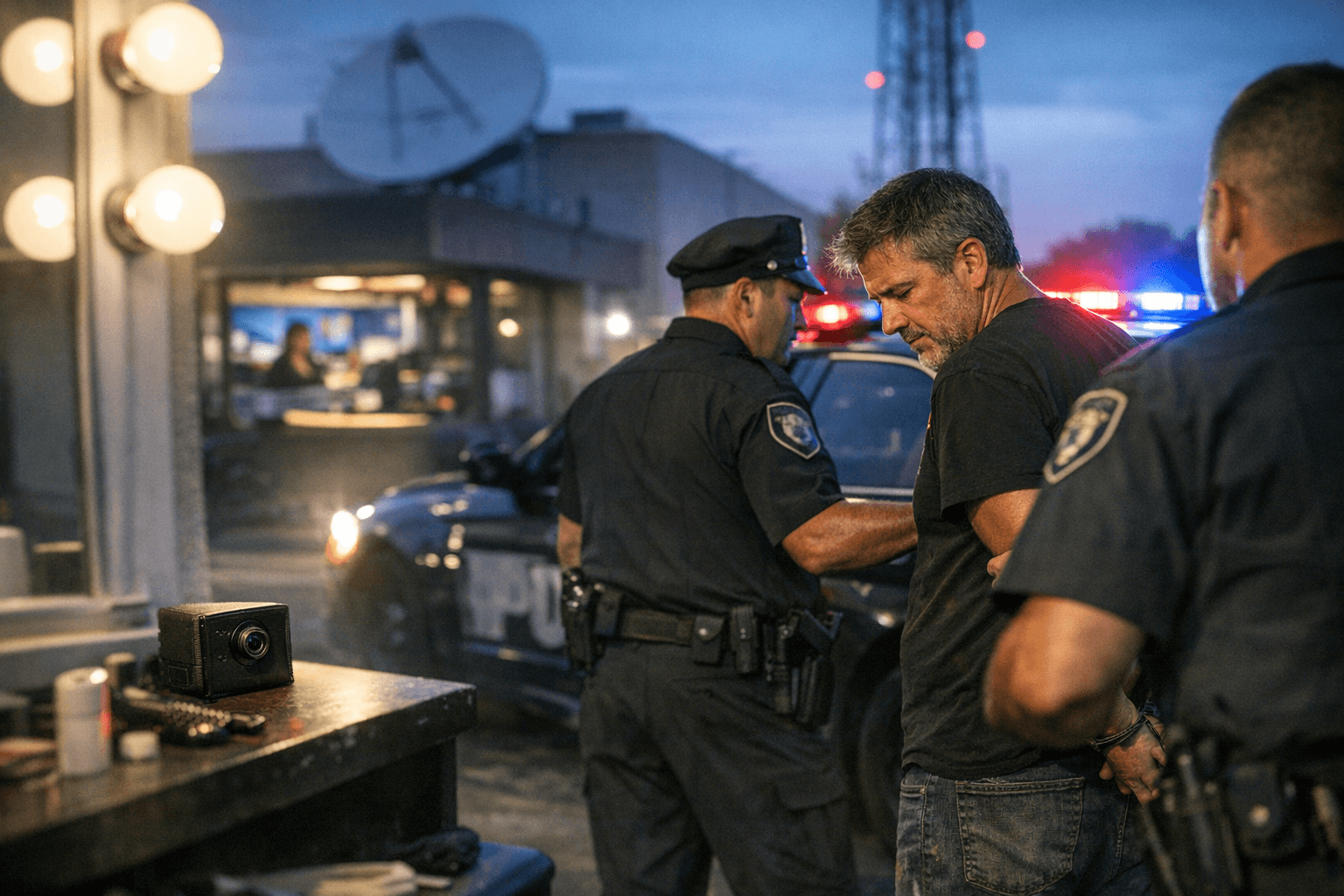

The Federal Trade Commission said OkCupid and Match Group Americas settled on March 30, 2026, after allegations that OkCupid shared personal information, including photos and location data, with an unrelated third party in violation of its privacy promises. The agency said OkCupid told users it would not share personal information except as allowed by its privacy policy or after notice and an opt-out opportunity, yet nearly three million user photos and related data were passed along without informing users or giving them a chance to opt out.

That third party was Clarifai, a facial-recognition and AI company. The FTC said OkCupid’s founders were financial investors in Clarifai, and that the data transfer, which dated to September 2014, came without formal or contractual restrictions on how the material could be used. In other words, the images did not just sit in a database. They entered a pipeline with few limits on use, and once the data had been fed into a model, the effects were hard to reverse.

The case escalated in 2019, when the FTC opened an investigation prompted by a New York Times article describing how Clarifai used OkCupid images to build an AI tool that could estimate a person’s age, sex, and race from a face. Clarifai has now said it deleted the three million photos and any facial-recognition models trained on them. The FTC said the settlement permanently bars OkCupid and Match from misrepresenting how they collect, use, or share data, though it could not impose a monetary fine because this was a first-time offense.

For anyone uploading or licensing images, the practical read is blunt: the fine print around sharing, affiliates, partners, and model training matters more than the marketing copy. If a platform can hand portraits to an unrelated company without a clean opt-out, those images can become part of a dataset that outlives the original use. Once a face is in the training set, the burden shifts from image delivery to damage control.
