Eldagsen and Astray Challenge Fontcuberta’s Algorithmic Photography Claims

Fontcuberta’s AI label gets tested by two famous contest reversals, and the real question for photographers is when editing stops being photography.

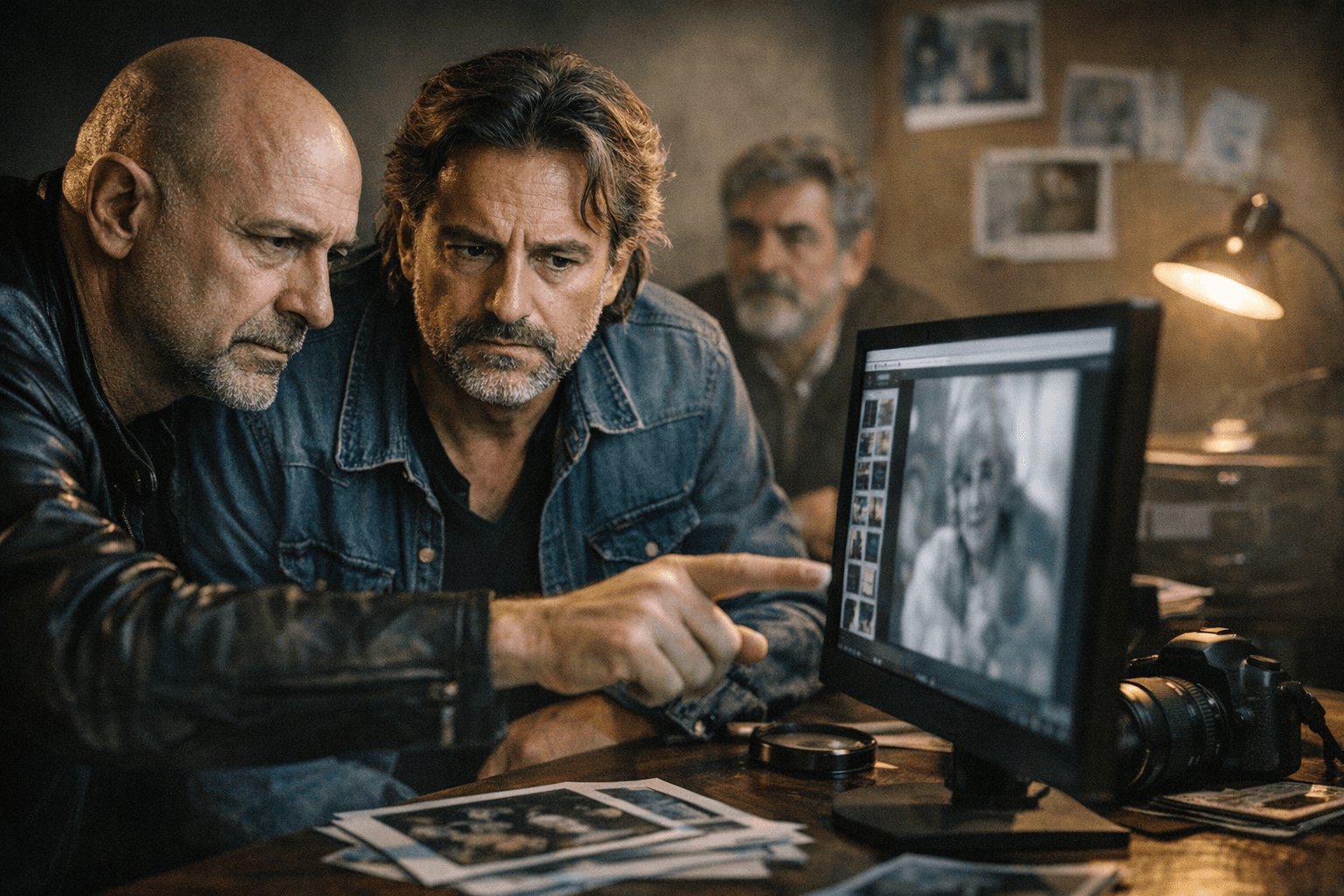

A real photograph won in an AI category, and an AI-generated image won a major photo award. That double reversal is why Boris Eldagsen and Miles Astray are pushing back now: the line between photographic editing and algorithmic image-making is no longer an abstract argument. It decides prizes, captions, and trust.

Why Fontcuberta’s language matters

Joan Fontcuberta’s *Immagini Latenti. La fotografia in transizione* was published in Italy in March 2026, and it closes with a chapter on AI and photography. The publisher’s synopsis asks what remains of photography in the 21st century in an era shaped by post-truth, social media, and generative artificial intelligence. Fontcuberta frames AI-generated images as “Second-Generation Photography” and uses the term “Algorithmic Photography,” which is exactly where Eldagsen and Astray draw the line.

Their objection is not just semantic nitpicking. They argue that images made through cameras and photo-optical systems are fundamentally different from outputs generated by computational systems, even when the finished picture looks plausible enough to fool a quick scroll on a phone. If you call both things photography, you blur authorship, truth, and creative labor in one move.

That distinction matters because photographers now live in a space where the workflow itself is under scrutiny. A crop, tone curve, and local dodge-and-burn are one thing. A model inventing a face, a window, or a complete scene is another. Fontcuberta’s framing tries to fold both into the same family, and Eldagsen and Astray argue that the family tree does not hold up.

The two contest cases that broke the frame

Boris Eldagsen became one of the most visible names in the AI-versus-photography fight when his image *Pseudomnesia | The Electrician* won the Creative Open category at the 2023 Sony World Photography Awards. He refused the prize on stage in London in April 2023 after revealing that the image was AI-generated. At the time, the competition had allowed submissions using “any device,” and the World Photography Organisation later said Eldagsen had described the work as a “co-creation” before the winner was announced.

That episode still matters because it showed how easily a ruleset can collapse once the image on the screen is allowed to stand in for the process behind it. A jury can reward the surface and miss the mechanism. For photographers, that is not an academic gotcha. It is the difference between entering a competition as a camera operator and entering as someone who prompted a model into making the final frame.

Miles Astray’s case was the mirror image. His image *F L A M I N G O N E* won third place and the People’s Vote in the 1839 Awards’ AI category in 2024. Astray then disclosed that it was a real photograph taken in Aruba, not an AI image. The awards organizer disqualified the entry after the disclosure, and later said the incident brought global attention to the contest and its participants.

Astray’s win exposed a separate weakness: even an AI-specific category can be gamed by a conventional photograph if the audience and judges are reacting to the image alone. That is the nightmare scenario for any contest that assumes the final file is enough to define the medium. The picture may look synthetic, but if the process was camera-based, the category label changes everything.

Where editing ends and algorithmic image-making begins

For working photographers, the useful question is not whether software touched the file. It is whether software invented content you did not capture. Basic editing still belongs to the long tradition of darkroom and digital finishing: exposure correction, white balance, color grading, sharpening, crop, cleanup, and modest retouching. Those moves shape a photograph, but they do not replace the photograph.

The line gets crossed when the software starts generating the subject matter itself. If the sky, hands, background, or even the main figure are synthesized by a model, the finished image is no longer just a photograph with processing. It becomes an algorithmic image that borrows photographic cues. That is the distinction Eldagsen and Astray are asking readers, judges, and clients to make out loud.

A practical way to think about it:

- If you captured the light and the scene, you are in photography.

- If the software invents the scene, you are in algorithmic image-making.

- If a contest, client, or publication would misread the process from the final file alone, the caption needs to do more work.

That may sound strict, but it is the only way to keep trust intact. A portfolio, a commercial delivery, and a competition submission all depend on the same basic promise: the image says what it is. Once you break that promise, the picture may still be useful, but it is no longer honest to label it as a straightforward photograph.

Why this debate reaches beyond contests

Fontcuberta has spent years pushing on photographic truth, so this dispute is especially pointed. He is not dismissing the medium from the outside. He is arguing from inside photography’s conceptual history, which makes Eldagsen and Astray’s reply even sharper: if you collapse AI images into photography, you weaken the category that gives photographs their specific meaning.

That has consequences well beyond trophies. Editorial teams need cleaner disclosure rules. Competition organizers need firmer language about capture and generation. Clients need to know whether they are buying a photographed moment or a machine-built composite. And photographers need to be able to explain the difference without sounding defensive.

Even the production of this discussion reflects the moment it describes. The translation of the Italian text used multiple AI tools, including ChatGPT, Gemini, and DeepL. That detail is not a gimmick; it is a reminder that AI is already embedded in the pipeline around photography, not just in the image-making stage itself.

The practical takeaway for photographers

The most useful definition is the one that survives a caption, a contest entry, and a client email. If your process begins with camera-made light and ends with human editing, you are still working inside photography. If your process depends on a model inventing the image’s substance, then you owe the result a different name.

That is the real force of Eldagsen and Astray’s challenge to Fontcuberta. They are not arguing that AI images are worthless. They are arguing that calling them photography without qualification makes the rules harder to enforce, the awards easier to game, and the work harder to trust. In a field where the file on screen can no longer prove its own origin, the label is part of the image.
