Batty Brings Rust-Powered Supervision to Parallel AI Coding Agents

A single 51,000-line Rust binary called Batty lets you run Claude Code, Codex, and Aider in parallel without becoming a full-time dispatcher.

"AI coding agents are good at isolated tasks. They're terrible at working together." That diagnosis, posted on DEV Community on March 22, is the starting point for Batty: a terminal-native daemon that supervises teams of AI coding agents. It runs them in tmux, isolates their work in git worktrees, routes messages between them, and gates everything on tests.

The project uses synchronous polling, Maildir inboxes, and git worktrees as the core engineering primitives to solve a problem that anyone who has pushed past a single-agent workflow will recognize immediately. As the author wrote: "I've been running Claude Code, Codex, and Aider on real projects for months. One agent works great. But the moment you try to run three or four in parallel on the same repository, you hit a wall: they edit the same files, nobody checks if tests pass, and you become a full-time dispatcher instead of a developer."

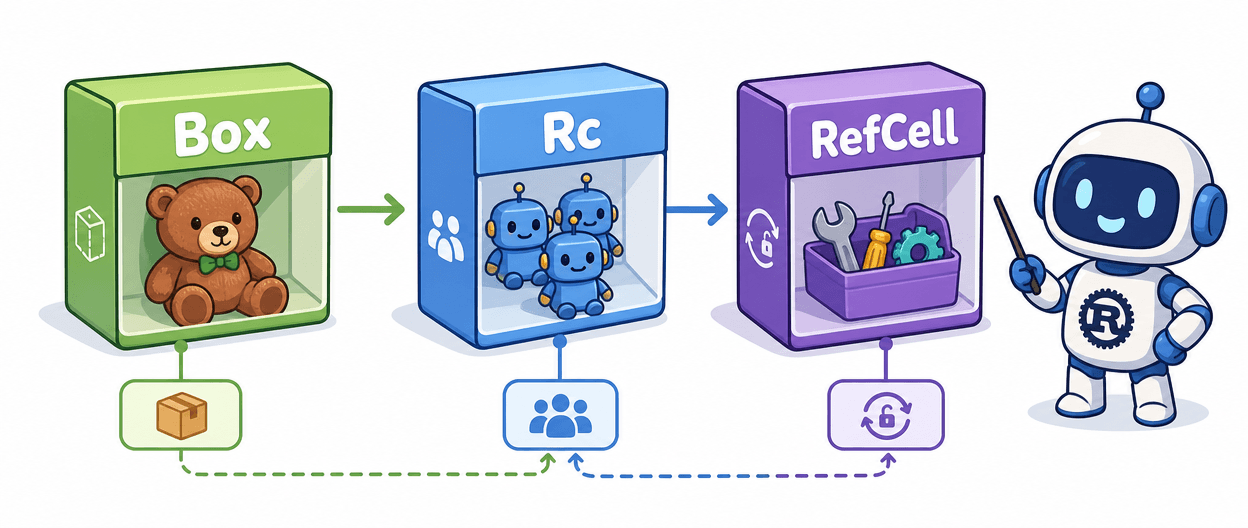

Batty is a single Rust binary, roughly 51,000 lines compiled against the Rust 2024 edition, that manages a strict hierarchy: you feed work to an Architect, which dispatches to a Manager, which assigns tasks via a kanban-style board to three to five Engineer agents running in parallel, each coding in an isolated git worktree. Every agent gets its own tmux pane.

The daemon polls every five seconds, detects agent states, delivers messages, dispatches tasks, runs tests, and merges results. Everything is file-based: YAML config, Markdown kanban boards, Maildir inboxes, and JSONL event logs. Maildir was chosen deliberately: it is battle-tested, inspectable with a plain `ls`, and survives daemon restarts. If Batty crashes mid-delivery, undelivered messages remain in the `new/` directory when it comes back, and no custom recovery logic is needed.
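To make the crash-safety argument concrete, here is a minimal sketch of Maildir-style delivery using only the Rust standard library. It is not Batty's code: the function names (`init_inbox`, `enqueue`, `pending`, `mark_delivered`) and the caller-supplied message ids are assumptions made for illustration. The point it shows is that a message is written under `tmp/`, renamed into `new/`, and only moved to `cur/` once delivered, so anything still in `new/` after a restart is simply pending.

```rust
use std::fs;
use std::io;
use std::path::{Path, PathBuf};

/// Create the three standard Maildir subdirectories under `inbox`.
pub fn init_inbox(inbox: &Path) -> io::Result<()> {
    for sub in ["tmp", "new", "cur"] {
        fs::create_dir_all(inbox.join(sub))?;
    }
    Ok(())
}

/// Queue a message: write it under tmp/, then rename it into new/.
/// The rename is atomic on a single filesystem, so a reader never
/// sees a half-written file in new/.
/// `id` is a hypothetical caller-chosen unique file name.
pub fn enqueue(inbox: &Path, id: &str, body: &str) -> io::Result<PathBuf> {
    let tmp = inbox.join("tmp").join(id);
    let new = inbox.join("new").join(id);
    fs::write(&tmp, body)?;
    fs::rename(&tmp, &new)?;
    Ok(new)
}

/// List the ids of undelivered messages (everything still in new/), sorted.
pub fn pending(inbox: &Path) -> io::Result<Vec<String>> {
    let mut ids = Vec::new();
    for entry in fs::read_dir(inbox.join("new"))? {
        ids.push(entry?.file_name().to_string_lossy().into_owned());
    }
    ids.sort();
    Ok(ids)
}

/// Mark a message as delivered by moving it from new/ to cur/.
pub fn mark_delivered(inbox: &Path, id: &str) -> io::Result<()> {
    fs::rename(inbox.join("new").join(id), inbox.join("cur").join(id))
}
```

Under this layout, "recovery" after a crash is just calling `pending` again: the directory listing is the queue.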

Each Engineer agent gets a persistent worktree under `.batty/worktrees/`, with a fresh branch cut per task rather than a freshly created worktree. That distinction keeps worktree setup overhead out of the hot path. Also notably absent from Batty's architecture: any async runtime in the hot path. The synchronous polling model keeps the control loop predictable and debuggable, a deliberate trade-off the author addresses directly in the DEV write-up.
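The persistent-worktree, fresh-branch split might look roughly like the sketch below. The `.batty/worktrees/` prefix comes from the write-up; everything else (one directory per engineer, the `engineer/task` branch naming, the helper names, and the choice of `git worktree add --detach` and `git checkout -b`) is an assumption for illustration, not Batty's actual implementation. The helpers only build the commands, so they can be inspected without touching a repository.

```rust
use std::path::{Path, PathBuf};
use std::process::Command;

/// Hypothetical layout: one persistent worktree directory per engineer.
pub fn worktree_path(repo: &Path, engineer: &str) -> PathBuf {
    repo.join(".batty").join("worktrees").join(engineer)
}

/// One-time setup per engineer:
/// `git worktree add --detach <path> <base>`, run from the main checkout.
pub fn add_worktree_cmd(repo: &Path, engineer: &str, base: &str) -> Command {
    let mut cmd = Command::new("git");
    cmd.current_dir(repo)
        .args(&["worktree", "add", "--detach"])
        .arg(worktree_path(repo, engineer))
        .arg(base);
    cmd
}

/// Per task: `git checkout -b <engineer>/<task> <base>`, run inside the
/// existing worktree. No new worktree is created on this path.
pub fn start_task_cmd(repo: &Path, engineer: &str, task_id: &str, base: &str) -> Command {
    let mut cmd = Command::new("git");
    cmd.current_dir(worktree_path(repo, engineer))
        .args(&["checkout", "-b"])
        .arg(format!("{}/{}", engineer, task_id))
        .arg(base);
    cmd
}
```

A caller would run `add_worktree_cmd(...).status()` once when an engineer is created, then `start_task_cmd(...).status()` for every task, which is the cheaper of the two operations.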

Error handling follows a similar philosophy. If a message delivery fails because a pane is dead or tmux returns an error, the message stays queued and gets retried on the next iteration. Transient errors like rate limits and timeouts are categorized separately from permanent failures, with a custom `is_transient()` check on the `DeliveryError` type.
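A rough sketch of that retry behavior follows. Only the names `DeliveryError` and `is_transient()` come from the write-up; the enum variants, which of them count as transient, and the `delivery_pass` helper are assumptions chosen to illustrate the idea of one synchronous pass over the queue.

```rust
use std::collections::VecDeque;

/// Hypothetical variants; the real `DeliveryError` may look different.
#[derive(Debug, Clone, PartialEq)]
pub enum DeliveryError {
    RateLimited,
    Timeout,
    PaneDead,
    Tmux(String),
    UnknownRecipient,
}

impl DeliveryError {
    /// Assumed classification: anything that may succeed on a later
    /// poll is transient; an unknown recipient is treated as permanent.
    pub fn is_transient(&self) -> bool {
        !matches!(self, DeliveryError::UnknownRecipient)
    }
}

/// One synchronous delivery pass over the queue.
/// Delivered messages are dropped from the queue, transient failures are
/// kept in order for the next pass, and permanent failures are returned.
pub fn delivery_pass<F>(
    queue: &mut VecDeque<String>,
    mut send: F,
) -> Vec<(String, DeliveryError)>
where
    F: FnMut(&str) -> Result<(), DeliveryError>,
{
    let mut failed = Vec::new();
    let mut retry = VecDeque::new();
    while let Some(msg) = queue.pop_front() {
        match send(&msg) {
            Ok(()) => {}
            Err(e) if e.is_transient() => retry.push_back(msg),
            Err(e) => failed.push((msg, e)),
        }
    }
    *queue = retry;
    failed
}
```

Because the pass is a plain function over a queue and a `send` closure, it can be exercised in a unit test with a fake sender, with no tmux session involved.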

The DEV post, the first in a two-part series titled "Parallel AI Agents: From Chaos to Coordination," covers not just what was built but why each architectural decision was made and what the author would change. The project's GitHub repository is at github.com/battysh/batty, documentation lives at battysh.github.io/batty, and a two-minute demo walkthrough is available alongside those resources. Issues and PRs are explicitly welcome.

For Rust developers watching the AI tooling space, Batty represents a bet that structured supervision, file-based state, and synchronous control loops will outlast flashier async orchestration approaches. With a second part of the series still to come, the architectural reflections are only half told.
