Burn emerges as Rust's flexible, portable deep learning framework

Burn is starting to look like the Rust ML crate that tries to have it all: training, inference, portability, and hardware-aware performance without picking just one.

Burn is trying to solve the Rust ML problem the hard way

The interesting question about Burn is not whether Rust can host machine learning code. It is whether one framework can stay flexible, portable, and fast without making you pay for each of those choices somewhere else. Burn’s answer is aggressive: runtime-optimized tensor streams, a just-in-time compiler, and ownership-aware tensor tracking, all wrapped in a framework that wants to cover training and inference without acting like a thin clone of PyTorch or TensorFlow.

That ambition is exactly why Burn stood out as crate of the week in This Week in Rust 650 on May 6, 2026. It was not framed as a novelty or a side project. It was presented as a tensor and deep learning library that looks serious enough to matter to Rust developers who care about numerical computing, backend flexibility, and deployment.

What Burn is actually trying to be

Burn’s docs are unusually direct about its goals. The crate page describes it as a next-generation tensor library and deep learning framework that does not compromise on flexibility, efficiency, or portability. The official documentation goes further, calling it a new comprehensive dynamic deep learning framework built in Rust, with extreme flexibility, compute efficiency, and portability as its primary goals.

That combination matters because most ML stacks force a tradeoff. One tool is fast but rigid. Another is flexible but harder to tune. Another can run almost anywhere, but only after you accept awkward backend compromises. Burn is designed to push on all three at once, and that is the part worth paying attention to if you are deciding what to build next in Rust.

The project’s own book makes another useful distinction: Burn is not merely a replication of PyTorch or TensorFlow in Rust. That matters because it tells you how to think about the framework. This is not just an attempt to recreate a familiar Python workflow with safer syntax. It is a Rust-native attempt to build a deep learning system around the language’s strengths.

Why the architecture is different

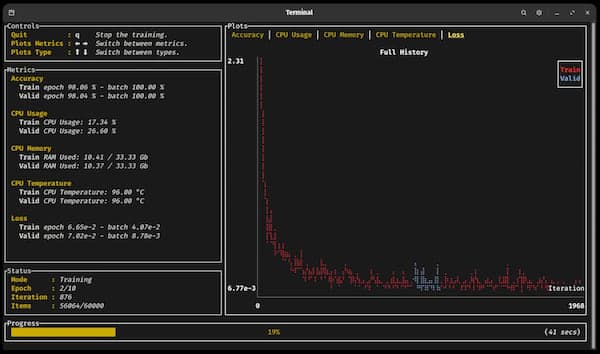

Burn’s homepage describes a tensor architecture based on tensor operation streams that are fully optimized at runtime and auto-tuned for the target hardware by a just-in-time compiler. The same material says the design relies on Rust’s ownership rules to track tensor usage precisely. That is the kind of detail that makes Burn more interesting than a generic “Rust enters AI” headline.
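As a rough illustration of what "operation streams optimized at runtime" can mean, the sketch below records element-wise operations instead of running them immediately, then executes the whole stream in one pass over the data. It is a toy written for this article using only the standard library. The names `Op` and `OpStream` are hypothetical and are not part of Burn's API, and Burn's actual JIT and auto-tuning are far more involved.

```rust
// Hypothetical sketch: none of these names come from Burn.

/// An element-wise operation recorded for later execution.
#[derive(Clone, Copy, Debug)]
enum Op {
    AddScalar(f32),
    MulScalar(f32),
    Relu,
}

/// A lazy stream that records operations instead of running them right away.
struct OpStream {
    input: Vec<f32>,
    ops: Vec<Op>,
}

impl OpStream {
    fn new(input: Vec<f32>) -> Self {
        Self { input, ops: Vec::new() }
    }

    /// Record an operation; no computation happens here.
    fn push(mut self, op: Op) -> Self {
        self.ops.push(op);
        self
    }

    /// Apply every recorded op to each element in a single pass,
    /// so no intermediate tensor is materialized between operations.
    fn execute(self) -> Vec<f32> {
        let ops = self.ops;
        self.input
            .into_iter()
            .map(|x| {
                ops.iter().fold(x, |acc, op| match *op {
                    Op::AddScalar(s) => acc + s,
                    Op::MulScalar(s) => acc * s,
                    Op::Relu => acc.max(0.0),
                })
            })
            .collect()
    }
}
```

Because nothing runs until `execute`, the runtime sees the whole chain (add, multiply, ReLU) before touching the data. That visibility is the precondition for fusing operations or choosing a kernel tuned to the hardware.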

In practice, that architecture hints at what Burn is trying to buy you: less waste in tensor handling, more precise control over data flow, and a runtime that can adapt to the machine underneath it. If you have ever hit a wall where a framework was elegant until it met real hardware, this is the part of Burn that should catch your eye.
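One way to picture ownership-aware tensor handling is a tensor that reuses its buffer when it is the sole owner and copies only when the buffer is shared. The sketch below shows that idea with the standard library's `Arc::make_mut`. The `Tensor` type and its methods are hypothetical stand-ins invented for this example, not Burn's implementation.

```rust
use std::sync::Arc;

/// Toy tensor whose buffer may be shared between several handles.
/// Hypothetical type for illustration; not Burn's `Tensor`.
#[derive(Clone)]
struct Tensor {
    data: Arc<Vec<f32>>,
}

impl Tensor {
    fn new(values: Vec<f32>) -> Self {
        Self { data: Arc::new(values) }
    }

    /// Consumes the tensor. If this handle is the only owner of the buffer,
    /// the buffer is mutated in place; otherwise it is copied first.
    fn add_scalar(mut self, s: f32) -> Tensor {
        // `Arc::make_mut` clones the Vec only when other handles still exist.
        for x in Arc::make_mut(&mut self.data).iter_mut() {
            *x += s;
        }
        self
    }

    /// Read-only view of the values.
    fn values(&self) -> &[f32] {
        self.data.as_slice()
    }

    /// Address of the underlying buffer, used only to observe reuse.
    fn buffer_ptr(&self) -> *const f32 {
        self.data.as_slice().as_ptr()
    }
}
```

Taking `self` by value is the key design choice. Once a tensor is moved into an operation, the caller can no longer use it, so the operation is free to overwrite the buffer whenever no other clone is alive.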

Its docs also say performance is a core pillar, and that the framework aims to be as fast as possible across many hardware targets while remaining robust. That combination is not a casual promise. It is the central engineering bet behind the whole project.

Where Burn fits in a Rust ML stack

If you are choosing between Burn, Candle, tch, or another Rust ML option, the practical question is not “which one is the best framework?” It is “what kind of project do you want to ship?”

Burn looks best when you care about the full path from model development to deployment, and when portability across hardware matters as much as raw throughput. It is built for training and inference, and the docs explicitly frame it that way. If your goal is to explore small models, prototype a training loop, or build inference code that has to live outside a single hardware assumption, Burn has a stronger story than a narrow wrapper around one backend.

That is where Burn differs in spirit from the more familiar Rust options. Candle and tch are often the names people already know when they first look for Rust ML tooling, but Burn is aiming at a broader problem: not just making tensors available in Rust, but making the framework itself portable across backends and hardware targets without losing too much speed or ergonomics. If Candle or tch feel like the obvious place to get moving fast, Burn is the one you reach for when you want more architectural flexibility and are willing to live with a framework that is still actively maturing.

The backend story is the real tell

Burn 0.17.0, released on April 24, 2025, is a good snapshot of where the project is headed. That release added a new Metal backend via WGPU passthrough, and the release notes say CubeCL now powers CUDA, Metal, ROCm, Vulkan, and WebGPU backends. It also expanded tensor operation fusion for element-wise ops, reductions, and matmul.

That matters because backend support is where most “portable ML” stories get messy. Supporting CUDA is one thing. Supporting Metal, ROCm, Vulkan, WebGPU, and the rest without turning the stack into a maintenance trap is a much harder problem. Burn’s release notes make it clear that portability is not a slogan here. It is the core engineering work.

For Rust developers, that opens up a concrete list of use cases:

- train small models without leaving Rust

- run inference with a framework that is built for multiple hardware paths

- experiment with backend choice without rewriting the whole stack

- target desktop, GPU, and web-adjacent environments with the same project vocabulary

That last part is especially important. Burn’s backend mix suggests a framework that wants to follow Rust into places where a conventional Python-first ML stack can feel awkward.
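Swapping backends without rewriting model code usually comes down to writing the model against an abstraction rather than a device. The sketch below shows that pattern with a generic type parameter. The names `ComputeBackend`, `NaiveCpu`, `ChunkedCpu`, and `Linear` are hypothetical and exist only for this example; they are not Burn's API, and both "backends" are plain CPU code standing in for real hardware targets.

```rust
use std::marker::PhantomData;

/// Hypothetical backend abstraction: a backend only has to provide a dot product.
trait ComputeBackend {
    fn name() -> &'static str;
    fn dot(a: &[f32], b: &[f32]) -> f32;
}

/// Straightforward element-by-element implementation.
struct NaiveCpu;

/// Same result, computed in chunks of four as a stand-in for a different device.
struct ChunkedCpu;

impl ComputeBackend for NaiveCpu {
    fn name() -> &'static str {
        "naive-cpu"
    }
    fn dot(a: &[f32], b: &[f32]) -> f32 {
        a.iter().zip(b).map(|(x, y)| x * y).sum()
    }
}

impl ComputeBackend for ChunkedCpu {
    fn name() -> &'static str {
        "chunked-cpu"
    }
    fn dot(a: &[f32], b: &[f32]) -> f32 {
        a.chunks(4)
            .zip(b.chunks(4))
            .map(|(ca, cb)| ca.iter().zip(cb).map(|(x, y)| x * y).sum::<f32>())
            .sum()
    }
}

/// Model code is written once, generic over the backend.
struct Linear<B: ComputeBackend> {
    weights: Vec<f32>,
    bias: f32,
    _backend: PhantomData<B>,
}

impl<B: ComputeBackend> Linear<B> {
    fn new(weights: Vec<f32>, bias: f32) -> Self {
        Self { weights, bias, _backend: PhantomData }
    }

    /// Single-output linear layer: dot(weights, input) + bias.
    fn forward(&self, input: &[f32]) -> f32 {
        B::dot(&self.weights, input) + self.bias
    }
}
```

Switching from `Linear::<NaiveCpu>` to `Linear::<ChunkedCpu>` changes one type annotation and leaves `forward` untouched. That is the property the article's use case list depends on.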

What the project activity says about maturity

The Burn GitHub repository shows a project that is active, visible, and still solving hard problems. At the time of writing, it reported about 15.1k stars, 903 forks, 261 open issues, and 1,035 closed issues. That is not the footprint of a dormant experiment. It is the footprint of a project that people are using, stress-testing, and pushing into new corners.

The open issue list reinforces that picture. Recent work and bug reports touch runtime backend selection, paged flash attention, FFT backward support, and backend-specific correctness and performance issues. That mix tells you two things at once. First, Burn is deep enough to attract serious usage. Second, portable high-performance ML is still a moving target, and Burn is doing the unglamorous work of ironing out the edge cases.

That is also why the project feels more relevant than a one-off framework demo. It sits in the part of the Rust ecosystem where performance, predictable memory behavior, and deployment concerns all collide. For Rust developers building real systems, that is the useful zone.

Burn is not ready-made magic, and it is not pretending to be. What it does offer is a coherent answer to a hard question: if you want deep learning in Rust, and you care about training, inference, hardware flexibility, and runtime efficiency at the same time, Burn is one of the few frameworks that is trying to earn all of those claims in the same codebase.
