Practical Strategies to Speed Up Rust Builds Locally and in CI

Slow Rust builds drain CI budgets and developer patience; these actionable strategies tackle both with cargo-native tools and workspace-level thinking.

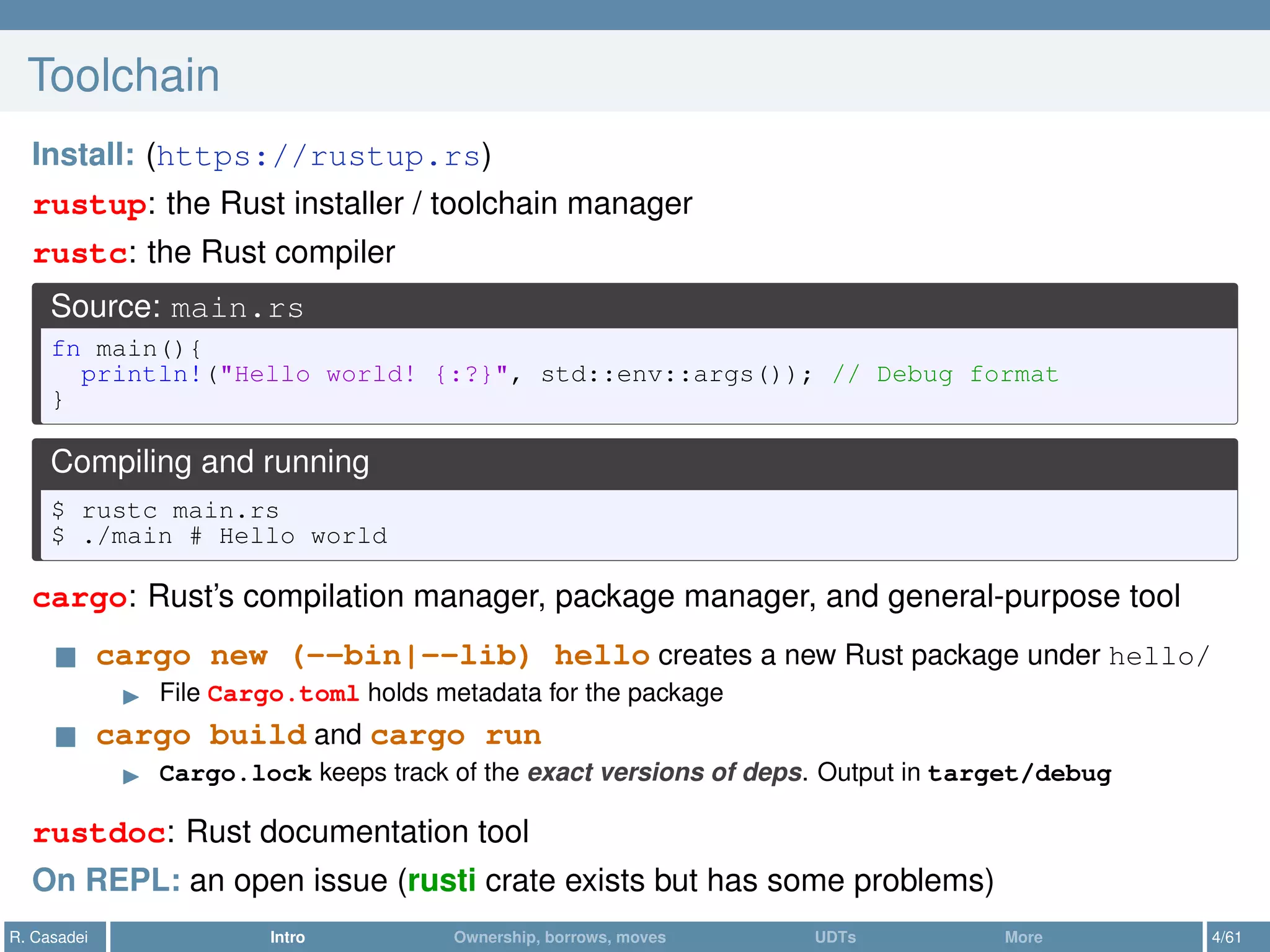

Compile times are one of the most discussed friction points in the Rust ecosystem, and for good reason. A project that takes four minutes to build locally might balloon to fifteen in CI, where cold caches and underpowered runners compound every inefficiency. The good news is that the Cargo toolchain, documented thoroughly in the Cargo book and refined through years of changelog improvements, gives you a surprisingly deep set of levers to pull. What follows is a synthesis of those recommendations alongside community best practices that engineering teams have validated in production.

Understand what's actually slow before optimizing

The single most common mistake is reaching for a tool before profiling the build. Cargo includes timing instrumentation you can enable with `cargo build --timings`, which writes an HTML report to `target/cargo-timings/cargo-timing.html` showing how long each crate took to compile and, critically, whether crates were blocked waiting on dependencies. That blocking behavior is the key insight: if your dependency graph is wide and parallel, you have headroom; if it's a long serial chain, you need to restructure before caching will help much. Running this report on both a clean build and an incremental one will reveal two entirely different bottlenecks, and conflating them leads to wasted effort.

Incremental compilation deserves its own strategy

Rust's incremental compilation, enabled by default in debug builds, saves work between rebuilds by reusing unchanged compilation units. The important nuance here is that incremental compilation is most effective when you make small, localized changes. Large refactors that touch a shared type or a widely imported module will invalidate a substantial portion of the incremental cache, making the build nearly as slow as a clean one. For local development, keeping your crates small and well-bounded is the structural investment that makes incremental compilation pay off consistently. For CI, incremental compilation is often counterproductive on runners that start fresh, since the overhead of writing and reading the incremental state can outweigh the savings on a cold cache.
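As a minimal sketch of the CI side of this advice, the fragment below turns incremental compilation off through Cargo's configuration. It assumes your CI job writes this file on the runner only, so local builds keep the default behavior; how the file gets there is left to your pipeline.

```toml
# .cargo/config.toml, created only on CI runners (assumption: your CI job
# writes this file, so developer machines are unaffected).
[build]
# Skip writing and reading incremental state on runners that start fresh.
# Exporting CARGO_INCREMENTAL=0 in the job environment has the same effect.
incremental = false
```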

Workspace layout shapes compile parallelism

The Cargo workspace model is one of the most underused performance tools available. Splitting a large monolithic crate into a workspace of smaller crates allows Cargo to compile independent crates in parallel, taking full advantage of multi-core build machines. A common recommendation is to place shared utilities and types in dedicated crates near the bottom of the dependency graph, so they compile once and are reused. Avoid hub-and-spoke clusters where many crates all depend on one heavyweight crate that changes frequently; every crate downstream of that hub must wait for it to rebuild, so it becomes a serial bottleneck that stalls the build graph each time it's touched.
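One way such a layout might look is sketched below. The crate names and directory structure are hypothetical; the point is only that `storage` and `http-api` both sit on top of a small, stable `core-types` crate and do not depend on each other, so Cargo can build them at the same time.

```toml
# Cargo.toml at the workspace root. All crate names are hypothetical.
[workspace]
resolver = "2"
members = [
    "crates/core-types",  # small, rarely changing shared types
    "crates/storage",     # depends on core-types only
    "crates/http-api",    # depends on core-types only, independent of storage
    "crates/app",         # binary crate that ties the others together
]
```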

Feature flags and build profiles are compile-time knobs

Cargo's feature flag system has a direct performance implication: the more features you enable, the more code gets compiled. In a workspace with optional integrations, enabling every feature for every build, which is a common CI default, can double or triple compile times compared to compiling only the features your tests actually exercise. Be deliberate about `--features` and `--all-features` flags in CI configuration. On the profile side, the `[profile.dev]` section in `Cargo.toml` gives you control over optimization levels, debug info, and codegen units. The `codegen-units` setting controls how many pieces each crate is split into for parallel code generation: more units generally compile faster at the cost of some runtime performance, which is an acceptable trade in a development context. The dev profile already defaults to 256 units when incremental compilation is on (16 when it is off), so pinning `codegen-units = 16` for debug builds lowers parallelism rather than raising it. The `opt-level = 0` default for dev is already fast; resist the urge to bump it locally unless you need realistic performance numbers.
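To make the two knobs concrete, here is a sketch of a manifest with optional integrations and an explicit dev profile. The package name, feature names, and choice of dependencies are illustrative assumptions, not taken from any particular project.

```toml
# Cargo.toml for a hypothetical crate; names are illustrative.
[package]
name = "app"
version = "0.1.0"
edition = "2021"

[dependencies]
# Optional dependencies are compiled only when a feature turns them on.
serde_json = { version = "1", optional = true }
tracing = { version = "0.1", optional = true }

[features]
default = []                        # nothing optional unless requested
json-export = ["dep:serde_json"]
logging = ["dep:tracing"]

[profile.dev]
opt-level = 0                # the dev default, stated explicitly
debug = "line-tables-only"   # less debug info than the full default
codegen-units = 256          # the dev default with incremental compilation on
```

With this layout, `cargo test --no-default-features --features json-export` compiles only the JSON integration, whereas `--all-features` would also pull in `tracing`.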

Caching is where CI economics are won or lost

On CI runners, every build starts from scratch unless you explicitly cache the right directories. The locations that matter most are Cargo's download caches, typically `~/.cargo/registry` and `~/.cargo/git`, and the `target/` directory specific to your project. Caching the registry avoids re-downloading and re-extracting crate sources on every run, which alone can shave minutes off cold builds. Caching the `target/` directory is more nuanced: it saves compiled artifacts but grows quickly and can become stale in ways that cause mysterious build failures. A practical middle ground is to cache the registry unconditionally and cache `target/` with a cache key that includes your `Cargo.lock` file hash, so the target cache is invalidated whenever dependencies change.

The Cargo changelog has introduced improvements to how Cargo handles the `Cargo.lock` file over time, and keeping your toolchain current means benefiting from these incremental efficiency gains without changing your configuration.

Linker choice has an outsized impact

The default system linker, traditionally `ld` on Linux, is not optimized for the relink-on-every-change workload that iterative Rust development creates. Switching to `lld` (LLVM's linker) or `mold` (a modern parallel linker) is often reported to cut link times by 50 to 80 percent on large projects. This is configured in `.cargo/config.toml` under a `[target.<triple>]` table, using the `linker` and `rustflags` keys, and the change is local to the developer or CI environment, requiring no changes to `Cargo.toml`. On macOS, `zld` was a popular alternative for some time, though Apple's own improvements to `ld` and the rise of `lld` have shifted community recommendations. The Rust community broadly considers linker switching one of the highest-leverage, lowest-effort changes available for improving build feedback loops.
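A typical configuration for this looks roughly like the following. It assumes an x86-64 Linux target with `clang` and `lld` already installed; adjust the target triple and linker name to match your environment.

```toml
# .cargo/config.toml (per project, or ~/.cargo/config.toml for every project).
# Assumes clang and lld are installed on the machine.
[target.x86_64-unknown-linux-gnu]
linker = "clang"                              # use clang as the linker driver
rustflags = ["-C", "link-arg=-fuse-ld=lld"]   # or "-fuse-ld=mold" if mold is installed
```

Because the setting lives in Cargo's configuration rather than the manifest, teammates who lack the alternative linker can simply omit the file.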

Dependency hygiene compounds over time

Every dependency you add is a crate that must be compiled, and many popular crates pull in transitive dependencies that are themselves heavyweight. Running `cargo tree` to audit your dependency graph periodically is a habit that pays dividends. Look for duplicated versions of the same crate, which force the compiler to build multiple copies, and for dependencies that are only used in tests or examples but pulled into the main build graph. Moving test-only dependencies into `[dev-dependencies]` ensures they don't inflate production build times. The Cargo book explicitly distinguishes between dependency types for this reason.
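The split between dependency tables might look like this in practice. The specific crates are only examples of libraries commonly needed just for testing.

```toml
# Excerpt from a crate's Cargo.toml; the crates listed are examples only.
[dependencies]
serde = { version = "1", features = ["derive"] }

[dev-dependencies]
# Compiled only for tests, examples, and benchmarks, never for a plain
# `cargo build` of the library or binary.
proptest = "1"
tempfile = "3"
```

Running `cargo tree --duplicates` afterwards lists any crate that still appears in the graph at more than one version.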

CI pipeline structure: don't build what you don't need

Beyond caching and tooling, the structure of your CI pipeline itself shapes performance. Running `cargo check` instead of `cargo build` for jobs that only need to validate correctness skips the code generation phase entirely, which is often the slowest part of a Rust build. Separating your pipeline into a fast check stage and a slower full-build stage lets developers get feedback on obvious errors quickly while the full artifact build runs in parallel. Similarly, `cargo test --no-fail-fast` reports every failing test in a single run instead of stopping at the first failure, and splitting workspace members across parallel jobs distributes test runtime across runner capacity rather than serializing it.
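One lightweight way to keep the two stages consistent between local runs and CI is to define them as Cargo aliases. The alias names below are hypothetical; the commands they expand to are standard Cargo invocations.

```toml
# .cargo/config.toml; alias names are hypothetical.
[alias]
# Fast stage: type-check every workspace member and target, no code generation.
ci-check = "check --workspace --all-targets"
# Slow stage: build and run all tests, reporting every failure in one pass.
ci-test = "test --workspace --no-fail-fast"
```

A pipeline can then call `cargo ci-check` in its first job and `cargo ci-test` in a later or parallel one, and developers can run the same commands locally.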

The compounding effect of these strategies is significant. Teams that have applied workspace restructuring, linker switching, selective feature compilation, and disciplined CI caching report build time reductions that bring multi-minute CI jobs down to under sixty seconds for incremental runs. None of these require exotic tooling; they're all grounded in Cargo's documented behavior and the accumulated knowledge of a community that has been wrestling with compile times as a first-class concern for years. The investment in getting this right pays back every time a developer pushes a commit.
