Rust App Optimization Uses Napkin Math to Crush Lock Contention and Memory Pressure

A widely shared case study, circulated by engineers from PlanetScale and former Cloudflare engineers, shows how napkin math plus a data-oriented redesign cut lock contention and memory pressure in a production Rust app.

Engineers who've shipped Rust in production know the pattern: your app feels fast until it doesn't, and the profiler points somewhere uncomfortable. Lock contention and memory pressure are the two villains that show up most often once you're past the "hello world" benchmarks and into real workloads. A detailed profile of one such Rust application is making the rounds precisely because it doesn't just diagnose the problem — it shows the math and the redesign decisions that actually fixed it. The fact that it's been shared widely by engineers from PlanetScale and veterans of Cloudflare's infrastructure teams tells you this isn't theoretical territory.

The Problem: Lock Contention and Memory Pressure in the Real World

Lock contention happens when multiple threads compete for the same `Mutex` or `RwLock`, and the threads that lose the race stall. In high-throughput Rust services, this can turn a theoretically concurrent system into something that behaves sequentially under load. Memory pressure is the quieter cousin: when your allocator is working overtime because you're creating and dropping lots of short-lived heap allocations, you pay in latency spikes and cache thrashing that don't always show up cleanly in flamegraphs.

What makes this particular application profile interesting is that both problems were present simultaneously. That's common in real codebases — the lock contention creates backpressure that makes memory allocation patterns worse, and the two pathologies reinforce each other. Untangling them requires understanding not just where the hotspots are, but why the data is structured the way it is.

Napkin Math as a First Diagnostic Tool

Before touching a single line of code, the optimization approach leans on napkin math: rough-order-of-magnitude calculations that tell you whether a bottleneck is even worth pursuing. This is a discipline borrowed from systems engineers at places like Cloudflare, where you need to reason about whether a given design can physically fit your performance envelope before you spend a week refactoring it.

The napkin math approach here means asking questions like: how many times per second is this lock acquired? How long is the critical section? What's the expected thread count under production load? If the answers multiply out to something that saturates a core, you have your culprit. If they don't, you look elsewhere. This kind of back-of-the-envelope reasoning is what separates engineers who optimize effectively from those who spend time tuning code paths that weren't the bottleneck.
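Those three questions reduce to one multiplication. The sketch below is an illustration, not the profiled application's code: the function name `lock_utilization` and all the input numbers are assumptions chosen to show the arithmetic.

```rust
/// Rough fraction of each wall-clock second during which one lock is held.
/// A result near or above 1.0 means the lock alone can serialize the workload.
/// All inputs are estimates; the point is the order of magnitude.
fn lock_utilization(
    threads: u32,
    acquisitions_per_thread_per_sec: f64,
    critical_section_ns: f64,
) -> f64 {
    // Aggregate acquisition rate across every thread that touches the lock.
    let total_per_sec = f64::from(threads) * acquisitions_per_thread_per_sec;
    // Nanoseconds of lock hold time demanded per second, as a fraction of 1 s.
    total_per_sec * critical_section_ns / 1e9
}
```

With hypothetical inputs of 16 threads, 50,000 acquisitions per thread per second, and a 500 ns critical section, the result is 0.4: the lock is busy 40% of the time, which is worth investigating. At 32 threads, 100,000 acquisitions, and 400 ns, the result is 1.28, which is more hold time than one second contains, so threads must queue.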

For memory pressure, the same arithmetic applies. How large is each allocation? How frequently is it created and dropped? Does the resulting allocation rate, in allocations and bytes per second, exceed what your allocator (the system allocator, `jemalloc`, or `mimalloc`) can sustain without fragmentation or latency spikes? These numbers are often knowable without a profiler, and knowing them first makes the profiler data far more interpretable.
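The allocation side can be estimated the same way. This is a minimal sketch with hypothetical names (`allocs_per_sec`, `alloc_bytes_per_sec`) and made-up inputs; it only multiplies the quantities named above.

```rust
/// Estimated heap allocations per second for a request-driven service.
fn allocs_per_sec(requests_per_sec: f64, allocs_per_request: f64) -> f64 {
    requests_per_sec * allocs_per_request
}

/// Estimated bytes requested from the allocator per second.
/// `avg_bytes_per_alloc` is a rough average, not a measured distribution.
fn alloc_bytes_per_sec(
    requests_per_sec: f64,
    allocs_per_request: f64,
    avg_bytes_per_alloc: f64,
) -> f64 {
    allocs_per_sec(requests_per_sec, allocs_per_request) * avg_bytes_per_alloc
}
```

For example, an assumed 100,000 requests per second with 20 short-lived allocations of about 256 bytes each works out to 2 million allocations and 512 MB of allocator traffic per second. Whether that is a problem depends on the allocator and workload, but it is a number you can compare against what the profiler later reports.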

Data-Oriented Redesign: The Actual Fix

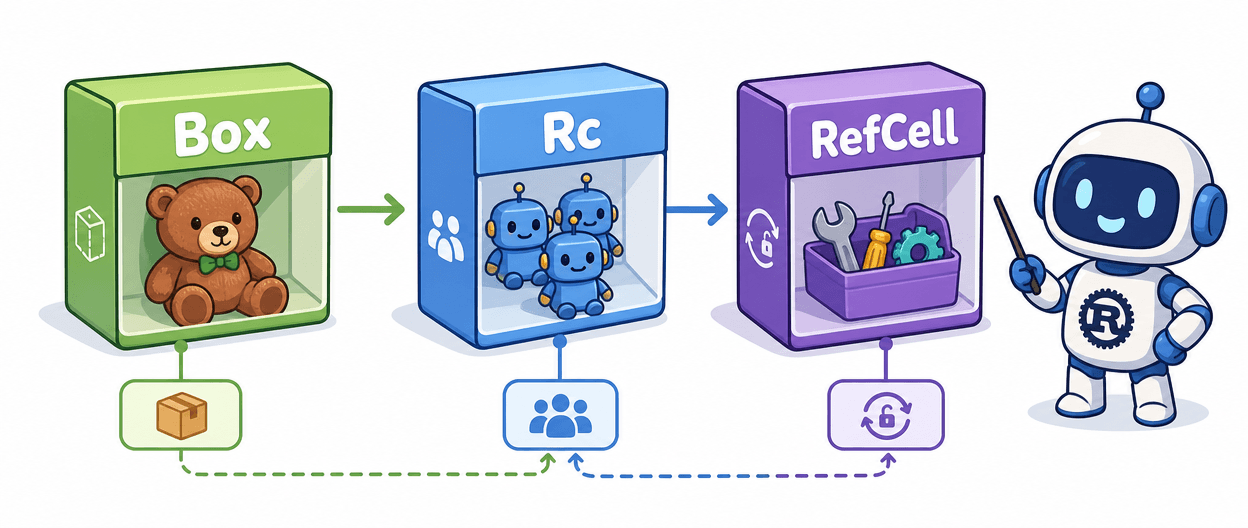

The core intervention in this profile is a data-oriented redesign. This is a specific philosophy: instead of organizing your data around the objects or abstractions that feel natural to model, you organize it around how the data is actually accessed. In Rust terms, this often means moving from a `Vec<Arc<Mutex<Thing>>>` pattern toward structures where the data is stored contiguously and ownership is managed at a higher level.
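To make the contrast concrete, here is a small sketch of both layouts. The `Thing` fields (`hits`, `bytes`) and the `Things` struct-of-arrays type are hypothetical; the source does not show the application's actual types.

```rust
use std::sync::{Arc, Mutex};

// Object-style layout: every element is its own heap allocation with its own lock.
struct Thing {
    hits: u64,
    bytes: u64,
}

// Summing one field takes a lock and follows a pointer for every element.
fn total_hits_locked(things: &[Arc<Mutex<Thing>>]) -> u64 {
    things.iter().map(|t| t.lock().unwrap().hits).sum()
}

// Data-oriented layout: each field lives in one contiguous Vec, and a single
// owner holds the whole structure. The borrow checker, not a Mutex, decides
// who may mutate it.
#[derive(Default)]
struct Things {
    hits: Vec<u64>,
    bytes: Vec<u64>,
}

impl Things {
    /// Appends an element and returns its index.
    fn push(&mut self, hits: u64, bytes: u64) -> usize {
        self.hits.push(hits);
        self.bytes.push(bytes);
        self.hits.len() - 1
    }

    /// Records one more hit of `bytes` bytes for the element at `idx`.
    fn record(&mut self, idx: usize, bytes: u64) {
        self.hits[idx] += 1;
        self.bytes[idx] += bytes;
    }

    /// Scans only the `hits` column: no locks, no pointer chasing.
    fn total_hits(&self) -> u64 {
        self.hits.iter().sum()
    }
}
```

If several threads still need to mutate `Things`, one option is a single lock around the whole structure, which trades many fine-grained acquisitions for one coarser one. Whether that trade wins is exactly what the napkin math is for.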

When you eliminate interior mutability scattered across many heap-allocated objects and instead concentrate mutation into a single, well-defined boundary, two things happen. First, lock contention drops because you're no longer acquiring dozens of fine-grained locks on the hot path — you might acquire one coarser lock less frequently, or you restructure entirely to avoid shared mutable state using channels or epoch-based reclamation. Second, memory pressure falls because you've replaced many small allocations with fewer, larger, cache-friendly ones. The allocator does less work, and the CPU cache does more useful work.
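One way to concentrate mutation behind a single boundary is to give one thread ownership of the state and have every other thread send it messages. The sketch below uses only the standard library's `std::sync::mpsc`; the function name `count_with_single_owner` and the counter workload are illustrative assumptions, not the design from the profiled application.

```rust
use std::collections::HashMap;
use std::sync::mpsc;
use std::thread;

/// Spawns `workers` threads that each report `per_worker` events, and one
/// owner thread that is the only code allowed to mutate the totals.
fn count_with_single_owner(workers: u32, per_worker: u64) -> HashMap<u32, u64> {
    let (tx, rx) = mpsc::channel::<(u32, u64)>();

    // The owner thread holds the map exclusively, so no Mutex is needed.
    let owner = thread::spawn(move || {
        let mut totals: HashMap<u32, u64> = HashMap::new();
        // The loop ends once every sender has been dropped.
        for (key, delta) in rx {
            *totals.entry(key).or_insert(0) += delta;
        }
        totals
    });

    let handles: Vec<_> = (0..workers)
        .map(|id| {
            let tx = tx.clone();
            thread::spawn(move || {
                for _ in 0..per_worker {
                    tx.send((id, 1)).expect("owner thread exited early");
                }
            })
        })
        .collect();

    // Drop the original sender so the owner can observe channel closure.
    drop(tx);
    for handle in handles {
        handle.join().expect("worker thread panicked");
    }
    owner.join().expect("owner thread panicked")
}
```

A channel is not free: it has its own internal synchronization, and sending one message per event can cost more than the lock it replaces. Batching updates before sending is a common refinement, and again the arithmetic should guide the choice.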

This is where Rust's ownership model becomes an advantage rather than an obstacle. The borrow checker forces you to be explicit about who owns data and when mutation occurs. A data-oriented redesign takes that explicitness and makes it structural: the architecture itself encodes the access patterns, rather than relying on runtime synchronization to paper over an ownership model that was never quite right.

What PlanetScale and Cloudflare Engineers Recognize in This

It is worth unpacking why this work resonated with engineers from PlanetScale and with former Cloudflare engineers. Both organizations operate infrastructure at scale, in databases and networking respectively, where the cost of lock contention and allocator pressure isn't abstract: it's milliseconds on a query or dropped packets under load.

PlanetScale, which runs a distributed MySQL-compatible database platform, has deep experience with the kind of concurrent access patterns that make lock contention a first-class concern. Ex-Cloudflare engineers have dealt with similar problems in proxies and edge infrastructure where throughput requirements are extreme. When people with that background share a piece of applied Rust optimization work, it's because the techniques generalize. The napkin math discipline and the data-oriented approach aren't specific to one codebase — they're transferable patterns.

Applying These Techniques to Your Own Rust Code

If your Rust application is showing signs of either pathology, here's where to start:

- Before profiling, do the arithmetic. Estimate your lock acquisition rate, your critical section duration, and your thread count. If the product suggests the lock is held for a large fraction of each second, you have a working hypothesis before you open `perf` or `tokio-console`.

- Audit your use of `Arc<Mutex<T>>` and `Arc<RwLock<T>>`. Every one of these is a potential contention point. Ask whether the data inside could be owned differently, batched, or accessed through a message-passing boundary instead.

- Look at your allocation patterns. Short-lived `Vec` and `String` allocations in tight loops are common culprits. Can they be reused with an object pool? Can the data be stack-allocated or stored in a pre-allocated arena?

- Consider switching allocators. Both `jemalloc` (via the `tikv-jemallocator` crate) and `mimalloc` (via the `mimalloc` crate) can reduce fragmentation and allocation latency compared to the system allocator on some workloads, with minimal code changes. Measure before and after, since the benefit varies by platform and allocation pattern.

- When you do reach for the profiler, use the napkin math results to guide where you look. Flamegraphs are dense; knowing in advance which subsystem should be hot makes the data actionable rather than overwhelming.
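As an illustration of the allocation-pattern item in the list above, the sketch below contrasts a loop that allocates a fresh `String` on every iteration with one that reuses a single buffer. The function names and the `id=` formatting are hypothetical; only standard-library calls are used.

```rust
use std::fmt::Write;

/// Allocates a new String on every iteration.
fn render_fresh(ids: &[u32]) -> usize {
    let mut total_len = 0;
    for id in ids {
        let line = format!("id={}", id); // fresh heap allocation each time
        total_len += line.len();
    }
    total_len
}

/// Reuses one buffer: `clear` resets the length but keeps the capacity,
/// so later iterations do not allocate once the buffer is large enough.
fn render_reused(ids: &[u32]) -> usize {
    let mut line = String::with_capacity(32);
    let mut total_len = 0;
    for id in ids {
        line.clear();
        write!(line, "id={}", id).expect("writing to a String cannot fail");
        total_len += line.len();
    }
    total_len
}
```

Both functions produce the same output lengths; the difference is how often the allocator is called. The same pattern applies to `Vec` buffers through `Vec::clear`.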

The broader lesson from this profile is that performance optimization in Rust is most effective when it's grounded in both measurement and design intent. Napkin math tells you what's possible; data-oriented redesign tells you how to restructure toward it. Engineers who've operated Rust services at the scale of PlanetScale and Cloudflare already know this — and the wide circulation of this particular case study suggests that combination is exactly what the community needed spelled out in concrete terms.
