SciRS2 v0.4.1 Brings Pure-Rust Scientific Computing Stack Closer to Production

SciRS2 v0.4.1 ships with zero C or Fortran dependencies and 549 passing linalg tests. Here's whether it belongs in your Cargo.toml today for something as basic as a working regression.

The question worth asking about SciRS2's March 28 v0.4.1 release isn't what changed; it's whether cool-japan's pure-Rust SciPy alternative has matured enough for actual use without a system BLAS installation or Python runtime anywhere in the chain.

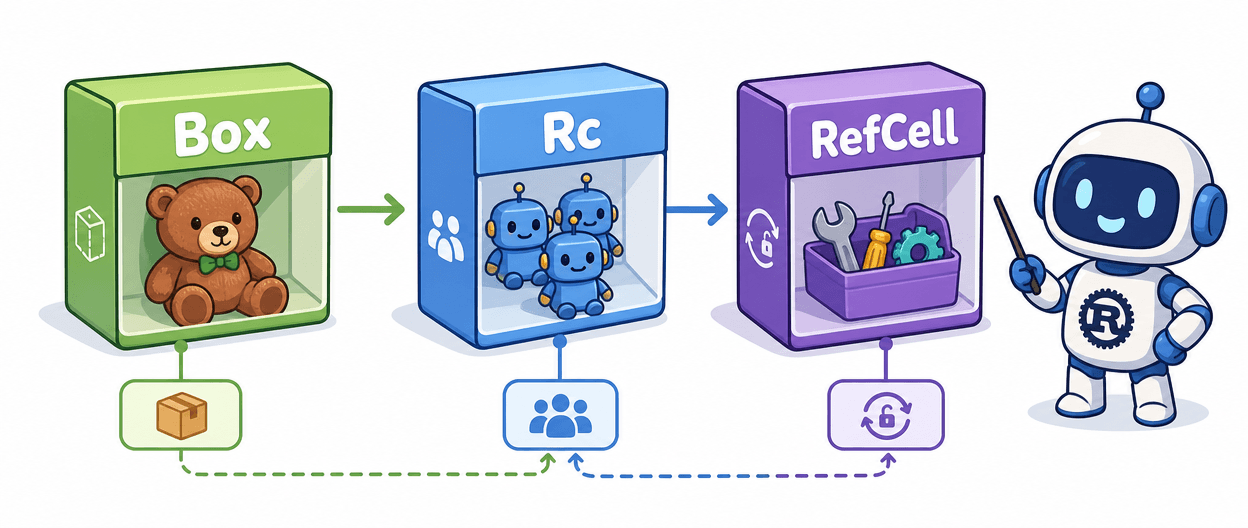

Start with the simplest viable demo: linear regression via `scirs2-linalg`. Add it to your `Cargo.toml` alongside `ndarray`, and the entire dependency tree compiles with no C or Fortran on the host. Before v0.2.0, SciRS2 required system-level LAPACK; OxiBLAS, the project's pure-Rust BLAS/LAPACK replacement, eliminated that constraint entirely. The eigendecomposition path shows how the API reads in practice:

```rust
use scirs2::prelude::*;
use ndarray::array;

fn main() -> CoreResult<()> {
    let a = array![[4.0_f64, 2.0], [1.0, 3.0]];
    let eig = linalg::eigen::eig(&a)?;
    println!("Eigenvalues: {:?}", eig.eigenvalues);
    Ok(())
}
```

The `?`-propagated errors, `CoreResult<T>` return type, and module names that mirror `scipy.linalg` are genuinely ergonomic. `scirs2-linalg` alone ships 549 passing tests in this release. The stats crate is equally clean: `Normal::new(0.0_f64, 1.0)?` followed by `.rvs(1000)?` reads exactly as expected if you're porting from SciPy, with zero surprises. Feature flags gate each of the 25-plus sub-crates individually, so `scirs2 = { version = "0.4.1", default-features = false, features = ["linalg", "stats"] }` keeps compile times from spiraling when you only need a slice of the workspace.
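For reference, the regression that the opening demo implies comes down to a small closed-form computation. The sketch below is not SciRS2's API: `fit_line` is a hypothetical helper written against the standard library only, assuming a single predictor and ordinary least squares. It is meant as a baseline to compare a library result against, not as a replacement for one.

```rust
/// Fit y ≈ intercept + slope * x by ordinary least squares (one predictor).
/// Hypothetical helper for illustration; not part of SciRS2.
/// Returns None if the slices differ in length, hold fewer than two points,
/// or x has zero variance (the slope is undefined in that case).
fn fit_line(x: &[f64], y: &[f64]) -> Option<(f64, f64)> {
    if x.len() != y.len() || x.len() < 2 {
        return None;
    }
    let n = x.len() as f64;
    let mean_x = x.iter().sum::<f64>() / n;
    let mean_y = y.iter().sum::<f64>() / n;

    // Accumulate the centered sums of squares and cross-products.
    let mut sxx = 0.0_f64;
    let mut sxy = 0.0_f64;
    for (xi, yi) in x.iter().zip(y) {
        sxx += (xi - mean_x) * (xi - mean_x);
        sxy += (xi - mean_x) * (yi - mean_y);
    }
    if sxx == 0.0 {
        return None;
    }

    let slope = sxy / sxx;
    let intercept = mean_y - slope * mean_x;
    Some((intercept, slope))
}
```

Calling `fit_line(&[0.0, 1.0, 2.0, 3.0], &[1.0, 3.0, 5.0, 7.0])` on points that lie exactly on y = 1 + 2x should recover an intercept of 1 and a slope of 2, up to floating-point rounding.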

Rough edges appear when you push past the core scientific modules. The optimizer (`scirs2-optimize`) and neural machinery are still being hardened; v0.4.1 specifically patches Adam's handling of scalar and 1x1 parameters (Issue #98). Python bindings via PyO3 for autograd, neural, and vision modules also landed in this release, which is a useful bridge, but it also signals that end-to-end pure-Rust ML workflows aren't seamless yet. When you assemble a meaningful cross-section of the workspace, compile times grow noticeably.

Here's where SciRS2 v0.4.1 sits against the usual suspects:

| Capability | ndarray+nalgebra | tch-rs | SciRS2 v0.4.1 |
|---|---|---|---|
| N-dim arrays | Strong | Via tensors | Via ndarray |
| Linear algebra | Needs LAPACK | Via libtorch | OxiBLAS (pure Rust) |
| Optimization/ML | None | Full PyTorch | Growing (25+ crates) |
| GPU support | No | CUDA/MPS | Not yet |
| Native C/C++ deps | Sometimes | ~2 GB libtorch | None |
| SciPy-like API | Partial | No | Explicit design goal |
| WASM/no-std targets | Partial | No | Yes |

If you're already on tch-rs and need GPU training, SciRS2 won't replace it. If nalgebra covers your pure linear algebra needs on embedded targets, it is the more settled code with the longer track record. But for a pipeline spanning FFT, statistics, signal processing, and basic inference with no native dependencies and no C compiler anywhere in the loop, nothing else in the Rust ecosystem attempts the same scope.

The project's claim of 10-100x SIMD performance improvements via AVX2, AVX512, and ARM NEON is worth benchmarking against your specific workload before treating it as settled. What you can verify directly is the build with no C or Fortran dependencies and the 549 passing tests in `scirs2-linalg`. For teams with strict audit policies on native code, or anyone targeting WASM or constrained environments, that combination makes v0.4.1 the most credible single-language scientific stack Rust has yet produced.
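One way to check a speedup claim against your own workload is a small timing harness. The sketch below uses only the standard library; `median_time` and `dot` are hypothetical names, and the scalar dot product is a stand-in baseline that you would replace with your real kernel and the library call you want to compare. The median is used because it is less sensitive to one-off scheduling stalls than the mean.

```rust
use std::time::{Duration, Instant};

/// Run `work` `runs` times and return the median wall-clock duration.
/// Hypothetical helper for illustration.
fn median_time<F: FnMut()>(runs: usize, mut work: F) -> Duration {
    assert!(runs > 0, "need at least one run");
    let mut samples: Vec<Duration> = (0..runs)
        .map(|_| {
            let start = Instant::now();
            work();
            start.elapsed()
        })
        .collect();
    samples.sort();
    samples[runs / 2]
}

/// Stand-in workload: a plain scalar dot product with no explicit SIMD.
fn dot(a: &[f64], b: &[f64]) -> f64 {
    a.iter().zip(b).map(|(x, y)| x * y).sum()
}
```

A typical call would be `median_time(20, || { std::hint::black_box(dot(&a, &b)); })`, built with `cargo run --release`. The `black_box` wrapper discourages the optimizer from discarding the unused result, and debug-build timings say little about SIMD paths.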
