Study Tests Security of LLM-Generated Rust Cryptography Code

Memory-safe Rust still crumbles when an LLM writes crypto: only 23.3% of samples compiled, and nonce reuse plus API hallucinations kept showing up.

Memory-safe Rust is not the same thing as safe AI-generated crypto. That is the real takeaway from this study, and it is the part worth treating as a hard warning, not a headline. If you let an LLM write cryptographic Rust for secrets, authentication, or transport security, you are stepping into one of the highest-risk corners of software development, where a small mistake can become a leaked key, a broken nonce scheme, or a silent security failure.

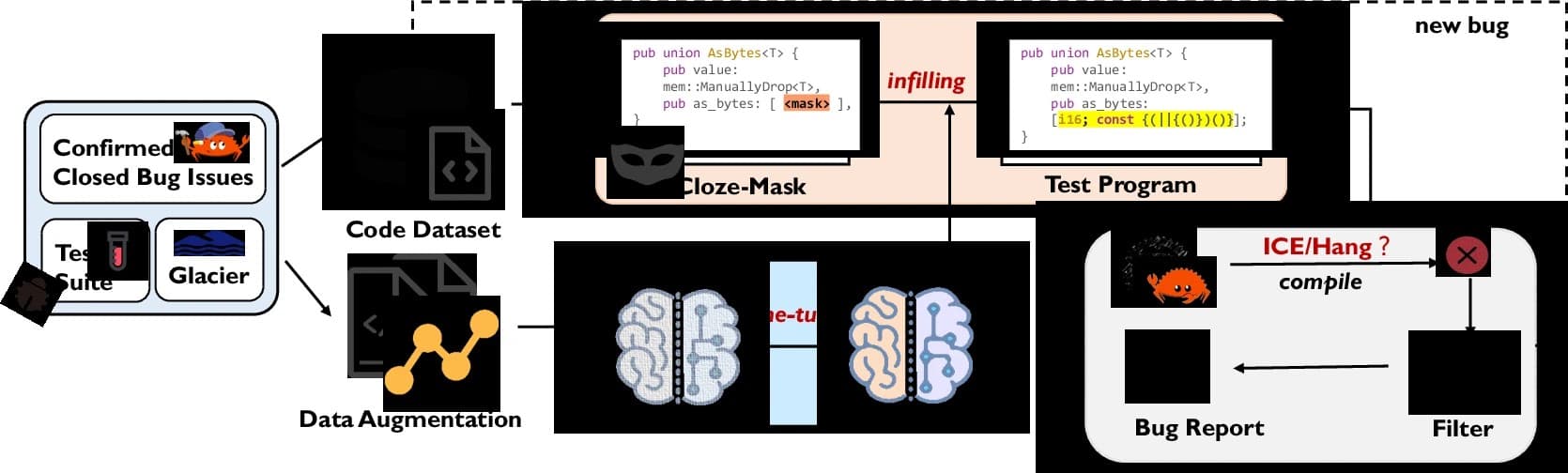

What the study actually tested

The paper, *An Empirical Security Evaluation of LLM-Generated Cryptographic Rust Code*, examines 240 Rust code samples built around two widely used AEAD algorithms: AES-256-GCM and ChaCha20-Poly1305. The samples came from three models: Gemini 2.5 Pro, GPT-4o, and DeepSeek Coder. The researchers also varied the prompting strategy across four setups to see whether model choice or prompt style changed the outcome.

That design matters because it avoids treating LLM output like one undifferentiated blob. It asks a better question: if you change the model, or change how you ask, do you get safer Rust? The answer the paper points toward is that cryptographic output still needs far more than a clever prompt.

The researchers then pushed the code through CodeQL static analysis and a rule-based crypto-specific analyzer, mapping findings to Common Weakness Enumeration categories. That gives the paper practical weight. It is not just saying “AI is risky.” It is checking the generated code for the kinds of mistakes security engineers actually triage.

Why this lands hard in Rust

Rust already has a reputation as the language you reach for when memory safety matters. That is exactly why this paper is newsworthy. The long-standing promise is that Rust helps eliminate whole classes of exploitable bugs, and the National Security Agency (NSA) said in 2022 that software memory safety issues account for a large portion of exploitable vulnerabilities. The NSA and the Cybersecurity and Infrastructure Security Agency (CISA) reinforced that message in June 2025, saying that adopting memory-safe languages directly improves software security and reduces the risk of security incidents.

But memory safety is only one layer. Cryptography has its own failure modes, and Rust does not magically save you from bad nonce handling, the wrong API call, or a hallucinated function that compiles in the model’s imagination but not in your crate. That is the key shift this study highlights: the question is no longer just whether Rust avoids memory bugs, but whether AI-generated Rust can still honor the security promises people expect from the language.

That matters across the ecosystem. GitHub said in July 2025 that CodeQL support for Rust was in public preview and could identify numerous cryptographic misuses, alongside other unsafe usage patterns. OWASP’s 2025 Top 10 also keeps cryptographic failures in the spotlight at A04:2025, including lack of cryptography, insufficiently strong cryptography, and leaking cryptographic keys. In other words, the broader security world is already treating crypto misuse as a top-tier application risk, and this paper shows why AI-written Rust belongs in that same conversation.

Where the generated code went wrong

The most striking secondary result is the compilation rate: only 23.3% of generated samples compiled successfully, which works out to roughly 56 of the 240 samples if the rate covers the full set. That alone is a red flag. Before you even ask whether the code is secure, most of it could not get through the compiler as-is.

The security failures were not random, either. All three models reportedly showed systematic problems, including nonce reuse and API hallucination. Those are exactly the sort of issues that make cryptographic code dangerous in the real world. A reused nonce can break the security assumptions of an otherwise strong algorithm, and an invented or incorrect API call can push developers into using the library in a way it was never designed to support.
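To see why a reused nonce is fatal rather than merely sloppy, consider a minimal sketch. Both AES-256-GCM and ChaCha20-Poly1305 encrypt by XORing the plaintext with a keystream derived from the key and nonce. The code below is not from the paper and does not use a real cipher: `toy_keystream`, `toy_encrypt`, and `xor` are hypothetical helpers that only imitate that structure so the arithmetic is visible.

```rust
/// Toy keystream generator. NOT a real cipher: it only stands in for the
/// keystream that a real AEAD derives from (key, nonce). The same
/// (key, nonce) pair always yields the same bytes, as in a real cipher.
fn toy_keystream(key: u64, nonce: u64, len: usize) -> Vec<u8> {
    // Mix key and nonce into a nonzero xorshift64 state (illustrative only).
    let mut state = (key ^ nonce.wrapping_mul(0x9E37_79B9_7F4A_7C15)) | 1;
    let mut out = Vec::with_capacity(len);
    for _ in 0..len {
        state ^= state << 13;
        state ^= state >> 7;
        state ^= state << 17;
        out.push((state >> 32) as u8);
    }
    out
}

/// Byte-wise XOR of two slices, truncated to the shorter one.
fn xor(a: &[u8], b: &[u8]) -> Vec<u8> {
    a.iter().zip(b).map(|(x, y)| x ^ y).collect()
}

/// "Encrypt" by XORing the plaintext with the toy keystream.
fn toy_encrypt(key: u64, nonce: u64, plaintext: &[u8]) -> Vec<u8> {
    xor(plaintext, &toy_keystream(key, nonce, plaintext.len()))
}

/// What an eavesdropper can compute from two ciphertexts alone.
/// If both were produced under the same key and nonce, the keystreams
/// cancel and the result equals plaintext1 XOR plaintext2.
fn attacker_view(ciphertext1: &[u8], ciphertext2: &[u8]) -> Vec<u8> {
    xor(ciphertext1, ciphertext2)
}
```

If two messages are encrypted with the same key and nonce, `attacker_view` returns the XOR of the two plaintexts without ever touching the key, so knowing or guessing one message reveals the other. In AES-GCM specifically, nonce reuse is also known to undermine the authentication tag, not only confidentiality.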

That combination is especially alarming because these mistakes can look “close enough” in a code review. Crypto bugs often hide in plain sight. A snippet may appear polished, idiomatic, and Rust-like while still doing something fatal under the hood. The study’s use of many generated samples, rather than a single demo, is what makes the warning more credible: it suggests the problem is repeated behavior, not a one-off stumble.

The editorial test: what you should never trust an AI to generate

If you are writing Rust and the code touches secrets, this study gives you a clear filter. Never trust an LLM to generate cryptographic code that you would not be prepared to review line by line with someone who understands the algorithm, the crate, and the threat model.

That means you should treat these areas as expert-review-only:

- nonce generation and reuse logic
- key handling, storage, and rotation
- encryption and decryption setup
- authentication and transport security code
- anything involving crate-specific crypto APIs
- any code where a hallucinated function name could change behavior silently

The practical rule is simple: let the model help with scaffolding, naming, or documentation if you want, but do not hand over the security-critical parts and assume Rust will save you. The compiler can catch type mistakes. It cannot tell you that your nonce strategy is broken, your API usage is wrong, or your implementation leaks keys.
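The compiler can only enforce what the types say, so one way a human reviewer can narrow the gap is to encode the nonce rule in the types. The following is a minimal sketch under stated assumptions, not the study's method and not any particular crate's API: `Nonce`, `NonceSequence`, and `seal_stub` are hypothetical names, and `seal_stub` performs no encryption at all. It only shows a signature that consumes its nonce.

```rust
/// A 96-bit nonce that is deliberately neither `Clone` nor `Copy`,
/// so a value can be handed to an encryption call exactly once.
#[derive(Debug, PartialEq, Eq)]
pub struct Nonce([u8; 12]);

impl Nonce {
    pub fn as_bytes(&self) -> &[u8; 12] {
        &self.0
    }
}

/// Hands out nonces built from a fixed 4-byte prefix and a 64-bit counter.
/// Assumption: one sequence per key, and the counter is never reset
/// while that key is in use.
pub struct NonceSequence {
    prefix: [u8; 4],
    next_counter: Option<u64>, // `None` once the counter space is used up
}

impl NonceSequence {
    pub fn new(prefix: [u8; 4]) -> Self {
        Self { prefix, next_counter: Some(0) }
    }

    /// Returns a fresh nonce, or `None` when the counter is exhausted
    /// (the caller should rotate the key instead of wrapping around).
    pub fn next_nonce(&mut self) -> Option<Nonce> {
        let counter = self.next_counter?;
        self.next_counter = counter.checked_add(1);
        let mut bytes = [0u8; 12];
        bytes[..4].copy_from_slice(&self.prefix);
        bytes[4..].copy_from_slice(&counter.to_be_bytes());
        Some(Nonce(bytes))
    }
}

/// Placeholder for a real AEAD "seal" call. It does NOT encrypt anything:
/// it returns the nonce bytes followed by the unchanged plaintext.
/// The point is the signature, which takes the nonce by value.
pub fn seal_stub(nonce: Nonce, plaintext: &[u8]) -> Vec<u8> {
    let mut out = nonce.0.to_vec();
    out.extend_from_slice(plaintext);
    out
}
```

Because `seal_stub` takes `Nonce` by value and the type cannot be copied, passing the same nonce to a second call is a move error at compile time. This narrows the problem but does not close it: if the counter restarts at zero after a process restart while the same key stays in use, nonces repeat, and no type signature will flag that. That is the kind of question expert review still has to answer.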

What this means for Rust teams right now

The paper fits a broader pattern that security teams are already seeing: AI assistance can accelerate development, but it does not reliably produce safe cryptography on demand. That is why the responsible workflow is still a three-part stack: Rust, static analysis, and human review.

CodeQL is especially relevant here because GitHub’s Rust support is explicitly aimed at catching cryptographic misuses. Pair that with a rule-based analyzer, then put a human in the loop who knows the difference between correct encryption code and code that merely looks advanced. If the generated snippet touches AES-256-GCM or ChaCha20-Poly1305, the review should be stricter, not looser.
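To make "rule-based analyzer" concrete, here is a deliberately crude sketch of the idea: scan source text for patterns that often signal crypto misuse and tag each hit with a CWE identifier. It is not the analyzer used in the paper, whose rules are not described here. The `RULES` table, the `Finding` type, and the `scan` function are all hypothetical, and the patterns are illustrative strings of the kind hard-coded crypto code tends to contain.

```rust
/// One hit reported by the toy checker.
#[derive(Debug, PartialEq, Eq)]
pub struct Finding {
    pub line: usize,            // 1-based line number in the scanned source
    pub cwe: &'static str,      // CWE identifier the rule maps to
    pub message: &'static str,  // short explanation for the reviewer
}

/// (substring to look for, CWE id, message). Illustrative rules only.
const RULES: &[(&str, &str, &str)] = &[
    ("[0u8; 12]", "CWE-323", "constant all-zero 96-bit value, likely a fixed nonce"),
    ("Nonce::from_slice(b\"", "CWE-323", "nonce built from a byte-string literal"),
    ("Key::from_slice(b\"", "CWE-321", "key built from a byte-string literal"),
];

/// Scans `source` line by line and returns every rule hit.
/// Text after `//` is ignored with a naive split, which would also cut
/// string literals that contain `//`.
pub fn scan(source: &str) -> Vec<Finding> {
    let mut findings = Vec::new();
    for (idx, line) in source.lines().enumerate() {
        let code = line.split("//").next().unwrap_or("");
        for &(pattern, cwe, message) in RULES {
            if code.contains(pattern) {
                findings.push(Finding { line: idx + 1, cwe, message });
            }
        }
    }
    findings
}
```

Substring matching like this produces both false positives and false negatives, which is why it belongs alongside a tool such as CodeQL that reasons about program structure, not in place of it. Its value is as a cheap first filter that puts obviously hard-coded keys and nonces in front of a human reviewer.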

The deeper lesson is that “memory-safe language” and “safe AI-generated crypto” are not the same claim. Rust can remove many classes of memory bugs, and that is valuable. But when an LLM is the author, the risk shifts upward into logic, misuse, and false confidence. The study makes that shift visible, and it gives the community a useful standard for judging AI output: if the code protects secrets, it earns trust only after expert scrutiny, not because it was written in Rust.
