ThreatFlux Releases Fast Rust Library for Reading and Writing GGUF Files

Wyatt Roersma's gguf-rs-lib v0.2.5 claims to parse large GGUF model files significantly faster than Python, with 4,087 downloads already logged on crates.io.

Wyatt Roersma, working under the ThreatFlux GitHub organization, shipped gguf-rs-lib v0.2.5 last week. The Rust library targets GGUF (GGML Universal Format), the binary format that has become the dominant way to store quantized LLMs for llama.cpp and its derivatives. As of mid-March 2026, the repository had seen activity within the past week, and the crate had logged 4,087 all-time downloads across its two published versions.

GGUF is the binary format behind most of the quantized model weights you'd pull down to run locally with Ollama or llama.cpp. Until now, tooling for inspecting and manipulating those files programmatically has leaned heavily on Python. Roersma's crate makes the explicit claim that gguf_rs can parse large GGUF files significantly faster than equivalent Python implementations, though no raw benchmark numbers or methodology accompany that claim in the current release.
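For readers who have not looked inside one of these files, the sketch below shows roughly what any GGUF parser has to do first: read the fixed header. It is not taken from gguf-rs-lib and does not use its API. The struct and function names (GgufHeader, read_gguf_header) are hypothetical, and the code assumes a little-endian file at format version 2 or later, where the header is the four-byte magic GGUF, a 32-bit version, a 64-bit tensor count, and a 64-bit metadata key/value count.

```rust
use std::io::{self, Read};

/// Fixed-size fields at the start of a GGUF file (format version 2 and later).
/// Hypothetical type for illustration; not part of gguf-rs-lib.
#[derive(Debug, PartialEq)]
struct GgufHeader {
    version: u32,
    tensor_count: u64,
    metadata_kv_count: u64,
}

/// Read a little-endian u32 from the reader.
fn read_u32_le<R: Read>(r: &mut R) -> io::Result<u32> {
    let mut buf = [0u8; 4];
    r.read_exact(&mut buf)?;
    Ok(u32::from_le_bytes(buf))
}

/// Read a little-endian u64 from the reader.
fn read_u64_le<R: Read>(r: &mut R) -> io::Result<u64> {
    let mut buf = [0u8; 8];
    r.read_exact(&mut buf)?;
    Ok(u64::from_le_bytes(buf))
}

/// Parse the GGUF header from any byte source (a file, a slice, a socket).
/// Assumes little-endian encoding; big-endian GGUF files are not handled.
fn read_gguf_header<R: Read>(r: &mut R) -> io::Result<GgufHeader> {
    // The first four bytes must spell "GGUF".
    let mut magic = [0u8; 4];
    r.read_exact(&mut magic)?;
    if &magic != b"GGUF" {
        return Err(io::Error::new(
            io::ErrorKind::InvalidData,
            "missing GGUF magic bytes",
        ));
    }

    let version = read_u32_le(r)?;
    // Version 1 stored the counts as 32-bit values, so this sketch rejects it
    // rather than misreading the fields that follow.
    if version < 2 {
        return Err(io::Error::new(
            io::ErrorKind::InvalidData,
            "GGUF version 1 headers are not handled in this sketch",
        ));
    }

    let tensor_count = read_u64_le(r)?;
    let metadata_kv_count = read_u64_le(r)?;

    Ok(GgufHeader {
        version,
        tensor_count,
        metadata_kv_count,
    })
}
```

Passing an opened std::fs::File (ideally wrapped in a std::io::BufReader) to read_gguf_header would return these three fields for a real model file. The metadata key/value pairs and tensor descriptors that follow the header are variable-length, and handling them is where a full library earns its keep.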

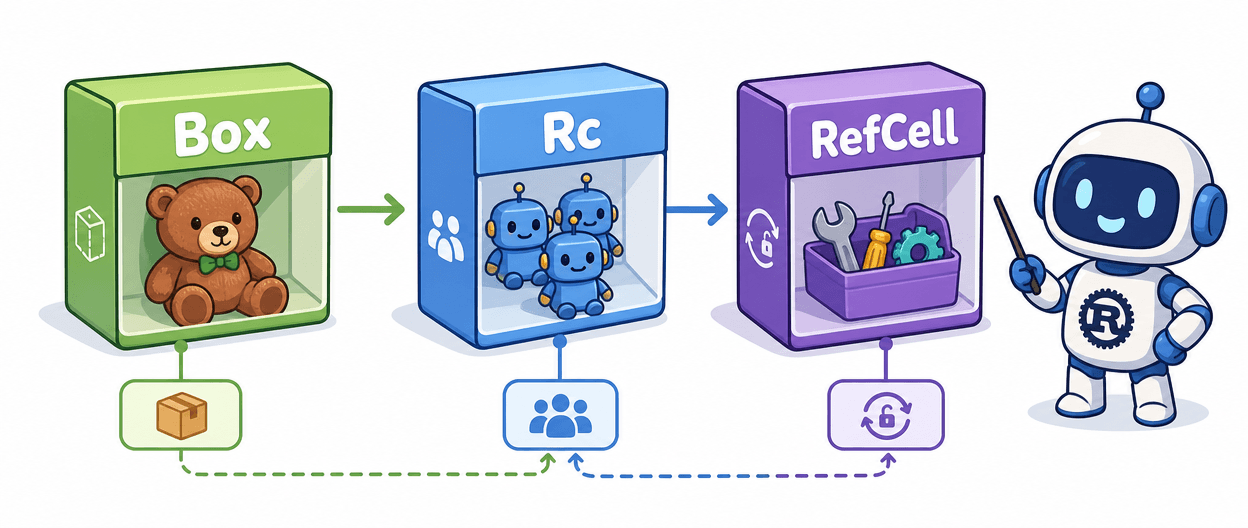

The library ships with four feature flags: std (enabled by default for standard library support), async for Tokio-backed async I/O, mmap for memory-mapped access to large files, and cli to build the bundled command-line tool. The safety posture is deliberately conservative: the crate uses only safe Rust by default, and the mmap feature, while keeping the API safe to use, carries an explicit warning about inherent platform-specific risks from the underlying memory mapping.

The included gguf-cli is the most immediately practical piece for anyone already working with GGUF files. Install it with cargo install gguf --features=cli and you get four commands worth knowing: gguf-cli info model.gguf for a quick file inspection, gguf-cli tensors model.gguf to list all tensors, gguf-cli metadata model.gguf --format json to dump metadata as JSON, and gguf-cli validate model.gguf to check file integrity. The repository includes a test_model.gguf sample file, so you can run those commands without hunting down a test weight file.

The package itself is compact at 161 KiB and 8.2K lines of source code, built against the 2021 Rust edition. Tooling files present in the repository (clippy.toml, rustfmt.toml, deny.toml, and an lcov.info coverage report) indicate the project is set up with linting, formatting enforcement, dependency auditing, and coverage tracking from the start.

Roersma's acknowledgments section credits the GGML project for the format specification and the Rust community broadly. The crate sits alongside related ML tooling in the same ecosystem, including safetensors_explorer for inspecting .safetensors and .gguf files via CLI, and llama-gguf, described as a high-performance Rust implementation of llama.cpp with full GGUF support. Documentation lives at docs.rs/gguf-rs-lib/0.2.5, and the source is browsable there as well. The MIT license makes it straightforward to pull into commercial projects.
