WebAssembly Components and Rust Offer a Leaner Path to Edge Microservices

Rust plus WebAssembly's Component Model may finally give edge and serverless architectures a credible escape from container bloat.

If you've spent any time deploying microservices at the edge, you already know the frustration: containers are heavy, cold starts are punishing, and the operational overhead of keeping a fleet of Docker images lean enough for low-latency workloads can feel like a second job. Essa Mamdani's recently published essay makes a pointed argument that this tradeoff isn't inevitable, and that the combination of WebAssembly's Component Model with Rust's performance characteristics offers a genuinely different path forward.

The timing matters here. Serverless and edge computing have matured enough that the architectural choices you make today will be load-bearing for years. Reaching for Kubernetes and OCI containers because they're familiar is understandable, but Mamdani's argument is essentially that familiarity has been masking a serious efficiency problem, one that WebAssembly components are now positioned to solve.

Why containers struggle at the edge

The containerized microservice model was designed for data center environments where you have predictable network topology, generous memory headroom, and the luxury of warm instances. At the edge, those assumptions fall apart. Containers carry entire OS-level abstractions, runtime dependencies, and initialization sequences that add latency before your code runs a single instruction. For workloads where you're measuring response time in single-digit milliseconds, that startup cost is not a rounding error; it's the difference between a viable product and one that fails its SLA on a bad day.

The weight problem compounds when you're operating across dozens of edge PoPs. Each node needs to pull, store, and orchestrate images that are orders of magnitude larger than the actual business logic they're running. The container is doing a lot of work to protect you from environment inconsistency, but at the edge, that protection comes at a steep price.

What the WebAssembly Component Model actually changes

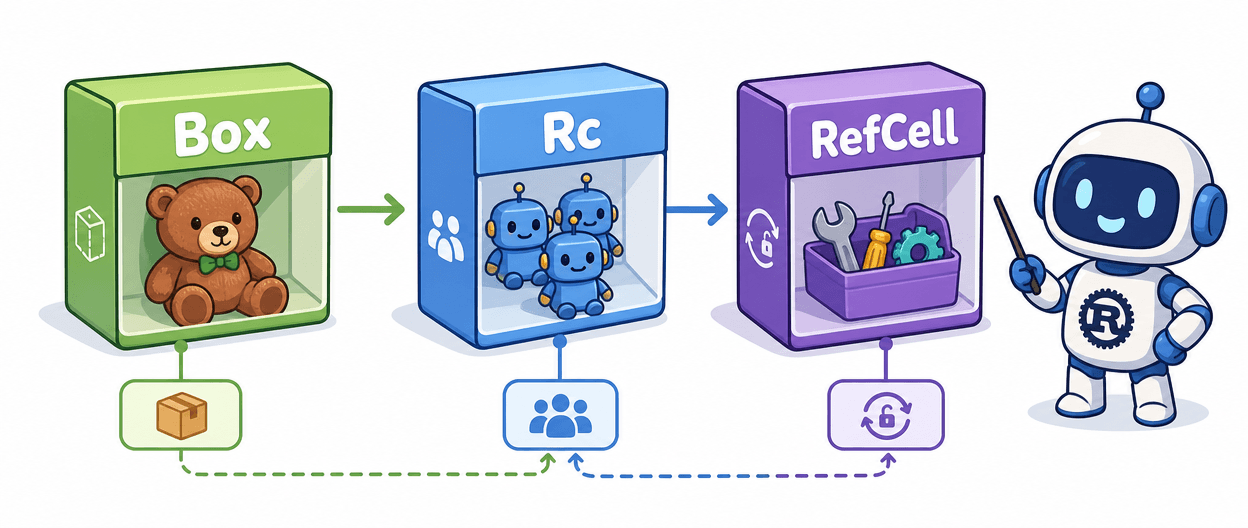

WebAssembly itself has been around long enough that calling it new would be misleading. What's new, and what Mamdani's essay focuses on, is the Component Model: a specification that allows Wasm modules to be composed with well-defined interfaces, share types across language boundaries, and be linked together without the glue code that previously made multi-module Wasm architectures painful to maintain.

The Component Model essentially gives you the compositional architecture that microservices promised, but at the module level rather than the network level. Instead of two services talking over HTTP with all the serialization, retry logic, and observability overhead that implies, you have two components with typed interfaces that can be linked and executed in the same Wasm runtime. The performance implications are significant: inter-component calls happen in-process, type-checked at the interface boundary, without a network hop.
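To make that contrast concrete, here is a minimal sketch in plain Rust rather than real component code. The `Pricing` trait stands in for the kind of typed interface the Component Model lets you declare, while `encode` and `decode` stand in for the serialization a network hop would force on you. Every name here (`PriceQuery`, `Pricing`, `FlatPricing`, the `sku|quantity` wire format) is hypothetical and chosen only for illustration.

```rust
// Illustrative only: hypothetical types, not taken from any real component.

/// Typed request shared by caller and callee.
#[derive(Debug, Clone, PartialEq)]
struct PriceQuery {
    sku: String,
    quantity: u32,
}

/// The interface contract, standing in for what a WIT interface would declare.
trait Pricing {
    fn quote_cents(&self, query: &PriceQuery) -> Result<u64, String>;
}

struct FlatPricing {
    unit_cents: u64,
}

impl Pricing for FlatPricing {
    fn quote_cents(&self, query: &PriceQuery) -> Result<u64, String> {
        if query.quantity == 0 {
            return Err("quantity must be positive".to_string());
        }
        Ok(self.unit_cents * u64::from(query.quantity))
    }
}

/// Network-style path, step 1: flatten the request into text.
fn encode(query: &PriceQuery) -> String {
    format!("{}|{}", query.sku, query.quantity)
}

/// Network-style path, step 2: parse the text back, which can fail at runtime.
fn decode(wire: &str) -> Result<PriceQuery, String> {
    let (sku, qty) = wire.split_once('|').ok_or("malformed request")?;
    let quantity = qty.parse::<u32>().map_err(|e| e.to_string())?;
    Ok(PriceQuery { sku: sku.to_string(), quantity })
}

/// What a service behind a wire protocol has to do before it can run any logic.
fn quote_over_wire(service: &dyn Pricing, wire: &str) -> Result<u64, String> {
    let query = decode(wire)?;
    service.quote_cents(&query)
}

fn main() {
    let pricing = FlatPricing { unit_cents: 250 };
    let query = PriceQuery { sku: "widget".to_string(), quantity: 4 };

    // Typed, in-process call: a malformed request is a compile error, not a 400.
    let direct = pricing.quote_cents(&query);

    // Serialized call: same answer, but with an extra encode/decode round trip.
    let wired = quote_over_wire(&pricing, &encode(&query));

    println!("direct = {:?}, via wire = {:?}", direct, wired);
}
```

Real component calls are not free, since values are still copied across the component boundary, but they avoid the socket, the text parsing, and the retry logic that the wire path implies.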

For edge deployments specifically, the sandbox model is what makes this practical rather than theoretical. A Wasm runtime like Wasmtime enforces memory isolation between components at the engine level, which means you get multi-tenant safety without running separate OS processes or containers. The attack surface shrinks, the startup time shrinks, and the artifact you're deploying is typically measured in kilobytes or a few megabytes rather than hundreds of megabytes.
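The isolation idea can be sketched in a few lines of plain Rust. This is a conceptual toy, not how Wasmtime is implemented: it assumes each guest gets its own private byte buffer (its "linear memory") and that every access is checked against that buffer's bounds, with a violation reported as an error loosely analogous to a Wasm trap. The names `LinearMemory` and `Trap` are hypothetical.

```rust
use std::ops::Range;

/// Returned instead of touching memory outside the sandbox.
#[derive(Debug, PartialEq)]
enum Trap {
    OutOfBounds { offset: usize, len: usize, size: usize },
}

/// A toy linear memory: one private buffer per guest instance.
struct LinearMemory {
    bytes: Vec<u8>,
}

impl LinearMemory {
    fn new(size: usize) -> Self {
        Self { bytes: vec![0; size] }
    }

    /// Check that [offset, offset + len) lies inside this memory,
    /// guarding against arithmetic overflow as well as overruns.
    fn check(&self, offset: usize, len: usize) -> Result<Range<usize>, Trap> {
        let end = offset
            .checked_add(len)
            .filter(|&end| end <= self.bytes.len());
        match end {
            Some(end) => Ok(offset..end),
            None => Err(Trap::OutOfBounds { offset, len, size: self.bytes.len() }),
        }
    }

    fn write(&mut self, offset: usize, data: &[u8]) -> Result<(), Trap> {
        let range = self.check(offset, data.len())?;
        self.bytes[range].copy_from_slice(data);
        Ok(())
    }

    fn read(&self, offset: usize, len: usize) -> Result<&[u8], Trap> {
        let range = self.check(offset, len)?;
        Ok(&self.bytes[range])
    }
}

fn main() {
    // Two tenants, two memories: an offset only ever indexes its own buffer.
    let mut tenant_a = LinearMemory::new(16);
    let tenant_b = LinearMemory::new(16);

    tenant_a.write(0, b"secret").expect("write is in bounds");

    println!("tenant A reads {:?}", tenant_a.read(0, 6));
    println!("tenant B reads {:?}", tenant_b.read(0, 6));
    println!("out-of-range read: {:?}", tenant_a.read(12, 8));
}
```

The point of the sketch is that a guest has no way to name another tenant's memory at all: addresses are offsets into its own buffer, and anything past the end fails rather than reading a neighbor's data.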

Where Rust fits in this picture

You could write Wasm components in several languages, and the toolchain support is improving across the board. But Rust has a structural advantage here that goes beyond benchmark numbers. Rust's ownership model removes the need for a garbage collector, which means you don't get GC pauses at the worst possible moment, and the memory footprint of a compiled Rust component is predictable in a way that JVM or Go-based alternatives generally aren't.

Memory safety without a runtime is the key phrase. At the edge, you want deterministic latency. A GC pause that adds 10-50ms to a tail request might be acceptable in a backend analytics service; it's catastrophic in an edge function sitting between a user and your product. Rust gives you the safety guarantees that C and C++ don't, while delivering the performance profile that managed runtimes struggle to match in constrained environments.

The Rust Wasm toolchain has also matured considerably. The `wasm32-wasip2` target, combined with the `wit-bindgen` tooling for generating component interface bindings from WIT (Wasm Interface Type) definitions, means the developer experience is no longer the rough prototype it was two or three years ago. You define your interfaces in WIT, generate the bindings, implement the logic in Rust, and compile to a component that any runtime implementing the Component Model can execute.
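As a rough picture of that define, generate, implement flow, the sketch below shows a hypothetical WIT interface in a comment and then a Rust implementation against a hand-written `Guest` trait. In a real project the trait and the `TokenClaims` type would come from generated bindings; here they are written out by hand so the example compiles with nothing but the standard library. The package name, the `auth` interface, and the toy `subject:expiry` token format are all assumptions made for illustration, and no real token verification is performed.

```rust
// Hypothetical WIT definition this sketch imagines (not from the essay):
//
//   package example:edge;
//
//   interface auth {
//       record token-claims {
//           subject: string,
//           expires-at: u64,
//       }
//       verify: func(token: string, now: u64) -> result<token-claims, string>;
//   }
//
//   world auth-handler {
//       export auth;
//   }

/// Stand-in for the record type a bindings generator would emit.
#[derive(Debug, Clone, PartialEq)]
struct TokenClaims {
    subject: String,
    expires_at: u64,
}

/// Hand-written stand-in for the generated trait you implement against.
trait Guest {
    fn verify(token: String, now: u64) -> Result<TokenClaims, String>;
}

struct Component;

impl Guest for Component {
    fn verify(token: String, now: u64) -> Result<TokenClaims, String> {
        // Toy format "subject:expiry"; a real handler would verify a signature.
        let (subject, expiry) = token
            .split_once(':')
            .ok_or_else(|| "token must look like subject:expiry".to_string())?;
        let expires_at = expiry
            .parse::<u64>()
            .map_err(|e| format!("bad expiry: {e}"))?;
        if subject.is_empty() {
            return Err("empty subject".to_string());
        }
        if expires_at <= now {
            return Err("token expired".to_string());
        }
        Ok(TokenClaims { subject: subject.to_string(), expires_at })
    }
}

fn main() {
    println!("{:?}", Component::verify("alice:2000".to_string(), 1000));
    println!("{:?}", Component::verify("alice:500".to_string(), 1000));
}
```

The useful property is that the interface definition is the source of truth: if the WIT signature changes, regenerated bindings change the trait, and the implementation stops compiling until it matches.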

The practical case for edge microservices

Mamdani's core argument is that the Component Model changes the calculus for edge and serverless architectures specifically because it eliminates the network as the primary composition mechanism. Traditional microservices decompose functionality across processes that communicate over the network, which is a sound pattern in a data center with sub-millisecond internal latency. At the edge, where you're already fighting for every millisecond in the path to the user, adding internal network hops for service-to-service calls is a tax the architecture can't easily afford.

Component-based Wasm flips this. Your "microservices" are now components linked within a single Wasm runtime instance. You still get the interface contracts, the separation of concerns, and the independent deployability that made microservices attractive, but the composition happens at the module boundary rather than the network boundary. The result is a composed application that weighs almost nothing and starts in microseconds.
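One way to picture composition at the module boundary is a gateway that imports an auth contract and exports a request handler, with the concrete auth implementation chosen when the pieces are linked. The sketch below uses Rust generics as an analogy for that linking step; it is not Component Model code, and `Auth`, `Handler`, `Gateway`, `StaticKeyAuth`, and `DenyAll` are hypothetical names.

```rust
/// Contract the gateway imports.
trait Auth {
    fn authorize(&self, api_key: &str) -> bool;
}

/// Contract the gateway exports.
trait Handler {
    fn handle(&self, api_key: &str, path: &str) -> (u16, String);
}

/// One auth "component": accepts a single fixed key.
struct StaticKeyAuth {
    accepted: &'static str,
}

impl Auth for StaticKeyAuth {
    fn authorize(&self, api_key: &str) -> bool {
        api_key == self.accepted
    }
}

/// A drop-in replacement that satisfies the same contract.
struct DenyAll;

impl Auth for DenyAll {
    fn authorize(&self, _api_key: &str) -> bool {
        false
    }
}

/// The gateway knows only the `Auth` contract, never a concrete type.
struct Gateway<A: Auth> {
    auth: A,
}

impl<A: Auth> Handler for Gateway<A> {
    fn handle(&self, api_key: &str, path: &str) -> (u16, String) {
        if !self.auth.authorize(api_key) {
            return (401, "unauthorized".to_string());
        }
        (200, format!("served {path}"))
    }
}

fn main() {
    // "Linking" picks the implementation; the gateway code is unchanged.
    let open = Gateway { auth: StaticKeyAuth { accepted: "k1" } };
    let locked = Gateway { auth: DenyAll };

    println!("{:?}", open.handle("k1", "/health"));
    println!("{:?}", locked.handle("k1", "/health"));
}
```

Swapping `StaticKeyAuth` for `DenyAll` touches only the line that wires the pieces together, which is the in-process counterpart of redeploying one service behind a stable API.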

This is not a universal replacement for containerized services. If your workload has heavy stateful requirements, needs POSIX system call access that Wasm's sandboxed environment doesn't support, or depends on libraries that don't yet compile cleanly to Wasm targets, containers remain the right tool. But for stateless or lightly stateful edge functions, API gateway logic, auth handlers, and similar workloads, the Component Model case is compelling.

Getting started without the friction

The practical entry point is the `cargo component` toolchain, which wraps the standard Cargo workflow with component-aware build steps. You define your component's exported and imported interfaces in a `.wit` file, let the build generate the Rust bindings through `wit-bindgen`, and then implement against those generated traits. The compiled output is a `.wasm` file that carries its type information as part of the component binary, making it introspectable and composable without external documentation.

Wasmtime is the runtime to start with for local development and testing. The `wasmtime` CLI lets you run components directly, while companion tools such as `wasm-tools` can print a component's interfaces and composition tools such as `wac` can link components together for integration testing before you ever touch a deployment pipeline. For production edge deployments, platforms built on the Wasmtime engine are beginning to support the Component Model natively, which means the artifact you test locally is the artifact that runs in production.

The direction of travel here is clear. The WebAssembly Component Model gives Rust's edge performance story a composability layer it previously lacked, and the tooling is now mature enough that reaching for it on a real project is a reasonable bet rather than an act of faith.
