UW Researcher Compares Emotional Content in AI Chatbot, Physician Patient Responses

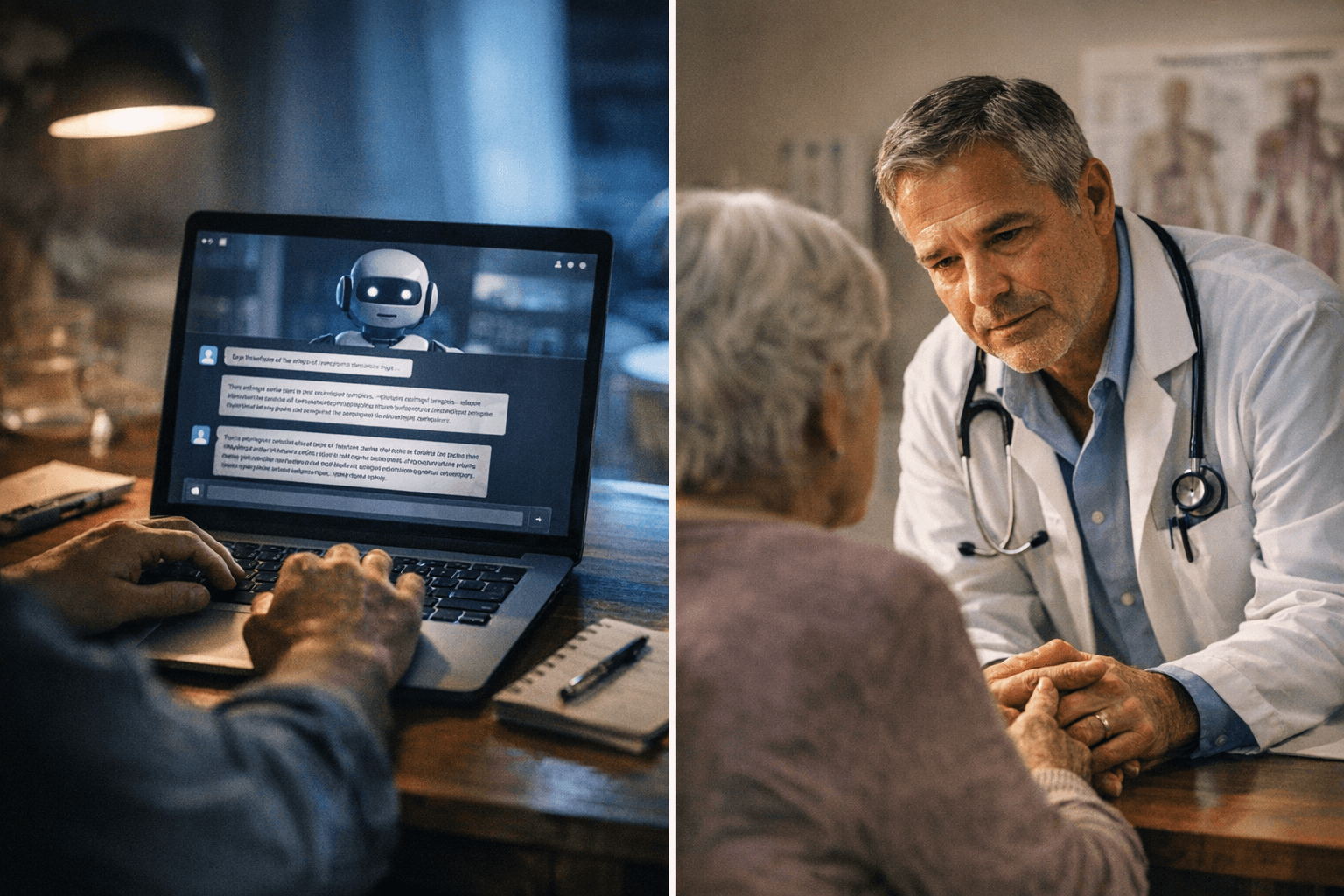

AI chatbots gave longer, more emotionally varied health answers than physicians, who were more concise and easier to read, a new University of Wyoming study found.

When patients type their health concerns into an AI chatbot, the response they get back looks and reads very differently from what a physician would write. A new peer-reviewed study from the University of Wyoming quantifies that difference, finding that responses from OpenAI's ChatGPT and Google's Gemini were generally longer and more emotionally varied than physician replies, while doctor-written answers were more concise and easier to read.

Daniel T. "Danny" Burns, a Ph.D. student in the data science track of UW's biomedical sciences program, led the research. Burns, who grew up in Wall Township, N.J., completed his master's degree in statistics at UW in 2023 before continuing into the doctoral program. His team drew on 100 real-world patient telehealth questions, running each through both AI chatbots and comparing the machine-generated answers against physician responses across four measures: emotional tone, readability, response length, and use of medical disclaimers such as "I'm not a doctor, but here is information you need."

The study was published March 6 in the Journal of Medical Internet Research, which the University of Wyoming describes as the top-ranked medical informatics journal in the world. UW announced the findings publicly on March 12.

Burns framed the emotional dimension of the research as clinically significant, not merely stylistic. "Supportive and emotionally responsive information has been shown to alleviate health-related stress, worry and depression, while also enhancing individuals' commitment to managing health risks," he said.

The work reflects a broader shift in how patients are seeking health information. Prior research has largely focused on chatbot accuracy, with some studies finding that both physicians and consumers overwhelmingly prefer chatbot-generated text over physician responses. Burns and his co-authors moved past the accuracy question to examine whether AI responses serve patients emotionally as well as informationally.

Burns collaborated with four co-authors spanning fields well beyond his own: Channing Bice from the Department of Journalism and Media Communication at Colorado State University in Fort Collins; Paul E. Johnson from the Department of Otolaryngology-Head and Neck Surgery at the University of Washington in Seattle; Nicholas Chia from Data Science and Learning at Argonne National Laboratory in Lemont, Illinois; and Timothy Robinson from UW's Department of Mathematics and Statistics.

The full article, titled "Comparison of Emotional Content in Text Responses from Physicians and AI Chatbots to Patient Health Queries: Cross-Sectional Study," is available through PubMed under PMID 41791109 and DOI 10.2196/85516. The study does not yet offer a definitive prescription for how AI tools should communicate with patients, but it establishes a baseline for understanding what patients are actually receiving when they turn to chatbots instead of, or alongside, their doctors.
