AI Health Advice Becomes Routine for Many Americans, Survey Finds

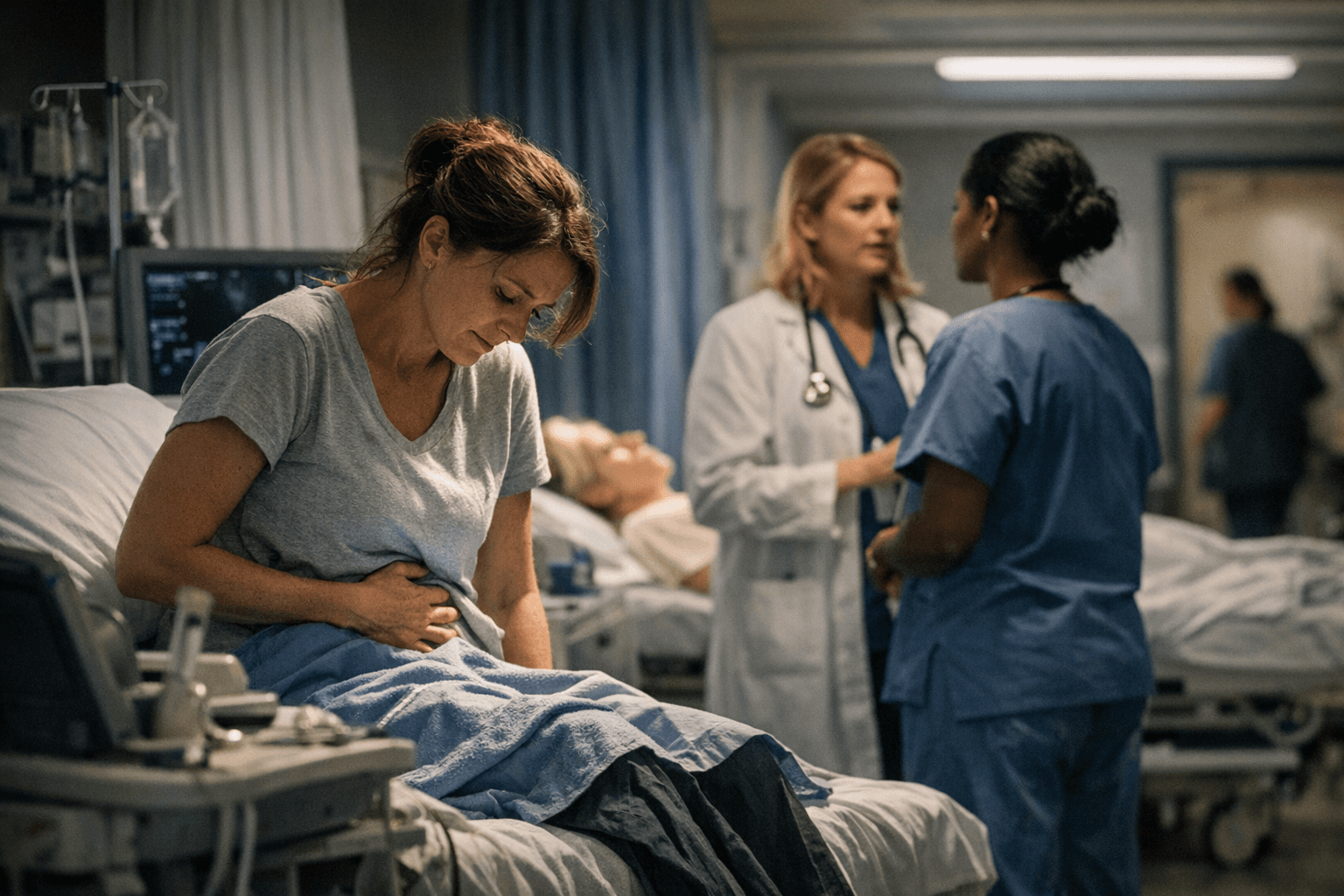

About a third of adults now use AI chatbots for health questions, often for fast, private answers before a doctor visit. Many never follow up, raising privacy and safety concerns.

For Tiffany Davis in Mesquite, Texas, a question about symptoms tied to weight-loss injections can turn into a quick ChatGPT check before she decides whether a doctor visit is worth the time. In Alabama, Rakesia Wilson uses AI tools such as ChatGPT and Microsoft Copilot to help interpret lab results and decide whether she should miss work for an appointment. Their habits reflect a larger shift in American health care behavior: AI is becoming a routine first stop for people who want faster answers than a clinic can provide.

A KFF survey found 32% of adults had turned to AI chatbots in the past year for health information or advice, including 29% for physical health and 16% for mental health. A Pew Research Center report found 22% of U.S. adults get health information from AI chatbots at least sometimes. A Gallup poll from the same period pointed the same way, with about one-quarter of adults saying they had used AI for health information or advice in the previous 30 days.

The appeal is less about replacing doctors than getting unstuck. KFF found 65% of AI health users said they wanted quick or immediate advice, 41% used it to look something up before seeing a provider, and 36% said they felt more comfortable asking privately. That matters in communities where care is expensive or hard to reach. Nineteen percent of users cited not being able to afford care, and 18% cited not having a regular doctor or not being able to get an appointment. Those barriers were even more common among adults under 30 and people with incomes below $40,000.

The survey data also points to a public health risk. KFF found 42% of people who asked AI about physical health did not follow up with a doctor or other health professional afterward, and 58% of those asking about mental health did not follow up. That leaves room for incomplete or incorrect advice to shape decisions about symptoms, medications or whether a problem is serious enough to get care.

Privacy is another fault line. KFF found 77% of the public is concerned about the privacy of personal medical information provided to AI tools. Pew found that many users regard AI health information, much as they regard social media posts, as convenient and easy to understand but not necessarily highly accurate. OpenAI’s usage policies, effective Oct. 29, 2025, prohibit tailored advice that requires a license, including medical advice, without appropriate involvement by a licensed professional.

Dr. Karandeep Singh of University of California San Diego Health has described AI as an upgraded version of Google-style health searching, and that is exactly what is pulling many Americans in. For people facing long waits, weak insurance or no regular clinician, the technology can be a bridge into care. But when that bridge becomes the only stop, the risk is that convenience outruns judgment.
