AI Models Using Routine Heart Scans Are Finding Advanced Heart Failure Sooner

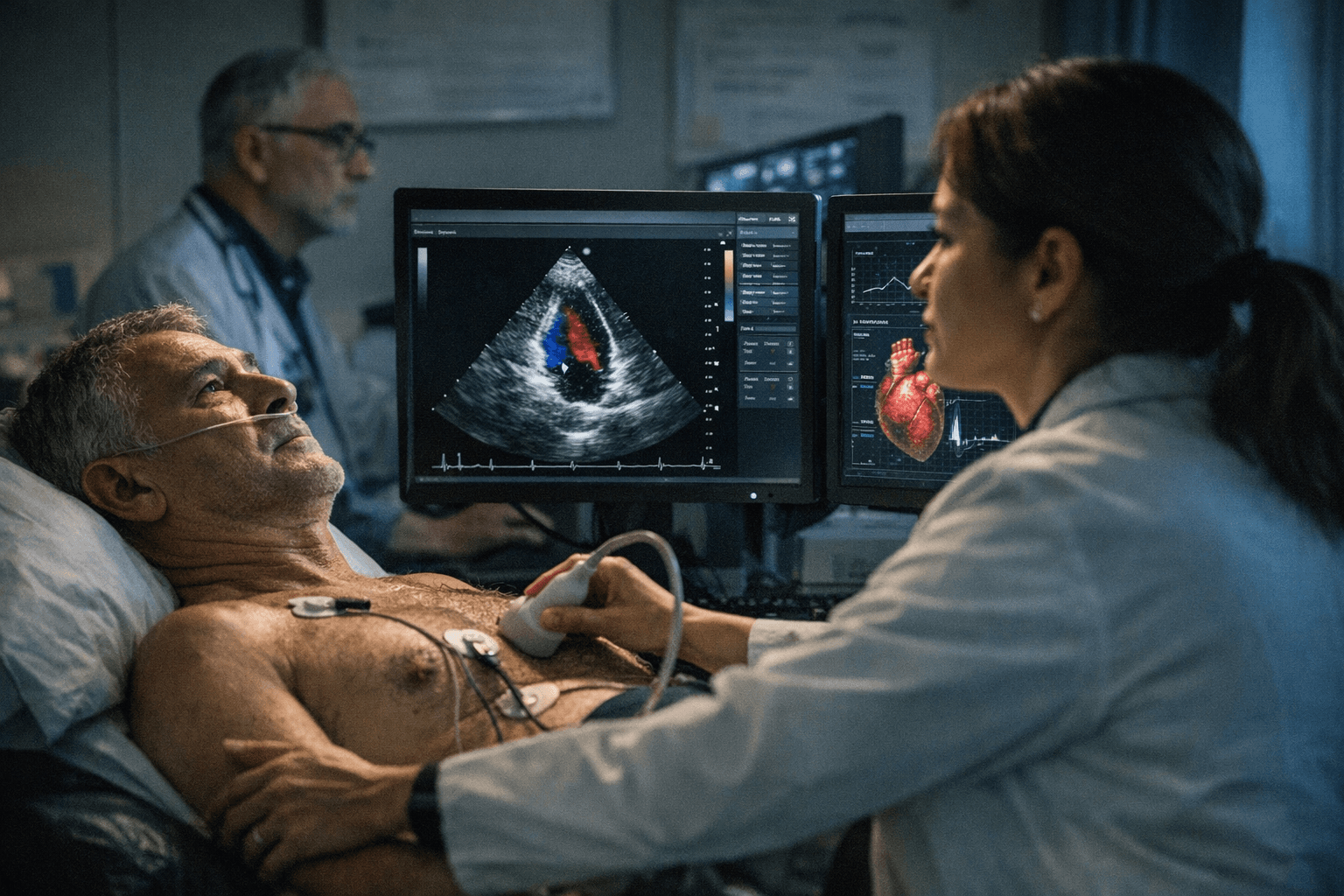

Four research teams have built AI tools that read routine cardiac tests, mostly echocardiograms, to catch heart disease earlier, potentially reaching thousands of patients who would otherwise go undiagnosed.

Roughly 200,000 Americans live with advanced heart failure, yet only a fraction receive appropriate care each year. A cluster of new artificial intelligence tools, developed independently at some of the country's top medical centers, may be about to change that.

A team led by researchers at Weill Cornell Medicine published findings in npj Digital Medicine showing that a multimodal machine learning model could predict peak oxygen consumption, the key measurement from cardiopulmonary exercise testing (CPET), by combining routine echocardiogram images with electronic health record data. That measurement, known as peak VO2, is the standard way clinicians determine whether a heart failure patient has progressed to an advanced, high-risk stage. The problem is that CPET requires specialized equipment and trained staff found almost exclusively at large academic medical centers, creating a diagnostic bottleneck that leaves many patients unidentified and undertreated.

The Weill Cornell model, developed by an AI team led by Dr. Wang with lead authors Dr. Zhe Huang and Dr. Weishen Pan, was trained on de-identified data from 1,000 heart failure patients seen at NewYork-Presbyterian/Columbia University Irving Medical Center. When it was tested on 127 patients from three other NewYork-Presbyterian campuses, the researchers report, it predicted peak VO2 with high accuracy.

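That matters most for patients in community settings, who currently have no practical path to a CPET evaluation: a model that works from an echocardiogram they may already have had could flag them for advanced heart failure care.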

Meanwhile, a separate effort at Kaiser Permanente produced EchoPrime, described as the largest echocardiography AI model built to date. The vision-language model, published in Nature, was trained on more than 12 million cardiac ultrasound videos paired with expert cardiologists' interpretations and can generate comprehensive echocardiogram reports. The National Heart, Lung, and Blood Institute funded the study, and a clinical trial of the model is now underway at Kaiser Permanente Northern California.

"We built this AI model to help clinicians quickly and easily provide more accurate and precise interpretations of echocardiograms to patients," said David Ouyang, a research scientist with the Kaiser Permanente Division of Research and a non-invasive cardiologist and echocardiographer with The Permanente Medical Group. The team has also released EchoPrime as open-source software, making it freely available to other researchers.

At NewYork-Presbyterian and Columbia, Dr. Pierre Elias and his team built a deep learning laboratory called CRADLE, which has aggregated 13 million electrocardiograms, more than 2 million echocardiograms, and a million cardiac catheterizations into a centralized database. Their model, EchoNext, reads electrocardiograms (ECGs), a cheaper and more widely available test, to flag patients likely to have structural heart disease that an echocardiogram would reveal. It was trained on more than a million paired ECG-echocardiogram records drawn from what the team describes as the most racially and ethnically diverse cardiovascular AI dataset yet assembled. In a head-to-head comparison with physician reads of 3,000 electrocardiograms, EchoNext achieved a positive predictive value of 74 percent for new structural heart disease diagnoses, meaning roughly three of every four patients it flagged were correctly identified. The team also reports that the tool produced a 20 percent increase in new diagnoses of cardiac amyloidosis, a frequently missed and dangerous condition, at one of the country's busiest cardiac centers.

Yale School of Medicine researchers contributed their own system, PanEcho, described in JAMA, which can perform 39 distinct diagnostic tasks from multi-view echocardiography and interpret full studies in minutes. "We developed a tool that integrates information from many views of the heart to automatically identify the key measurements and abnormalities that a cardiologist would include in a complete report," said Greg Holste, a PhD student at the University of Texas at Austin and co-first author of the study. The Yale team also validated the model on point-of-care ultrasounds from the Yale New Haven Hospital emergency department, suggesting it can function in lower-resource clinical settings.

Taken together, the four efforts signal a shift in cardiac diagnostics: AI is no longer confined to single-task analyses of isolated scans. The harder question now is how quickly these tools move from research publications into the clinical workflows of the community hospitals and rural health systems where the diagnostic gap is largest.
