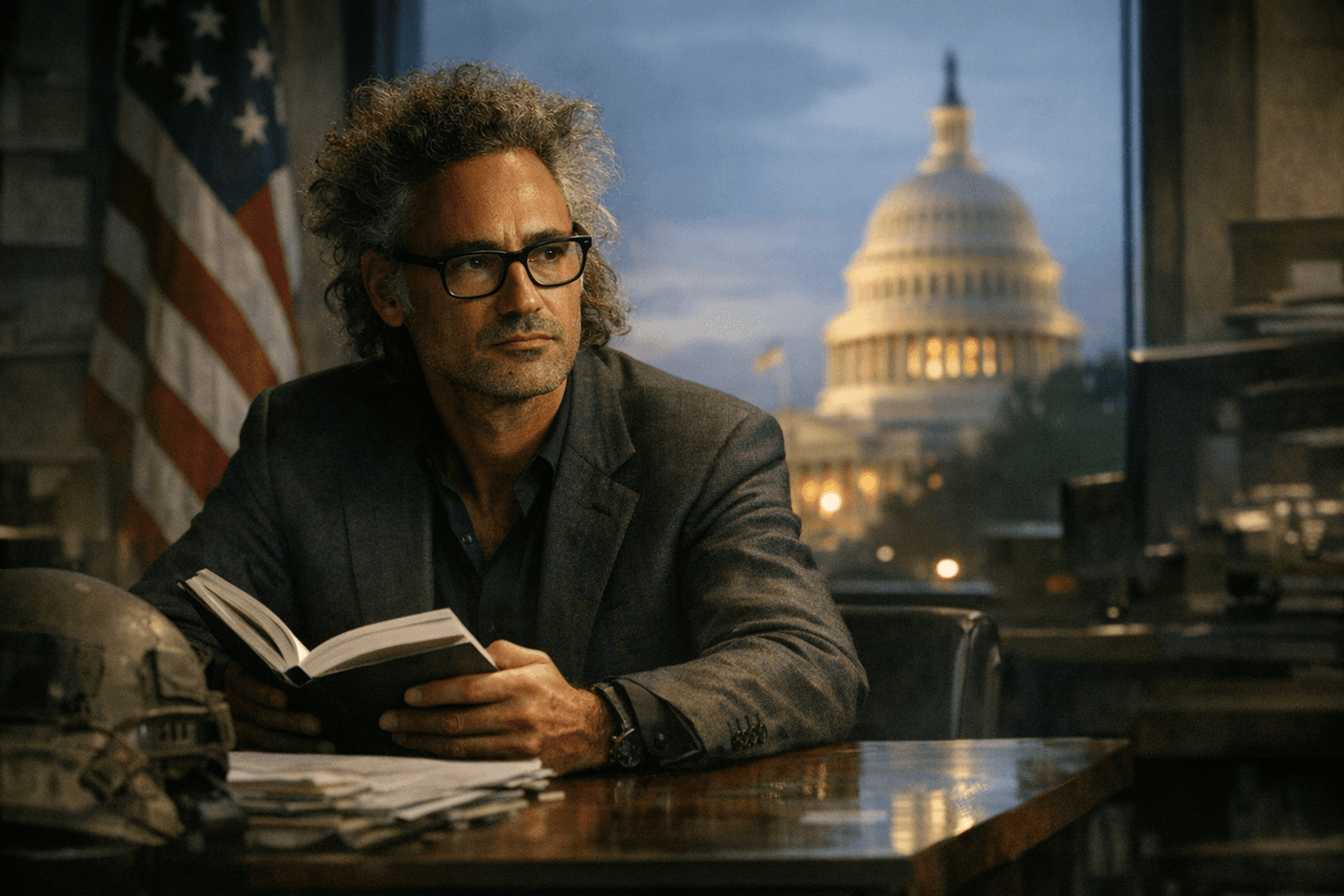

Anthropic probes claims of unauthorized access to unreleased Claude Mythos Preview

Anthropic is probing claims that a small group reached its unreleased Mythos Preview through a third-party vendor environment, while the company says its own systems were not compromised.

Anthropic is investigating claims that unauthorized users accessed its unreleased Claude Mythos Preview through a third-party vendor environment, but the company says it has found no evidence its own systems were compromised. That distinction is central: the allegation points to possible misuse of access outside Anthropic’s core network, not a confirmed breach of the company’s internal defenses.

The reported access concerns a model Anthropic has presented as a major cybersecurity system, not a general-purpose release. Anthropic said on April 7 that it was limiting Mythos Preview to a tightly controlled initiative called Project Glasswing, with access granted only to selected partners for defensive security work. The launch group included Amazon Web Services, Apple, Broadcom, Cisco, CrowdStrike, Google, JPMorganChase, the Linux Foundation, Microsoft, NVIDIA and Palo Alto Networks. Anthropic has said the model was not intended for public release and that broader deployment would wait until additional safeguards were in place.

The new allegations sharpen questions about how outsiders can still reach a restricted frontier model. A small group of unauthorized users reportedly accessed Mythos on the same day Anthropic announced limited testing, and the group reportedly came from a private online forum focused on unreleased AI models. Anthropic said the users tried several methods to gain access, including using the credentials of a person who had been interviewed and who works for a third-party contractor. The company said it is still reviewing the claims and has so far seen no sign that its own systems were affected.

The stakes are high because Anthropic has repeatedly warned that Mythos is unusually powerful and could be misused for cyberattacks. The model's first public exposure came after a March 26 leak of draft materials from a publicly accessible data store, which Anthropic said resulted from human error in its content-management configuration. In that material, Anthropic described the model as a step change in capability and the most capable model it had built to date. The company also said Mythos Preview had already identified thousands of zero-day vulnerabilities, including flaws in major operating systems and web browsers.

The episode lands as frontier AI security becomes an increasingly institutional issue, not just a technical one. Anthropic said in November that it disrupted an AI-assisted cyber-espionage campaign it attributed to a Chinese state-sponsored group designated GTG-1002, and said the actor used Claude Code to automate much of the attack lifecycle. Taken together, the allegations around Mythos and the earlier espionage disclosure show a company trying to secure a powerful model before wider release, while confronting the possibility that access controls can fail even when the core system remains intact.
