AWS Opened Its Secret Trainium Lab and the AI Chip Race Just Changed

AWS just gave reporters a rare look inside its Trainium chip lab, revealing the custom silicon strategy at the heart of its challenge to Nvidia's AI dominance.

Chip labs rarely open their doors without a reason. When TechCrunch published an exclusive tour of AWS's Trainium development lab on March 22, 2026, the timing was deliberate: Amazon is signaling that its proprietary silicon strategy has matured enough to be shown. According to Techbuzz Ai, the lab sits at the center of Amazon's reported $50 billion OpenAI investment, and it has already attracted high-profile customers including Anthropic and Apple. What journalists saw inside shows how AWS intends to reshape the economics of AI training and challenge Nvidia, which, according to Techbuzz Ai, controls over 90% of the AI chip market.

1. A Decade of Custom Silicon

AWS's Trainium ambitions did not emerge overnight. As Aimagazine's Kitty Wheeler reported in September 2025, the company's custom chip strategy began nearly a decade ago with the acquisition of Israeli chip designer Annapurna Labs. That purchase seeded AWS's internal silicon engineering capability and eventually produced multiple generations of Graviton ARM-based processors, which gradually reduced AWS's dependence on Intel and AMD for general computing workloads. Trainium represents the next, more ambitious phase of that same philosophy: rather than competing with rivals for the same general-purpose processors, AWS designs chips tailored specifically to its own data centers and customer requirements. As Aimagazine put it, the vertical integration approach allows the company to optimize "every component of its hardware stack, from individual transistors to entire server configurations."

2. What Trainium Is Built to Do

Trainium chips are purpose-built for AI training workloads, which demand massive parallel processing power to develop machine learning models capable of recognizing patterns across vast datasets. During the lab tour reported by Techbuzz Ai, engineers demonstrated how the chips handle the large matrix multiplications that are essential for transformer architectures, the computational foundation of modern large language models. This is not incidental engineering: transformer-based models underpin nearly every frontier AI system in production today, from conversational assistants to multimodal reasoning systems. The chips are optimized for those compute patterns rather than for the broader range of workloads that general-purpose GPUs must accommodate.
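To make those matrix multiplications concrete, here is a minimal NumPy sketch of single-head scaled dot-product attention, the core operation of a transformer layer, together with a rough count of the floating-point operations in its two matrix products. The function names and shapes are illustrative assumptions; nothing here comes from the lab tour or from AWS's software stack.

```python
import numpy as np

def matmul_flops(m, k, n):
    """Approximate FLOPs for an (m x k) @ (k x n) product:
    one multiply and one add per accumulated term."""
    return 2 * m * k * n

def attention(q, k, v):
    """Single-head scaled dot-product attention, no masking.
    q, k, v are arrays of shape (seq_len, head_dim)."""
    d = q.shape[-1]
    scores = q @ k.T / np.sqrt(d)                  # (seq_len, seq_len)
    scores -= scores.max(axis=-1, keepdims=True)   # stabilize the softmax
    weights = np.exp(scores)
    weights /= weights.sum(axis=-1, keepdims=True)
    return weights @ v                             # (seq_len, head_dim)

def attention_matmul_flops(seq_len, head_dim):
    """FLOPs spent in the two matrix products of one attention head."""
    qk = matmul_flops(seq_len, head_dim, seq_len)  # q @ k.T
    wv = matmul_flops(seq_len, seq_len, head_dim)  # weights @ v
    return qk + wv
```

Because both products scale with the square of the sequence length, doubling the context roughly quadruples this part of the work, which is one reason training hardware is built around fast, dense matrix multiplication.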

3. UltraServers and the Interconnect Advantage

Individual chips are only part of the story. Aimagazine reported that AWS has developed server systems called UltraServers, which incorporate multiple Trainium chips working in coordination to create computing clusters capable of handling the most demanding machine learning workloads. Techbuzz Ai added a critical architectural detail: a custom interconnect fabric that "allows thousands of chips to work in parallel without the communication bottlenecks that plague traditional GPU clusters." In large-scale AI training, communication overhead between chips is one of the primary performance constraints; the interconnect architecture AWS has built is designed to attack that bottleneck directly. Together, UltraServers and the custom fabric represent a system-level bet, not just a chip-level one.
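A back-of-the-envelope model shows why communication overhead matters. The sketch below estimates the time for a ring all-reduce, a collective operation widely used to synchronize gradients across accelerators, and the share of a training step it consumes. This is a generic textbook cost model, not a description of AWS's interconnect; the function names and every parameter value a reader plugs in are hypothetical.

```python
def ring_allreduce_seconds(num_chips, payload_bytes, link_bytes_per_s,
                           hop_latency_s=0.0):
    """Estimate ring all-reduce time under a simple latency/bandwidth model.

    The payload is split into num_chips chunks. The ring takes
    2 * (num_chips - 1) steps, and in each step every chip sends one
    chunk to its neighbor.
    """
    if num_chips < 1:
        raise ValueError("num_chips must be at least 1")
    if num_chips == 1:
        return 0.0  # nothing to synchronize
    steps = 2 * (num_chips - 1)
    chunk_bytes = payload_bytes / num_chips
    return steps * (hop_latency_s + chunk_bytes / link_bytes_per_s)

def communication_fraction(compute_s, comm_s):
    """Share of a step spent communicating, assuming no compute/comm overlap."""
    return comm_s / (compute_s + comm_s)
```

In this model the bandwidth term approaches twice the payload divided by link speed as the chip count grows, while the latency term grows linearly with the number of chips. Both per-hop latency and link bandwidth therefore become first-order concerns at the scale of thousands of chips.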

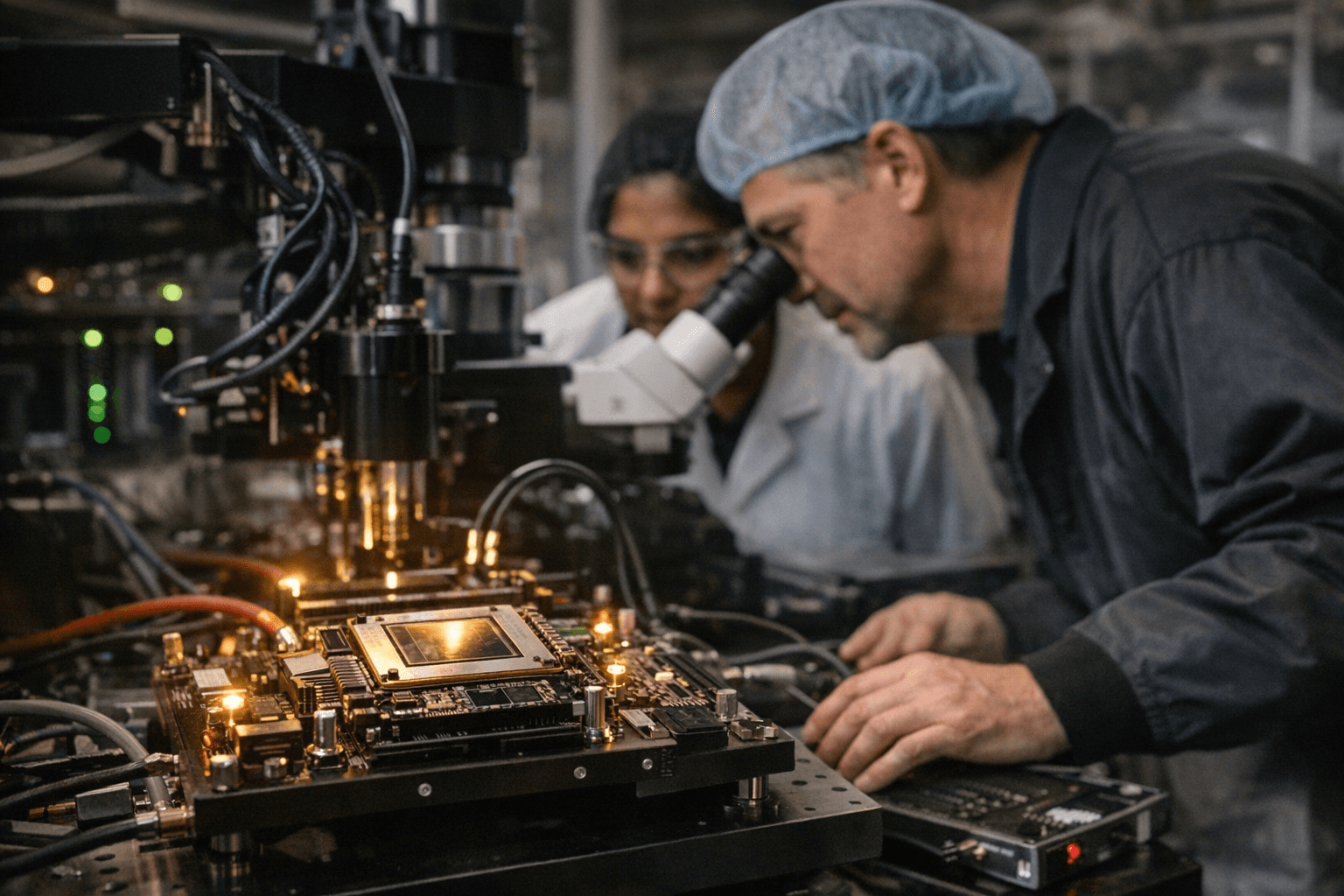

4. Inside the Lab

The behind-the-scenes access described by both TechCrunch and Techbuzz Ai offered a look at the engineering work behind these claims. Inside the facility, rows of Trainium chips undergo stress testing and optimization for different AI workloads. Engineers on-site demonstrated the chips handling matrix multiplication at scale, illustrating the kind of computational throughput required to train modern large language models. The tour showed engineering demonstrations, not independently validated benchmarks: the reporting describes observed processes and claimed capabilities, but the available excerpts disclose no quantified performance metrics such as FLOPS, throughput figures, or head-to-head comparisons with Nvidia hardware.

5. The Economics Driving the Bet

The financial logic behind Trainium is straightforward even if the execution is not. Techbuzz Ai noted that training frontier AI models has become extraordinarily expensive, with some estimates placing GPT-4's training costs north of $100 million. Nvidia's chips command premium pricing because, as Techbuzz Ai reported, there are no viable alternatives at scale, a position reinforced by the company's estimated market share of more than 90% in AI chips. AWS is betting that Trainium can deliver comparable performance at significantly lower cost. Because the chips are offered exclusively through AWS cloud services, Amazon also captures the full economic value chain rather than simply selling hardware. As Techbuzz Ai observed, "the economics driving this shift are impossible to ignore."

6. Customers and Strategic Positioning

The customer traction reported around Trainium signals that the technology has moved beyond internal use. Techbuzz Ai reported that AWS has already landed Anthropic and Apple as customers for its Trainium-based offering. Anthropic, one of the most closely watched AI safety and model development companies, is itself partly Amazon-backed, making the relationship strategically layered. Apple's reported involvement is notable given the company's historically tight control over its own silicon supply chain. Techbuzz Ai also linked the lab directly to Amazon's reported $50 billion OpenAI investment, framing Trainium as the infrastructure layer underpinning that partnership. These claims are sourced to Techbuzz Ai alone and have not been independently corroborated in available reporting from TechCrunch or Aimagazine.

7. Manufacturing Demand Has Outrun Plans

Market response to Trainium has not been a slow build. Aimagazine reported that demand exceeded expectations, forcing AWS to expand manufacturing commitments well beyond original projections. An AWS figure identified only as "Matt" told Aimagazine: "We're seeing significant interest in these chips. We've gone back to our manufacturing partners multiple times to produce much more than we'd originally planned." The timing matters: Aimagazine noted that demand for AI computing resources has broadly outpaced traditional semiconductor supply chains, making the ability to control one's own chip roadmap a strategic asset rather than simply a cost-reduction play.

8. What Remains Unverified

Responsible coverage of Trainium requires distinguishing between what has been demonstrated and what has been claimed. No hard performance benchmarks comparing Trainium to Nvidia GPUs appear in any available reporting. The $50 billion OpenAI investment figure, the Nvidia market share estimate, the GPT-4 training cost figure, and the named customer list all originate from Techbuzz Ai and have not been corroborated by additional sources in available excerpts. The AWS figure quoted by Aimagazine is identified only by first name. Manufacturing partners, chip specifications, pricing, and regional availability have not been publicly confirmed. The competitive picture AWS is drawing is credible in its strategic logic; whether Trainium's silicon delivers on the performance promise at the scale required is a question that no public benchmark has yet answered.

AWS has spent nearly a decade building toward this moment. The Trainium lab tour signals that Amazon believes its proprietary infrastructure stack is ready for scrutiny and, more importantly, ready to compete for the largest AI training contracts in the world.
