BBC investigation exposes misleading AI fitness ads and exaggerated claims

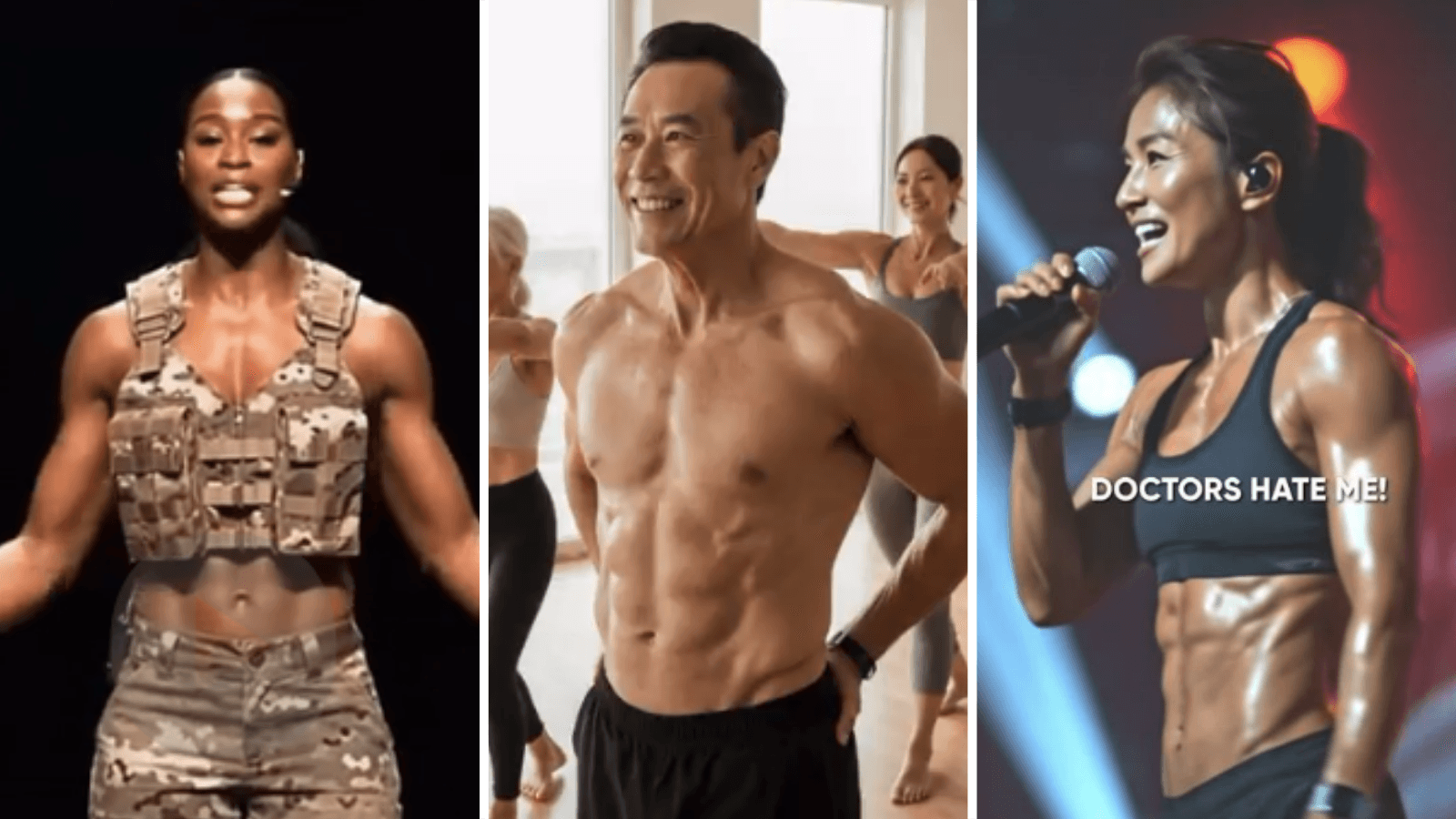

AI-generated trainers are selling impossible body changes in weeks, and some ads hid that the people on screen were not real.

Misleading fitness ads built around AI-generated instructors are pushing promises that sound more like fantasy than physiology: bodies changed in weeks, faces looking 20 years younger, and weight-loss claims such as shedding 40lb in one month. The BBC Sport investigation found that some of the ads did not clearly disclose that the people shown were not real, turning synthetic personalities into a sales tool for exaggerated transformation claims.

Many of the ads were flagged to the UK Advertising Standards Authority, which publishes its rulings every Wednesday and places the outcome of formal investigations on the public record. That matters because fitness marketing already faces close scrutiny over weight-loss and makeover claims, and AI makes it easier to manufacture credibility, urgency and before-and-after narratives at scale. When the person coaching the workout is synthetic, the line between inspiration and deception gets thinner.

Prof Andy Miah, Chair of Science Communication and Future Media at the University of Salford, said the trend is “huge” and warned that people who are already looking for advice can be drawn in by this kind of content. That vulnerability is part of the problem. Fitness ads often target people at their most self-conscious, then use polished AI faces and fast-result promises to sell a body image that science cannot support.

The issue is not confined to one platform or one country. Eating disorder charities also warned about a “harmful” diet app that had been promoted on the Apple App Store to over-12s, a reminder that app stores can become distribution channels for products that would struggle to survive tougher scrutiny elsewhere. The consumer risk is not just wasted money. For some users, especially young people, these ads can intensify body dissatisfaction and distort expectations around diet, exercise and health.

The regulatory response is getting sharper. In the United States, the Federal Trade Commission launched Operation AI Comply on September 25, 2024, to target deceptive or unfair AI-related practices. The agency said there is no “AI exemption” from laws against deception, and that its cases included fake reviews, “AI Lawyer” claims and earnings schemes tied to AI hype. Together, those actions show the direction regulators are taking: AI may change how scams look, but it does not change the consumer-protection rules that advertisers are required to follow.
