Breacher.ai launches live deepfake avatars to simulate executive video-call attacks

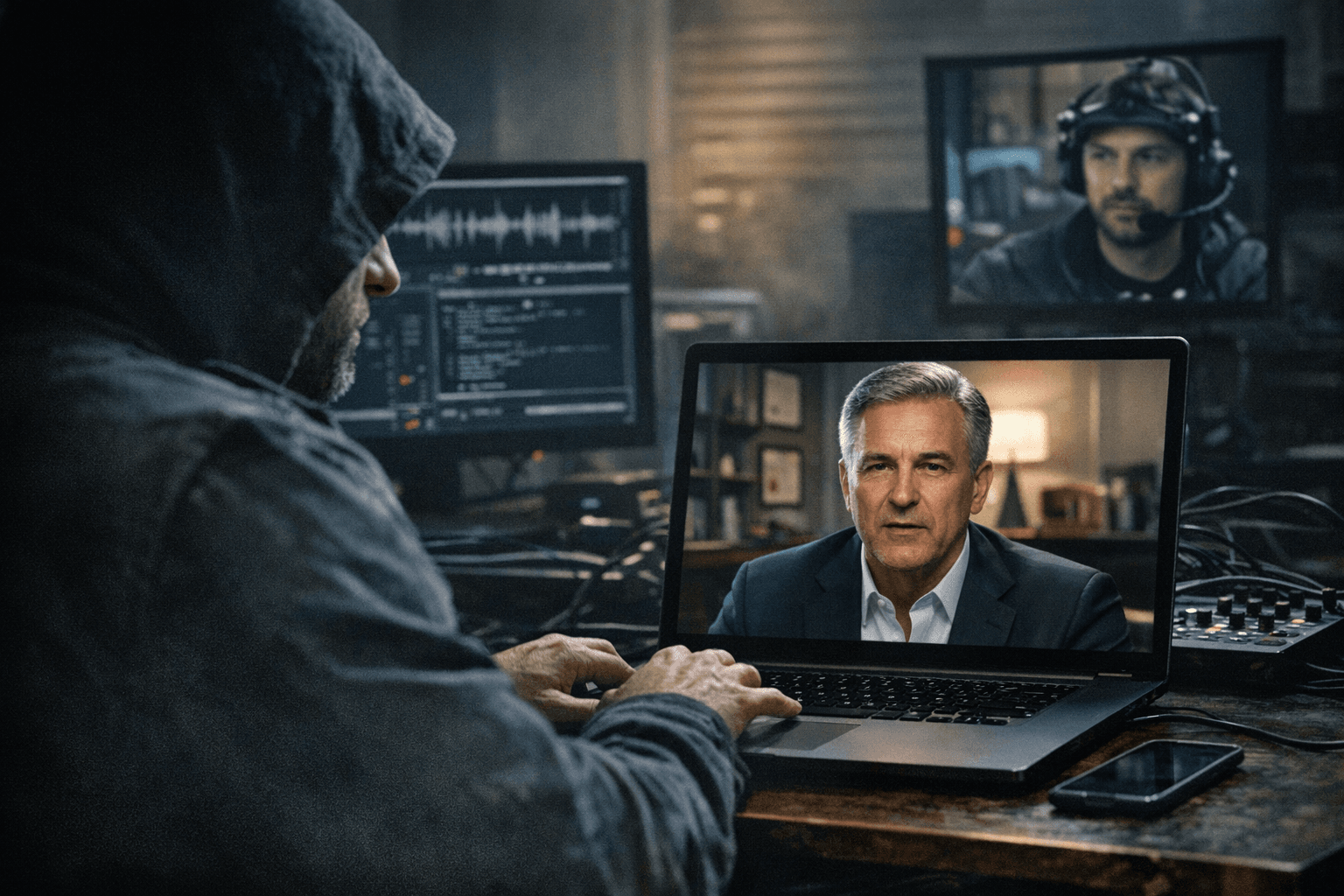

Breacher.ai has rolled out live deepfake video avatars for simulated conferencing attacks, claiming real-time impersonation across Zoom, Microsoft Teams and Google Meet.

Breacher.ai, a cybersecurity vendor that calls itself "Threat Researchers, Not a Training Platform," has added live deepfake video avatars to its social-engineering simulation suite, enabling clients to stage real-time impersonations of executives on common video-conferencing tools. The company published a blog post by Jason Thatcher, titled "Deepfake Interactive Avatar Simulation," on March 1, 2026, and showed related material in a 2025 YouTube demo.

The company says the avatars are "Live, Not Pre-Recorded" and designed for "interactive conversation, responsive expressions, natural pauses, indistinguishable from real participants." Breacher.ai details a four-step methodology for its tests. The first step is to "Identify executives to impersonate using publicly available video and audio. OSINT-driven, just like a real attacker." The second is to "Generate a real-time deepfake avatar with cloned voice, synced lip movement, and natural expression tracking." The third is to "Conduct a live deepfake video call with targeted employees using realistic pretexts and social engineering scenarios." The final step is to "Reveal the simulation, walk through detection cues, and deliver a tailored awareness session that turns the experience into lasting behavioral change."

The product claim set includes real-time avatar deployment paired with "Voice cloning models that replicate tone, cadence, and speech patterns, synced to the deepfake avatar's lip movements." Breacher.ai also asserts platform compatibility, saying the system "Operates across Zoom, Microsoft Teams, Google Meets without an integration layer." The company invites prospective customers to "Schedule a Live Demo" and to "See how your team responds to a live deepfake video call."

Breacher.ai frames the service as red teaming that mirrors criminal and state actor methods: "We don't simulate theoretical attacks. We replicate the exact techniques nation-states and organized crime groups are using right now, from OSINT reconnaissance to real-time avatar deployment." The firm presents several headline statistics on its site, including "92% Orgs Vulnerable to Deepfake SE," "$25M Largest Video Call Deepfake Fraud," "63% Can't Distinguish Synthetic From Real," and "<5 min To Clone a Voice & Face." These figures originate on Breacher.ai's site and are presented here as company-provided metrics.

A short demo clip and accompanying script on Breacher.ai's YouTube channel illustrate the type of social-engineering pretext the company uses in exercises: "Hey, this is Steve Goodman in the IT department. We're seeing some blocked emails under your account. Can you log in and download remote access using the link provided below?" The channel posted a "Deepfake Phishing Simulations" video on April 19, 2025; the clip has a modest view count and is described as "Data compiled from our #Deepfake #Phishing Simulations and Demo."

Breacher.ai also states operational safeguards: "Our simulations are ethical and follow rules of engagement" and that it will assess clients on a "no commitment quarterly basis to avoid saturating users with simulations." Those assertions address one set of risks, but key technical and legal questions remain. The company’s core technical and statistical claims have not been independently verified in the materials published by Breacher.ai, and the firm has not published a third-party validation of real-time fidelity, lip-sync accuracy across platforms, or the provenance of its headline statistics.

The service poses an urgent dilemma for corporate security teams: simulated exposure can sharpen defenses, yet live deepfakes amplify the reputational and operational risk of an exercise gone wrong. Video-conferencing providers, corporate counsel and regulators now face concrete choices about whether and how to permit or police synthetic participants in business meetings.
