DeepSeek's Next AI Model V4 to Run on Huawei Chips, Report Says

Alibaba, ByteDance, and Tencent's bulk Huawei chip orders signal that DeepSeek's trillion-parameter V4 model could reshape China's AI hardware stack.

China's three largest internet platforms committed to hundreds of thousands of Huawei AI chips in preparation for DeepSeek's next flagship, V4, placing one of the most concrete hardware bets yet on China's ability to run frontier artificial intelligence without U.S. accelerators.

Alibaba Group, ByteDance, and Tencent Holdings placed bulk orders for hundreds of thousands of Huawei chips ahead of V4's expected rollout, according to five people with direct knowledge of the transactions. DeepSeek and Huawei did not immediately respond to requests for comment made outside normal office hours.

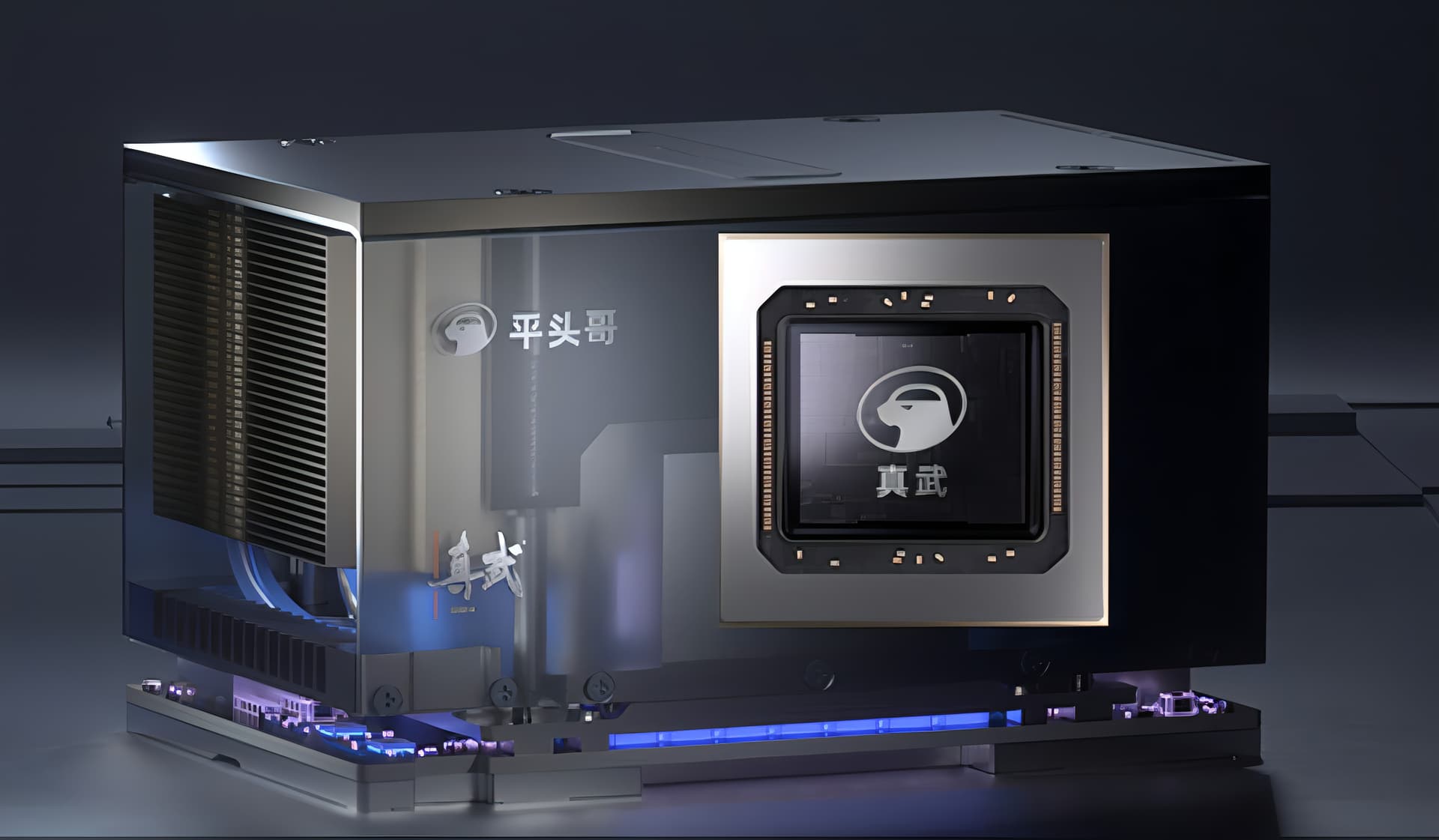

DeepSeek developed V4 to run on Huawei hardware after months of collaboration with Huawei and Cambricon Technologies, during which engineers rewrote parts of the model's code to achieve compatibility with Chinese-made processors. The company is also building additional V4 variants optimized for domestic silicon, a departure from the industry norm of granting early optimization access to Western chip suppliers.

V4 is built on a mixture-of-experts architecture with approximately one trillion total parameters, of which roughly 37 billion are activated for each token processed. That sparsity keeps latency and compute costs low while enabling performance that rivals much larger dense models. The model accepts text, images, and code within the same context window, raising the competitive bar against multimodal systems like GPT-4o and extending well beyond the capabilities of DeepSeek's prior releases.
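To put the sparsity figures in perspective, the short Python sketch below works out what share of the model is active at any one time. It uses only the two parameter counts reported above; the function and constant names are illustrative, and the "2 FLOPs per active parameter per token" figure is a common rule of thumb for forward-pass cost that ignores attention overhead, not a number from DeepSeek.

```python
def active_fraction(total_params: float, active_params: float) -> float:
    """Return the share of a sparse model's parameters that is active at once."""
    if total_params <= 0 or not 0 <= active_params <= total_params:
        raise ValueError("expected 0 <= active_params <= total_params, total_params > 0")
    return active_params / total_params


# Figures as reported in the article (approximate).
TOTAL_PARAMS = 1.0e12   # ~1 trillion total parameters
ACTIVE_PARAMS = 37e9    # ~37 billion active parameters

fraction = active_fraction(TOTAL_PARAMS, ACTIVE_PARAMS)

# Assumption: forward-pass compute is roughly 2 FLOPs per active parameter
# per token. This is a rough rule of thumb, used here only for comparison.
sparse_flops_per_token = 2 * ACTIVE_PARAMS
dense_flops_per_token = 2 * TOTAL_PARAMS
dense_to_sparse_ratio = dense_flops_per_token / sparse_flops_per_token

print(f"Active share of parameters: {fraction:.1%}")            # 3.7%
print(f"Dense-to-sparse compute ratio: {dense_to_sparse_ratio:.1f}x")  # 27.0x
```

Under those assumptions, a dense model of the same total size would need roughly 27 times the compute per token, which is the economic argument for the mixture-of-experts design.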

The Huawei hardware at the center of that ambition carries a documented compute gap relative to top Western alternatives. The Ascend 910C is barely sufficient for cost-efficient training of large AI models and delivers about 60 percent of the inference performance of Nvidia's H100, according to DeepSeek's own researchers. At cluster scale, the calculus shifts meaningfully. Huawei's CloudMatrix 384 architecture delivers competitive inference economics against the H100, reporting up to 6,688 tokens per second per neural processing unit (NPU) on prefill and 1,943 tokens per second per NPU on decode, with optimizations built specifically for mixture-of-experts and multi-head latent attention workloads.
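The arithmetic behind those figures can be sketched in a few lines of Python. The 60 percent ratio and the per-NPU throughput numbers come from the paragraph above; the count of 384 NPUs per CloudMatrix 384 system is taken from Huawei's description of the product rather than from this report. The aggregate numbers assume perfectly linear scaling with every NPU assigned to a single phase, which real deployments that split prefill and decode across separate pools would not achieve, so they should be read as rough upper bounds. All names are illustrative.

```python
def chips_per_h100_equivalent(relative_performance: float) -> float:
    """Chips needed to match one H100, given per-chip relative performance."""
    if relative_performance <= 0:
        raise ValueError("relative_performance must be positive")
    return 1.0 / relative_performance


def aggregate_tps(tokens_per_second_per_npu: int, npu_count: int) -> int:
    """Upper-bound cluster throughput, assuming perfectly linear scaling."""
    return tokens_per_second_per_npu * npu_count


ASCEND_910C_VS_H100 = 0.60   # reported inference performance relative to H100
PREFILL_TPS_PER_NPU = 6688   # reported peak prefill throughput per NPU
DECODE_TPS_PER_NPU = 1943    # reported peak decode throughput per NPU
NPUS_PER_SYSTEM = 384        # assumption: NPU count of one CloudMatrix 384 system

print(f"{chips_per_h100_equivalent(ASCEND_910C_VS_H100):.2f} Ascend 910C per H100")  # 1.67
print(f"Prefill upper bound: {aggregate_tps(PREFILL_TPS_PER_NPU, NPUS_PER_SYSTEM):,} tokens/s")  # 2,568,192
print(f"Decode upper bound: {aggregate_tps(DECODE_TPS_PER_NPU, NPUS_PER_SYSTEM):,} tokens/s")    # 746,112
```

The per-chip gap means roughly five Ascend 910C units are needed for every three H100s at equal inference throughput, which is why the comparison turns on cluster-level economics rather than single-chip performance.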

Export controls have already cut China's cloud providers off from Nvidia's most advanced accelerators, making domestic optimization a structural requirement rather than a preference. V4 is the successor to the model that sent Nvidia's stock down 17 percent in a single day upon release, and its deliberate architecture around Huawei hardware extends that pressure: if a frontier model can be trained and served at scale on chips outside the Nvidia ecosystem, the strategic rationale underpinning U.S. export restrictions weakens in practice, not just in theory.

The scale of the pre-orders is a demand signal that reflects growing confidence in domestic chip capabilities as AI competition intensifies. For Huawei, concentrated commitments from Alibaba, ByteDance, and Tencent validate its Ascend production roadmap and deepen the developer ecosystem around the chip line. Each software optimization written for Ascend reduces the migration cost for firms currently relying on Nvidia inventory, compounding the domestic supply advantage with every new model generation.

Performance at scale is the test that remains. If Huawei's chips sustain competitive throughput across V4's inference workloads at the volume those pre-orders imply, the economic and geopolitical case for China's domestic AI hardware stack will have cleared its most visible benchmark yet.
