Digital twins promise productivity, but workplace AI raises legal risks

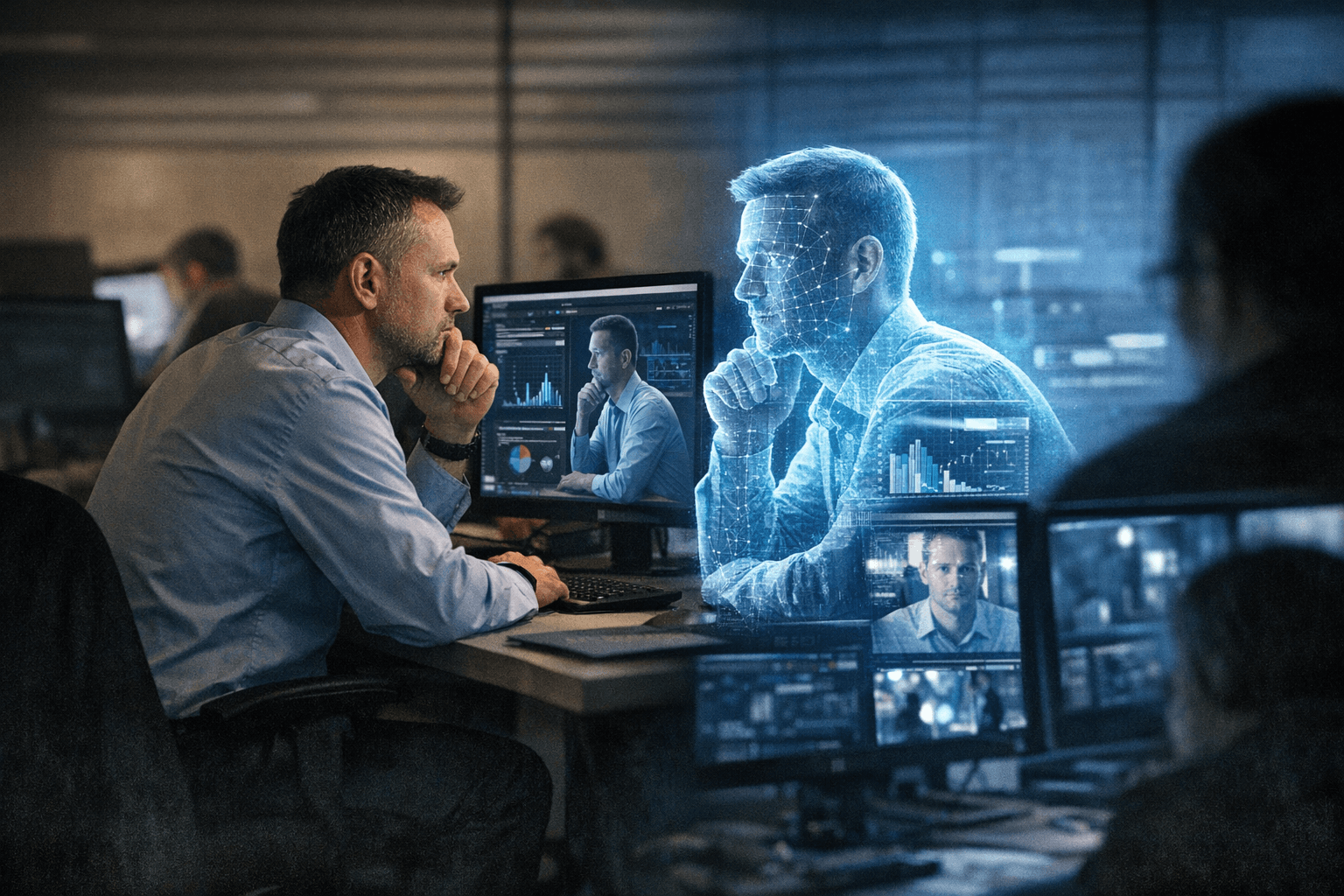

A digital twin can speed work, but one bad decision could raise questions about surveillance, discrimination, and who owns a worker’s digital replica.

The first test is failure

If a workplace digital twin keeps working after an employee logs off, or makes a bad call that reroutes a project, the legal question is not whether the software was impressive but who is accountable. That is why the new pitch around “digital employees” matters: these tools promise more output, but they also blur the line between assistant, monitor, and decision-maker.

What a workplace digital twin actually is

IBM defines a digital twin as a virtual representation of an object or system that uses real-time data to reflect real-world behavior and performance. In industrial settings, that means a machine, line, or plant can be mirrored in software so managers can test changes before they touch the physical operation.

The workplace version goes further. IBM describes digital employees as AI-and-automation systems that work alongside knowledge workers to automate mundane tasks and improve decision-making. That shifts the concept from machinery to labor itself, and that is where the legal and ethical stakes become much harder to untangle.

Why employers want one

The business case is straightforward: companies want faster decisions, fewer disruptions, and more capacity from the people and systems they already have. McKinsey & Company says factory digital twins can support faster, smarter, and more cost-effective decision-making in continuous manufacturing operations, especially when resources are tight and output must scale without major new investment.

That logic is now moving out of the factory. Vendors are pitching digital twins for offices, service teams, and support functions, where the promise is similar: automate routine work, surface problems early, and help managers act before bottlenecks spread. In theory, a digital twin could become a kind of always-on operator for knowledge work, extending productivity well beyond normal working hours.

The surveillance problem arrives fast

The same data that makes a digital twin useful can also make it invasive. Kyndryl said on April 9, 2026, that its AI-powered Digital Twin for the Workplace, built on Microsoft Foundry, continuously analyzes signals from employee devices, applications, and workplace locations to anticipate and resolve technology disruptions. That is a powerful operational tool, but it also means the system is built on a dense stream of workplace telemetry.

That kind of monitoring raises an immediate question: is the tool helping workers, or watching them? If a system follows device activity, app use, and location patterns in real time, employers may be able to infer performance, availability, and behavior well beyond the narrow task of fixing IT problems. The closer the twin gets to a worker’s daily routine, the harder it becomes to distinguish support from surveillance.

The legal line is wider than many employers think

Federal law does not carve out an exemption for AI. The U.S. Equal Employment Opportunity Commission says federal employment discrimination laws apply to AI and other new technologies in employment just as they apply to other employment practices. The agency has also published workplace AI materials, including guidance on wearables and tips for workers dealing with ADA-related issues.

That matters because digital twins may touch hiring, discipline, scheduling, accommodation, and performance management. If a system disadvantages workers on the basis of a protected characteristic, screens out people with disabilities, or relies on incomplete or biased data, the employer can still face the same civil-rights obligations that apply to older tools. The technology may be new, but the legal exposure is not.

State lawmakers are starting to write the rules

California is already moving toward disclosure. A bill tracked in April 2026 would require employers to give written notice when a workplace AI tool is used to help make employment-related decisions or surveil workers. That is a strong signal that lawmakers are less interested in whether AI boosts efficiency than in whether workers know when it is being used against them.

The broader legal backdrop is expanding as well. Bloomberg Law has noted that state laws are already addressing employers’ creation and use of employees’ digital replicas. That suggests the issue is no longer theoretical. Legislatures are beginning to ask who may create a digital copy of a worker, what that replica may be used for, and whether the worker can control it once it exists.

The unanswered questions are the most important ones

The biggest unresolved issues are not technical. They are questions of power, authorship, and liability. If a digital twin helps create a “superworker,” who owns the underlying data, who controls the model, and who bears responsibility when it gets something wrong? If the twin keeps operating after hours, does it represent the worker, the employer, or some hybrid of the two?

There is also the problem of error. A digital twin that predicts a disruption may help a team move faster, but a false positive could trigger unnecessary intervention, while a false negative could conceal a real problem. In a workplace, those mistakes can affect pay, workload, promotion prospects, disability accommodations, and trust. The more the system is allowed to act, the more its mistakes can become employment decisions.

What employers need to get right first

Before a company deploys a workplace digital twin, it needs clear guardrails. The most important controls are not flashy features but governance, notice, and human oversight. Gartner said in its 2025 digital workplace planning materials that AI brought both major benefits and significant challenges, which made governance and digital employee experience priorities.

That is the right lens for this moment. A digital twin can be useful when it is bounded by policy, transparency, and review. It becomes a liability when it is allowed to drift from productivity tool into invisible supervisor. Employers that want the output gains will also have to accept the compliance burden that comes with mapping a human worker into software, because once the replica starts making decisions, the question is no longer how smart the system is, but whose rights it can affect.
