Father’s Secret Cancer Truth Exposes Risks of AI Medical Advice

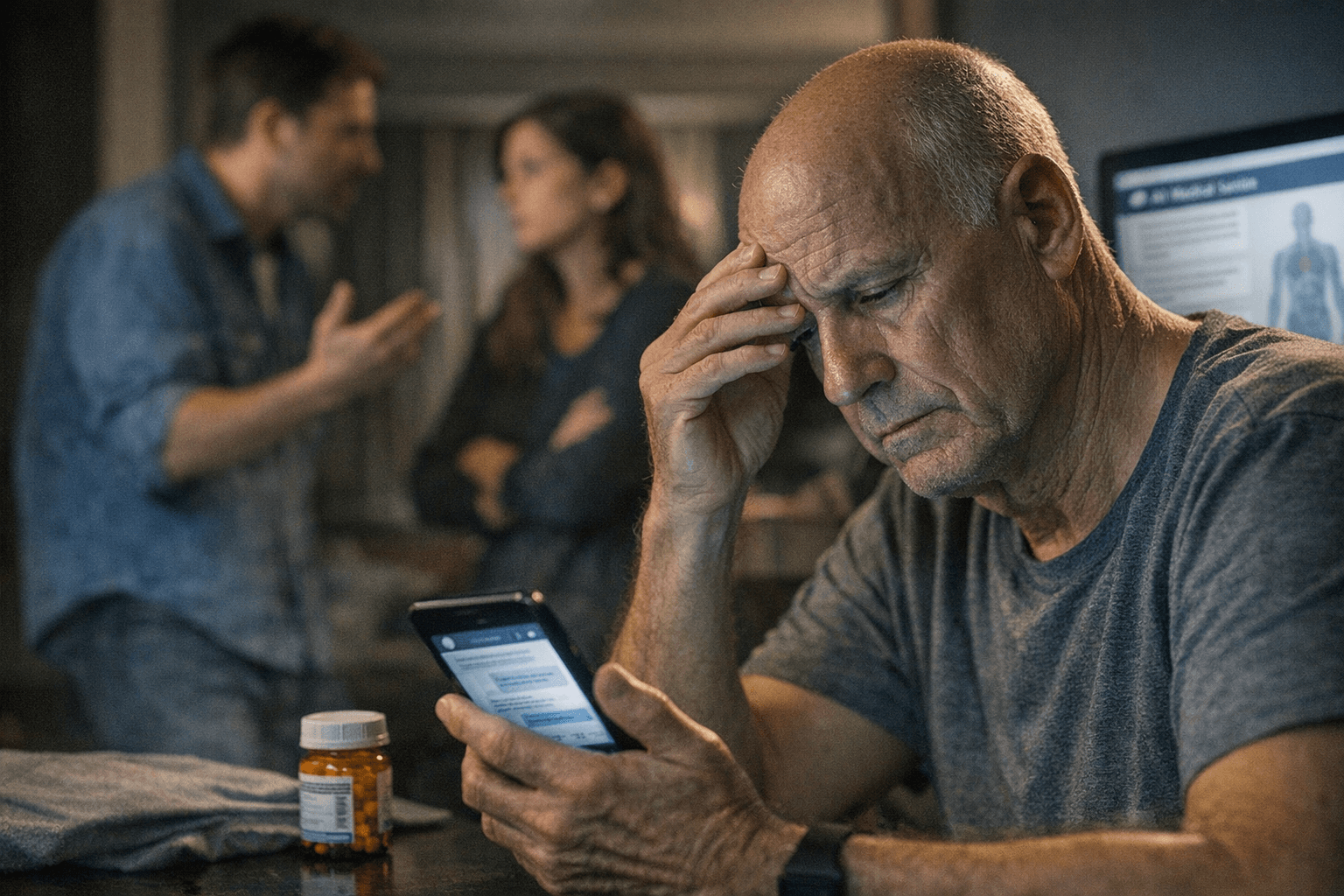

Ben Riley’s father hid his cancer while leaning on A.I. instead of his doctor, a family rupture that mirrors new warnings about chatbot medical errors.

Ben Riley was already writing about the risks of chatbots when his own family became part of the warning. In a New York Times story published April 13, 2026, Riley said he discovered by accident that his father had not been telling the truth about his cancer, after the older Riley began trusting A.I. over his doctor.

The episode puts a human face on a broader problem now drawing alarm from researchers: people are increasingly turning to large language models for medical advice, even as the systems continue to produce inaccurate and inconsistent answers. That tension has become especially sharp in cancer care, where delays, false confidence and bad triage can change outcomes quickly.

A February 10, 2026, study led by Oxford and published in Nature Medicine found that large language models present risks for people seeking medical advice. The University of Oxford said the largest user study of these tools for helping the public make medical decisions found they can provide inaccurate and inconsistent information. In another warning sign, Mount Sinai researchers reported in August 2025 that widely used A.I. chatbots were highly vulnerable to repeating and elaborating on false medical information.

The evidence mounted on April 13, 2026, the same day Riley’s story appeared. A Mass General Brigham study published in JAMA Network Open found that chatbots failed to produce the correct differential diagnosis, the list of possible causes of a patient’s symptoms, more than 80 percent of the time when given basic information from real patient cases. The finding underscores how quickly confident answers can drift away from clinical reality.

Riley, who founded Cognitive Resonance after previously founding Deans for Impact, has spent years examining how people process information and how generative A.I. shapes judgment. His experience at home now reinforces what public-health experts have been saying for months: convenience is not the same as expertise, and medical authority can be undermined when a chatbot feels easier to trust than a clinician.

The National Cancer Institute has warned that it is too early to rely on these tools for cancer information, or to steer patients and the public to them. For families already facing fear, uncertainty and pressure to make fast decisions, the danger is not just bad data. It is the erosion of trust itself, one conversation at a time.
