Google Cloud unveils split AI chips to challenge Nvidia dominance

Google split its newest AI chips into training and inference versions, aiming to cut costs and weaken Nvidia’s grip on enterprise AI spending.

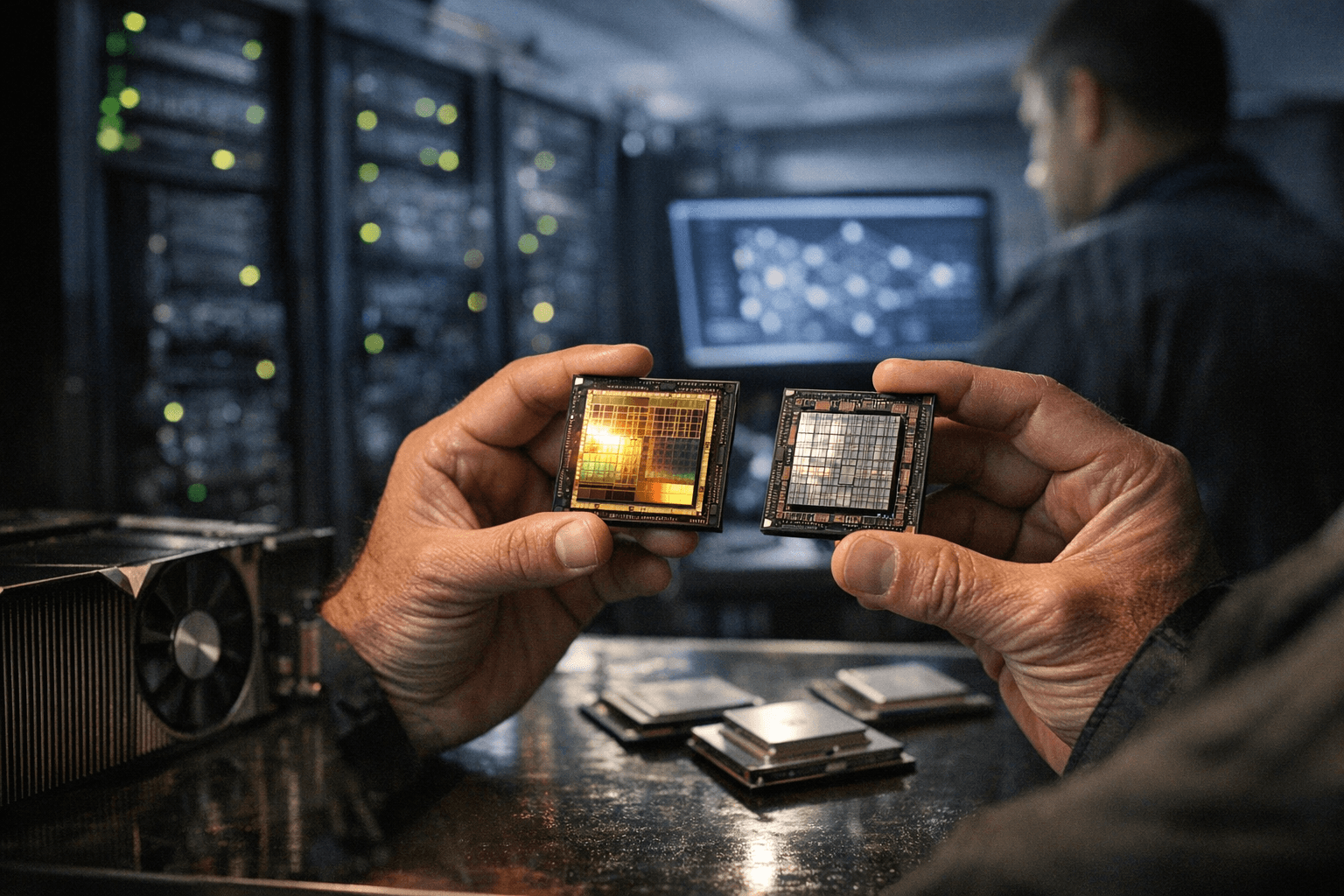

Google Cloud used its annual conference to redraw a piece of the AI power map, unveiling the eighth generation of its Tensor Processing Units as two separate chips, TPU 8t for training and TPU 8i for inference. The split matters because it separates the expensive work of building models from the more cost-sensitive job of running them in products, where speed and efficiency can make or break margins.

The move was designed to do more than improve performance. Google framed the new chips as a cheaper way for customers to handle different stages of AI workloads, a pitch aimed squarely at companies trying to rein in cloud bills as model demand keeps rising. CNBC reported that Google packed significant memory into the inference chip, a choice meant to help large models run more efficiently and to challenge the hardware advantage Nvidia has enjoyed through the AI boom.

That challenge is part technical and part strategic. Training systems consume massive computing power to teach models, while inference systems need to answer requests quickly and at scale. By tailoring separate chips to those roles, Google is betting that customers will value lower costs and reduced dependence on a single supplier for every part of the stack. For enterprises, that could mean more leverage in negotiations and more room to move workloads around instead of locking into one vendor’s hardware.

The launch also reflects how the AI race is shifting beyond model software and into infrastructure economics. Cloud providers are increasingly competing on cost per query, cost per training run and the ability to keep corporate customers inside one ecosystem. Google’s TPU strategy signals that it wants to be seen not only as a company that builds models, but also as a full-stack AI platform with hardware, cloud services and enterprise tools that can anchor those workloads.

That puts Google into a tighter contest with Nvidia, Amazon and Microsoft over who captures the most AI spending over the next several years. Nvidia still sets the pace in much of the market, but Google’s split-chip approach suggests the next battleground will be less about raw silicon bragging rights and more about which cloud can deliver the lowest total cost for the work companies actually need done.
