How Jeff Bezos Pushed Amazon to Build a Voice-Powered Computer

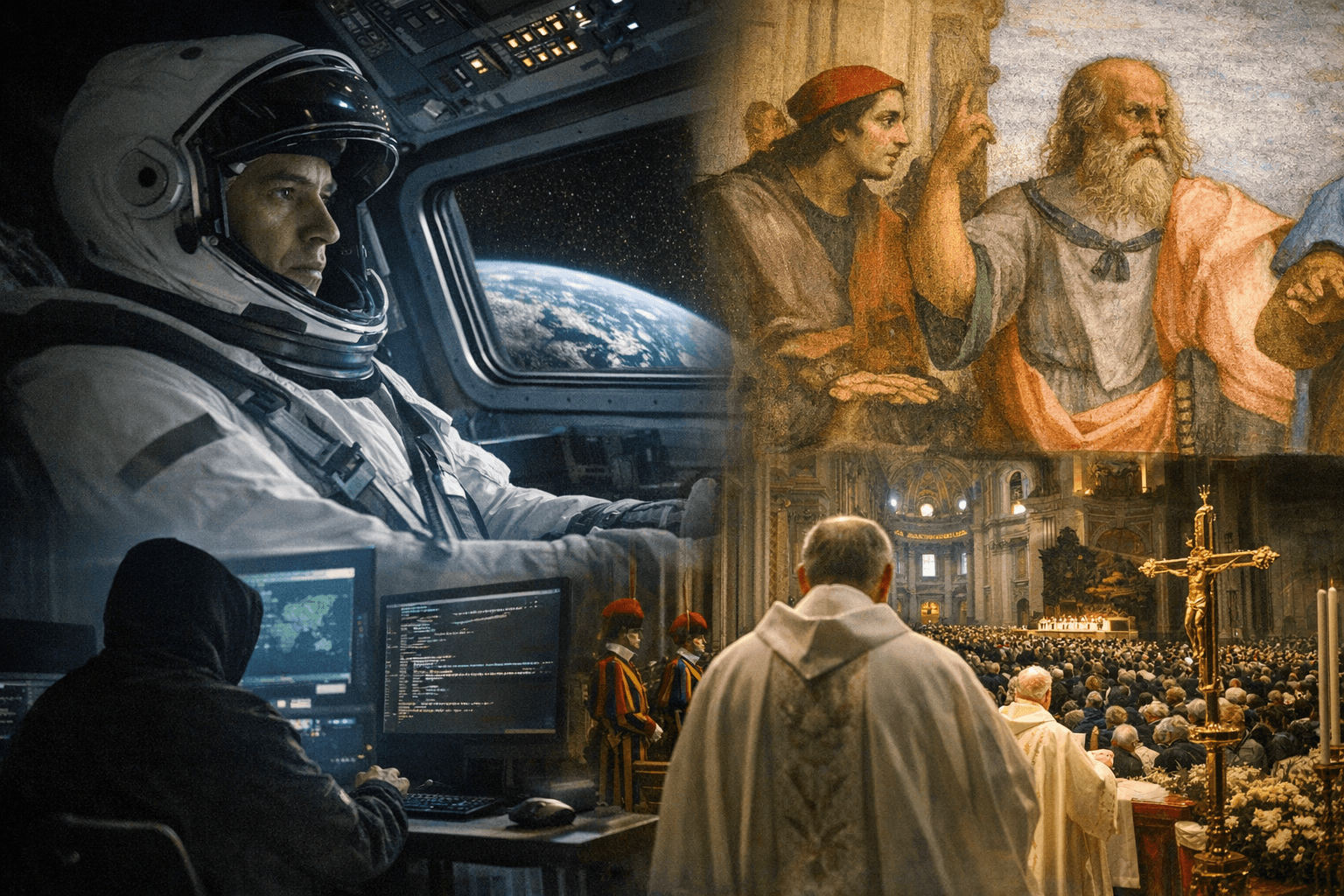

Jeff Bezos's obsession with a Star Trek-style voice computer produced the Amazon Echo, and the engineering shortcuts made for convenience in 2011 are now the fault lines of today's AI governance crisis.

The Obsession That Started It All

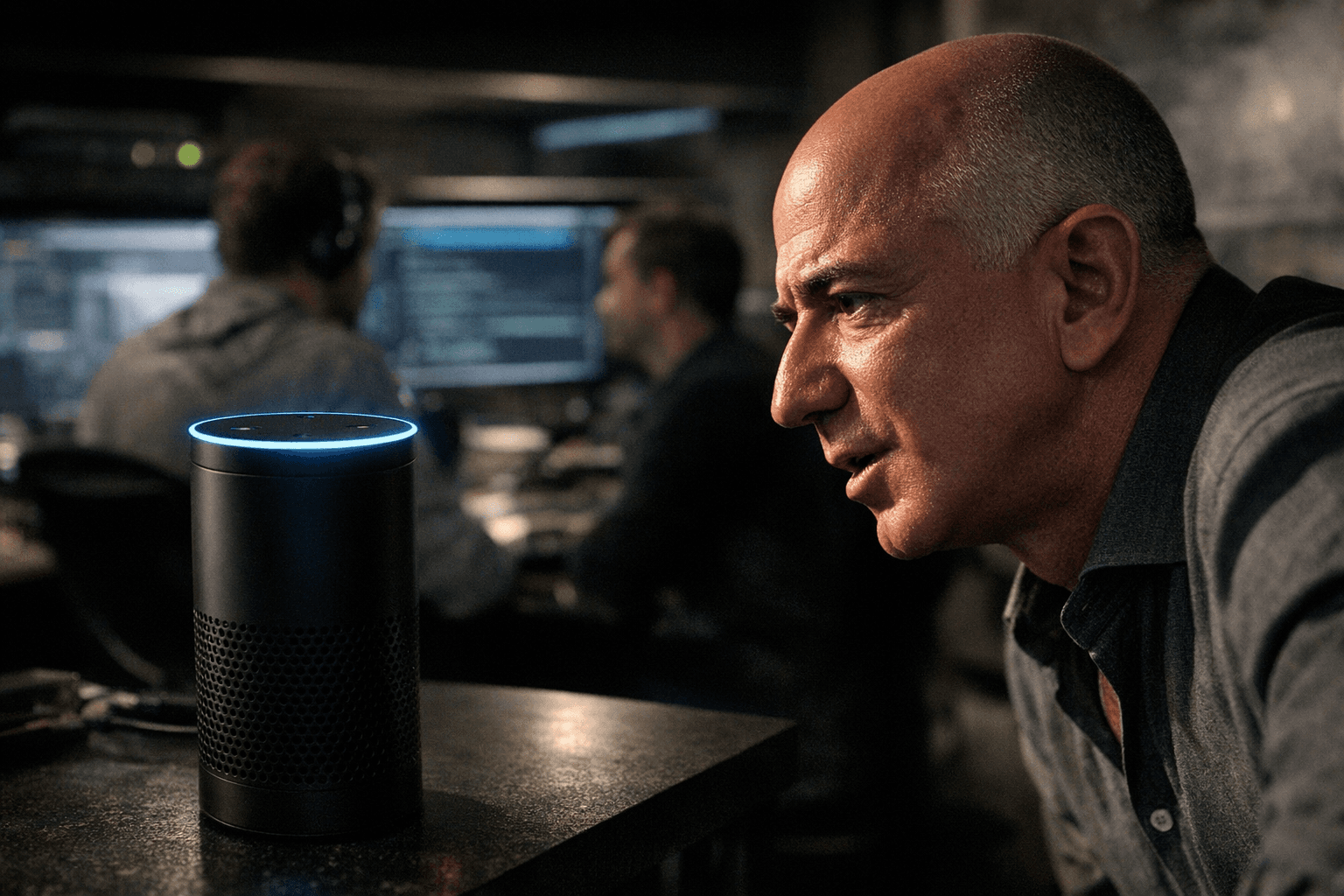

Long before "smart speaker" was a product category, Jeff Bezos was telling anyone within earshot that voice was the next great computing platform. His reference point was the computer aboard the starship Enterprise: a machine you could simply talk to, one that understood context, answered questions, and executed commands without a screen, a keyboard, or a stylus. The ambition was not just utilitarian. Bezos also understood, with characteristic precision, that a voice-activated device sitting permanently in someone's kitchen was an extraordinarily direct path to selling more products on Amazon.

The project began taking formal shape around 2011, when Bezos sketched out his concept and handed it to Greg Hart, his technical assistant and chief of staff at the time. The scene, described in Brad Stone's book "Amazon Unbound," has become something of a founding myth: Bezos drawing his vision for a voice computer on a whiteboard, Hart photographing the drawing with his phone. Hart's reaction was candid. "Jeff, I don't have any experience in hardware, and the largest software team I've led is only about 40 people," he told Bezos. Bezos told him to proceed anyway. Hart thanked him and added, reportedly, "OK, well, remember that when we're done."

Building from Scratch at Lab126

Amazon's hardware division, Lab126 in the San Francisco Bay Area, became the engineering home for the device that would eventually ship as the Echo. The challenge was formidable. Amazon had no meaningful voice recognition capability in-house, so it went shopping: the company hired engineers who had worked at Nuance, the speech recognition firm whose technology underpinned Siri, and acquired two voice-focused startups, Yap and Evi, to absorb their talent and intellectual property. Engineers in Cambridge were tasked with building a speech recognition system competitive with what Google was developing.

Dave Limp, the Amazon executive who oversaw devices, recalled seeing Bezos's original pitch and thinking: "This is going to be hard. It foretold a magical experience. But it would require a lot of inventions." He was right on all counts. Bezos kept the pressure on personally, meeting with the Echo team once or twice a week, drilling into technical details, and, according to people familiar with the project, micromanaging decisions as granular as the selection of Alexa's voice. When prototypes were distributed to Amazon employees for in-home testing, including to Bezos himself, the results were humbling. Engineers reviewing feedback data could reportedly hear their boss growing so exasperated with Alexa's comprehension failures that he told the machine to "go shoot yourself in the head."

The Design Choices That Normalized Always-On Listening

The Echo launched in November 2014, initially as an invitation-only product, and it introduced a set of architectural decisions that felt innocuous at the time but have since defined more than a decade of privacy disputes. The most consequential was the wake word. To make the device feel effortless, engineers built in "keyword spotting" technology that required the device's microphones to remain active at all times, listening locally for the trigger phrase "Alexa." The device would not record or transmit audio until that word was detected. In practice, this meant Amazon had placed a permanently listening microphone in millions of homes.
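For readers who want the mechanism spelled out, the gating behavior described above can be sketched in a few lines of Python. This is an illustrative simplification, not Amazon's implementation: the function name `gate_on_wake_word`, the two detector callbacks, and the use of text tokens in place of audio frames are all assumptions made for the example. Whether the wake word itself is forwarded is also a simplification here; the sketch drops it.

```python
from typing import Callable, Iterable, Iterator


def gate_on_wake_word(
    frames: Iterable[str],
    is_wake_word: Callable[[str], bool],
    is_end_of_utterance: Callable[[str], bool],
) -> Iterator[str]:
    """Yield only the frames that follow a locally detected wake word.

    Hypothetical sketch: every frame is inspected on the device (the
    microphone is always on), but nothing is forwarded until the wake
    word is spotted, and forwarding stops at the end of the utterance.
    """
    streaming = False
    for frame in frames:
        if not streaming:
            # Local keyword spotting: the frame is examined, then discarded.
            if is_wake_word(frame):
                streaming = True
            continue
        if is_end_of_utterance(frame):
            streaming = False
            continue
        # Only these frames would leave the device for cloud processing.
        yield frame


# Text tokens stand in for audio frames (an assumption for readability).
demo_frames = ["private", "chat", "alexa", "play", "jazz", "<silence>", "more", "private"]
sent = list(
    gate_on_wake_word(
        demo_frames,
        is_wake_word=lambda f: f == "alexa",
        is_end_of_utterance=lambda f: f == "<silence>",
    )
)
print(sent)
```

The sketch makes the trade-off visible: the loop has to examine every frame in order to find the trigger, so the privacy guarantee rests entirely on what the device does with the frames it does not forward, and on how reliably the detector avoids false activations.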

The second critical decision was cloud processing. When the wake word fired, the device streamed the user's audio to Amazon's servers for interpretation and response. This was a technical necessity given the processing power constraints of early consumer hardware, but it also meant that every command, every query, every accidental activation was being captured and retained by Amazon. Recordings were stored on Amazon's servers and linked to user accounts indefinitely unless users manually deleted them, a setting most never found or used.

In late 2014, an engineer rigged an Echo to control a streaming TV device. Bezos saw it and immediately grasped the implications: the Echo was not just a voice interface for music and shopping queries. It was a hub for the connected home. That realization accelerated Amazon's push into smart home integration, expanding the footprint of always-on devices throughout living spaces.

The Arkansas Case and the Law Enforcement Frontier

The collision between always-on audio and criminal justice arrived early. In Bentonville, Arkansas, a man named James Bates was accused of murder after a coworker was found dead at Bates's home. Investigators noticed an Amazon Echo in the house and served Amazon with a search warrant seeking any recordings the device might have captured. Amazon refused to hand over the audio, initially arguing the recordings were protected under the First Amendment, a novel and aggressive legal position that held that Alexa's responses constituted protected speech. After three months of resistance, Amazon provided the data once Bates himself gave permission. A judge later dismissed the murder charge.

The Arkansas case was a watershed. It forced courts, prosecutors, and privacy advocates to grapple with questions that had no settled legal answers: Who owns the audio a smart speaker captures? Does a guest in a home have a reasonable expectation of privacy against a device they did not consent to? Can prosecutors bypass the device owner entirely and subpoena Amazon directly? In the years since, the answer to that last question has become a resounding yes. Amazon has disclosed that, in just a six-month window, it received more than 2,400 subpoenas and hundreds of additional law enforcement requests for Alexa data. A 2017 New Hampshire case in which two women were killed and a 2019 Florida investigation followed similar patterns, each demonstrating how quickly cloud-retained recordings had become a routine tool of criminal investigation.

Children's Data and the $25 Million Reckoning

The most significant regulatory consequence of Amazon's original data architecture came in 2023, when the Federal Trade Commission and the Department of Justice charged Amazon with violating the Children's Online Privacy Protection Act (COPPA). The complaint was stark: Amazon had kept children's voice recordings and geolocation data for years, even after parents explicitly requested deletion. According to the FTC, Amazon not only failed to honor those deletion requests but actively used children's voice data to train and improve its speech recognition algorithms.

Amazon had marketed child-specific Alexa products, including the Echo Dot Kids Edition, and operated the FreeTime Unlimited service for children. The COPPA Rule required that companies obtain verifiable parental consent before collecting data from children under 13 and provide genuine mechanisms for deletion. Amazon collected the data, but the deletion promise, the FTC alleged, was largely illusory. The settlement required Amazon to pay a $25 million civil penalty and overhaul its data deletion practices. Samuel Levine, director of the FTC's Bureau of Consumer Protection, was direct in his assessment: "Amazon's history of misleading parents, keeping children's recordings indefinitely, and flouting parents' deletion requests violated COPPA and sacrificed privacy for profits."

The case crystallized a critique that privacy advocates had been making since 2014: the decision to retain voice recordings by default, rather than delete them by default, was a business choice, not a technical necessity. It served Amazon's interest in training better AI. It was made without meaningful public debate and before regulators had established any framework governing it.

From Voice Box to AI Agent

What Bezos set in motion in 2011 is now at the center of a much larger transformation. The original Echo was reactive, waiting for a wake word and executing discrete commands. The next generation of Alexa, reengineered with large language model capabilities, is being built to be proactive and agentic, capable of chaining together tasks, making decisions across multiple services, and operating with greater autonomy on a user's behalf. Amazon's Alexa Plus, unveiled in early 2025, represents this shift, embedding generative AI capabilities that allow the assistant to handle complex, multi-step requests rather than single-query lookups.

The governance questions this raises are direct extensions of the choices made in 2011. If a reactive Alexa created unresolved disputes over data ownership, law enforcement access, and children's privacy, an agentic Alexa, one that autonomously browses, purchases, schedules, and communicates on your behalf, multiplies those stakes considerably. The wake word that seemed like a minor convenience feature in 2014 normalized the idea that always-on listening was an acceptable trade-off for ease of use. The cloud processing pipeline that made the original Echo work became the legal and commercial infrastructure through which billions of intimate recordings now flow.

Bezos got his Star Trek computer. What no one designed for, in those early whiteboard sessions at Lab126, was the legal architecture that should have surrounded it.
