Inside Amazon's Trainium Lab Where AWS Is Building Its Own AI Empire

Amazon's custom AI chip lab is the secret weapon behind AWS's OpenAI deal and its plan to stop paying Nvidia billions.

Amazon let a reporter walk the floor of its Trainium development lab, and what's happening inside that facility explains one of the most consequential bets in the AI hardware race. AWS has spent years quietly building the silicon infrastructure to challenge Nvidia's dominance, and the Trainium chip sits at the center of that strategy. The timing is deliberate: as AI inference costs become the defining competitive variable for every cloud provider, the company that controls its own silicon controls its own destiny.

1. The Lab Itself

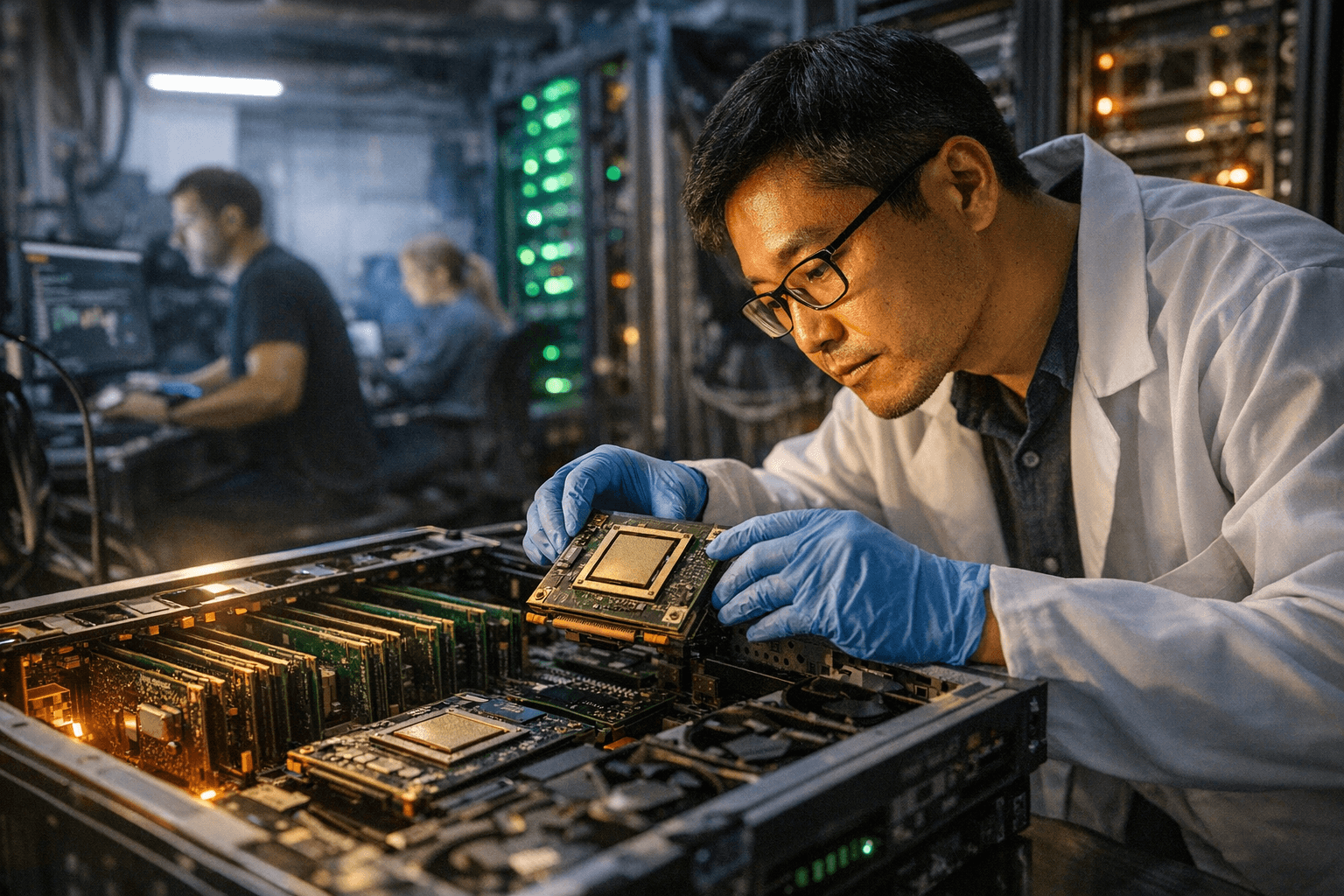

The Trainium development facility is where Amazon's in-house AI accelerator chips are designed, tested, and refined before reaching the AWS data centers that power cloud customers globally. Unlike the abstract announcements typical of chip launches, the physical lab offers a window into the iterative engineering process behind custom silicon, from early designs headed for tape-out to thermal stress-testing rigs. The facility represents years of investment by Amazon in building a semiconductor competency that most tech companies have never attempted to develop internally.

2. Why Amazon Built Its Own Chip

Amazon's motivation for developing Trainium is straightforward and financial: Nvidia's H100 and successor GPUs carry price tags that translate into billions of dollars in annual procurement costs for a company running one of the world's largest cloud infrastructures. By designing its own accelerators optimized specifically for the workloads AWS customers run, Amazon can dramatically reduce its dependence on external chip suppliers while improving the price-to-performance ratio it offers customers. The strategic calculus also includes supply chain resilience; owning the chip design means AWS isn't subject to Nvidia's production timelines or allocation decisions during periods of constrained supply.

3. Trainium's Technical Focus

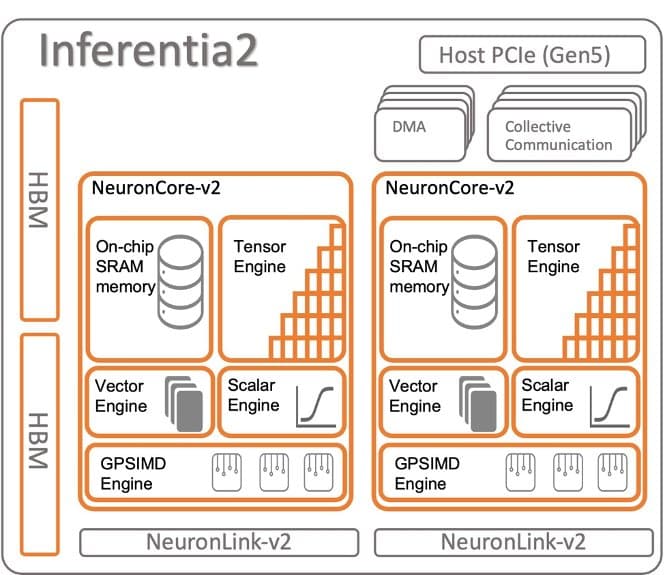

Trainium is engineered with a specific emphasis on AI training and, increasingly, inference workloads, which represent the fastest-growing segment of AI compute demand as companies move from building models to deploying them at scale. The chip architecture reflects choices made for the types of neural network operations that dominate modern large language model workloads, prioritizing memory bandwidth and interconnect efficiency over raw floating-point throughput in ways that differ meaningfully from general-purpose GPU design. These architectural tradeoffs are what allow AWS to argue that Trainium offers better economics for specific AI tasks, even if it lacks the broad programmability of an Nvidia GPU.

4. The Inference Opportunity

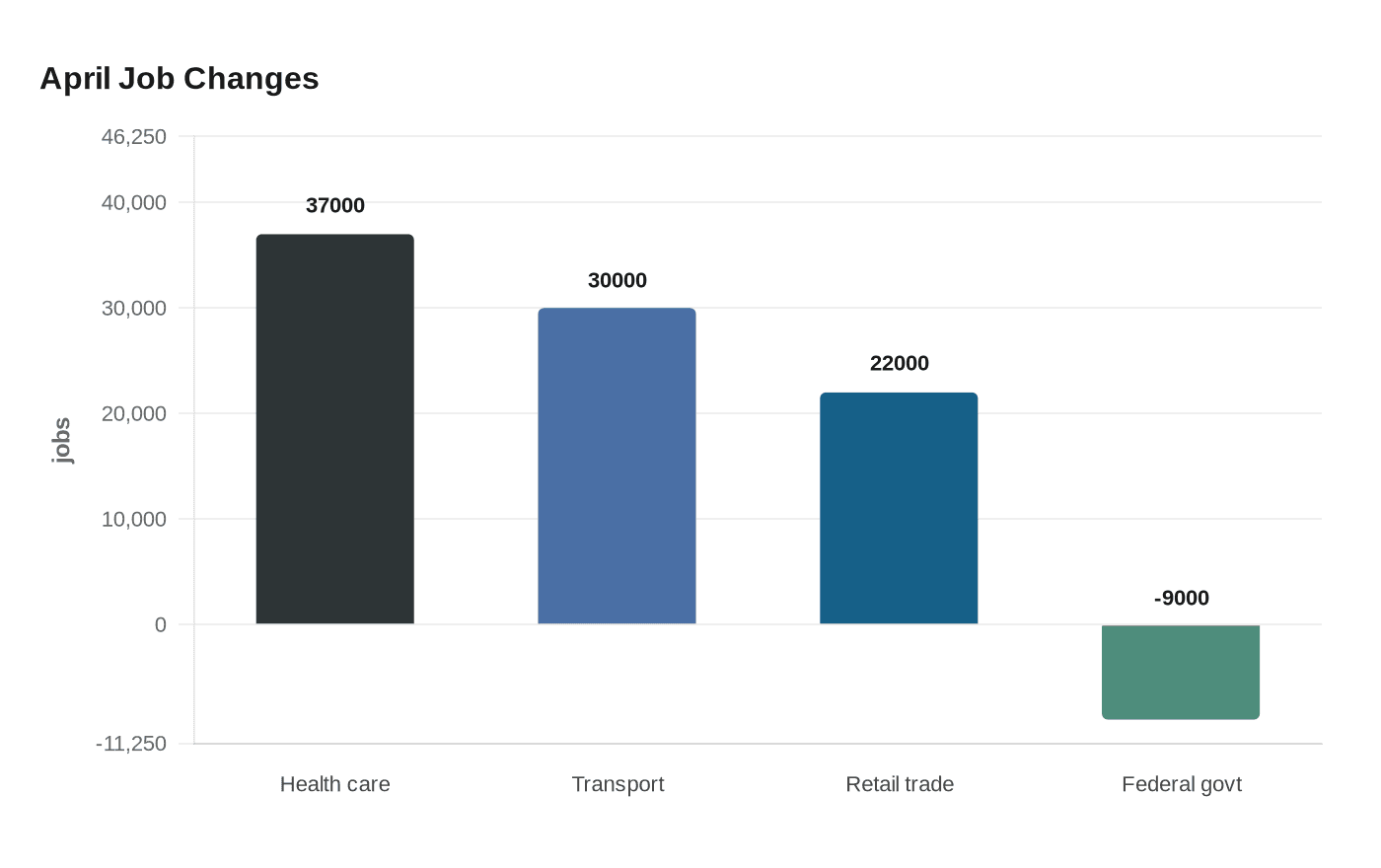

AI inference, the process of running a trained model to generate outputs, is rapidly becoming the largest and fastest-growing category of AI compute spend, outpacing training as deployments scale into consumer and enterprise products. Every time a user queries an AI assistant, gets a product recommendation, or receives an automated response, an inference computation occurs, and at cloud scale those computations add up to extraordinary hardware demand. AWS sees inference as the battleground where Trainium's cost advantages can be most compelling, since customers running continuous, high-volume inference workloads are acutely sensitive to the cost per query.

5. The OpenAI Connection

The OpenAI deal is the highest-profile validation of AWS's silicon ambitions, placing one of the world's most compute-hungry AI organizations inside Amazon's infrastructure ecosystem. The partnership signals that OpenAI, despite its historical ties to Microsoft Azure, is diversifying its cloud relationships in ways that give AWS a meaningful foothold in the frontier model market. For Amazon, having OpenAI run workloads on AWS infrastructure, potentially on Trainium hardware, is both a revenue opportunity and a proof-of-concept argument it can make to every other AI company evaluating where to run its models.

6. Competing With Nvidia and Google

Amazon is not alone in its push to build custom AI silicon; Google's TPUs and Microsoft's Maia chips represent parallel efforts by the other hyperscalers to reduce Nvidia dependence and capture more value from AI compute internally. The competitive dynamics mean that Trainium's success will be measured not just against Nvidia's products but against the custom silicon programs at rival cloud providers who are making the same long-term bet on vertical integration. AWS's advantage in this contest may ultimately come from its sheer scale as a cloud provider, giving it more real-world workload data to inform chip design iteration than any pure semiconductor company could access.

7. What This Means for Cloud Customers

For companies building AI products on AWS, Trainium's maturation represents a potential inflection point in the cost structure of AI deployment, with Amazon able to offer competitive pricing on inference workloads that were previously dominated by Nvidia-powered instances. The practical implication is that developers and ML engineers will face an increasingly meaningful choice between optimizing their models for Trainium's architecture and staying on familiar GPU-based infrastructure, a tradeoff that involves both performance benchmarking and software ecosystem considerations. AWS has invested significantly in software tooling to lower the barrier to that migration, recognizing that hardware advantages mean little if the developer experience creates friction.

The Trainium lab is more than a chip development facility; it is Amazon's clearest expression of a conviction that the companies controlling AI infrastructure at the silicon level will hold structural advantages in the cloud wars for the next decade.
